6/15/2017

cover

cover

next page >

title:

author:

publisher:

isbn10 | asin:

print isbn13:

ebook isbn13:

language:

subject

publication date:

lcc:

ddc:

subject:

An Introduction to Computational Learning Theory

Kearns, Michael J.; Vazirani, Umesh Virkumar.

MIT Press

9780262111935

9780585350530

English

Machine learning, Artificial intelligence, Algorithms,

Neural networks (Computer science)

1994

Q325.5.K44 1994eb

006.3

Machine learning, Artificial intelligence, Algorithms,

Neural networks (Computer science)

cover

next page >

If you like this book, buy it!

file:///Users/zouzhiyuan/Documents/ResearchFiles/Book/An%20Introduction%20to%20Computational%20Learning%20Theory/files/cover.html

1/1

�

6/15/2017

< previous page

page_iii

page_iii

next page >

Page iii

An Introduction to Computational Learning Theory

Michael J. Kearns

Umesh V. Vazirani

< previous page

page_iii

If you like this book, buy it!

next page >

file:///Users/zouzhiyuan/Documents/ResearchFiles/Book/An%20Introduction%20to%20Computational%20Learning%20Theory/files/page_iii.html

1/1

�

6/15/2017

< previous page

page_iv

page_iv

next page >

Page iv

Second printing, 1997

©1994 Massachusetts Institute of Technology

All rights reserved. No part of this book may be reproduced in any form by any electronic or mechanical

means (including photocopying, recording, or information storage and retrieval) without permission in

writing from the publisher.

This book was typeset by the authors and was printed and bound in the United States of America.

Library of Congress Cataloging-in-Publication Data

Kearns, Michael J.

An introduction to computational learning theory / Michael J.

Kearns, Umesh V. Vazirani.

p. cm.

Includes bibliographical references and index.

ISBN 0-262-11193-4

1. Machine learning. 2. Artificial intelligence. 3. Algorithms.

4. Neural networks. I. Vazirani, Umesh Virkumar. II. Title.

Q325.5.K44 1994

006.3dc20 94-16588

CIP

< previous page

page_iv

If you like this book, buy it!

next page >

file:///Users/zouzhiyuan/Documents/ResearchFiles/Book/An%20Introduction%20to%20Computational%20Learning%20Theory/files/page_iv.html

1/1

�

6/15/2017

< previous page

page_v

page_v

next page >

Page v

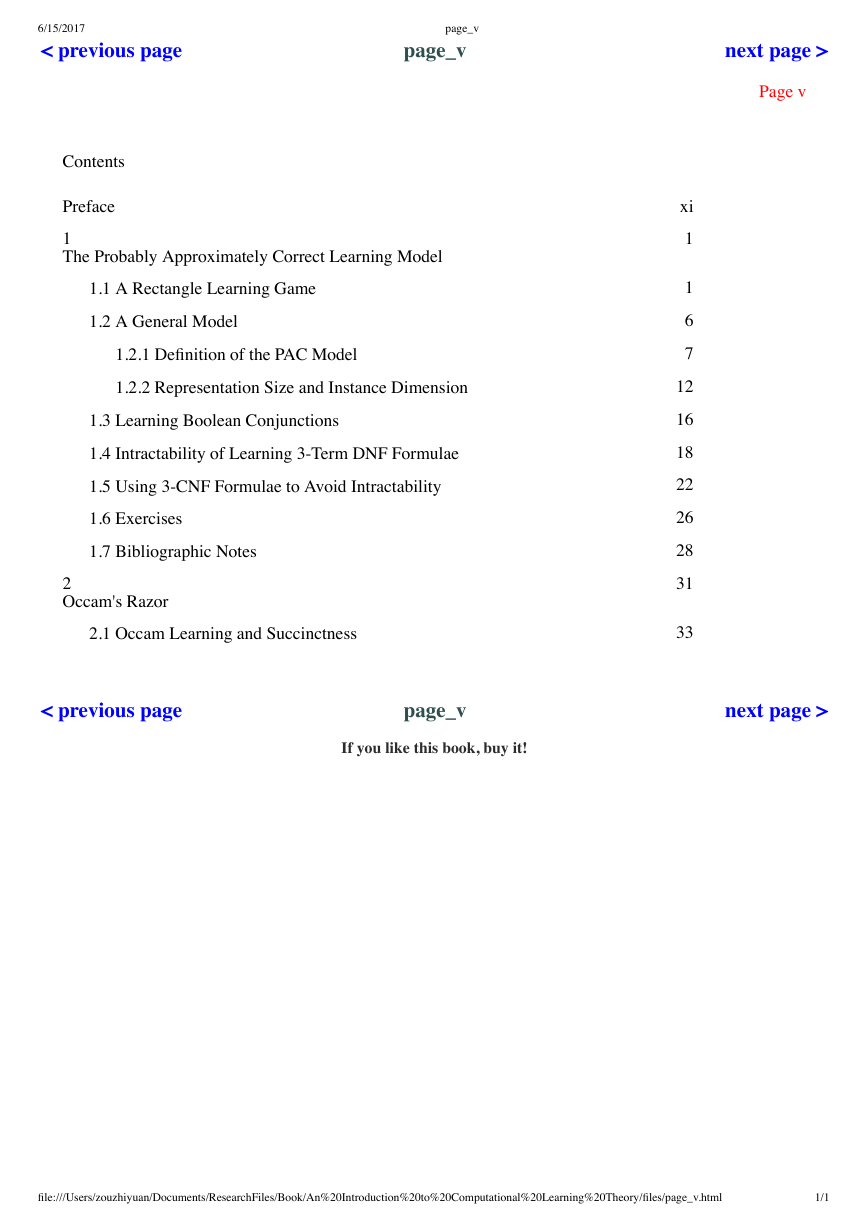

Contents

Preface

1

The Probably Approximately Correct Learning Model

1.1 A Rectangle Learning Game

1.2 A General Model

1.2.1 Definition of the PAC Model

1.2.2 Representation Size and Instance Dimension

1.3 Learning Boolean Conjunctions

1.4 Intractability of Learning 3-Term DNF Formulae

1.5 Using 3-CNF Formulae to Avoid Intractability

1.6 Exercises

1.7 Bibliographic Notes

2

Occam's Razor

2.1 Occam Learning and Succinctness

xi

1

1

6

7

12

16

18

22

26

28

31

33

< previous page

page_v

If you like this book, buy it!

next page >

file:///Users/zouzhiyuan/Documents/ResearchFiles/Book/An%20Introduction%20to%20Computational%20Learning%20Theory/files/page_v.html

1/1

�

6/15/2017

< previous page

page_vi

page_vi

next page >

Page vi

2.2 Improving the Sample Size for Learning Conjunctions

2.3 Learning Conjunctions with Few Relevant Variables

2.4 Learning Decision Lists

2.5 Exercises

2.6 Bibliographic Notes

3

The Vapnik-Chervonenkis Dimension

3.1 When Can Infinite Classes Be Learned with a Finite Sample?

3.2 The Vapnik-Chervonenkis Dimension

3.3 Examples of the VC Dimension

3.4 A Polynomial Bound on |PC(S)|

3.5 A Polynomial Bound on the Sample Size for PAC Learning

3.5.1 The Importance of e-Nets

3.5.2 A Small e-Net from Random Sampling

3.6 Sample Size Lower Bounds

3.7 An Application to Neural Networks

3.8 Exercises

3.9 Bibliographic Notes

4

Weak and Strong Learning

4.1 A Relaxed Definition of Learning?

4.2 Boosting the Confidence

37

38

42

44

46

49

49

50

51

54

57

57

59

62

64

67

70

73

73

76

< previous page

page_vi

If you like this book, buy it!

next page >

file:///Users/zouzhiyuan/Documents/ResearchFiles/Book/An%20Introduction%20to%20Computational%20Learning%20Theory/files/page_vi.html

1/1

�

6/15/2017

< previous page

page_vii

page_vii

next page >

Page vii

4.3 Boosting the Accuracy

4.3.1 A Modest Accuracy Boosting Procedure

4.3.2 Error Analysis for the Modest Procedure

4.3.3 A Recursive Accuracy Boosting Algorithm

4.3.4 Bounding the Depth of the Recursion

4.3.5 Analysis of Filtering Efficiency

4.3.6 Finishing Up

4.4 Exercises

4.5 Bibliographic Notes

5

Learning in the Presence of Noise

5.1 The Classification Noise Model

5.2 An Algorithm for Learning Conjunctions from Statistics

5.3 The Statistical Query Learning Model

5.4 Simulating Statistical Queries in the Presence of Noise

5.4.1 A Nice Decomposition of Pc

5.4.2 Solving for an Estimate of Pc

5.4.3 Guessing and Verifying the Noise Rate

5.4.4 Description of the Simulation Algorithm

5.5 Exercises

5.6 Bibliographic Notes

< previous page

78

79

81

85

88

89

96

101

102

103

104

106

108

111

112

114

115

117

119

121

page_vii

If you like this book, buy it!

next page >

file:///Users/zouzhiyuan/Documents/ResearchFiles/Book/An%20Introduction%20to%20Computational%20Learning%20Theory/files/page_vii.html

1/1

�

6/15/2017

< previous page

page_viii

page_viii

next page >

Page viii

6

Inherent Unpredictability

6.1 Representation Dependent and Independent Hardness

6.2 The Discrete Cube Root Problem

6.2.1 The Difficulty of Discrete Cube Roots

6.2.2 Discrete Cube Roots As a Learning Problem

6.3 Small Boolean Circuits Are Inherently Unpredictable

6.4 Reducing the Depth of Inherently Unpredictable Circuits

6.4.1 Expanding the Input

6.5 A General Method and Its Application to Neural Networks

6.6 Exercises

6.7 Bibliographic Notes

7

Reducibility in PAC Learning

7.1 Reducing DNF to Monotone DNF

7.2 A General Method for Reducibility

7.3 Reducing Boolean Formulae to Finite Automata

7.4 Exercises

7.5 Bibliographic Notes

8

Learning Finite Automata by Experimentation

8.1 Active and Passive Learning

8.2 Exact Learning Using Queries

123

123

124

126

128

131

133

135

139

140

141

143

144

147

149

153

154

155

155

158

< previous page

page_viii

If you like this book, buy it!

next page >

file:///Users/zouzhiyuan/Documents/ResearchFiles/Book/An%20Introduction%20to%20Computational%20Learning%20Theory/files/page_viii.html

1/1

�

6/15/2017

< previous page

page_ix

page_ix

next page >

Page ix

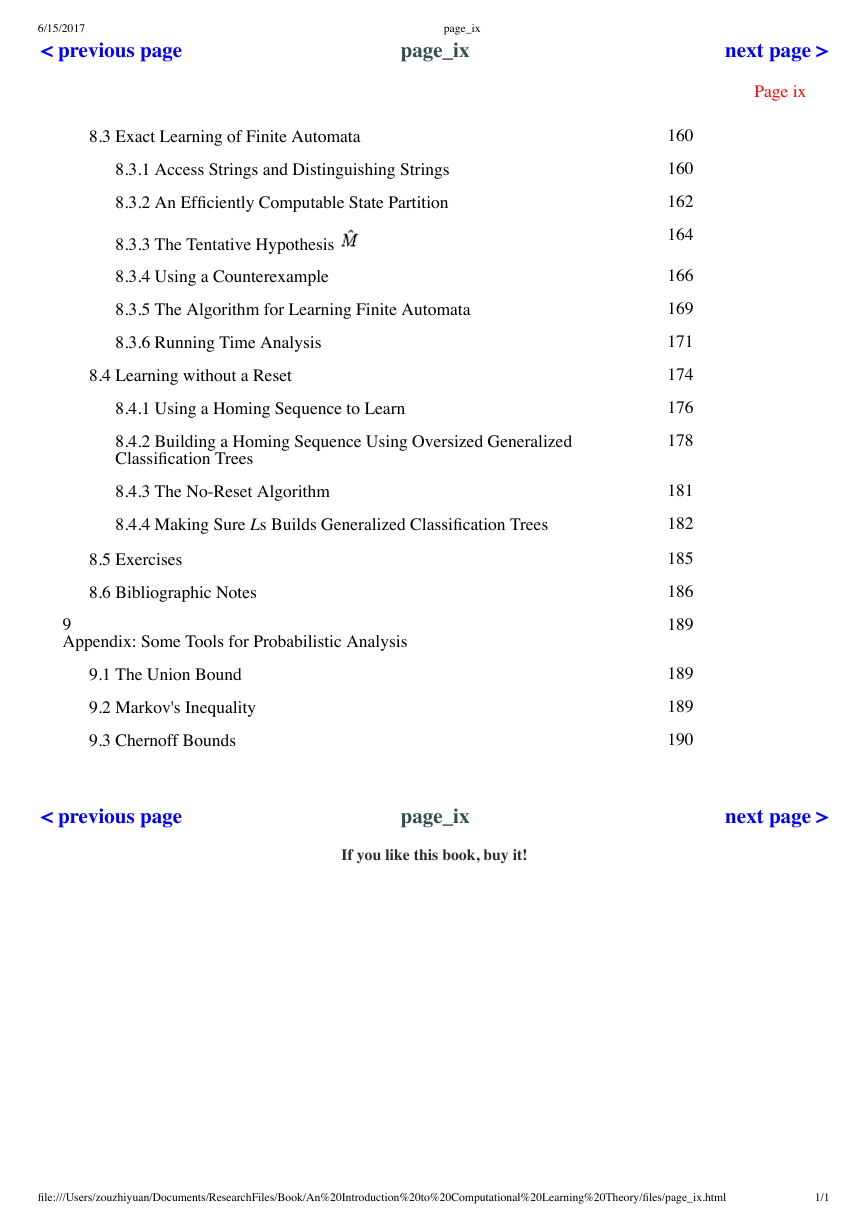

8.3 Exact Learning of Finite Automata

8.3.1 Access Strings and Distinguishing Strings

8.3.2 An Efficiently Computable State Partition

8.3.3 The Tentative Hypothesis

8.3.4 Using a Counterexample

8.3.5 The Algorithm for Learning Finite Automata

8.3.6 Running Time Analysis

8.4 Learning without a Reset

8.4.1 Using a Homing Sequence to Learn

8.4.2 Building a Homing Sequence Using Oversized Generalized

Classification Trees

8.4.3 The No-Reset Algorithm

8.4.4 Making Sure Ls Builds Generalized Classification Trees

8.5 Exercises

8.6 Bibliographic Notes

9

Appendix: Some Tools for Probabilistic Analysis

9.1 The Union Bound

9.2 Markov's Inequality

9.3 Chernoff Bounds

< previous page

160

160

162

164

166

169

171

174

176

178

181

182

185

186

189

189

189

190

page_ix

If you like this book, buy it!

next page >

file:///Users/zouzhiyuan/Documents/ResearchFiles/Book/An%20Introduction%20to%20Computational%20Learning%20Theory/files/page_ix.html

1/1

�

2023年江西萍乡中考道德与法治真题及答案.doc

2023年江西萍乡中考道德与法治真题及答案.doc 2012年重庆南川中考生物真题及答案.doc

2012年重庆南川中考生物真题及答案.doc 2013年江西师范大学地理学综合及文艺理论基础考研真题.doc

2013年江西师范大学地理学综合及文艺理论基础考研真题.doc 2020年四川甘孜小升初语文真题及答案I卷.doc

2020年四川甘孜小升初语文真题及答案I卷.doc 2020年注册岩土工程师专业基础考试真题及答案.doc

2020年注册岩土工程师专业基础考试真题及答案.doc 2023-2024学年福建省厦门市九年级上学期数学月考试题及答案.doc

2023-2024学年福建省厦门市九年级上学期数学月考试题及答案.doc 2021-2022学年辽宁省沈阳市大东区九年级上学期语文期末试题及答案.doc

2021-2022学年辽宁省沈阳市大东区九年级上学期语文期末试题及答案.doc 2022-2023学年北京东城区初三第一学期物理期末试卷及答案.doc

2022-2023学年北京东城区初三第一学期物理期末试卷及答案.doc 2018上半年江西教师资格初中地理学科知识与教学能力真题及答案.doc

2018上半年江西教师资格初中地理学科知识与教学能力真题及答案.doc 2012年河北国家公务员申论考试真题及答案-省级.doc

2012年河北国家公务员申论考试真题及答案-省级.doc 2020-2021学年江苏省扬州市江都区邵樊片九年级上学期数学第一次质量检测试题及答案.doc

2020-2021学年江苏省扬州市江都区邵樊片九年级上学期数学第一次质量检测试题及答案.doc 2022下半年黑龙江教师资格证中学综合素质真题及答案.doc

2022下半年黑龙江教师资格证中学综合素质真题及答案.doc