NVIDIA TESLA V100 GPU ARCHITECTURE

THE WORLD’S MOST ADVANCED DATA CENTER GPU

WP-08608-001_v1.1 | August 2017


TABLE OF CONTENTS

Introduction to the NVIDIA Tesla V100 GPU Architecture

Tesla V100: The AI Computing and HPC Powerhouse

Key Features

Extreme Performance for AI and HPC

NVIDIA GPUs: The Fastest and Most Flexible Deep Learning Platform

Deep Learning Background

GPU-Accelerated Deep Learning

GV100 GPU Hardware Architecture In-Depth

Extreme Performance and High Efficiency

Volta Streaming Multiprocessor

Tensor Cores

Enhanced L1 Data Cache and Shared Memory

Simultaneous Execution of FP32 and INT32 Operations

Compute Capability

NVLink: Higher bandwidth, More Links, More Features

More Links, Faster Links

More Features

HBM2 Memory Architecture

ECC Memory Resiliency

Copy Engine Enhancements

Tesla V100 Board Design

GV100 CUDA Hardware and Software Architectural Advances

Independent Thread Scheduling

Prior NVIDIA GPU SIMT Models

Volta SIMT Model

Starvation-Free Algorithms

Volta Multi-Process Service

Unified Memory and Address Translation Services

Cooperative Groups

Conclusion

Appendix A NVIDIA DGX-1 with Tesla V100

NVIDIA DGX-1 System Specifications

DGX-1 Software

Appendix B NVIDIA DGX Station - A Personal AI Supercomputer for Deep Learning

Preloaded with the Latest Deep Learning Software

Kickstarting AI initiatives

Appendix C Accelerating Deep Learning and Artificial Intelligence with GPUs

Deep Learning in a Nutshell

NVIDIA GPUs: The Engine of Deep Learning

Training Deep Neural Networks

Inferencing Using a Trained Neural Network

Comprehensive Deep Learning Software Development Kit

Self-driving Cars

Robots

Healthcare and Life Sciences

LIST OF FIGURES

Figure 1. NVIDIA Tesla V100 SXM2 Module with Volta GV100 GPU

Figure 2. New Technologies in Tesla V100

Figure 3. Tesla V100 Provides a Major Leap in Deep Learning Performance with New Tensor Cores

Figure 4. Volta GV100 Full GPU with 84 SM Units

Figure 5. Volta GV100 Streaming Multiprocessor (SM)

Figure 6. cuBLAS Single Precision (FP32)

Figure 7. cuBLAS Mixed Precision (FP16 Input, FP32 Compute)

Figure 8. Tensor Core 4x4 Matrix Multiply and Accumulate

Figure 9. Mixed Precision Multiply and Accumulate in Tensor Core

Figure 10. Pascal and Volta 4x4 Matrix Multiplication

Figure 11. Comparison of Pascal and Volta Data Cache

Figure 12. Hybrid Cube Mesh NVLink Topology as used in DGX-1 with V100

Figure 13. V100 with NVLink Connected GPU-to-GPU and GPU-to-CPU

Figure 14. Second Generation NVLink Performance

Figure 15. HBM2 Memory Speedup on V100 vs P100

Figure 16. Tesla V100 Accelerator (Front)

Figure 17. Tesla V100 Accelerator (Back)

Figure 18. NVIDIA Tesla V100 SXM2 Module - Stylized Exploded View

Figure 19. Deep Learning Methods Developed Using CUDA

Figure 20. SIMT Warp Execution Model of Pascal and Earlier GPUs

Figure 21. Volta Warp with Per-Thread Program Counter and Call Stack

Figure 22. Volta Independent Thread Scheduling

Figure 23. Programs use Explicit Synchronization to Reconverge Threads in a Warp

Figure 24. Doubly Linked List with Fine-Grained Locks

Figure 25. Software-based MPS Service in Pascal vs Hardware-Accelerated MPS Service in Volta

Figure 26. Volta MPS for Inference

Figure 27. Two Phases of a Particle Simulation

Figure 28. NVIDIA DGX-1 Server

Figure 29. DGX-1 Delivers up to 3x Faster Training Compared to Eight-way GP100 Based Server

Figure 30. NVIDIA DGX-1 Fully Integrated Software Stack for Instant Productivity

Figure 31. Tesla V100 Powered DGX Station

Figure 32. NVIDIA DGX Station Delivers 47x Faster Training

Figure 33. Perceptron is the Simplest Model of a Neural Network

Figure 34. Complex Multi-Layer Neural Network Models Require Increased Amounts of Compute Power

Figure 35. Training a Neural Network

Figure 36. Inferencing on a Neural Network

Figure 37. Accelerate Every Framework

Figure 38. Organizations Engaged with NVIDIA on Deep Learning

Figure 39. NVIDIA DriveNet

LIST OF TABLES

Table 1. Comparison of NVIDIA Tesla GPUs

Table 2. Compute Capabilities: GK180 vs GM200 vs GP100 vs GV100

Table 3. NVIDIA DGX-1 System Specifications

Table 4. DGX Station Specifications

INTRODUCTION TO THE NVIDIA TESLA V100 GPU ARCHITECTURE

Since the introduction of the pioneering CUDA GPU computing platform over 10 years ago, each new NVIDIA® GPU generation has delivered higher application performance, improved power efficiency, added important new compute features, and simplified GPU programming. Today, NVIDIA GPUs accelerate thousands of High Performance Computing (HPC), data center, and machine learning applications. NVIDIA GPUs have become the leading computational engines powering the Artificial Intelligence (AI) revolution.

NVIDIA GPUs accelerate numerous deep learning systems and applications including autonomous vehicle platforms, high-accuracy speech, image, and text recognition systems, intelligent video analytics, molecular simulations, drug discovery, disease diagnosis, weather forecasting, big data analytics, financial modeling, robotics, factory automation, real-time language translation, online search optimizations, and personalized user recommendations, to name just a few.

The new NVIDIA® Tesla® V100 accelerator (shown in Figure 1) incorporates the powerful new Volta™ GV100 GPU. GV100 not only builds upon the advances of its predecessor, the Pascal™ GP100 GPU, but also significantly improves performance and scalability and adds many new features that improve programmability. These advances will supercharge HPC, data center, supercomputer, and deep learning systems and applications.

This white paper presents the Tesla V100 accelerator and the Volta GV100 GPU architecture.

Figure 1.

NVIDIA Tesla V100 SXM2 Module with Volta GV100 GPU

The World’s Most Advanced Data Center GPU

WP-08608-001_v1.1 | 1

�

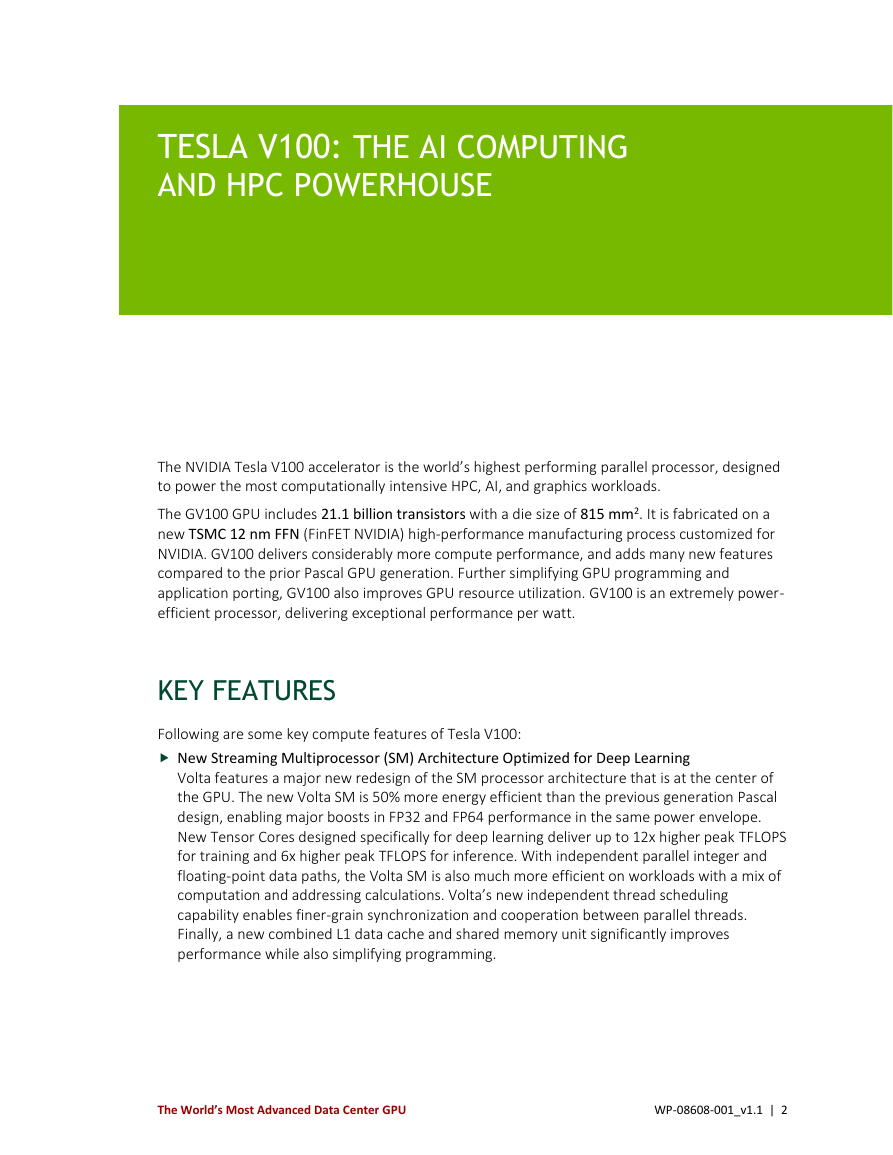

TESLA V100: THE AI COMPUTING AND HPC POWERHOUSE

The NVIDIA Tesla V100 accelerator is the world’s highest performing parallel processor, designed to power the most computationally intensive HPC, AI, and graphics workloads.

The GV100 GPU includes 21.1 billion transistors with a die size of 815 mm². It is fabricated on a new TSMC 12 nm FFN (FinFET NVIDIA) high-performance manufacturing process customized for NVIDIA. GV100 delivers considerably more compute performance and adds many new features compared to the prior Pascal GPU generation. GV100 also improves GPU resource utilization, further simplifying GPU programming and application porting. GV100 is an extremely power-efficient processor, delivering exceptional performance per watt.

KEY FEATURES

Following are some key compute features of Tesla V100:

New Streaming Multiprocessor (SM) Architecture Optimized for Deep Learning

Volta features a major redesign of the SM processor architecture at the center of the GPU. The new Volta SM is 50% more energy efficient than the previous-generation Pascal design, enabling major boosts in FP32 and FP64 performance in the same power envelope. New Tensor Cores designed specifically for deep learning deliver up to 12x higher peak TFLOPS for training and 6x higher peak TFLOPS for inference. With independent parallel integer and floating-point data paths, the Volta SM is also much more efficient on workloads with a mix of computation and addressing calculations. Volta’s new independent thread scheduling capability enables finer-grained synchronization and cooperation between parallel threads. Finally, a new combined L1 data cache and shared memory unit significantly improves performance while also simplifying programming.
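The Tensor Cores mentioned above each perform a 4x4 matrix multiply and accumulate, D = A×B + C, where A and B are FP16 matrices and, in mixed-precision mode, the accumulation is done in FP32. The Python sketch below emulates only that numeric data flow on the CPU. The helpers `to_fp16`, `to_fp32`, and `tensor_core_mma` are hypothetical, and the summation order is an assumption, so results may differ from real hardware in the last bit.

```python
import struct

def to_fp16(x: float) -> float:
    """Round a float to the nearest IEEE 754 half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def to_fp32(x: float) -> float:
    """Round a float to the nearest IEEE 754 single-precision (FP32) value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

def tensor_core_mma(a, b, c):
    """Emulate D = A x B + C on 4x4 matrices (hypothetical helper).

    A and B are rounded to FP16. Each FP16 x FP16 product fits exactly in FP32,
    and the running sum is rounded to FP32 after every addition.
    """
    n = 4
    a16 = [[to_fp16(v) for v in row] for row in a]
    b16 = [[to_fp16(v) for v in row] for row in b]
    d = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            acc = to_fp32(c[i][j])                        # FP32 accumulator starts at C
            for k in range(n):
                product = to_fp32(a16[i][k] * b16[k][j])  # exact: 11-bit x 11-bit significands
                acc = to_fp32(acc + product)              # accumulate in FP32
            d[i][j] = acc
    return d

identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
b = [[0.1] * 4 for _ in range(4)]
zeros = [[0.0] * 4 for _ in range(4)]
d = tensor_core_mma(identity, b, zeros)
print(d[0][0])  # 0.0999755859375, the FP16 value nearest to 0.1
```

The input rounding to FP16 is where precision is lost. Keeping the accumulator in FP32 prevents the many partial sums of a large matrix product from compounding that loss.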


Second-Generation NVIDIA NVLink™

The second generation of NVIDIA’s NVLink high-speed interconnect delivers higher bandwidth, more links, and improved scalability for multi-GPU and multi-GPU/CPU system configurations. Volta GV100 supports up to six NVLink links and a total bandwidth of 300 GB/s, compared to four NVLink links and 160 GB/s total bandwidth on GP100. NVLink now supports CPU mastering and cache coherence with IBM POWER9 CPU-based servers. The new NVIDIA DGX-1 with V100 AI supercomputer uses NVLink to deliver greater scalability for ultra-fast deep learning training.
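The link counts and total bandwidths quoted for GV100 and GP100 imply a per-link rate. The worked calculation below uses only those figures and treats each total as an aggregate over all links. The helper `per_link_gb_s` is hypothetical.

```python
def per_link_gb_s(total_gb_s: float, links: int) -> float:
    """Average bandwidth per NVLink link, given an aggregate figure (hypothetical helper)."""
    return total_gb_s / links

gv100 = per_link_gb_s(300, 6)  # Volta GV100: six links, 300 GB/s total
gp100 = per_link_gb_s(160, 4)  # Pascal GP100: four links, 160 GB/s total
print(gv100, gp100)            # 50.0 40.0
print(300 / 160)               # 1.875
```

The roughly 1.9x aggregate gain therefore has two sources. Each link is faster (50 vs 40 GB/s, a factor of 1.25), and there are more links (six vs four, a factor of 1.5).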

HBM2 Memory: Faster, Higher Efficiency

Volta’s highly tuned 16 GB HBM2 memory subsystem delivers 900 GB/s peak memory bandwidth. The combination of a new-generation HBM2 memory from Samsung and a new-generation memory controller in Volta provides 1.5x the delivered memory bandwidth of Pascal GP100, with up to 95% memory bandwidth utilization on many workloads.
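The 900 GB/s peak and the 95% utilization figure together give a best-case estimate of delivered bandwidth, and from that, the time needed to stream a buffer through memory. The sketch below makes two assumptions: the 95% ceiling is reached, and "16 GB" means 16 × 10⁹ bytes. The helper `streaming_time_ms` is hypothetical.

```python
PEAK_GB_S = 900.0    # V100 peak HBM2 bandwidth quoted above
UTILIZATION = 0.95   # "up to 95%" utilization, treated here as a best case

def streaming_time_ms(num_bytes: float,
                      peak_gb_s: float = PEAK_GB_S,
                      utilization: float = UTILIZATION) -> float:
    """Best-case time to move num_bytes through DRAM once (hypothetical helper)."""
    effective_bytes_per_s = peak_gb_s * 1e9 * utilization
    return num_bytes / effective_bytes_per_s * 1e3

print(PEAK_GB_S * UTILIZATION)             # about 855 GB/s delivered
print(round(streaming_time_ms(16e9), 2))   # one full pass over 16 GB: 18.71 ms
```

For a memory-bound kernel, the bytes moved rather than the arithmetic sets the lower bound on runtime. That is why delivered bandwidth, and not only peak bandwidth, matters.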

Volta Multi-Process Service

Volta Multi-Process Service (MPS) is a new feature of the Volta GV100 architecture providing hardware acceleration of critical components of the CUDA MPS server, enabling improved performance, isolation, and better quality of service (QoS) for multiple compute applications sharing the GPU. Volta MPS also triples the maximum number of MPS clients from 16 on Pascal to 48 on Volta.

Enhanced Unified Memory and Address Translation Services

GV100 Unified Memory technology includes new access counters to allow more accurate migration of memory pages to the processor that accesses them most frequently, improving efficiency for memory ranges shared between processors. On IBM Power platforms, new Address Translation Services (ATS) support allows the GPU to access the CPU’s page tables directly.
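Migration driven by access counters, as described above, can be sketched as a toy model. Each page lives on one processor, every access bumps a per-processor counter, and a page moves only when another processor clearly out-accesses its current owner. The class `AccessCounterMigrator` and its threshold rule are hypothetical illustrations, not the actual GV100 hardware or CUDA driver policy.

```python
from collections import defaultdict

class AccessCounterMigrator:
    """Toy page-placement model driven by per-processor access counters.

    Hypothetical: the threshold rule below is an illustrative assumption,
    not the real GV100 hardware or CUDA driver heuristic.
    """

    def __init__(self, threshold: int = 4):
        self.threshold = threshold
        self.location = {}                                   # page -> processor holding it
        self.counts = defaultdict(lambda: defaultdict(int))  # page -> processor -> accesses

    def access(self, page: int, proc: str) -> str:
        """Record one access and return where the page lives afterwards."""
        self.location.setdefault(page, proc)  # first touch places the page
        self.counts[page][proc] += 1
        owner = self.location[page]
        lead = self.counts[page][proc] - self.counts[page][owner]
        if proc != owner and lead >= self.threshold:
            self.location[page] = proc        # migrate toward the frequent accessor
            self.counts[page].clear()         # start counting afresh after migration
        return self.location[page]

m = AccessCounterMigrator(threshold=3)
m.access(0, "cpu")                              # CPU touches page 0 first
print([m.access(0, "gpu") for _ in range(4)])   # ['cpu', 'cpu', 'cpu', 'gpu']
```

Requiring a margin before migrating means that an occasional remote access leaves the page where it is. This avoids pages bouncing back and forth between processors that share a memory range.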

Maximum Performance and Maximum Efficiency Modes

In Maximum Performance mode, the Tesla V100 accelerator operates up to its TDP (Thermal Design Power) level of 300 W to accelerate applications that require the fastest computational speed and highest data throughput. Maximum Efficiency mode allows data center managers to tune the power usage of their Tesla V100 accelerators for optimal performance per watt. A not-to-exceed power cap can be set across all GPUs in a rack, reducing power consumption dramatically while still obtaining excellent rack performance.
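At its simplest, setting a rack-wide power cap means dividing a power budget across the GPUs so that no GPU exceeds the 300 W TDP. The sketch below shows only that arithmetic, assuming an even split. On real systems the per-GPU limit is applied with tools such as `nvidia-smi --power-limit`, and each GPU also enforces a minimum limit, which is not modeled here. The helper `per_gpu_cap_w` is hypothetical.

```python
TDP_W = 300.0  # Tesla V100 TDP (Maximum Performance mode)

def per_gpu_cap_w(rack_budget_w: float, num_gpus: int) -> float:
    """Even split of a rack power budget, never above TDP (hypothetical helper)."""
    if num_gpus <= 0:
        raise ValueError("num_gpus must be positive")
    return min(TDP_W, rack_budget_w / num_gpus)

print(per_gpu_cap_w(2000, 8))  # 250.0 W per GPU
print(per_gpu_cap_w(4000, 8))  # 300.0 W: the budget exceeds 8 x TDP, so TDP applies
```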

Cooperative Groups and New Cooperative Launch APIs

Cooperative Groups is a new programming model introduced in CUDA 9 for organizing groups of communicating threads. Cooperative Groups allows developers to express the granularity at which threads are communicating, helping them to express richer, more efficient parallel decompositions. Basic Cooperative Groups functionality is supported on all NVIDIA GPUs since Kepler. Pascal and Volta include support for new cooperative launch APIs that support synchronization among CUDA thread blocks. Volta adds support for new synchronization patterns.
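In CUDA, synchronization across thread blocks is expressed with Cooperative Groups, for example `cooperative_groups::this_grid().sync()` inside a kernel launched through `cudaLaunchCooperativeKernel`. The sketch below is not CUDA code. It is a CPU analogy in Python: each worker thread stands in for a thread block, and `threading.Barrier` plays the role of the grid-wide barrier between two phases of a computation. The function `two_phase_normalize` is hypothetical.

```python
import threading

def two_phase_normalize(values, num_workers=4):
    """Divide each value by the global sum, with a barrier between phases.

    Each worker thread stands in for a thread block, and the barrier stands
    in for a grid-wide sync. Assumes the values do not sum to zero.
    """
    n = len(values)
    partial = [0] * num_workers  # one partial sum per worker
    result = [0.0] * n
    barrier = threading.Barrier(num_workers)

    def worker(w):
        # Phase 1: each worker reduces its strided slice of the input.
        partial[w] = sum(values[w::num_workers])
        # No worker may read the partial sums until all of them are written.
        barrier.wait()
        total = sum(partial)
        # Phase 2: each worker normalizes its own slice using the global total.
        for i in range(w, n, num_workers):
            result[i] = values[i] / total

    threads = [threading.Thread(target=worker, args=(w,)) for w in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return result

print(two_phase_normalize([1, 2, 3, 4]))  # [0.1, 0.2, 0.3, 0.4]
```

Without a grid-wide barrier, a GPU version of this pattern would have to be split into two separate kernel launches, with the launch boundary acting as the synchronization point.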
