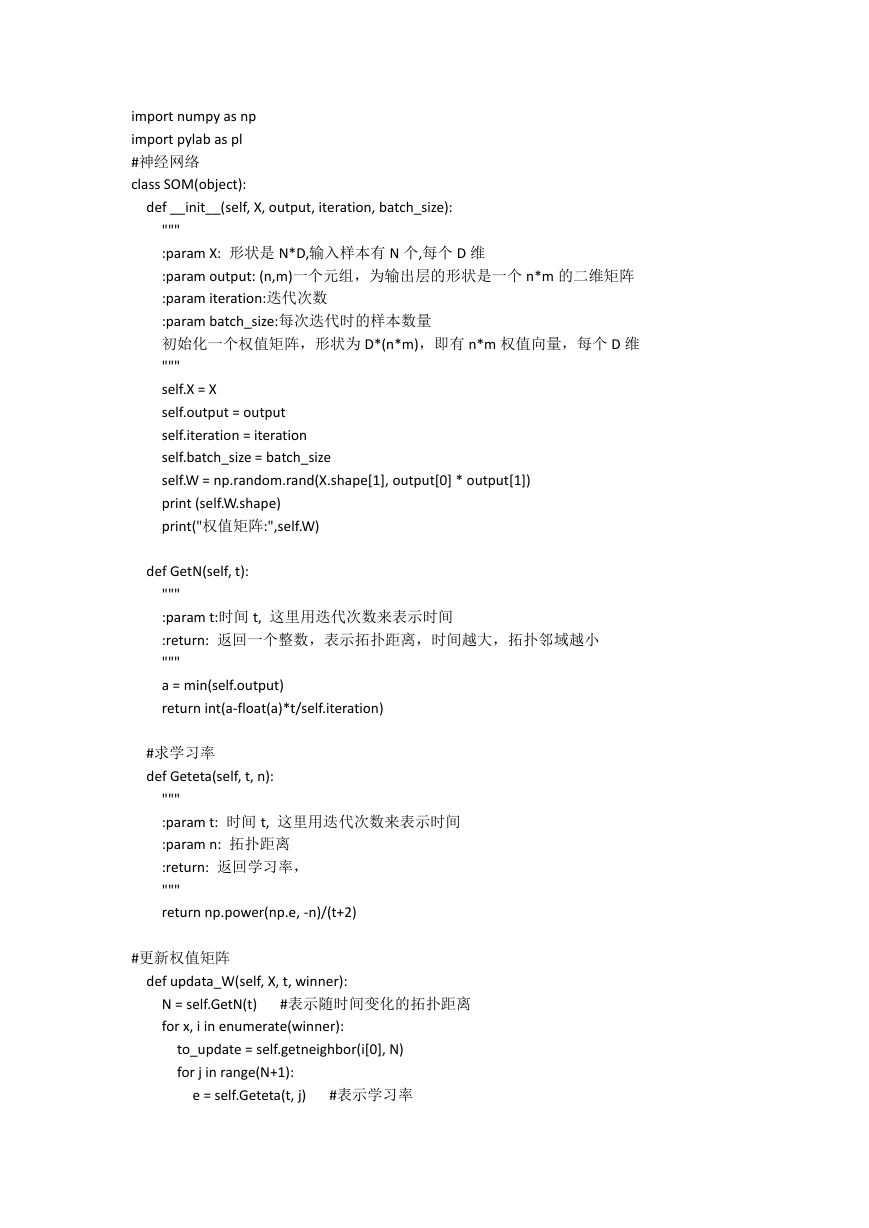

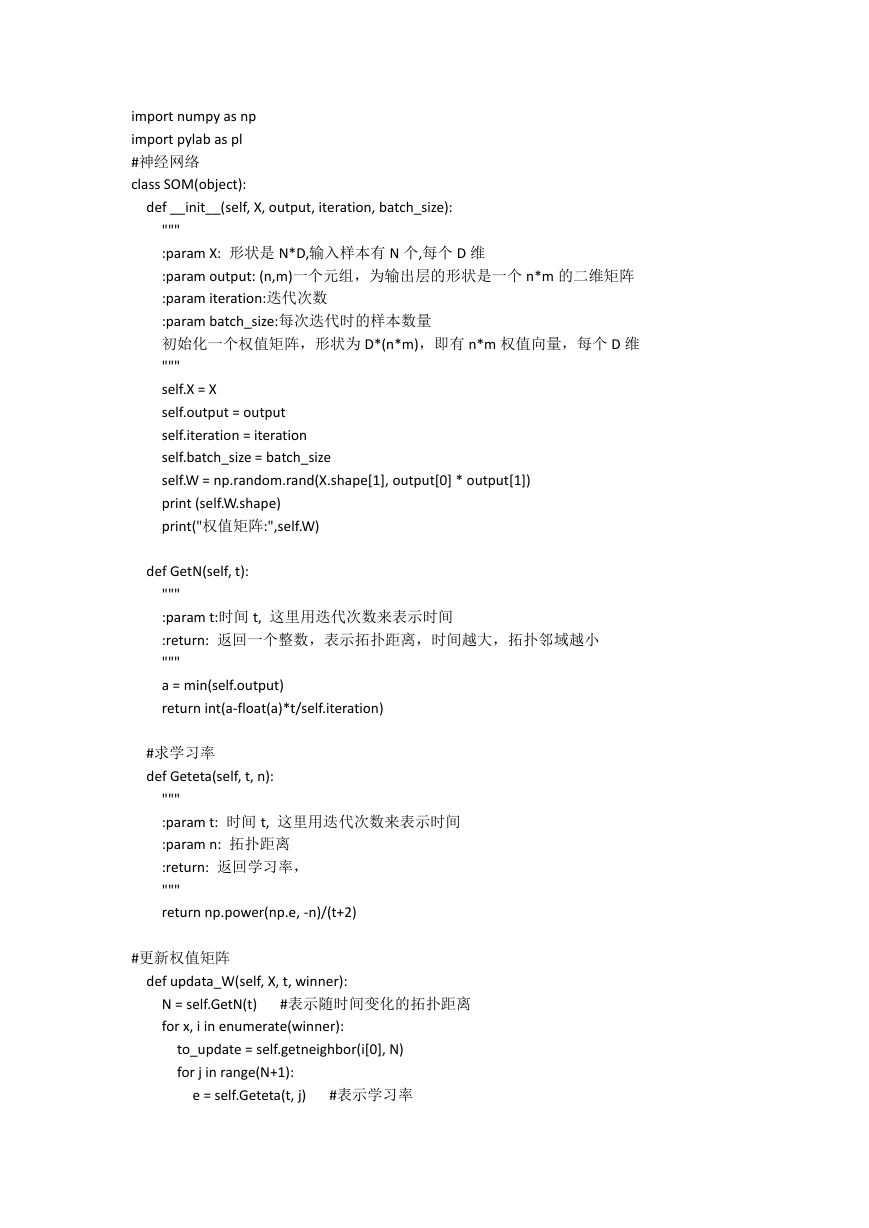

import numpy as np

import pylab as pl

# Self-organizing map (SOM) neural network

class SOM(object):
    def __init__(self, X, output, iteration, batch_size):
        """
        :param X: input samples of shape N*D (N samples, each D-dimensional)
        :param output: a tuple (n, m); the output layer is an n*m two-dimensional grid
        :param iteration: number of iterations
        :param batch_size: number of samples used in each iteration
        Initializes a weight matrix of shape D*(n*m), i.e. n*m weight vectors, each D-dimensional.
        """
        self.X = X
        self.output = output
        self.iteration = iteration
        self.batch_size = batch_size
        self.W = np.random.rand(X.shape[1], output[0] * output[1])
        print(self.W.shape)
        print("Weight matrix:", self.W)

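    def GetN(self, t):
        """
        :param t: time t, represented here by the iteration count
        :return: an integer topological distance; the larger t is, the smaller the topological neighborhood
        """
        a = min(self.output)
        return int(a - float(a) * t / self.iteration)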

    # Compute the learning rate
    def Geteta(self, t, n):
        """
        :param t: time t, represented here by the iteration count
        :param n: topological distance
        :return: the learning rate
        """
        return np.power(np.e, -n) / (t + 2)

    # Update the weight matrix
    def updata_W(self, X, t, winner):
        # Topological distance (neighborhood radius), which changes over time
        N = self.GetN(t)
        for x, i in enumerate(winner):
            to_update = self.getneighbor(i[0], N)
            for j in range(N + 1):
                # Learning rate for neurons at topological distance j
                e = self.Geteta(t, j)
                for w in to_update[j]:
                    self.W[:, w] = np.add(self.W[:, w], e * (X[x, :] - self.W[:, w]))

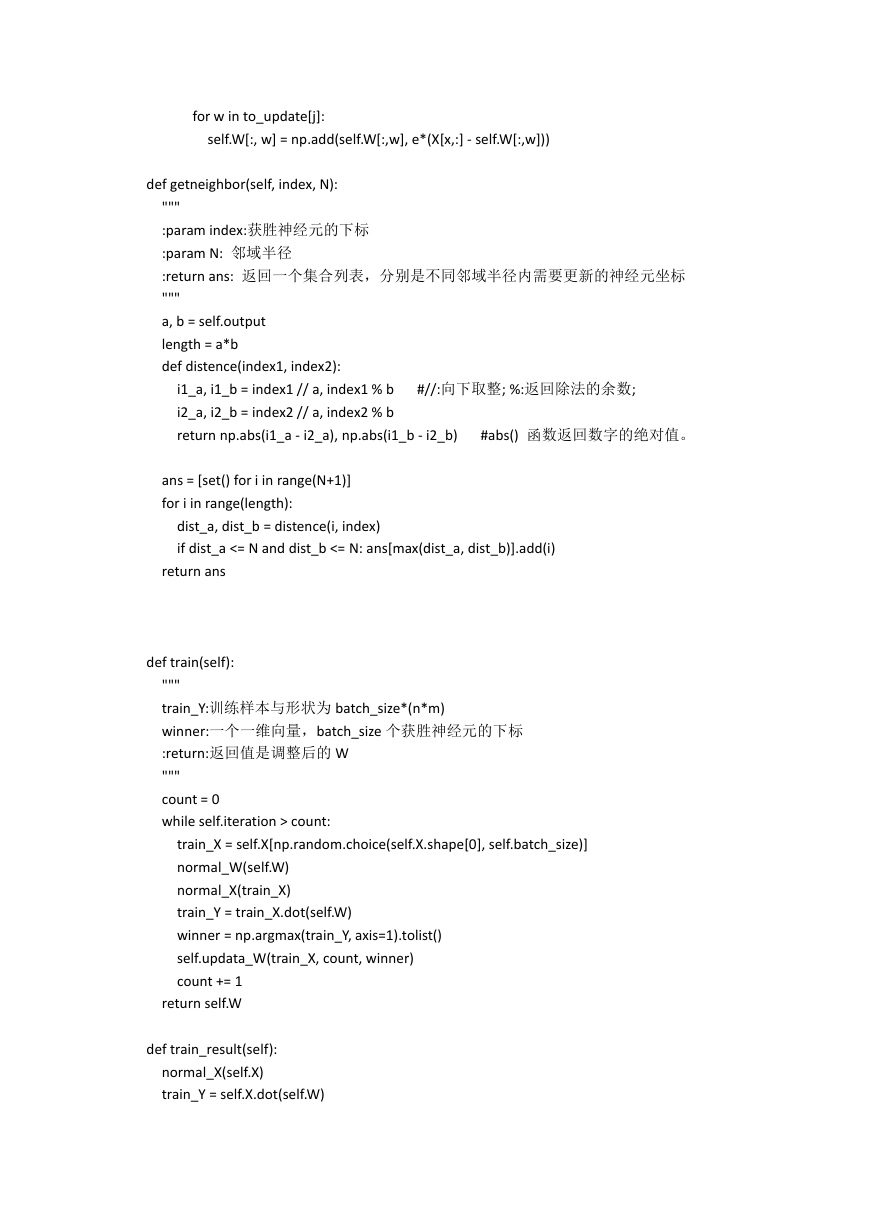

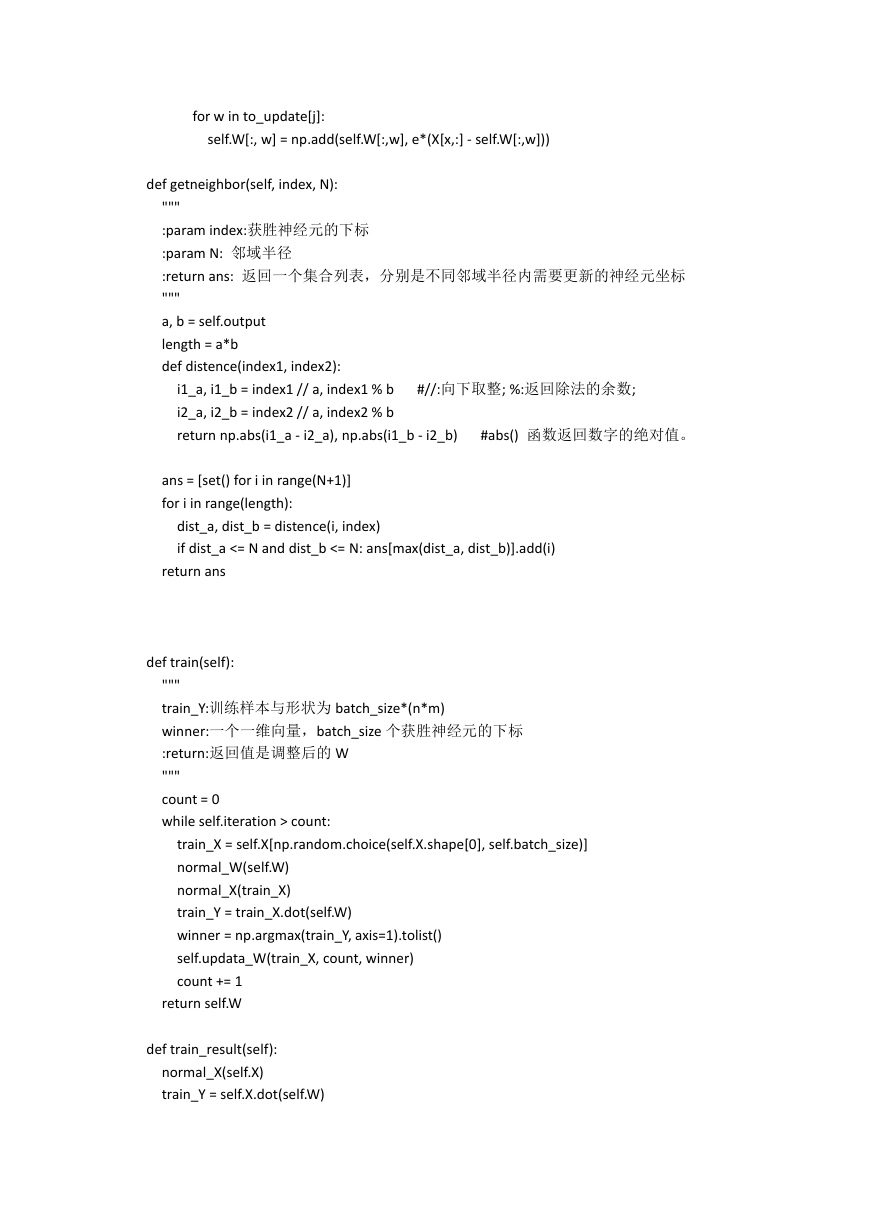

    def getneighbor(self, index, N):
        """
        :param index: index of the winning neuron
        :param N: neighborhood radius
        :return ans: a list of sets; ans[d] holds the indices of the neurons at distance d that need updating
        """
        a, b = self.output
        length = a * b

        def distence(index1, index2):
            # //: floor division; %: remainder of the division.
            # With an a*b grid numbered row by row, row = index // b and column = index % b.
            i1_a, i1_b = index1 // b, index1 % b
            i2_a, i2_b = index2 // b, index2 % b
            # abs() returns the absolute value of a number.
            return np.abs(i1_a - i2_a), np.abs(i1_b - i2_b)

        ans = [set() for i in range(N + 1)]
        for i in range(length):
            dist_a, dist_b = distence(i, index)
            if dist_a <= N and dist_b <= N:
                ans[max(dist_a, dist_b)].add(i)
        return ans

    def train(self):
        """
        train_Y: product of the training samples and W, with shape batch_size*(n*m)
        winner: a one-dimensional vector holding the indices of the batch_size winning neurons
        :return: the adjusted W
        """
        count = 0
        while self.iteration > count:
            train_X = self.X[np.random.choice(self.X.shape[0], self.batch_size)]
            normal_W(self.W)
            normal_X(train_X)
            train_Y = train_X.dot(self.W)
            winner = np.argmax(train_Y, axis=1).tolist()
            self.updata_W(train_X, count, winner)
            count += 1
        return self.W

    def train_result(self):
        normal_X(self.X)
        train_Y = self.X.dot(self.W)
        winner = np.argmax(train_Y, axis=1).tolist()
        print(winner)
        return winner

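def normal_X(X):
    """
    :param X: two-dimensional matrix of shape N*D (N samples, each D-dimensional)
    :return: X with each row normalized to unit length (X is modified in place)
    """
    N, D = X.shape
    for i in range(N):
        temp = np.sum(np.multiply(X[i], X[i]))
        X[i] /= np.sqrt(temp)
    return X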

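def normal_W(W):
    """
    :param W: two-dimensional matrix of shape D*(n*m), i.e. n*m weight vectors stored as D-dimensional columns
    :return: W with each column normalized to unit length (W is modified in place)
    """
    for i in range(W.shape[1]):
        temp = np.sum(np.multiply(W[:, i], W[:, i]))
        W[:, i] /= np.sqrt(temp)
    return W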

# Plotting
def draw(C):
    colValue = ['r', 'y', 'g', 'b', 'c', 'k', 'm']
    for i in range(len(C)):
        coo_X = []  # list of x coordinates
        coo_Y = []  # list of y coordinates
        for j in range(len(C[i])):
            coo_X.append(C[i][j][0])
            coo_Y.append(C[i][j][1])
        pl.scatter(coo_X, coo_Y, marker='x', color=colValue[i % len(colValue)], label=i)
    pl.legend(loc='upper right')  # legend position
    pl.show()

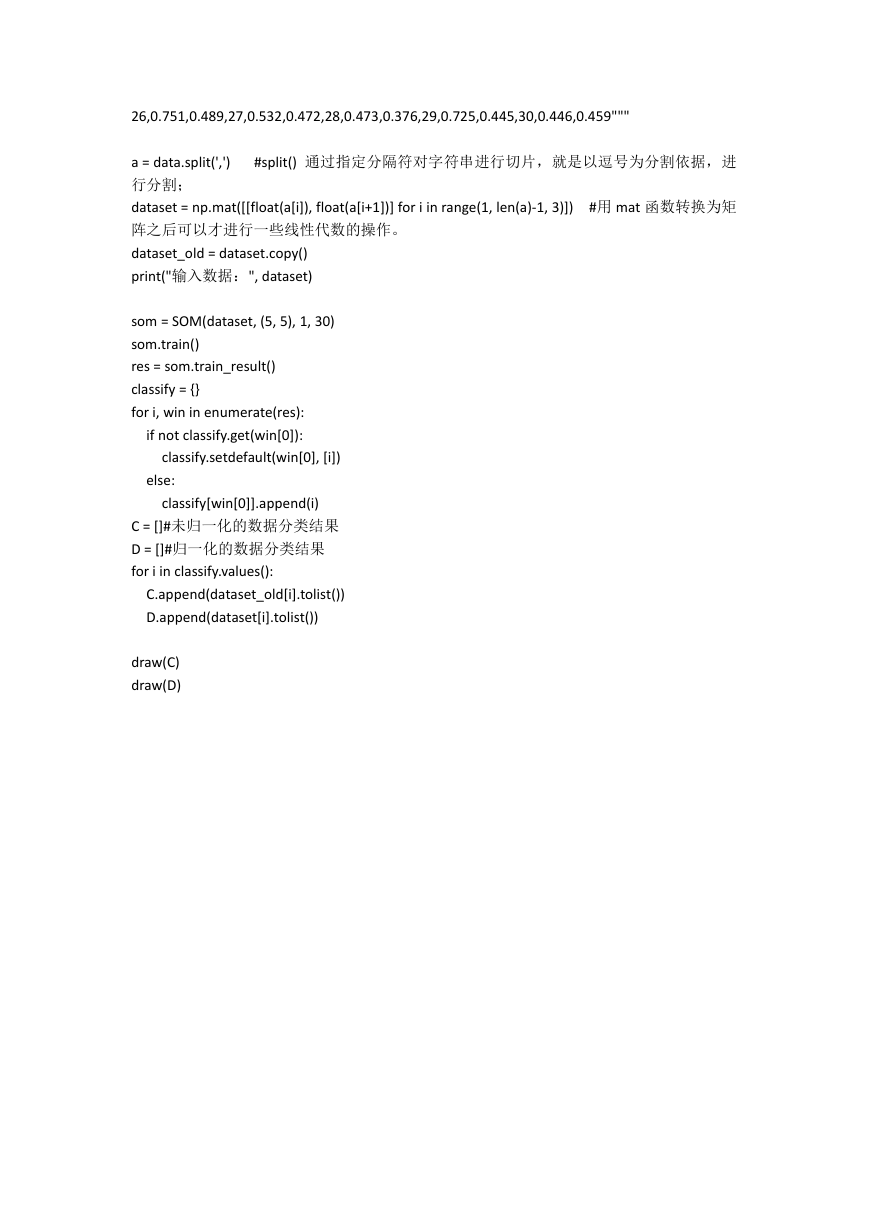

# Dataset: each group of three values is a watermelon's ID, density, and sugar content

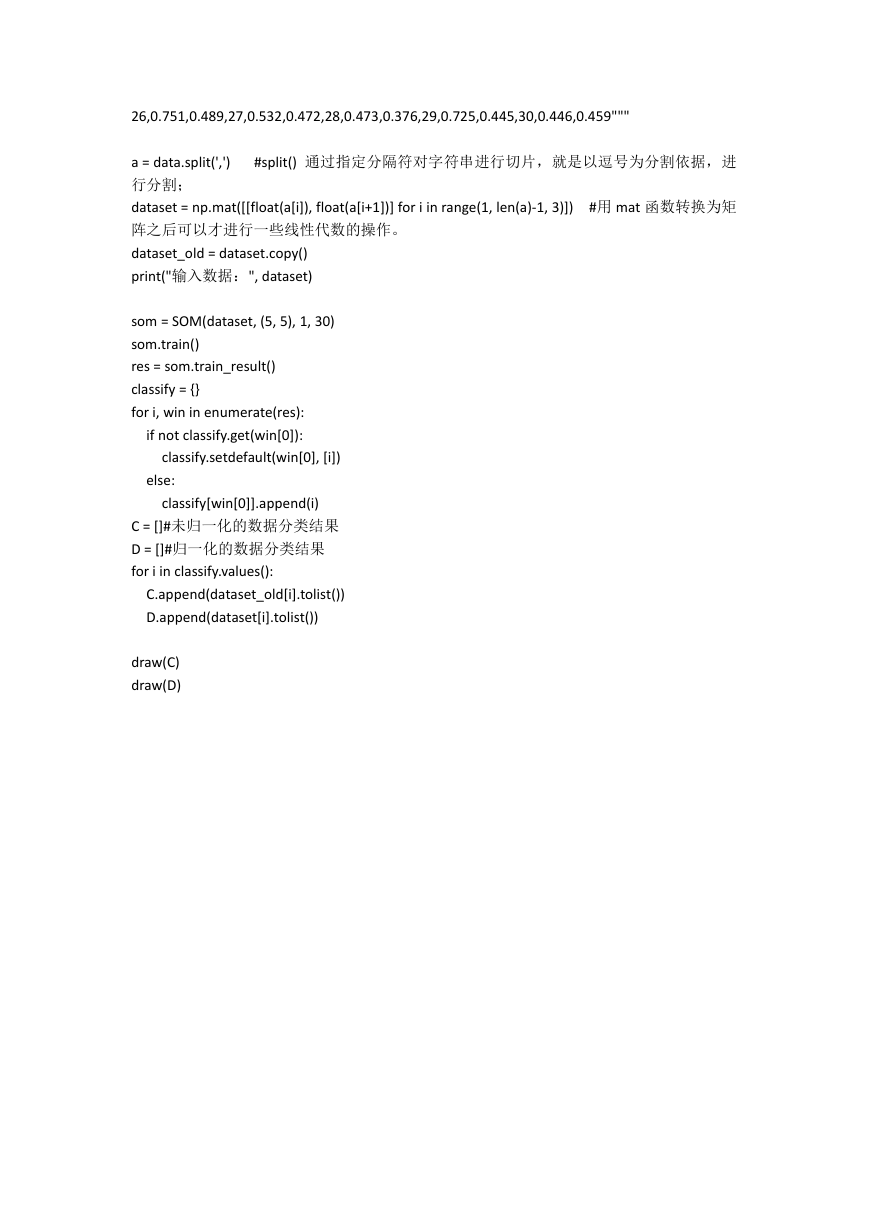

data = """

1,0.697,0.46,2,0.774,0.376,3,0.634,0.264,4,0.608,0.318,5,0.556,0.215,

6,0.403,0.237,7,0.481,0.149,8,0.437,0.211,9,0.666,0.091,10,0.243,0.267,

11,0.245,0.057,12,0.343,0.099,13,0.639,0.161,14,0.657,0.198,15,0.36,0.37,

16,0.593,0.042,17,0.719,0.103,18,0.359,0.188,19,0.339,0.241,20,0.282,0.257,

21,0.748,0.232,22,0.714,0.346,23,0.483,0.312,24,0.478,0.437,25,0.525,0.369,

26,0.751,0.489,27,0.532,0.472,28,0.473,0.376,29,0.725,0.445,30,0.446,0.459"""

# split() slices a string on the given delimiter; here the string is split on commas.
a = data.split(',')

# Convert to a matrix with np.mat so that linear-algebra operations can be applied afterwards.
dataset = np.mat([[float(a[i]), float(a[i+1])] for i in range(1, len(a)-1, 3)])

dataset_old = dataset.copy()

print("Input data:", dataset)

som = SOM(dataset, (5, 5), 1, 30)
som.train()
res = som.train_result()

classify = {}
for i, win in enumerate(res):
    if not classify.get(win[0]):
        classify.setdefault(win[0], [i])
    else:
        classify[win[0]].append(i)

C = []  # classification result on the unnormalized data
D = []  # classification result on the normalized data
for i in classify.values():
    C.append(dataset_old[i].tolist())
    D.append(dataset[i].tolist())

draw(C)
draw(D)
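
The neighborhood radius and learning rate used during training can be easier to follow in isolation. The block below is a minimal standalone sketch, not part of the script above: the function names `neighborhood_radius`, `learning_rate`, and `neighbor_rings` are hypothetical, and it assumes the output grid is numbered row by row. It mirrors the formulas in `GetN`, `Geteta`, and `getneighbor`, so the shrinking radius and the decay of the learning rate with distance can be inspected without training.

```python
import math


def neighborhood_radius(t, iterations, grid_shape):
    """Radius shrinks linearly from min(grid_shape) towards 0 as t grows (same formula as GetN)."""
    a = min(grid_shape)
    return int(a - a * t / iterations)


def learning_rate(t, n):
    """Rate decays with iteration t and with topological distance n (same formula as Geteta)."""
    return math.exp(-n) / (t + 2)


def neighbor_rings(index, radius, grid_shape):
    """Group grid cells by their Chebyshev distance from the winning cell.

    Assumes cells are numbered row by row on a rows*cols grid.
    """
    rows, cols = grid_shape
    r0, c0 = divmod(index, cols)
    rings = [set() for _ in range(radius + 1)]
    for i in range(rows * cols):
        r, c = divmod(i, cols)
        d = max(abs(r - r0), abs(c - c0))
        if d <= radius:
            rings[d].add(i)
    return rings


if __name__ == "__main__":
    # Print the radius and the per-ring learning rates at a few iterations.
    for t in (0, 5, 9):
        n = neighborhood_radius(t, iterations=10, grid_shape=(5, 5))
        print(t, n, [round(learning_rate(t, d), 4) for d in range(n + 1)])
    # Rings around the center cell of a 5*5 grid.
    print(neighbor_rings(12, 1, (5, 5)))
```

Each ring `d` is updated with `learning_rate(t, d)`, so the winning neuron moves the most and neurons farther away move progressively less.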
