DP-200

Implementing an Azure Data Solution

193Q

nityte�

Implement data storage solutions

Question Set 1

QUESTION 1

You are a data engineer implementing a lambda architecture on Microsoft Azure. You use an open-source big data solution to collect, process, and maintain data. The analytical data store performs poorly.

You must implement a solution that meets the following requirements:

Provide data warehousing

Reduce ongoing management activities

Deliver SQL query responses in less than one second

You need to create an HDInsight cluster to meet the requirements.

Which type of cluster should you create?

A. Interactive Query

B. Apache Hadoop

C. Apache HBase

D. Apache Spark

Correct Answer: D

Section: (none)

Explanation

Explanation/Reference:

Explanation:

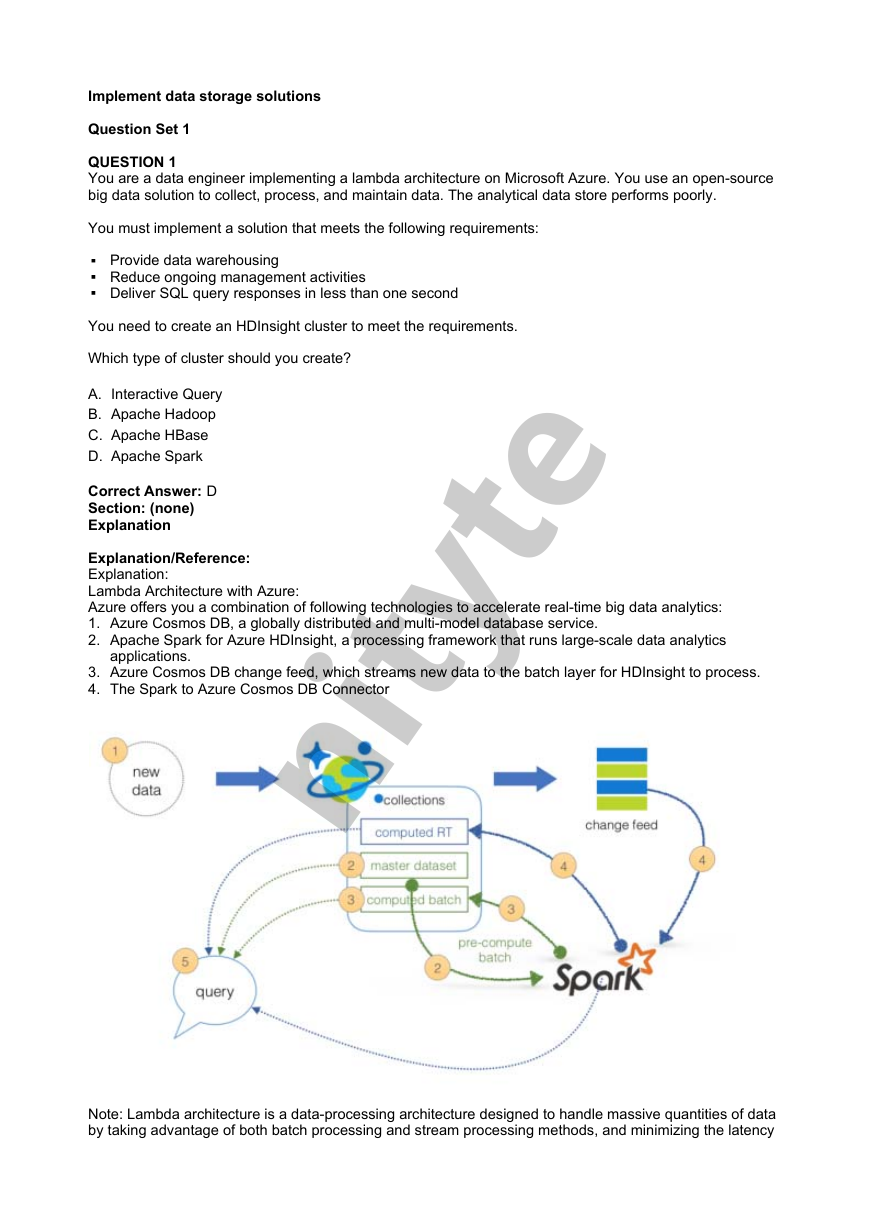

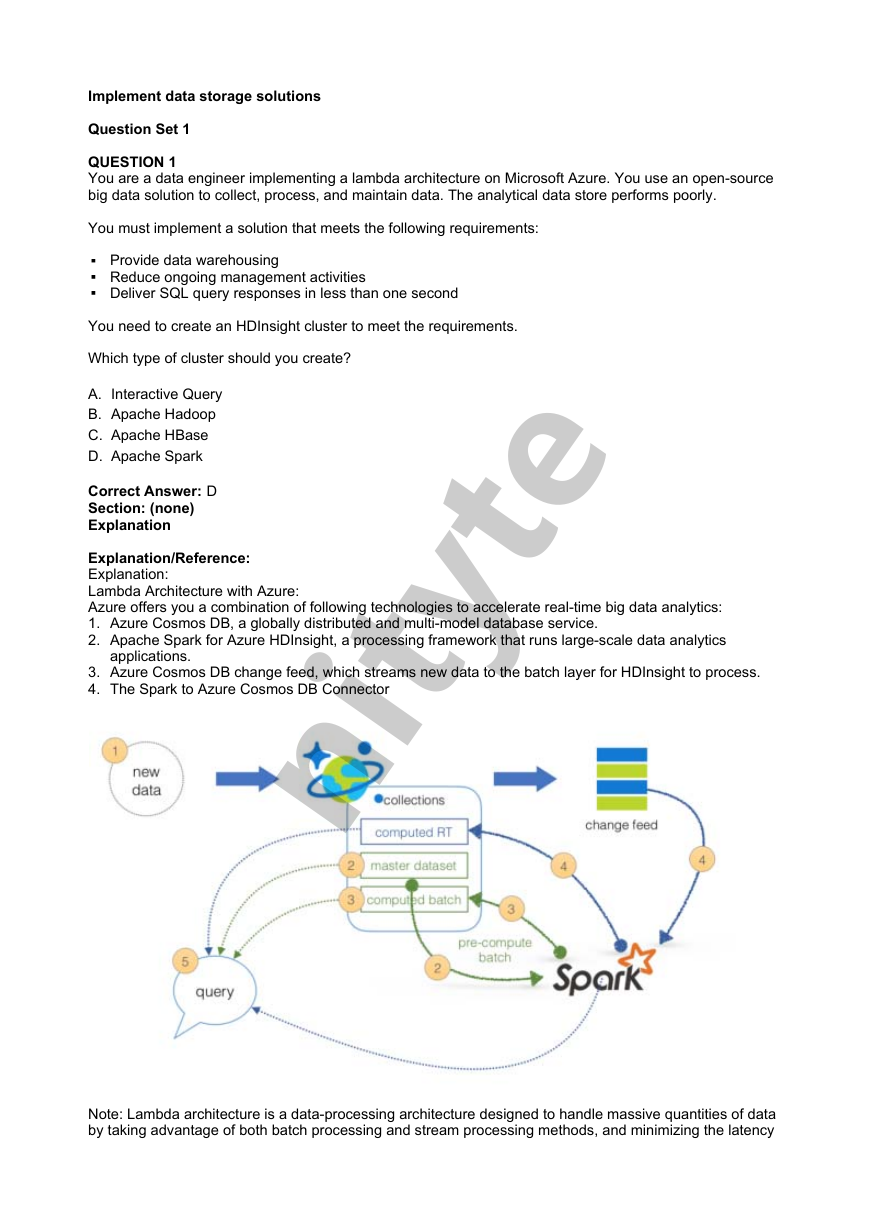

Lambda Architecture with Azure:

Azure offers a combination of the following technologies to accelerate real-time big data analytics:

1. Azure Cosmos DB, a globally distributed and multi-model database service.

2. Apache Spark for Azure HDInsight, a processing framework that runs large-scale data analytics applications.

3. Azure Cosmos DB change feed, which streams new data to the batch layer for HDInsight to process.

4. The Spark to Azure Cosmos DB Connector.

Note: Lambda architecture is a data-processing architecture designed to handle massive quantities of data by taking advantage of both batch processing and stream processing methods, and minimizing the latency involved in querying big data.

909A545160E581887343EF295A26525D
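To make the batch/speed split concrete, here is a minimal Python sketch that is not tied to any Azure service. It assumes hypothetical sales events of the form (product_id, quantity): a batch layer recomputes a view over all historical events, a speed layer keeps incremental totals for events that arrived after the last batch run, and a serving step merges both at query time. All names are illustrative.

```python
from collections import Counter

# Hypothetical event records: (product_id, quantity).
historical_events = [("p1", 2), ("p2", 1), ("p1", 3)]
recent_events = [("p1", 1), ("p3", 4)]  # arrived after the last batch run


def batch_view(events):
    """Batch layer: recompute totals from the full historical dataset."""
    totals = Counter()
    for product, qty in events:
        totals[product] += qty
    return totals


class SpeedLayer:
    """Speed layer: incrementally update totals for not-yet-batched events."""

    def __init__(self):
        self.totals = Counter()

    def on_event(self, product, qty):
        self.totals[product] += qty


def query(product, batch, speed):
    """Serving layer: merge the precomputed batch view with the real-time view."""
    # Counter returns 0 for missing keys, so unseen products are handled.
    return batch[product] + speed.totals[product]


batch = batch_view(historical_events)
speed = SpeedLayer()
for product, qty in recent_events:
    speed.on_event(product, qty)

print(query("p1", batch, speed))  # 6
print(query("p3", batch, speed))  # 4
```

The batch view is accurate but stale; the speed layer covers only the gap since the last batch run, which keeps query latency low without reprocessing all data.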

References:

https://sqlwithmanoj.com/2018/02/16/what-is-lambda-architecture-and-what-azure-offers-with-its-new-cosmos-db/

QUESTION 2

DRAG DROP

You develop data engineering solutions for a company. You must migrate data from Microsoft Azure Blob storage to an Azure SQL Data Warehouse for further transformation. You need to implement the solution.

Which four actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Select and Place:

Correct Answer:

Section: (none)

Explanation

Explanation/Reference:

Explanation:

Step 1: Provision an Azure SQL Data Warehouse instance.

Create a data warehouse in the Azure portal.


Step 2: Connect to the Azure SQL Data Warehouse by using SQL Server Management Studio.

Connect to the data warehouse with SSMS (SQL Server Management Studio).

Step 3: Build external tables by using SQL Server Management Studio.

Create external tables for data in Azure Blob storage.

You are ready to begin the process of loading data into your new data warehouse. You use external tables to load data from Azure Blob storage.

Step 4: Run Transact-SQL statements to load data.

You can use the CREATE TABLE AS SELECT (CTAS) T-SQL statement to load the data from Azure Blob storage into new tables in your data warehouse.

References:

https://github.com/MicrosoftDocs/azure-docs/blob/master/articles/sql-data-warehouse/load-data-from-azure-blob-storage-using-polybase.md

QUESTION 3

You develop data engineering solutions for a company. The company has on-premises Microsoft SQL Server databases at multiple locations.

The company must integrate data with Microsoft Power BI and Microsoft Azure Logic Apps. The solution must avoid single points of failure during connection and transfer to the cloud. The solution must also minimize latency.

You need to secure the transfer of data between on-premises databases and Microsoft Azure.

What should you do?

A. Install a standalone on-premises Azure data gateway at each location

B. Install an on-premises data gateway in personal mode at each location

C. Install an Azure on-premises data gateway at the primary location

D. Install an Azure on-premises data gateway as a cluster at each location

Correct Answer: D

Section: (none)

Explanation

Explanation/Reference:

Explanation:

You can create high-availability clusters of on-premises data gateway installations to ensure that your organization can access on-premises data resources used in Power BI reports and dashboards. Such clusters allow gateway administrators to group gateways to avoid single points of failure in accessing on-premises data resources. The Power BI service always uses the primary gateway in the cluster unless it’s not available. In that case, the service switches to the next gateway in the cluster, and so on.
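The failover order described in the explanation (primary first, then the next gateway in the cluster) can be modeled with a small Python function. This is an illustrative sketch only: the gateway names and the `is_available` health check are hypothetical and do not correspond to any Power BI or gateway API.

```python
from typing import Callable, Optional, Sequence


def select_gateway(
    cluster: Sequence[str], is_available: Callable[[str], bool]
) -> Optional[str]:
    """Return the first available gateway, trying the primary (index 0) first.

    Returns None only when every gateway in the cluster is down.
    """
    for gateway in cluster:
        if is_available(gateway):
            return gateway
    return None


# Hypothetical cluster: the primary is listed first, then the fallbacks.
cluster = ["gw-primary", "gw-secondary", "gw-tertiary"]
down = {"gw-primary"}

print(select_gateway(cluster, lambda g: g not in down))  # gw-secondary
```

With a cluster at each location, losing one gateway machine only shifts traffic to the next member, which is why a clustered installation avoids the single point of failure that a lone gateway would create.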

References:

https://docs.microsoft.com/en-us/power-bi/service-gateway-high-availability-clusters

QUESTION 4

You are a data architect. The data engineering team needs to configure a synchronization of data between an on-premises Microsoft SQL Server database and Azure SQL Database.

Ad-hoc and reporting queries are overutilizing the on-premises production instance. The synchronization process must:

Perform an initial data synchronization to Azure SQL Database with minimal downtime

Perform bi-directional data synchronization after initial synchronization

You need to implement this synchronization solution.

Which synchronization method should you use?


A. transactional replication

B. Data Migration Assistant (DMA)

C. backup and restore

D. SQL Server Agent job

E. Azure SQL Data Sync

Correct Answer: E

Section: (none)

Explanation

Explanation/Reference:

Explanation:

SQL Data Sync is a service built on Azure SQL Database that lets you synchronize the data you select bidirectionally across multiple SQL databases and SQL Server instances.

With Data Sync, you can keep data synchronized between your on-premises databases and Azure SQL databases to enable hybrid applications.

Compare Data Sync with Transactional Replication

References:

https://docs.microsoft.com/en-us/azure/sql-database/sql-database-sync-data

QUESTION 5

An application will use Microsoft Azure Cosmos DB as its data solution. The application will use the Cassandra API to support a column-based database type that uses containers to store items.

You need to provision Azure Cosmos DB. Which container name and item name should you use? Each correct answer presents part of the solution.

NOTE: Each correct answer selection is worth one point.

A. collection

B. rows

C. graph

D. entities

E. table

Correct Answer: BE

Section: (none)

Explanation

Explanation/Reference:

Explanation:

B: Depending on the choice of API, an Azure Cosmos item can represent either a document in a collection, a row in a table, or a node/edge in a graph. The following table shows the mapping between API-specific entities and an Azure Cosmos item:


E: An Azure Cosmos container is specialized into API-specific entities as follows:

References:

https://docs.microsoft.com/en-us/azure/cosmos-db/databases-containers-items
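The container/item pairings in the explanation can be captured as a lookup table. This Python sketch encodes only the three pairings stated above (collection/document, table/row, graph/node or edge) and assigns them to the MongoDB, Cassandra, and Gremlin APIs; the dictionary and function names are hypothetical and are not part of any Azure SDK.

```python
from typing import Dict, Tuple

# Container and item names per Azure Cosmos DB API, limited to the
# pairings discussed in this explanation.
COSMOS_TERMS: Dict[str, Dict[str, str]] = {
    "MongoDB": {"container": "collection", "item": "document"},
    "Cassandra": {"container": "table", "item": "row"},
    "Gremlin": {"container": "graph", "item": "node or edge"},
}


def terms_for(api: str) -> Tuple[str, str]:
    """Return (container name, item name) for the given API."""
    entry = COSMOS_TERMS[api]
    return entry["container"], entry["item"]


print(terms_for("Cassandra"))  # ('table', 'row')
```

For the Cassandra API the lookup yields table (answer E) and rows (answer B).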

QUESTION 6

A company has a SaaS solution that uses Azure SQL Database with elastic pools. The solution contains a dedicated database for each customer organization. Customer organizations have peak usage at different periods during the year.

You need to implement the Azure SQL Database elastic pool to minimize cost.

Which option or options should you configure?

A. Number of transactions only

B. eDTUs per database only

C. Number of databases only

D. CPU usage only

E. eDTUs and max data size

Correct Answer: E

Section: (none)

Explanation

Explanation/Reference:

Explanation:

The best size for a pool depends on the aggregate resources needed for all databases in the pool. This involves determining the following:

Maximum resources utilized by all databases in the pool (either maximum DTUs or maximum vCores, depending on your choice of resourcing model).

Maximum storage bytes utilized by all databases in the pool.
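A small worked example shows why staggered peaks make a pool cheaper. The Python sketch below assumes hypothetical DTU usage for three customer databases sampled at four points in the year; it compares the sum of each database's individual peak (roughly what standalone databases would each need) with the peak of the combined usage (the eDTUs the shared pool must cover). The numbers and function names are illustrative only.

```python
from typing import Dict, List

# Hypothetical DTU usage per database at four points in the year.
# Each customer peaks in a different period.
usage: Dict[str, List[int]] = {
    "customer_a": [100, 20, 20, 20],
    "customer_b": [20, 100, 20, 20],
    "customer_c": [20, 20, 100, 20],
}


def sum_of_individual_peaks(usage: Dict[str, List[int]]) -> int:
    """DTUs needed if every database were sized for its own peak."""
    return sum(max(samples) for samples in usage.values())


def peak_of_aggregate(usage: Dict[str, List[int]]) -> int:
    """eDTUs a shared pool needs: the busiest period across all databases."""
    # zip(*...) groups the samples by period, one tuple per point in time.
    return max(sum(period) for period in zip(*usage.values()))


print(sum_of_individual_peaks(usage))  # 300
print(peak_of_aggregate(usage))  # 140
```

Because the peaks do not overlap, the pool needs 140 eDTUs rather than 300; the pool's max data size is then chosen from the total storage the databases use.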

Note: Elastic pools enable the developer to purchase resources for a pool shared by multiple databases to accommodate unpredictable periods of usage by individual databases. You can configure resources for the pool based either on the DTU-based purchasing model or the vCore-based purchasing model.

References:

https://docs.microsoft.com/en-us/azure/sql-database/sql-database-elastic-pool

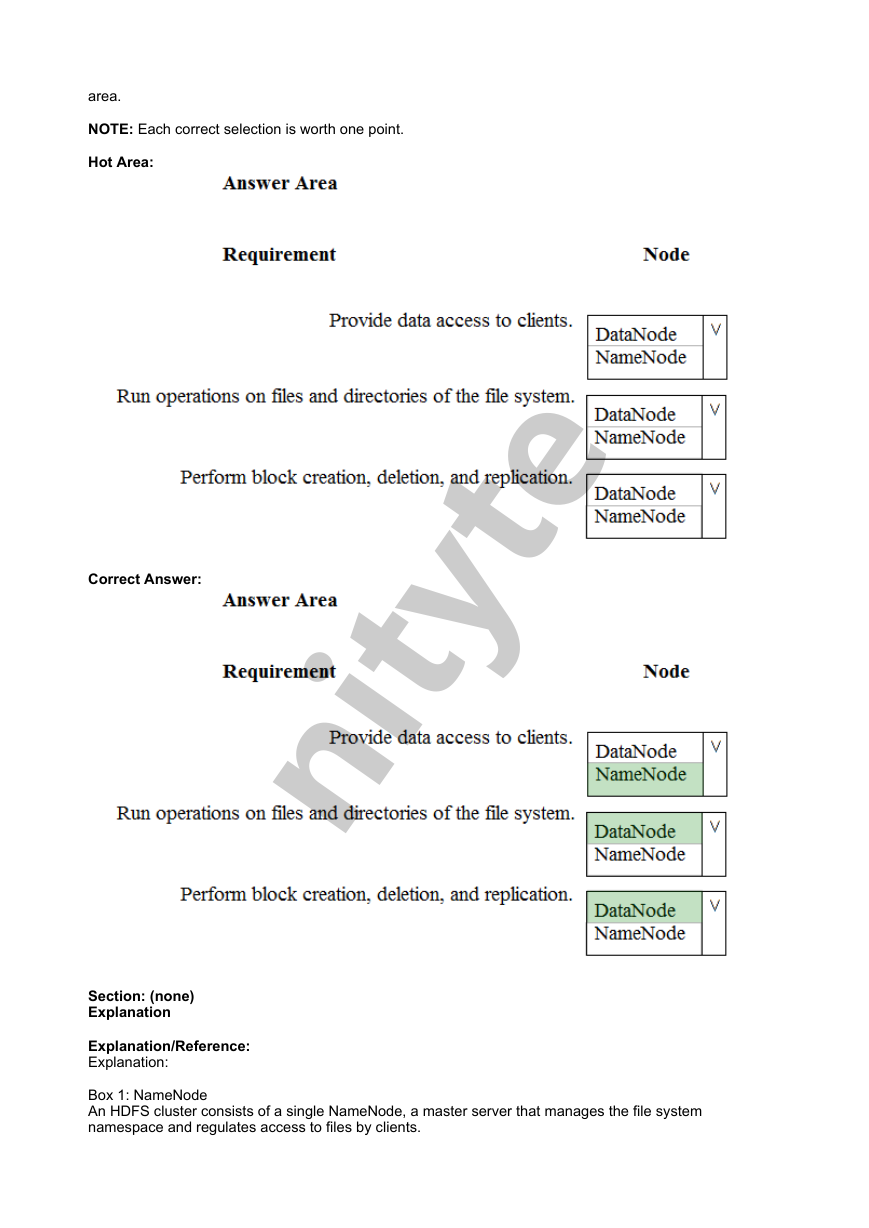

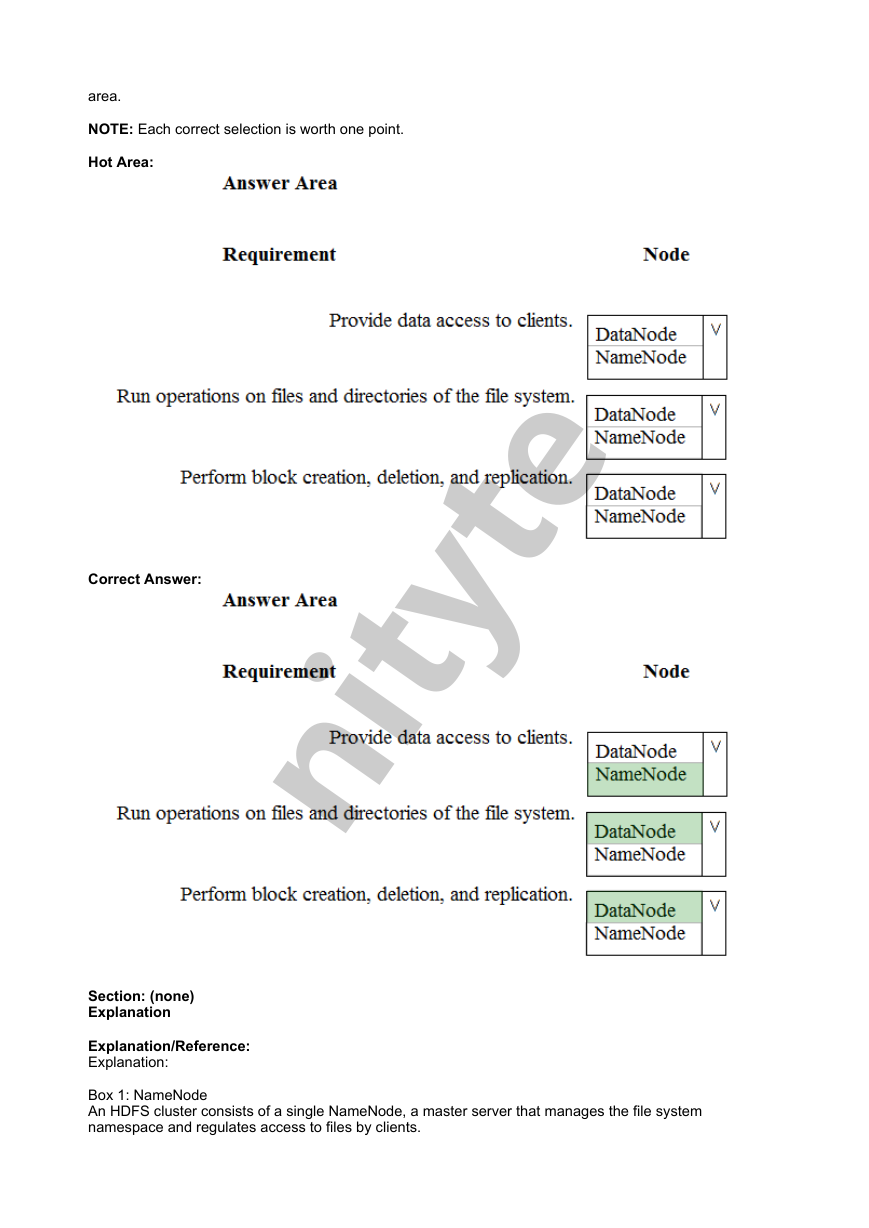

QUESTION 7

HOTSPOT

You are a data engineer. You are designing a Hadoop Distributed File System (HDFS) architecture. You plan to use Microsoft Azure Data Lake as a data storage repository.

You must provision the repository with a resilient data schema. You need to ensure the resiliency of the Azure Data Lake Storage. What should you use? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Hot Area:

Correct Answer:

Section: (none)

Explanation

Explanation/Reference:

Explanation:

Box 1: NameNode

An HDFS cluster consists of a single NameNode, a master server that manages the file system namespace and regulates access to files by clients.


Box 2: DataNode

The DataNodes are responsible for serving read and write requests from the file system’s clients.

Box 3: DataNode

The DataNodes perform block creation, deletion, and replication upon instruction from the NameNode.

Note: HDFS has a master/slave architecture. An HDFS cluster consists of a single NameNode, a master server that manages the file system namespace and regulates access to files by clients. In addition, there are a number of DataNodes, usually one per node in the cluster, which manage storage attached to the nodes that they run on. HDFS exposes a file system namespace and allows user data to be stored in files. Internally, a file is split into one or more blocks and these blocks are stored in a set of DataNodes. The NameNode executes file system namespace operations like opening, closing, and renaming files and directories. It also determines the mapping of blocks to DataNodes. The DataNodes are responsible for serving read and write requests from the file system’s clients. The DataNodes also perform block creation, deletion, and replication upon instruction from the NameNode.
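The division of labor between the NameNode and the DataNodes can be illustrated with a toy Python model: the NameNode splits a file into fixed-size blocks and records which DataNodes hold each block's replicas, while the DataNodes store and serve the bytes. The tiny block size, the replication factor of 3, the round-robin placement, and all class names are simplifying assumptions; real HDFS uses much larger blocks and rack-aware placement.

```python
from typing import Dict, List


class DataNode:
    """Stores block contents and serves reads (stand-in for a real DataNode)."""

    def __init__(self, name: str):
        self.name = name
        self.blocks: Dict[str, bytes] = {}

    def write_block(self, block_id: str, data: bytes) -> None:
        self.blocks[block_id] = data

    def read_block(self, block_id: str) -> bytes:
        return self.blocks[block_id]


class NameNode:
    """Owns the namespace: maps files to blocks and blocks to DataNodes."""

    def __init__(self, datanodes: List[DataNode], block_size: int, replication: int):
        if replication > len(datanodes):
            raise ValueError("replication factor exceeds number of DataNodes")
        self.datanodes = datanodes
        self.block_size = block_size
        self.replication = replication
        self.namespace: Dict[str, List[str]] = {}  # file path -> block ids
        self.block_map: Dict[str, List[DataNode]] = {}  # block id -> replicas
        self._next = 0  # round-robin cursor for replica placement

    def create(self, path: str, data: bytes) -> None:
        block_ids = []
        for offset in range(0, len(data), self.block_size):
            block_id = f"{path}#{offset // self.block_size}"
            chunk = data[offset:offset + self.block_size]
            # Choose `replication` distinct DataNodes for this block.
            replicas = [
                self.datanodes[(self._next + i) % len(self.datanodes)]
                for i in range(self.replication)
            ]
            self._next += 1
            for node in replicas:  # the DataNodes store each replica
                node.write_block(block_id, chunk)
            self.block_map[block_id] = replicas
            block_ids.append(block_id)
        self.namespace[path] = block_ids

    def read(self, path: str) -> bytes:
        # The client learns block locations from the NameNode, then reads
        # each block from a DataNode that holds a replica.
        return b"".join(
            self.block_map[b][0].read_block(b) for b in self.namespace[path]
        )


nodes = [DataNode(f"dn{i}") for i in range(4)]
namenode = NameNode(nodes, block_size=4, replication=3)
namenode.create("/sales/2019.csv", b"region,total\n")
print(len(namenode.namespace["/sales/2019.csv"]))  # 4
```

Keeping several replicas of every block on different DataNodes is what lets the file survive the loss of a single node.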

References:

https://hadoop.apache.org/docs/r1.2.1/hdfs_design.html#NameNode+and+DataNodes

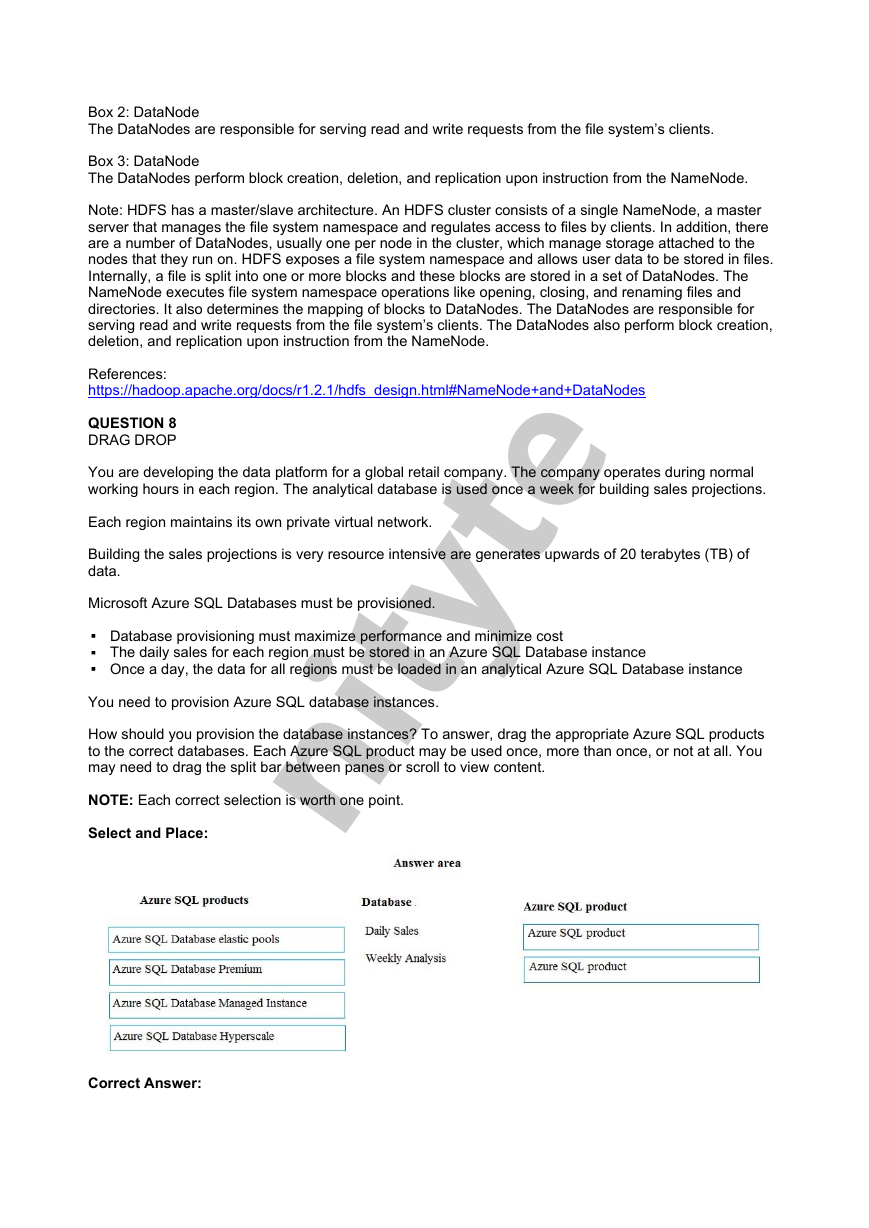

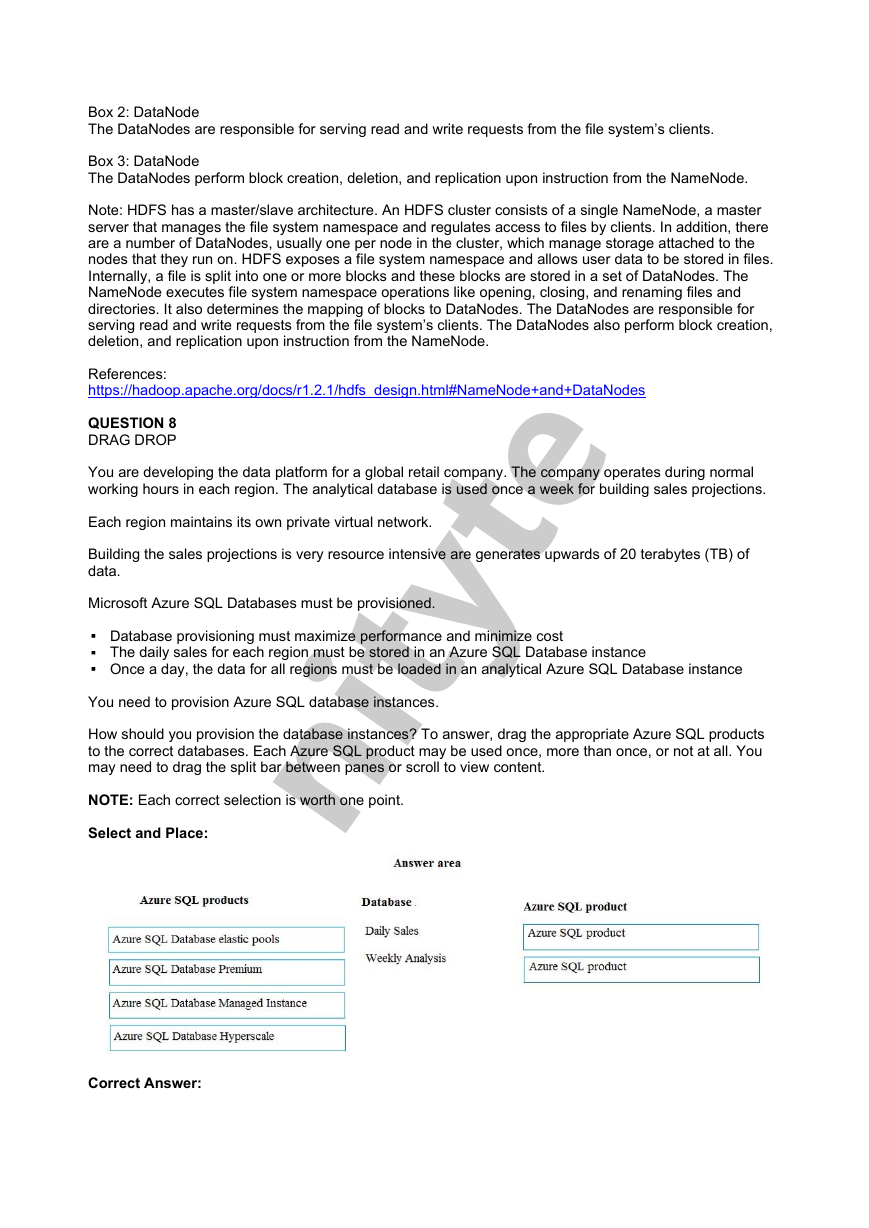

QUESTION 8

DRAG DROP

You are developing the data platform for a global retail company. The company operates during normal working hours in each region. The analytical database is used once a week for building sales projections.

Each region maintains its own private virtual network.

Building the sales projections is very resource intensive and generates upwards of 20 terabytes (TB) of data.

Microsoft Azure SQL Databases must be provisioned.

Database provisioning must maximize performance and minimize cost.

The daily sales for each region must be stored in an Azure SQL Database instance.

Once a day, the data for all regions must be loaded in an analytical Azure SQL Database instance.

You need to provision Azure SQL database instances.

How should you provision the database instances? To answer, drag the appropriate Azure SQL products to the correct databases. Each Azure SQL product may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

Select and Place:

Correct Answer:
