1. The Learning Problem

1.1 Course Introduction

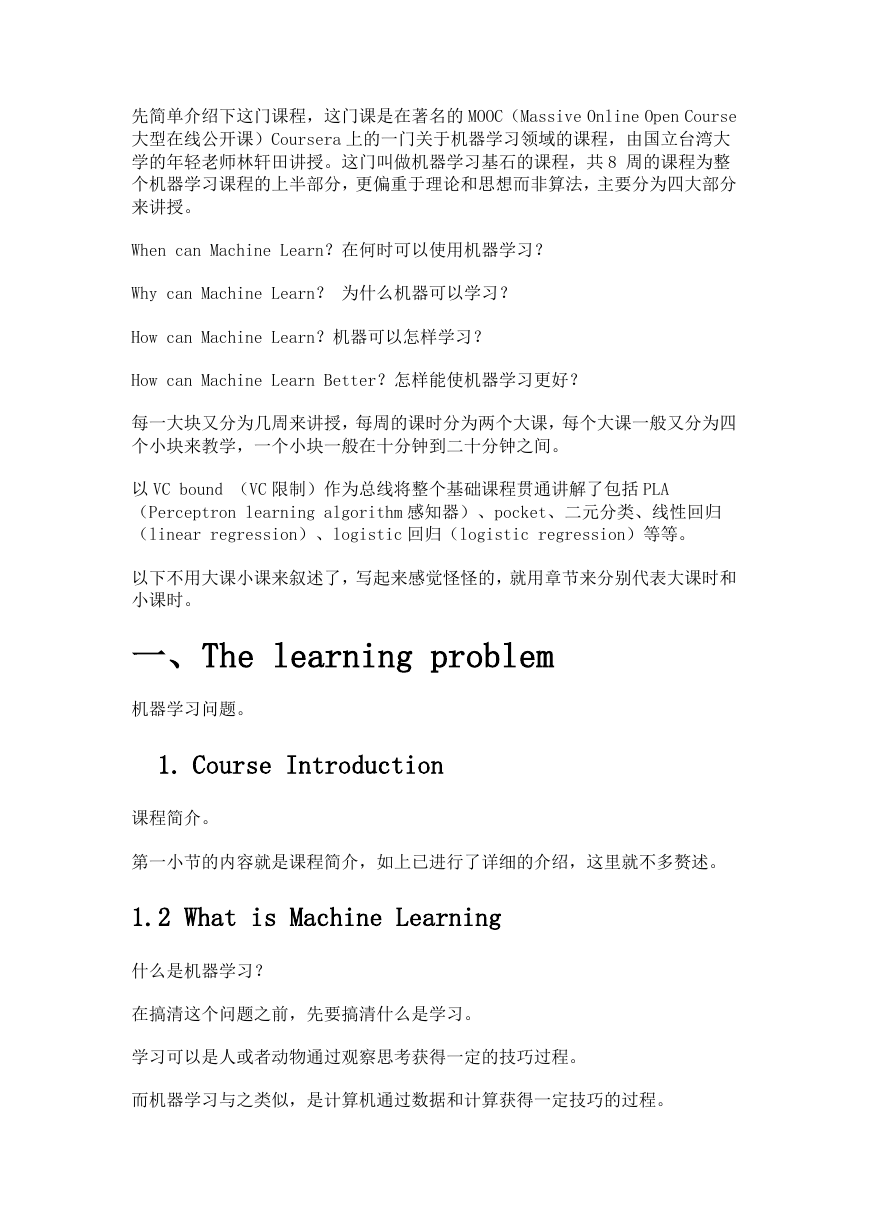

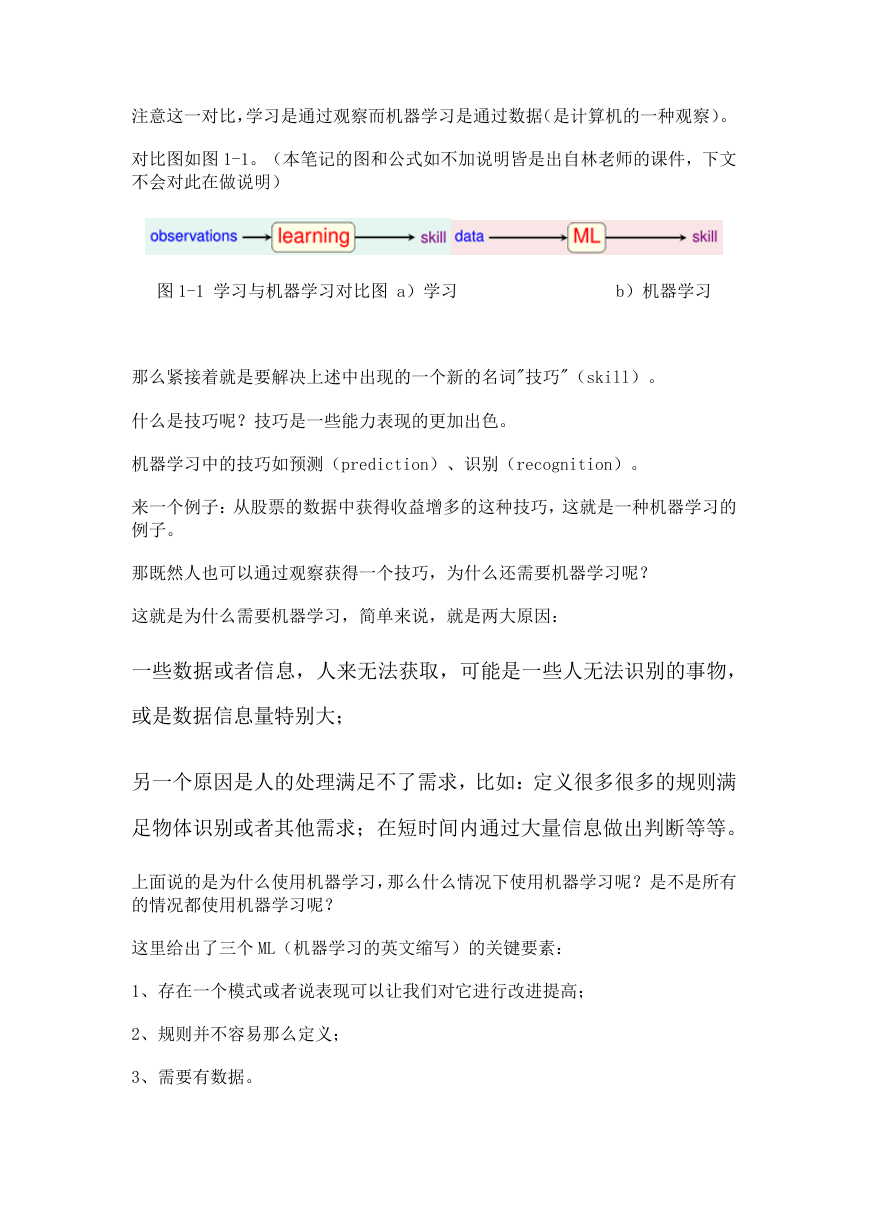

1.2 What is Machine Learning

1.3 Applications of Machine Learning

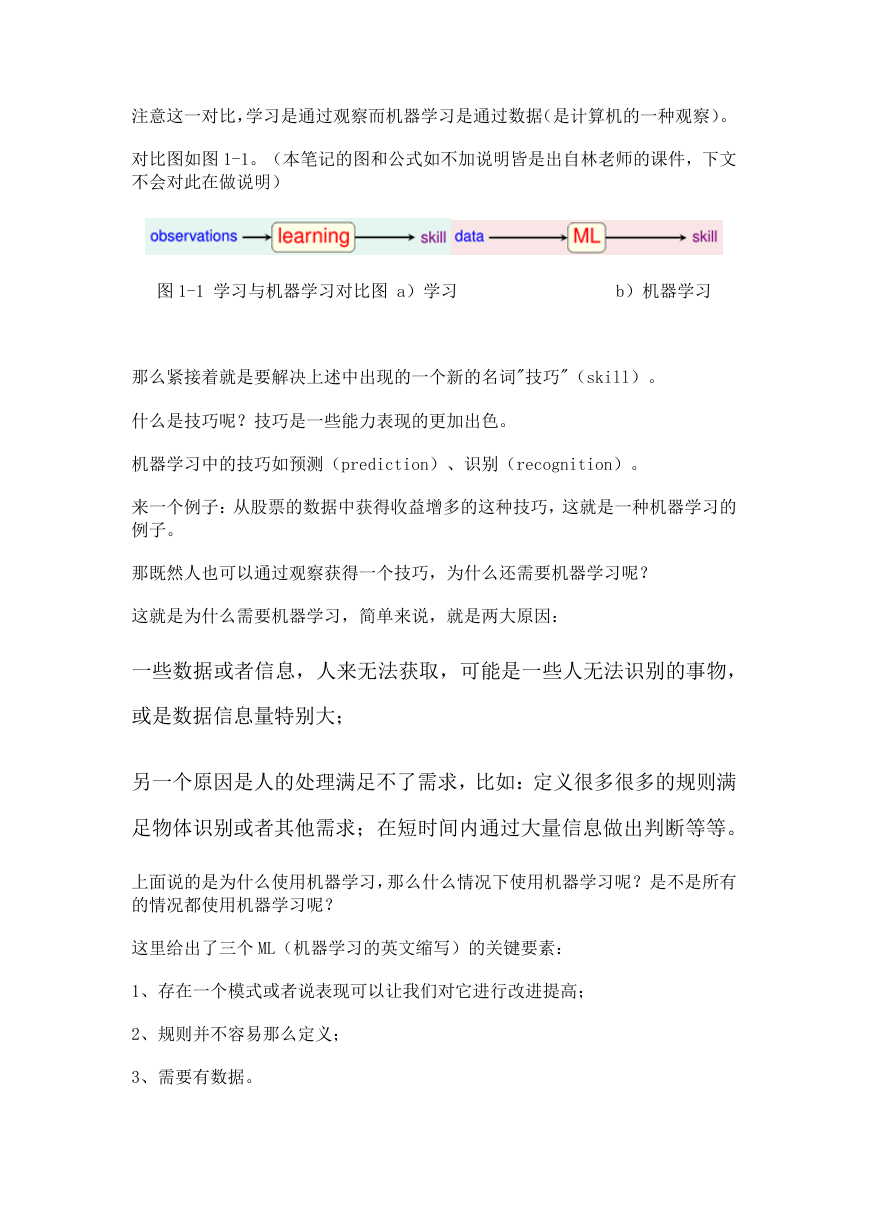

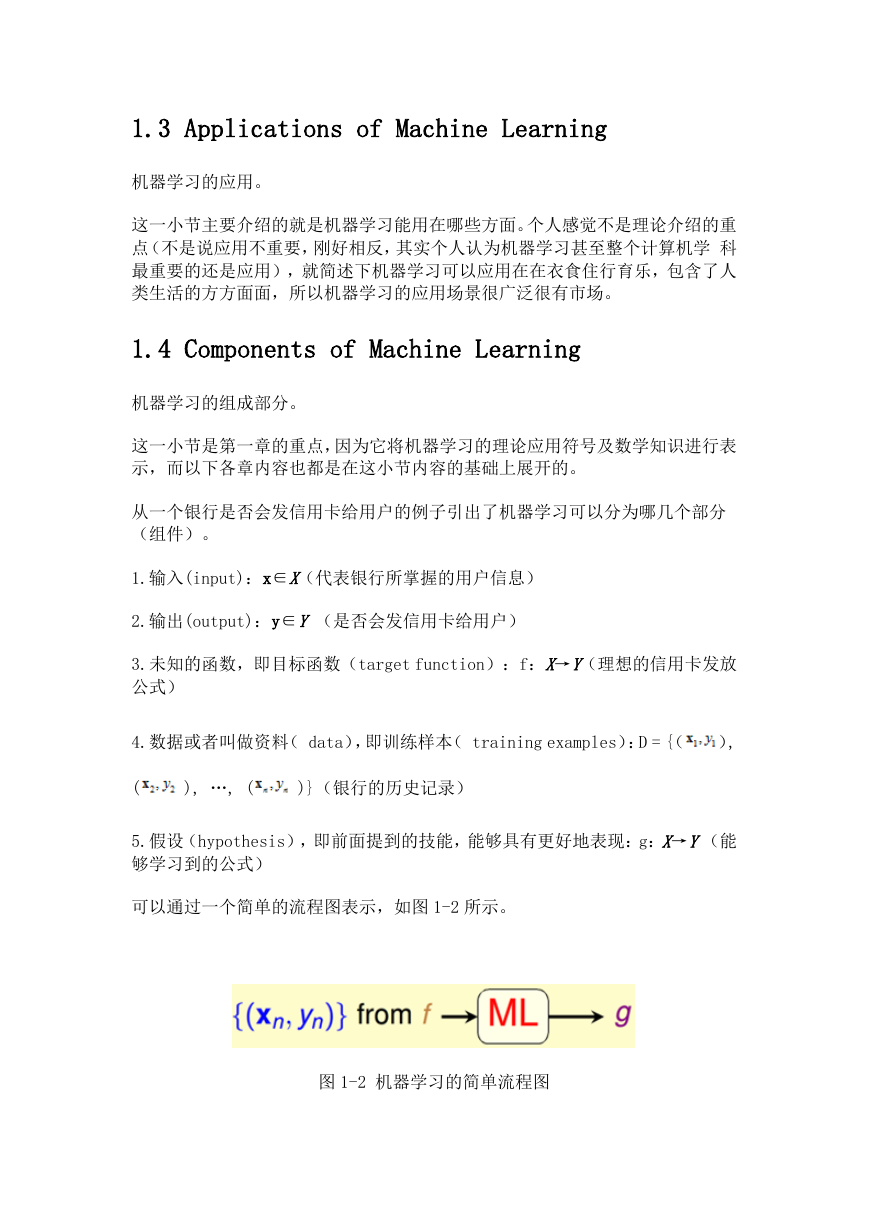

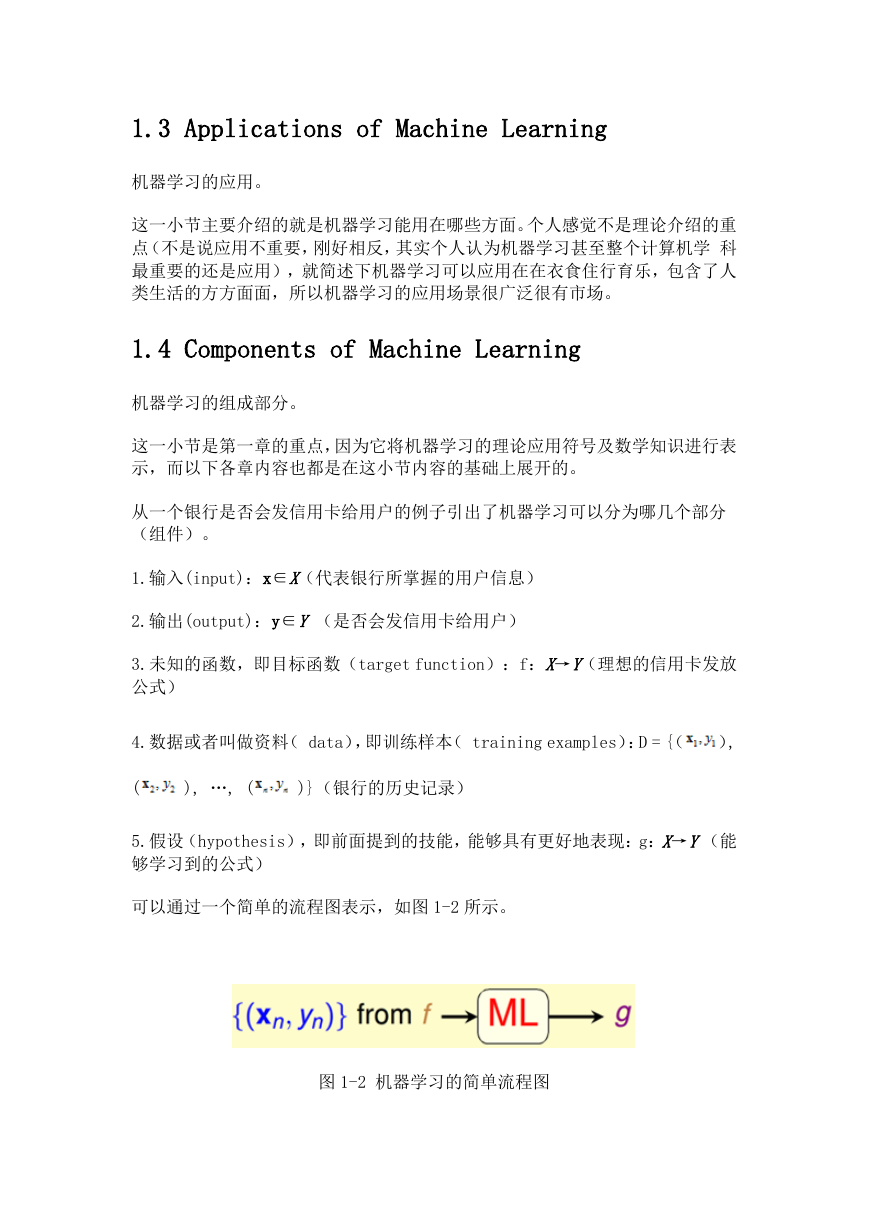

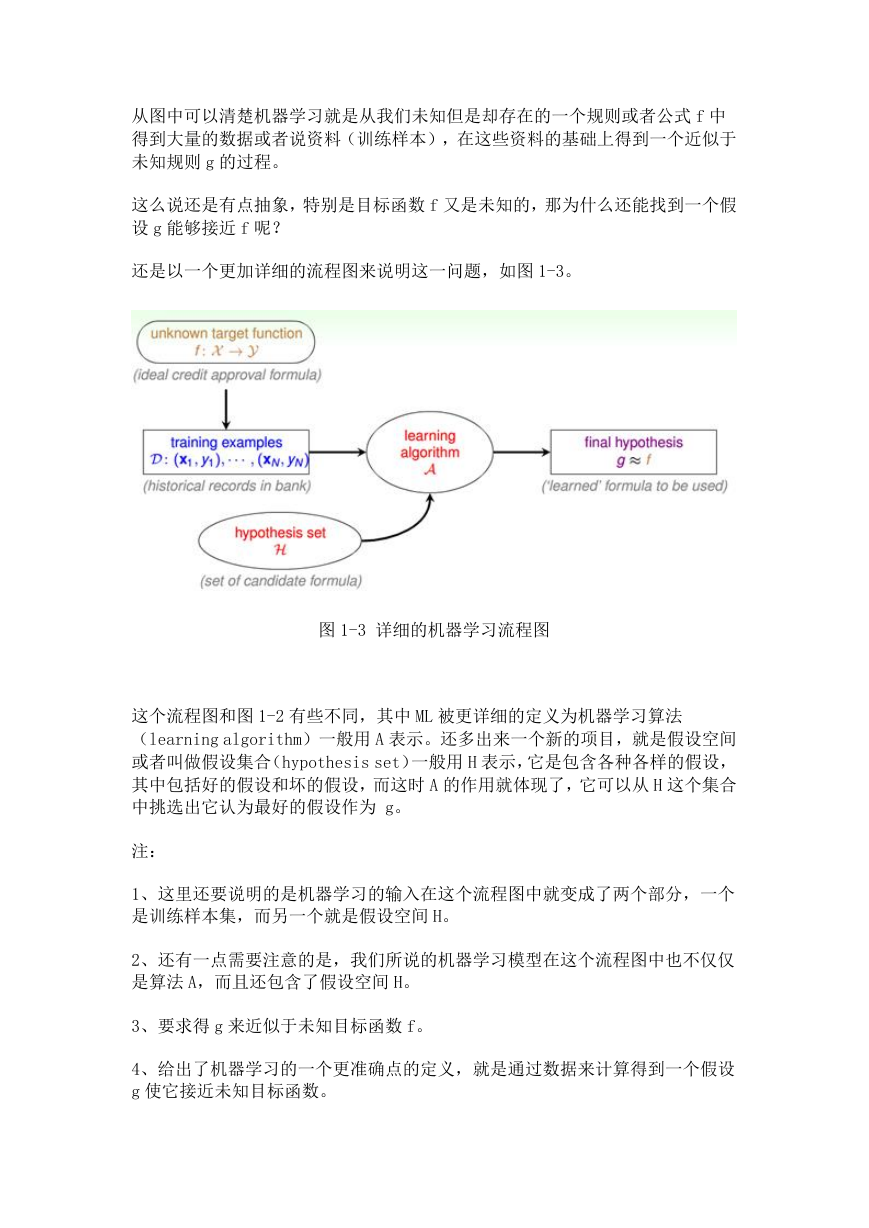

1.4 Components of Machine Learning

1.5 Machine Learning and Other Fields

1.5.1 ML vs. DM (Data Mining)

1.5.2 ML vs. AI (Artificial Intelligence)

1.5.3 ML vs. Statistics

2. Learning to Answer Yes/No

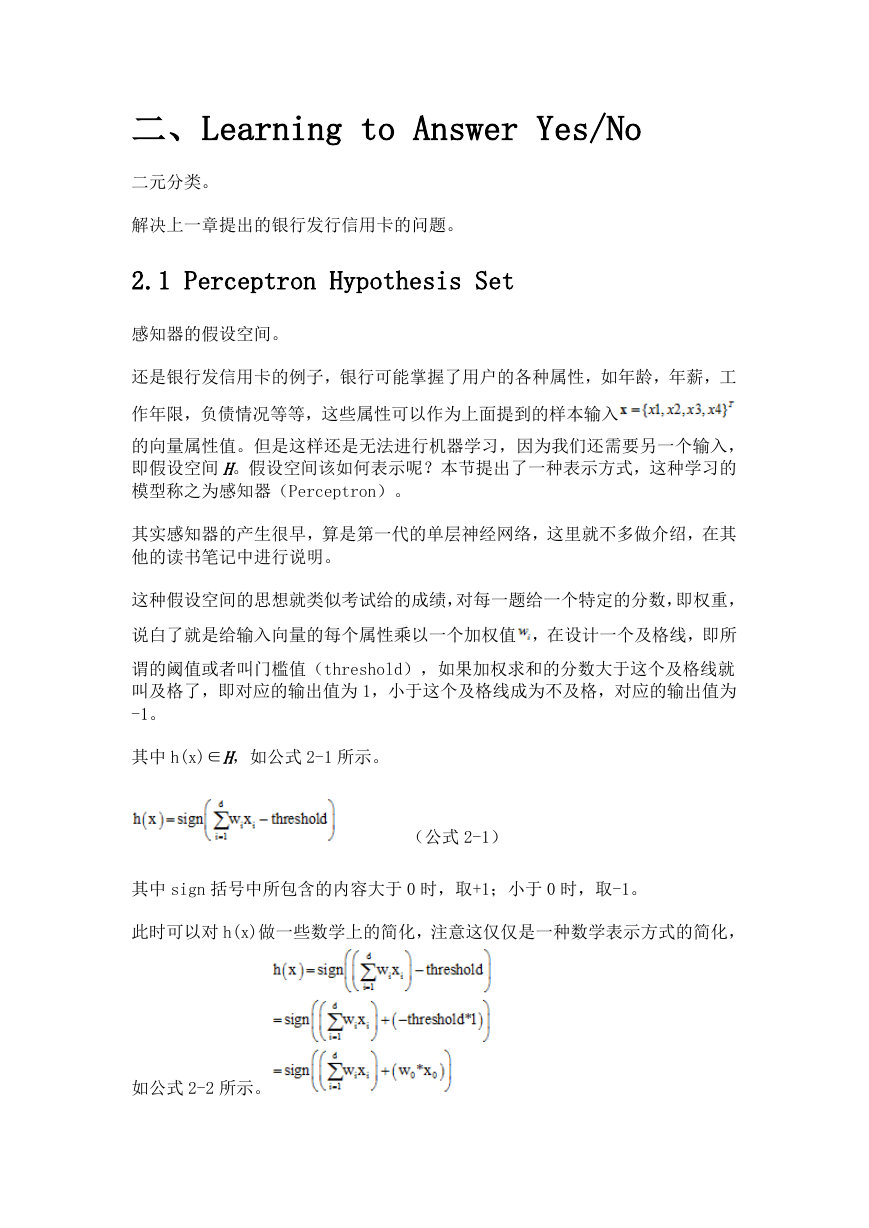

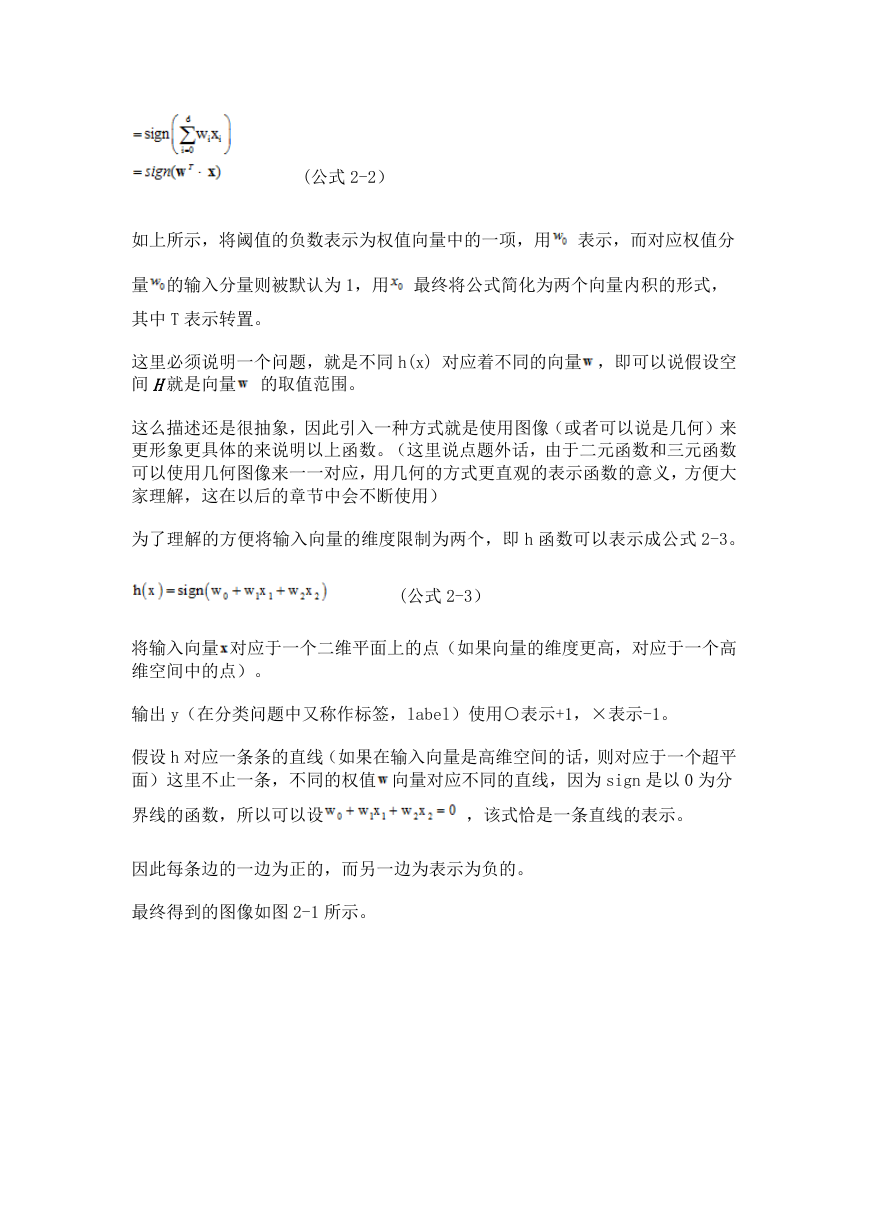

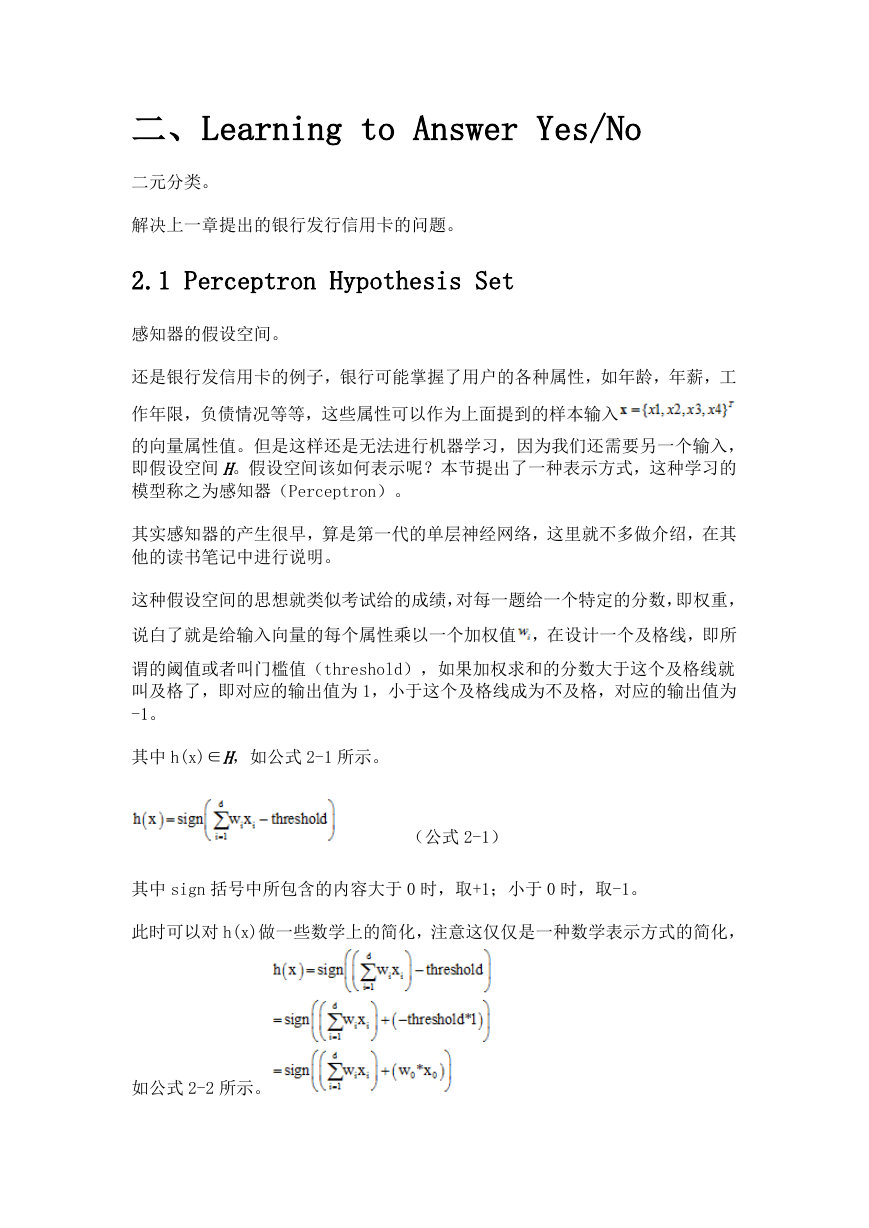

2.1 Perceptron Hypothesis Set

2.2 Perceptron Learning Algorithm (PLA)

2.3 Guarantee of PLA

2.4 Non-Separable Data

3. Types of Learning

3.1 Learning with Different Output Space

3.1.1 Binary Classification

3.1.2 Multiclass Classification

3.1.3 Regression

3.1.4 Structured Learning

3.2 Learning with Different Data Label

3.2.1 Supervised Learning

3.2.2 Unsupervised Learning

3.2.3 Semi-supervised Learning

3.2.4 Reinforcement Learning

3.3 Learning with Different Protocol

3.4 Learning with Different Input Space

4. Feasibility of Learning

4.1 Learning is Impossible

4.2 Probability to the Rescue

4.3 Connection to Learning

4.4 Connection to Real Learning

5. Training versus Testing

5.1 Recap and Preview

5.2 Effective Number of Lines

5.3 Effective Number of Hypotheses

5.4 Break Point

6. Theory of Generalization

6.1 Restriction of Break Point

6.2 Bounding Function: Basic Cases

6.3 Bounding Function: Inductive Cases

6.4 A Pictorial Proof

7. The VC Dimension

7.1 Definition of VC Dimension

7.2 VC Dimension of Perceptrons

7.3 Physical Intuition of VC Dimension

7.4 Interpreting VC Dimension

8. Noise and Error

8.1 Noise and Probabilistic Target

8.2 Error Measure

8.3 Algorithmic Error Measure

8.4 Weighted Classification

9. Linear Regression

9.1 Linear Regression Problem

9.2 Linear Regression Algorithm

9.3 Generalization Issue

9.4 Linear Regression for Binary Classification

10. Logistic Regression

10.1 Logistic Regression Problem

10.2 Logistic Regression Error

10.3 Gradient of Logistic Regression Error

10.4 Gradient Descent

11. Linear Models for Classification

11.1 Linear Models for Binary Classification

11.2 Stochastic Gradient Descent

11.3 Multiclass via Logistic Regression

11.4 Multiclass via Binary Classification

12. Nonlinear Transformation

12.1 Quadratic Hypotheses

12.2 Nonlinear Transform

12.3 Price of Nonlinear Transform

12.4 Structured Hypothesis Sets

13. Hazard of Overfitting

13.1 What is Overfitting?

13.2 The Role of Noise and Data Size

13.3 Deterministic Noise

13.4 Dealing with Overfitting

14. Regularization

14.1 Regularized Hypothesis Set

14.2 Weight Decay Regularization

14.3 Regularization and VC Theory

14.4 General Regularizers
