YOLOv4: Optimal Speed and Accuracy of Object Detection

Alexey Bochkovskiy∗
alexeyab84@gmail.com

Chien-Yao Wang∗
Institute of Information Science, Academia Sinica, Taiwan
kinyiu@iis.sinica.edu.tw

Hong-Yuan Mark Liao
Institute of Information Science, Academia Sinica, Taiwan
liao@iis.sinica.edu.tw

arXiv:2004.10934v1 [cs.CV] 23 Apr 2020

Abstract

There are a huge number of features which are said to

improve Convolutional Neural Network (CNN) accuracy.

Practical testing of combinations of such features on large

datasets, and theoretical justification of the result, is re-

quired. Some features operate on certain models exclusively

and for certain problems exclusively, or only for small-scale

datasets; while some features, such as batch-normalization

and residual-connections, are applicable to the majority of

models, tasks, and datasets. We assume that such universal

features include Weighted-Residual-Connections (WRC),

Cross-Stage-Partial-connections (CSP), Cross mini-Batch

Normalization (CmBN), Self-adversarial-training (SAT)

and Mish-activation. We use new features: WRC, CSP, CmBN, SAT, Mish activation, Mosaic data augmentation, DropBlock regularization, and CIoU loss, and combine some of them to achieve state-of-the-art results: 43.5%

AP (65.7% AP50) for the MS COCO dataset at a real-

time speed of ∼65 FPS on Tesla V100. Source code is at

https://github.com/AlexeyAB/darknet.

1. Introduction

The majority of CNN-based object detectors are largely applicable only to recommendation systems. For example, searching for free parking spaces via urban video cameras is executed by slow, accurate models, whereas car collision warning relies on fast, inaccurate models. Improving the accuracy of real-time object detectors enables using them not only for hint-generating recommendation systems, but also for stand-alone process management and human input reduction. Real-time object detector operation on conventional Graphics Processing Units (GPUs) allows their mass usage at an affordable price. The most accurate modern neural networks do not operate in real time and require a large number of GPUs for training with a large mini-batch size. We address these problems by creating a CNN that operates in real time on a conventional GPU, and for which training requires only one conventional GPU.

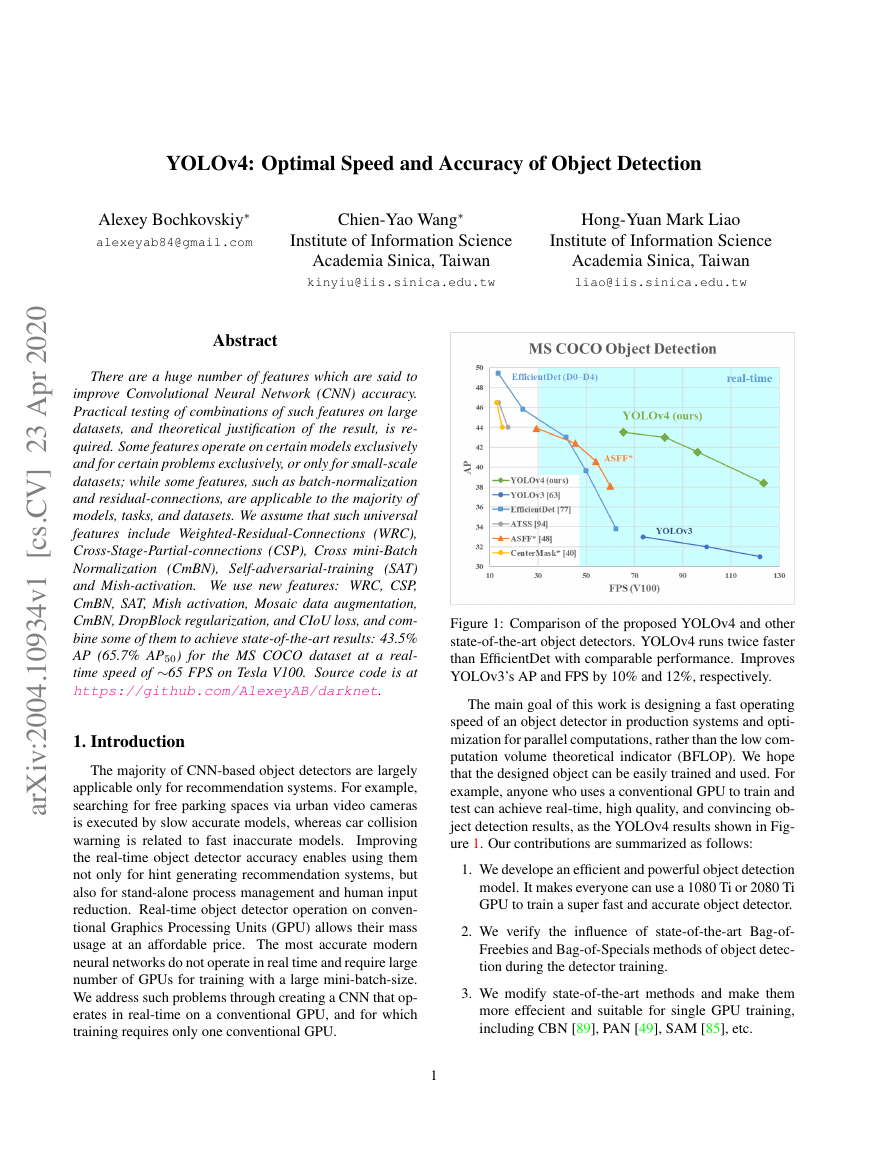

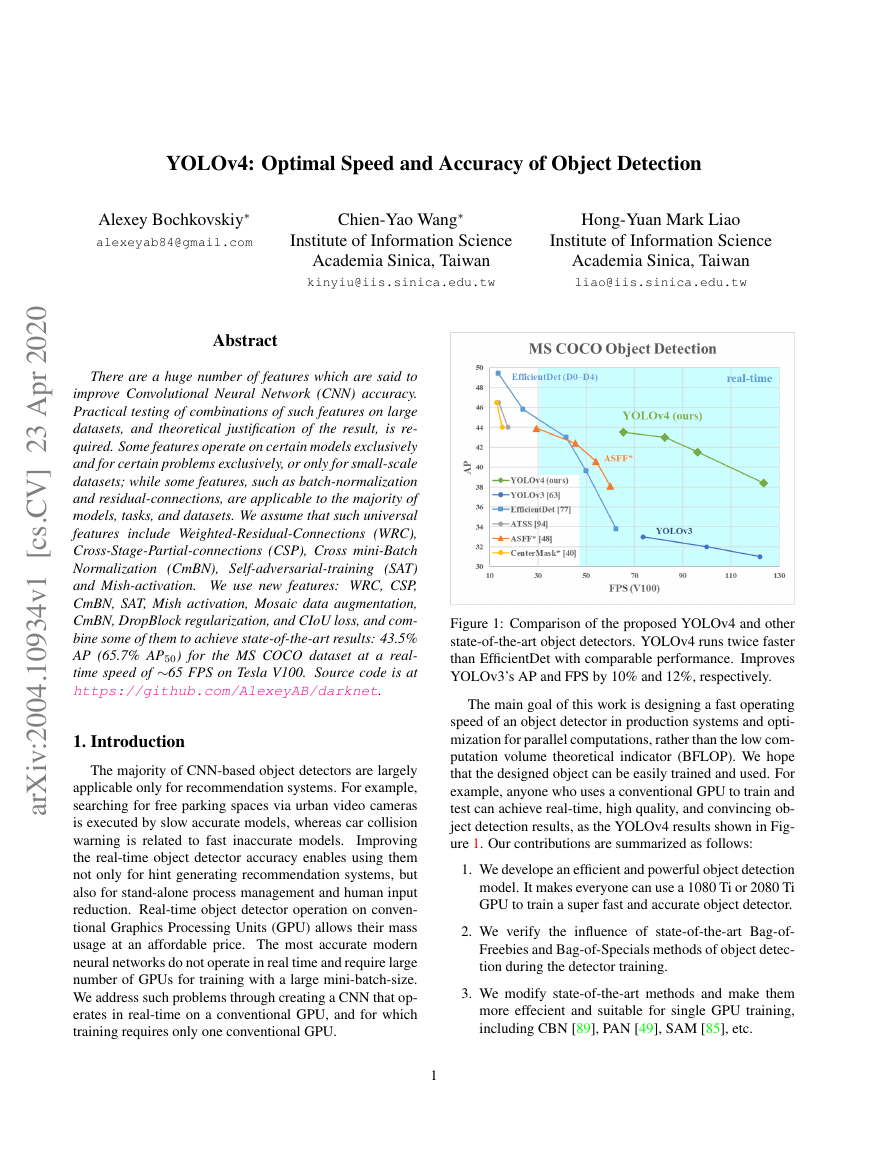

Figure 1: Comparison of the proposed YOLOv4 and other state-of-the-art object detectors. YOLOv4 runs twice as fast as EfficientDet with comparable performance, and improves YOLOv3’s AP and FPS by 10% and 12%, respectively.

The main goal of this work is to design an object detector with a fast operating speed in production systems and to optimize it for parallel computations, rather than to minimize the low computation volume theoretical indicator (BFLOP). We hope that the designed detector can be easily trained and used. For example, anyone who uses a conventional GPU to train and test can achieve real-time, high-quality, and convincing object detection results, as the YOLOv4 results in Figure 1 show. Our contributions are summarized as follows:

1. We develop an efficient and powerful object detection model. It enables anyone with a 1080 Ti or 2080 Ti GPU to train a super fast and accurate object detector.

2. We verify the influence of state-of-the-art Bag-of-Freebies and Bag-of-Specials object detection methods during detector training.

3. We modify state-of-the-art methods and make them more efficient and suitable for single-GPU training, including CBN [89], PAN [49], SAM [85], etc.


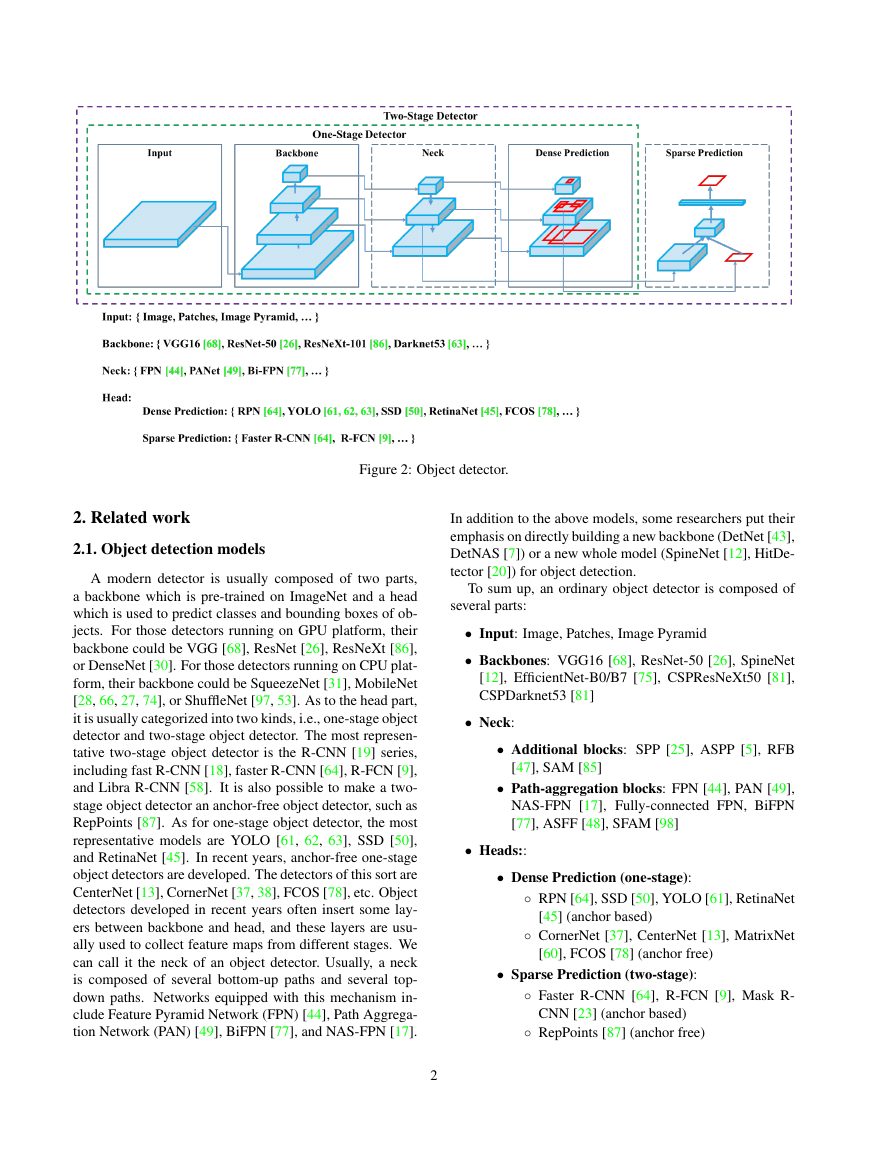

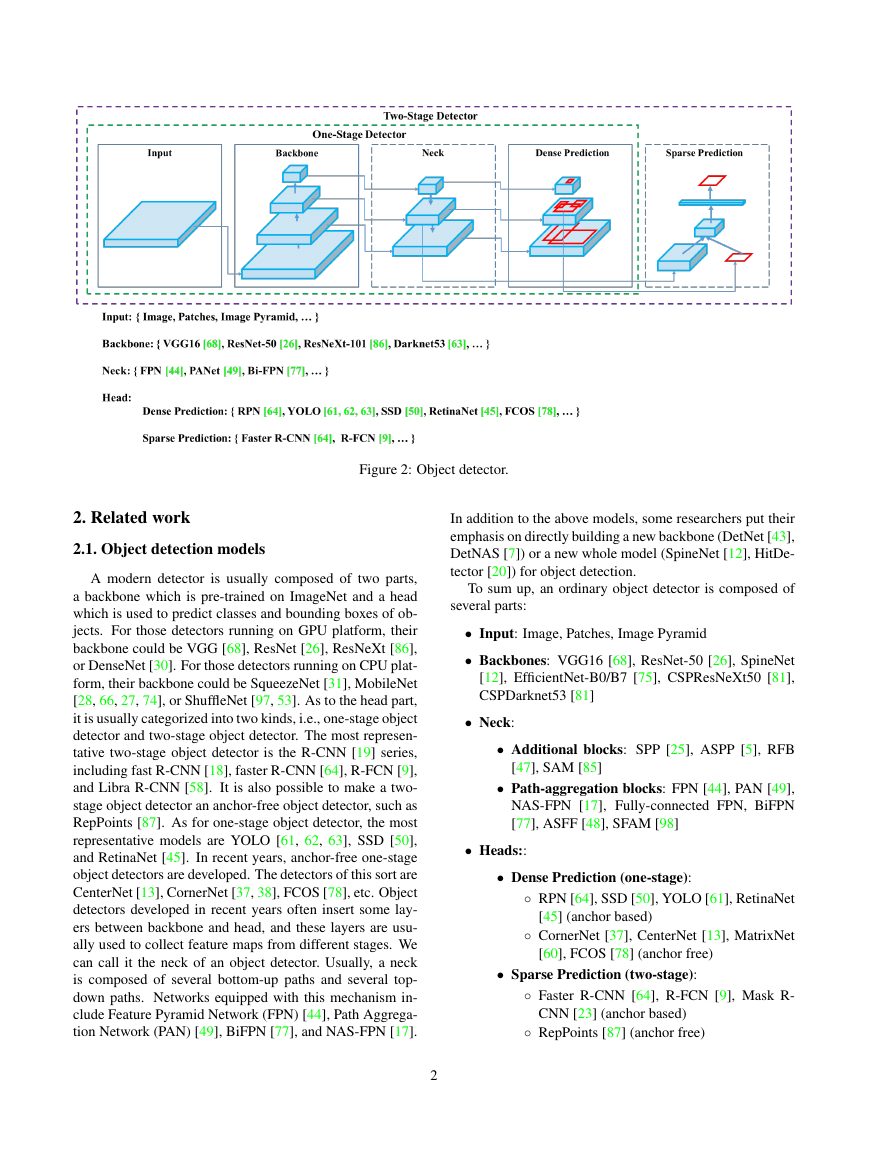

Figure 2: Object detector.

2. Related work

2.1. Object detection models

A modern detector is usually composed of two parts,

a backbone which is pre-trained on ImageNet and a head

which is used to predict classes and bounding boxes of ob-

jects. For those detectors running on GPU platform, their

backbone could be VGG [68], ResNet [26], ResNeXt [86],

or DenseNet [30]. For those detectors running on CPU plat-

form, their backbone could be SqueezeNet [31], MobileNet

[28, 66, 27, 74], or ShuffleNet [97, 53]. As to the head part,

it is usually categorized into two kinds, i.e., one-stage object

detector and two-stage object detector. The most represen-

tative two-stage object detector is the R-CNN [19] series,

including fast R-CNN [18], faster R-CNN [64], R-FCN [9],

and Libra R-CNN [58]. It is also possible to make a two-

stage object detector an anchor-free object detector, such as

RepPoints [87]. As for one-stage object detector, the most

representative models are YOLO [61, 62, 63], SSD [50],

and RetinaNet [45]. In recent years, anchor-free one-stage object detectors have been developed. Detectors of this sort include CenterNet [13], CornerNet [37, 38], FCOS [78], etc. Object

detectors developed in recent years often insert some lay-

ers between backbone and head, and these layers are usu-

ally used to collect feature maps from different stages. We

can call it the neck of an object detector. Usually, a neck

is composed of several bottom-up paths and several top-

down paths. Networks equipped with this mechanism in-

clude Feature Pyramid Network (FPN) [44], Path Aggrega-

tion Network (PAN) [49], BiFPN [77], and NAS-FPN [17].


In addition to the above models, some researchers put their

emphasis on directly building a new backbone (DetNet [43],

DetNAS [7]) or a new whole model (SpineNet [12], HitDe-

tector [20]) for object detection.

To sum up, an ordinary object detector is composed of

several parts:

• Input: Image, Patches, Image Pyramid

• Backbones: VGG16 [68], ResNet-50 [26], SpineNet [12], EfficientNet-B0/B7 [75], CSPResNeXt50 [81], CSPDarknet53 [81]

• Neck:
  • Additional blocks: SPP [25], ASPP [5], RFB [47], SAM [85]
  • Path-aggregation blocks: FPN [44], PAN [49], NAS-FPN [17], Fully-connected FPN, BiFPN [77], ASFF [48], SFAM [98]

• Heads:
  • Dense Prediction (one-stage):
    ◦ RPN [64], SSD [50], YOLO [61], RetinaNet [45] (anchor based)
    ◦ CornerNet [37], CenterNet [13], MatrixNet [60], FCOS [78] (anchor free)
  • Sparse Prediction (two-stage):
    ◦ Faster R-CNN [64], R-FCN [9], Mask R-CNN [23] (anchor based)
    ◦ RepPoints [87] (anchor free)


2.2. Bag of freebies

Usually, a conventional object detector is trained offline. Researchers therefore like to exploit this by developing better training methods that improve the accuracy of the object detector without increasing the inference cost. We call these methods, which only change the training strategy or only increase the training cost, the “bag of freebies.” What is often adopted by object detection methods and meets the definition of bag of freebies is data augmentation. The purpose of data augmentation is to increase the variability of the input images, so that the designed object detection model is more robust to images obtained from different environments. For example, photometric distortions and geometric distortions are two commonly used data augmentation methods, and they definitely benefit the object detection task. In dealing with photometric distortion, we adjust the brightness, contrast, hue, saturation, and noise of an image. For geometric distortion, we add random scaling, cropping, flipping, and rotating.

The data augmentation methods mentioned above are all pixel-wise adjustments, and all original pixel information in the adjusted area is retained. In addition, some researchers engaged in data augmentation put their emphasis on simulating object occlusion issues. They have achieved good results in image classification and object detection. For example, random erase [100] and CutOut [11] randomly select a rectangular region in an image and fill it with a random value or the complementary value of zero. As for hide-and-seek [69] and grid mask [6], they randomly or evenly select multiple rectangular regions in an image and replace them with all zeros. If similar concepts are applied to feature maps, there are the DropOut [71], DropConnect [80], and DropBlock [16] methods. In addition, some researchers have proposed methods that use multiple images together to perform data augmentation. For example, MixUp [92] multiplies two images by different coefficient ratios and superimposes them, and then adjusts the label according to these ratios. As for CutMix [91], it covers a rectangular region of one image with a crop from another image, and adjusts the label according to the size of the mixed area. In addition to the above-mentioned methods, style transfer GAN [15] is also used for data augmentation, and such usage can effectively reduce the texture bias learned by a CNN.

Different from the various approaches proposed above, some other bag of freebies methods are dedicated to solving the problem that the semantic distribution in the dataset may be biased. In dealing with semantic distribution bias, a very important issue is data imbalance between different classes, and this problem is often solved by hard negative example mining [72] or online hard example mining [67] in two-stage object detectors. But the example mining method is not applicable to one-stage object detectors, because this kind of detector belongs to the dense prediction architecture. Therefore Lin et al. [45] proposed focal loss to deal with the data imbalance between various classes. Another very important issue is that it is difficult to express the degree of association between different categories with the one-hot hard representation. This representation scheme is often used when executing labeling. The label smoothing proposed in [73] converts hard labels into soft labels for training, which can make the model more robust. In order to obtain a better soft label, Islam et al. [33] introduced the concept of knowledge distillation to design the label refinement network.
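To make the hard-to-soft label conversion concrete, the following is a minimal sketch of uniform label smoothing. It assumes the common formulation in which a fraction `epsilon` of the probability mass is spread evenly over all classes; the function name `smooth_labels` and the default `epsilon=0.1` are illustrative choices, not values taken from this paper.

```python
def smooth_labels(one_hot, epsilon=0.1):
    """Convert a hard one-hot label into a soft label.

    Assumed formulation: y_soft = (1 - epsilon) * y_hard + epsilon / K,
    where K is the number of classes.
    """
    num_classes = len(one_hot)
    return [(1.0 - epsilon) * y + epsilon / num_classes for y in one_hot]


if __name__ == "__main__":
    # The true class keeps most of the mass; the rest is shared evenly.
    print(smooth_labels([0, 0, 1, 0], epsilon=0.1))
```

The soft label still sums to 1, so it can be used directly as the target of a cross-entropy loss.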

The last bag of freebies is the objective function of

Bounding Box (BBox) regression. The traditional object

detector usually uses Mean Square Error (MSE) to directly perform regression on the center point coordinates and the height and width of the BBox, i.e., $\{x_{center}, y_{center}, w, h\}$, or on the upper-left and lower-right points, i.e., $\{x_{top\_left}, y_{top\_left}, x_{bottom\_right}, y_{bottom\_right}\}$. An anchor-based method instead estimates the corresponding offsets, for example $\{x_{center\_offset}, y_{center\_offset}, w_{offset}, h_{offset}\}$ and $\{x_{top\_left\_offset}, y_{top\_left\_offset}, x_{bottom\_right\_offset}, y_{bottom\_right\_offset}\}$. However, directly estimating the coordinate values of each point of the

BBox is to treat these points as independent variables, but

in fact does not consider the integrity of the object itself. In order to handle this issue better, some researchers recently proposed IoU loss [90], which takes the coverage of the predicted BBox area and the ground truth BBox area into consideration. The IoU loss computing process will trigger the calculation of the four coordinate points of the BBox by executing IoU with the ground truth, and then connecting the generated results into a whole code. Because IoU is a scale

invariant representation, it can solve the problem that when

traditional methods calculate the l1 or l2 loss of {x, y, w,

h}, the loss will increase with the scale. Recently, some

researchers have continued to improve IoU loss. For example, GIoU loss [65] includes the shape and orientation of the object in addition to the coverage area. Its authors proposed to find the smallest-area BBox that can simultaneously cover the predicted BBox and the ground truth BBox, and to use this BBox as the denominator in place of the denominator originally used in IoU loss. As for DIoU loss [99], it additionally considers the distance between the centers of the boxes, and CIoU loss [99] simultaneously considers the overlapping area, the distance between center points, and the aspect ratio. CIoU can achieve better convergence speed and accuracy on the BBox regression problem.
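The relationship between the IoU-based measures discussed above can be illustrated with a short sketch. It assumes the standard definitions from [65] and [99]: GIoU subtracts the fraction of the smallest enclosing box not covered by the union, DIoU subtracts the squared center distance normalized by the squared diagonal of the enclosing box, and CIoU adds an aspect-ratio term. The function name `iou_family` and the corner-format boxes `(x1, y1, x2, y2)` are illustrative assumptions; each loss is then `1 - value`.

```python
import math


def iou_family(box_a, box_b):
    """Return IoU, GIoU, DIoU and CIoU for two boxes (x1, y1, x2, y2).

    Assumes both boxes have positive width and height.
    """
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Overlap area and union area.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    iou = inter / union

    # Smallest box enclosing both boxes.
    ex1, ey1 = min(ax1, bx1), min(ay1, by1)
    ex2, ey2 = max(ax2, bx2), max(ay2, by2)
    enclose_area = (ex2 - ex1) * (ey2 - ey1)
    giou = iou - (enclose_area - union) / enclose_area

    # Squared center distance over squared enclosing-box diagonal.
    center_dist2 = ((ax1 + ax2) / 2 - (bx1 + bx2) / 2) ** 2 \
        + ((ay1 + ay2) / 2 - (by1 + by2) / 2) ** 2
    diag2 = (ex2 - ex1) ** 2 + (ey2 - ey1) ** 2
    diou = iou - center_dist2 / diag2

    # Aspect-ratio consistency term used by CIoU.
    v = (4 / math.pi ** 2) * (
        math.atan((bx2 - bx1) / (by2 - by1))
        - math.atan((ax2 - ax1) / (ay2 - ay1))
    ) ** 2
    alpha = v / ((1 - iou) + v) if v > 0 else 0.0
    ciou = diou - alpha * v

    return {"iou": iou, "giou": giou, "diou": diou, "ciou": ciou}


if __name__ == "__main__":
    print(iou_family((0, 0, 2, 2), (1, 1, 3, 3)))
```

For two boxes with the same aspect ratio the CIoU and DIoU values coincide, since the aspect-ratio term vanishes.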


2.3. Bag of specials

For those plugin modules and post-processing methods

that only increase the inference cost by a small amount

but can significantly improve the accuracy of object detec-

tion, we call them “bag of specials”. Generally speaking,

these plugin modules are for enhancing certain attributes in

a model, such as enlarging receptive field, introducing at-

tention mechanism, or strengthening feature integration ca-

pability, etc., and post-processing is a method for screening

model prediction results.

Common modules that can be used to enhance the receptive field are SPP [25], ASPP [5], and RFB [47]. The SPP module originated from Spatial Pyramid Matching (SPM) [39]. SPM's original method was to split a feature map into several d × d equal blocks, where d can be {1, 2, 3, ...}, thus forming a spatial pyramid, and then to extract bag-of-word features. SPP integrates SPM into CNN and uses a max-pooling operation instead of the bag-of-word operation. Since the SPP module proposed by He et al. [25] outputs a one-dimensional feature vector, it is infeasible to apply it in a Fully Convolutional Network (FCN). Thus, in the design of YOLOv3 [63], Redmon and Farhadi improved the SPP module to the concatenation of max-pooling outputs with kernel size k × k, where k = {1, 5, 9, 13}, and stride equal to 1. Under this design, a relatively large k × k max-pooling effectively increases the receptive field of the backbone feature. After adding the improved version of the SPP module, YOLOv3-608 upgrades AP50 by 2.7% on the MS COCO object detection task at the cost of 0.5% extra computation. The difference in operation between the ASPP [5] module and the improved SPP module is mainly that the original k × k kernel size, stride 1 max-pooling is replaced by several dilated convolutions with 3 × 3 kernel size, dilated ratio equal to k, and stride equal to 1. The RFB module uses several dilated convolutions with k × k kernel, dilated ratio equal to k, and stride equal to 1 to obtain a more comprehensive spatial coverage than ASPP. RFB [47] only costs 7% extra inference time to increase the AP50 of SSD on MS COCO by 5.7%.
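The improved SPP module described above (stride-1 max-pooling with k = {1, 5, 9, 13}, outputs concatenated along the channel axis) can be sketched in plain Python. This is only an illustration on nested lists, not the Darknet implementation; the names `max_pool_same` and `spp_block` are hypothetical, and border handling is assumed to clip the pooling window to the feature map, which is equivalent to padding with negative infinity.

```python
def max_pool_same(fmap, k):
    """k x k max-pooling with stride 1; output has the same size as input.

    The window is clipped at the borders (assumed padding behaviour).
    """
    height, width = len(fmap), len(fmap[0])
    radius = k // 2
    pooled = []
    for i in range(height):
        row = []
        for j in range(width):
            window = [
                fmap[y][x]
                for y in range(max(0, i - radius), min(height, i + radius + 1))
                for x in range(max(0, j - radius), min(width, j + radius + 1))
            ]
            row.append(max(window))
        pooled.append(row)
    return pooled


def spp_block(channels, kernel_sizes=(1, 5, 9, 13)):
    """Concatenate pooled copies of every channel for each kernel size.

    `channels` is a list of 2D feature maps; the result has
    len(channels) * len(kernel_sizes) channels of unchanged spatial size.
    """
    output = []
    for k in kernel_sizes:
        for fmap in channels:
            output.append(max_pool_same(fmap, k))
    return output


if __name__ == "__main__":
    feature = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
    print(len(spp_block([feature])))  # channel count grows fourfold
```

Because the spatial size is preserved, the block stays compatible with a fully convolutional network, which is the point of the modification.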

The attention modules often used in object detection are mainly divided into channel-wise attention and point-wise attention, and the representatives of these two attention models are Squeeze-and-Excitation (SE) [29] and Spatial Attention Module (SAM) [85], respectively. Although the SE module can improve the top-1 accuracy of ResNet50 on the ImageNet image classification task by 1% at the cost of only a 2% increase in computational effort, on a GPU it usually increases the inference time by about 10%, so it is more appropriate for mobile devices. SAM, in contrast, needs only 0.1% extra calculation and can improve the top-1 accuracy of ResNet50-SE by 0.5% on the ImageNet image classification task. Best of all, it does not affect the speed of inference on the GPU at all.

In terms of feature integration, the early practice is to use

skip connection [51] or hyper-column [22] to integrate low-

level physical feature to high-level semantic feature. Since

multi-scale prediction methods such as FPN have become

popular, many lightweight modules that integrate different

feature pyramid have been proposed. The modules of this

sort include SFAM [98], ASFF [48], and BiFPN [77]. The

main idea of SFAM is to use SE module to execute channel-

wise level re-weighting on multi-scale concatenated feature

maps. As for ASFF, it uses softmax as point-wise level re-

weighting and then adds feature maps of different scales.

In BiFPN, the multi-input weighted residual connections are proposed to execute scale-wise level re-weighting, and then add feature maps of different scales.

In deep learning research, some people focus on searching for a good activation function. A good activation function can make the gradient propagate more efficiently without causing too much extra computational cost. In 2010, Nair and Hinton [56] proposed ReLU to substantially solve the vanishing gradient problem frequently encountered with the traditional tanh and sigmoid activation functions. Subsequently, LReLU [54], PReLU [24], ReLU6 [28], Scaled Exponential Linear Unit (SELU) [35], Swish [59], hard-Swish [27], Mish [55], etc., which also address the vanishing gradient problem, have been proposed. The main purpose of LReLU and PReLU is to solve the problem that the gradient of ReLU is zero when the input is less than zero. As for ReLU6 and hard-Swish, they are specially designed for quantization networks. The SELU activation function is proposed for self-normalizing a neural network. One thing to note is that both Swish and Mish are continuously differentiable activation functions.
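For reference, the activations compared later in the experiments can be written in a few lines. The sketch below assumes the usual definitions: Swish with its scaling parameter fixed to 1, i.e. $x \cdot \sigma(x)$, and Mish as $x \cdot \tanh(\mathrm{softplus}(x))$. The leaky-ReLU slope of 0.1 is an assumption chosen for illustration, not a value stated in this paper.

```python
import math


def sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def softplus(x):
    # Stable form of log(1 + exp(x)).
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def leaky_relu(x, slope=0.1):
    # Non-zero gradient for negative inputs; slope is an assumed default.
    return x if x > 0 else slope * x


def swish(x):
    # Swish with beta = 1.
    return x * sigmoid(x)


def mish(x):
    return x * math.tanh(softplus(x))


if __name__ == "__main__":
    for value in (-2.0, 0.0, 1.0):
        print(value, leaky_relu(value), swish(value), mish(value))
```

Unlike leaky-ReLU, both Swish and Mish are smooth around zero and dip slightly below zero for small negative inputs.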

The post-processing method commonly used in deep-learning-based object detection is NMS, which can be used to filter out BBoxes that badly predict the same object and retain only the candidate BBoxes with higher response. The way NMS tries to improve is consistent with the method of optimizing an objective function. The original NMS method does not consider context information, so Girshick et al. [19] added the classification confidence score in R-CNN as a reference, and greedy NMS was performed in order of confidence score, from high to low. As for soft NMS [1], it considers the problem that the occlusion of an object may cause the degradation of the confidence score in greedy NMS with IoU score. The DIoU NMS [99] developers' approach is to add the center point distance information to the BBox screening process on the basis of soft NMS. It is worth mentioning that, since none of the above post-processing methods directly refer to the captured image features, post-processing is no longer required in the subsequent development of anchor-free methods.
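The screening step can be sketched as follows. This is a simplified, hard-suppression variant written for illustration: with `use_diou=False` it is plain greedy NMS, and with `use_diou=True` the overlap measure is assumed to be IoU minus the normalized squared center distance, following the DIoU definition in [99]. The function names and the threshold values are hypothetical; it does not implement the score decay of soft NMS.

```python
def _overlap(box_a, box_b, use_diou):
    """IoU of two (x1, y1, x2, y2) boxes, optionally minus the DIoU penalty."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    iou = inter / union
    if not use_diou:
        return iou
    # Squared center distance over squared diagonal of the enclosing box.
    center_dist2 = ((ax1 + ax2) / 2 - (bx1 + bx2) / 2) ** 2 \
        + ((ay1 + ay2) / 2 - (by1 + by2) / 2) ** 2
    diag2 = (max(ax2, bx2) - min(ax1, bx1)) ** 2 \
        + (max(ay2, by2) - min(ay1, by1)) ** 2
    return iou - center_dist2 / diag2


def nms(boxes, scores, threshold=0.5, use_diou=False):
    """Greedy NMS: return indices of kept boxes, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)
        keep.append(best)
        # Drop every remaining box that overlaps the kept box too much.
        order = [
            i for i in order
            if _overlap(boxes[best], boxes[i], use_diou) < threshold
        ]
    return keep


if __name__ == "__main__":
    candidates = [(0, 0, 10, 10), (4, 0, 14, 10)]
    print(nms(candidates, [0.9, 0.8], threshold=0.4))
    print(nms(candidates, [0.9, 0.8], threshold=0.4, use_diou=True))
```

In the usage example the second box is suppressed by plain IoU but survives with the center-distance term, which is the intended effect for neighbouring objects whose centers are far apart.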


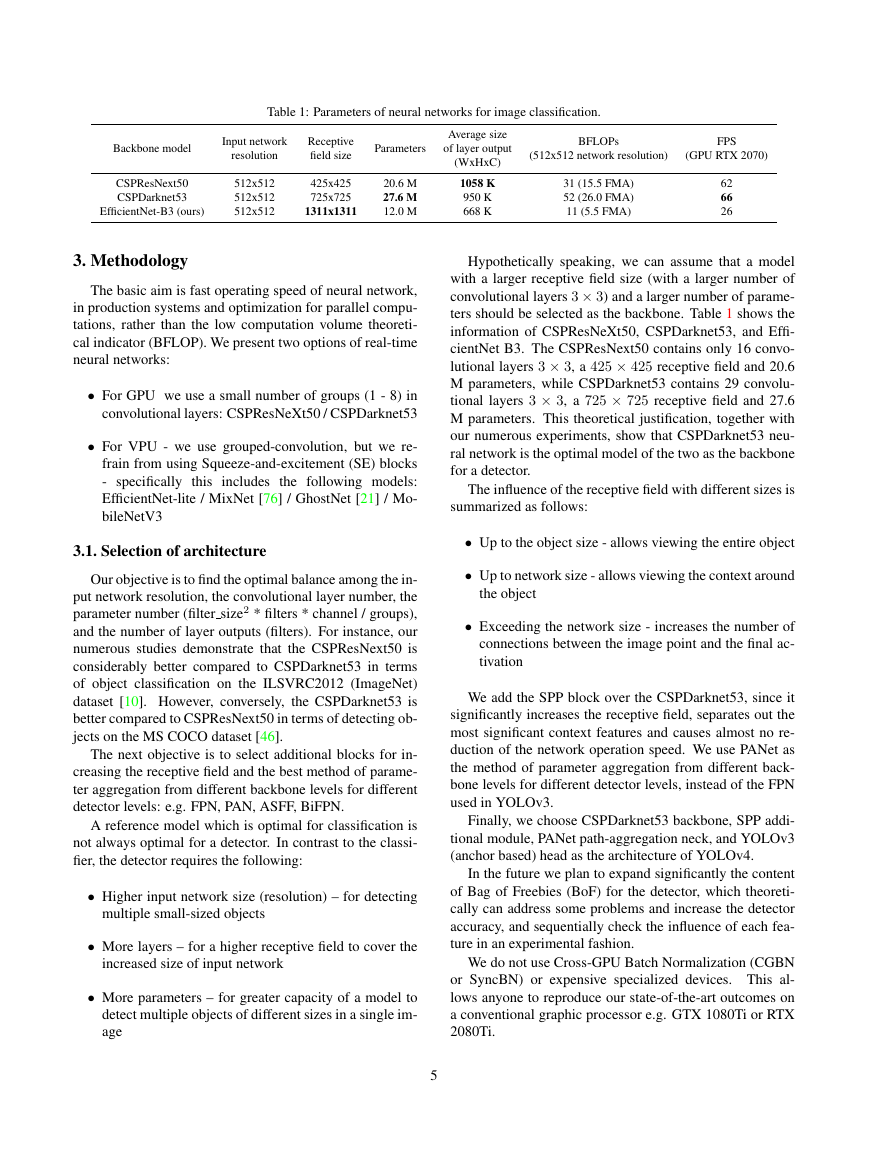

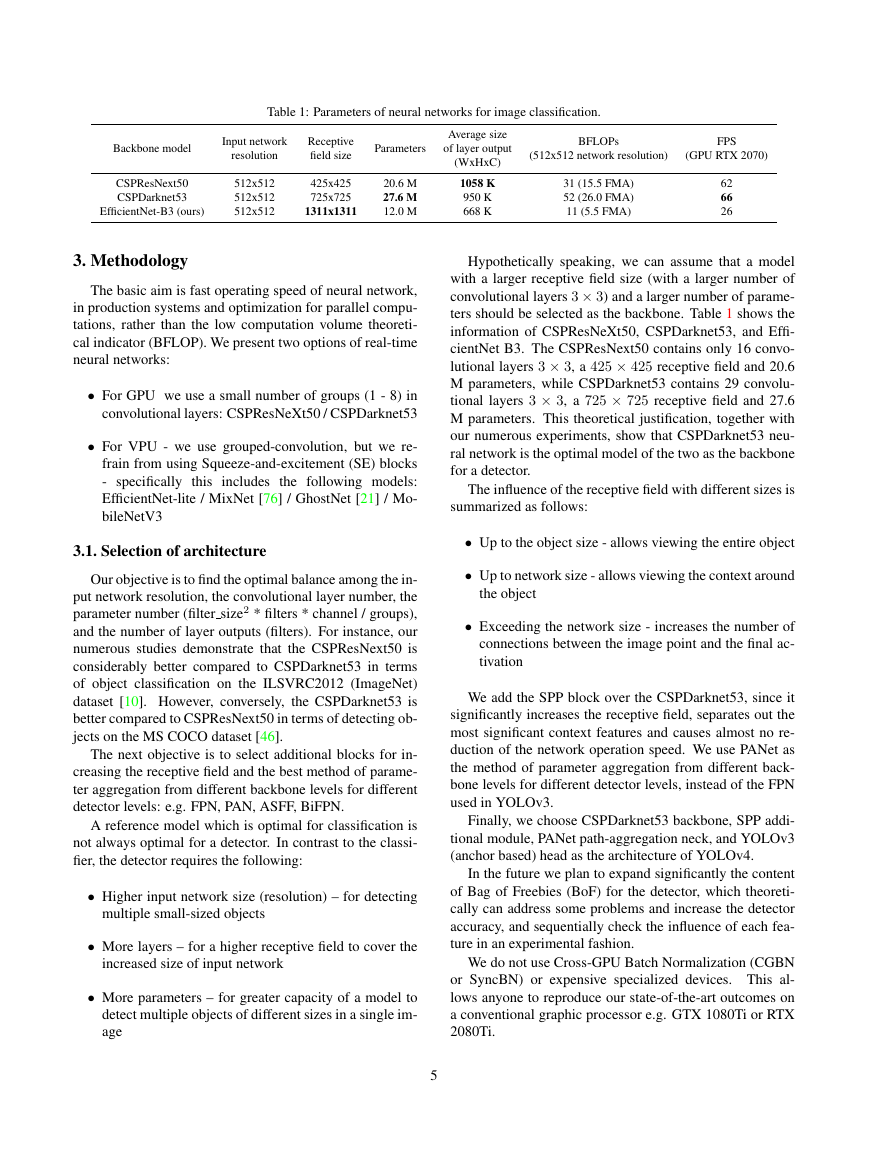

Table 1: Parameters of neural networks for image classification.

| Backbone model | Input network resolution | Receptive field size | Parameters | Average size of layer output (WxHxC) | BFLOPs (512x512 network resolution) | FPS (GPU RTX 2070) |
|---|---|---|---|---|---|---|
| CSPResNext50 | 512x512 | 425x425 | 20.6 M | 1058 K | 31 (15.5 FMA) | 62 |
| CSPDarknet53 | 512x512 | 725x725 | 27.6 M | 950 K | 52 (26.0 FMA) | 66 |
| EfficientNet-B3 (ours) | 512x512 | 1311x1311 | 12.0 M | 668 K | 11 (5.5 FMA) | 26 |

3. Methodology

The basic aim is a fast operating speed of the neural network in production systems and optimization for parallel computations, rather than the low computation volume theoretical indicator (BFLOP). We present two options of real-time neural networks:

• For GPU, we use a small number of groups (1 - 8) in convolutional layers: CSPResNeXt50 / CSPDarknet53

• For VPU, we use grouped convolution, but we refrain from using Squeeze-and-Excitation (SE) blocks. Specifically, this includes the following models: EfficientNet-lite / MixNet [76] / GhostNet [21] / MobileNetV3

3.1. Selection of architecture

Our objective is to find the optimal balance among the input network resolution, the number of convolutional layers, the number of parameters ($filter\_size^2 \cdot filters \cdot channels / groups$), and the number of layer outputs (filters). For instance, our numerous studies demonstrate that CSPResNext50 is considerably better than CSPDarknet53 in terms of object classification on the ILSVRC2012 (ImageNet) dataset [10]. Conversely, however, CSPDarknet53 is better than CSPResNext50 in terms of detecting objects on the MS COCO dataset [46].
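The parameter-count expression above is easy to evaluate per layer. The following is a small sketch of that formula for a single convolutional layer; the function name `conv_params` is hypothetical, bias terms are ignored, and the example layer sizes are made up for illustration.

```python
def conv_params(filter_size, filters, channels, groups=1):
    """Weights in one convolutional layer (biases not counted).

    Implements filter_size^2 * filters * channels / groups, where
    `channels` is the number of input channels and `filters` the number
    of output channels.
    """
    if channels % groups != 0 or filters % groups != 0:
        raise ValueError("channels and filters must be divisible by groups")
    return filter_size ** 2 * filters * channels // groups


if __name__ == "__main__":
    # A 3x3 layer with 32 input and 64 output channels.
    print(conv_params(3, 64, 32))            # ungrouped
    print(conv_params(3, 64, 32, groups=8))  # grouped convolution
```

Increasing the number of groups divides the weight count proportionally, which is why grouped convolutions reduce parameters at a fixed number of filters.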

The next objective is to select additional blocks for in-

creasing the receptive field and the best method of parame-

ter aggregation from different backbone levels for different

detector levels: e.g. FPN, PAN, ASFF, BiFPN.

A reference model which is optimal for classification is

not always optimal for a detector. In contrast to the classi-

fier, the detector requires the following:

• Higher input network size (resolution) – for detecting

multiple small-sized objects

• More layers – for a higher receptive field to cover the

increased size of input network

• More parameters – for greater capacity of a model to

detect multiple objects of different sizes in a single im-

age

Hypothetically speaking, we can assume that a model

with a larger receptive field size (with a larger number of

convolutional layers 3 × 3) and a larger number of parame-

ters should be selected as the backbone. Table 1 shows the

information of CSPResNeXt50, CSPDarknet53, and Effi-

cientNet B3. The CSPResNext50 contains only 16 convo-

lutional layers 3 × 3, a 425 × 425 receptive field and 20.6

M parameters, while CSPDarknet53 contains 29 convolu-

tional layers 3 × 3, a 725 × 725 receptive field and 27.6

M parameters. This theoretical justification, together with our numerous experiments, shows that the CSPDarknet53 neural network is the optimal model of the two as the backbone for a detector.

The influence of the receptive field with different sizes is

summarized as follows:

• Up to the object size - allows viewing the entire object

• Up to network size - allows viewing the context around

the object

• Exceeding the network size - increases the number of

connections between the image point and the final ac-

tivation

We add the SPP block over the CSPDarknet53, since it

significantly increases the receptive field, separates out the

most significant context features and causes almost no re-

duction of the network operation speed. We use PANet as

the method of parameter aggregation from different back-

bone levels for different detector levels, instead of the FPN

used in YOLOv3.

Finally, we choose CSPDarknet53 backbone, SPP addi-

tional module, PANet path-aggregation neck, and YOLOv3

(anchor based) head as the architecture of YOLOv4.

In the future we plan to expand significantly the content

of Bag of Freebies (BoF) for the detector, which theoreti-

cally can address some problems and increase the detector

accuracy, and sequentially check the influence of each fea-

ture in an experimental fashion.

We do not use Cross-GPU Batch Normalization (CGBN

or SyncBN) or expensive specialized devices. This al-

lows anyone to reproduce our state-of-the-art outcomes on

a conventional graphic processor e.g. GTX 1080Ti or RTX

2080Ti.


3.2. Selection of BoF and BoS

For improving the object detection training, a CNN usu-

ally uses the following:

• Activations: ReLU, leaky-ReLU, parametric-ReLU,

ReLU6, SELU, Swish, or Mish

• Bounding box regression loss: MSE, IoU, GIoU,

CIoU, DIoU

• Data augmentation: CutOut, MixUp, CutMix

• Regularization method: DropOut, DropPath [36],

Spatial DropOut [79], or DropBlock

• Normalization of the network activations by their

mean and variance: Batch Normalization (BN) [32],

Cross-GPU Batch Normalization (CGBN or SyncBN)

[93], Filter Response Normalization (FRN) [70], or

Cross-Iteration Batch Normalization (CBN) [89]

• Skip-connections: Residual connections, Weighted

residual connections, Multi-input weighted residual

connections, or Cross stage partial connections (CSP)

As for the training activation function, since PReLU and SELU are more difficult to train, and ReLU6 is specifically designed for quantization networks, we remove these activation functions from the candidate list. As for regularization, the authors of DropBlock compared their method with other methods in detail, and their regularization method performed considerably better. Therefore, we did not hesitate to choose DropBlock as our regularization method. As for the selection of normalization method, since we focus on a training strategy that uses only one GPU, syncBN is not considered.

3.3. Additional improvements

In order to make the designed detector more suitable for training on a single GPU, we made additional design changes and improvements as follows:

• We introduce a new data augmentation method, Mosaic, and Self-Adversarial Training (SAT)

• We select optimal hyper-parameters while applying genetic algorithms

• We modify some existing methods to make our design suitable for efficient training and detection: modified SAM, modified PAN, and Cross mini-Batch Normalization (CmBN)

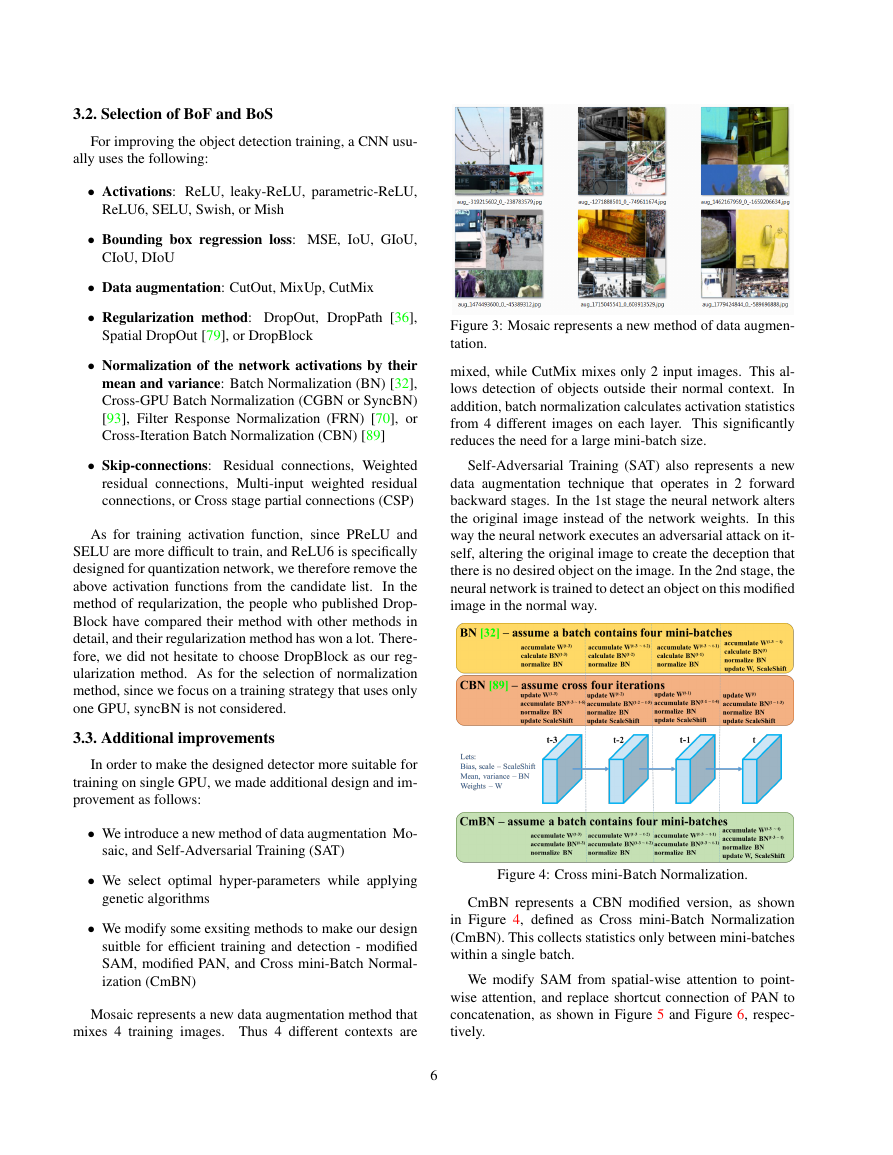

Mosaic represents a new data augmentation method that

mixes 4 training images. Thus 4 different contexts are

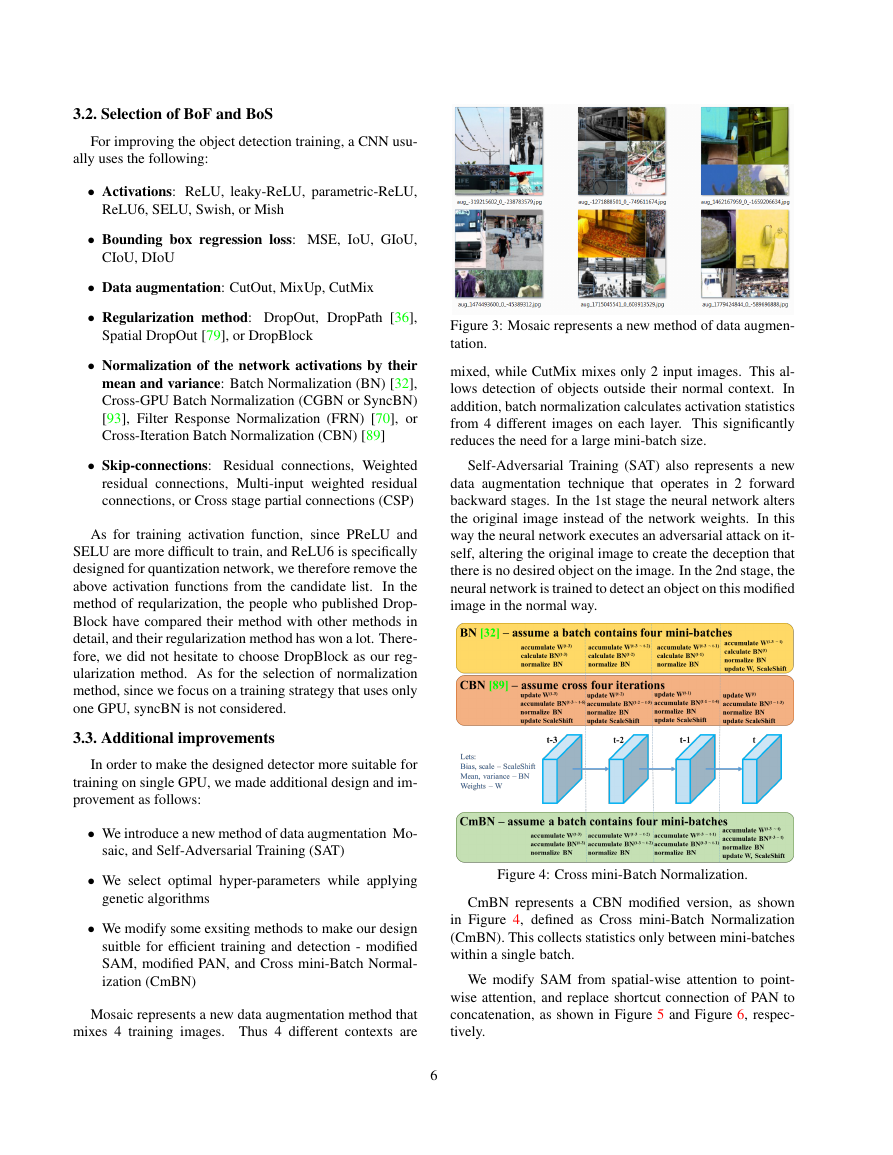

Figure 3: Mosaic represents a new method of data augmen-

tation.

mixed, while CutMix mixes only 2 input images. This al-

lows detection of objects outside their normal context. In

addition, batch normalization calculates activation statistics

from 4 different images on each layer. This significantly

reduces the need for a large mini-batch size.
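The idea of mixing 4 training images, together with their labels, can be illustrated with a deliberately simplified sketch. It assumes four equally sized images tiled in a fixed 2 x 2 grid with no random scaling, cropping, or random split point, so it is not the Mosaic procedure used in the experiments; the function name `mosaic_2x2` and the corner-format boxes `(x1, y1, x2, y2)` are hypothetical.

```python
def mosaic_2x2(images, boxes_per_image):
    """Tile four H x W images into one 2H x 2W image and shift their boxes.

    images: list of four 2D lists with identical shape.
    boxes_per_image: for each image, a list of (x1, y1, x2, y2) boxes.
    Returns the combined image and the combined list of shifted boxes.
    """
    if len(images) != 4 or len(boxes_per_image) != 4:
        raise ValueError("exactly four images and four box lists are required")
    height, width = len(images[0]), len(images[0][0])
    canvas = [[0] * (2 * width) for _ in range(2 * height)]
    # (dx, dy) offsets: top-left, top-right, bottom-left, bottom-right.
    offsets = [(0, 0), (width, 0), (0, height), (width, height)]
    merged_boxes = []
    for image, boxes, (dx, dy) in zip(images, boxes_per_image, offsets):
        for y in range(height):
            canvas[dy + y][dx:dx + width] = image[y]
        for x1, y1, x2, y2 in boxes:
            merged_boxes.append((x1 + dx, y1 + dy, x2 + dx, y2 + dy))
    return canvas, merged_boxes


if __name__ == "__main__":
    tiles = [[[v, v], [v, v]] for v in (1, 2, 3, 4)]
    labels = [[(0, 0, 1, 1)], [], [], [(0, 0, 2, 2)]]
    combined, boxes = mosaic_2x2(tiles, labels)
    for row in combined:
        print(row)
    print(boxes)
```

Each combined sample contains objects from four source images, which is why batch normalization statistics are computed over four contexts per sample.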

Self-Adversarial Training (SAT) also represents a new

data augmentation technique that operates in 2 forward

backward stages. In the 1st stage the neural network alters

the original image instead of the network weights. In this

way the neural network executes an adversarial attack on it-

self, altering the original image to create the deception that

there is no desired object on the image. In the 2nd stage, the

neural network is trained to detect an object on this modified

image in the normal way.

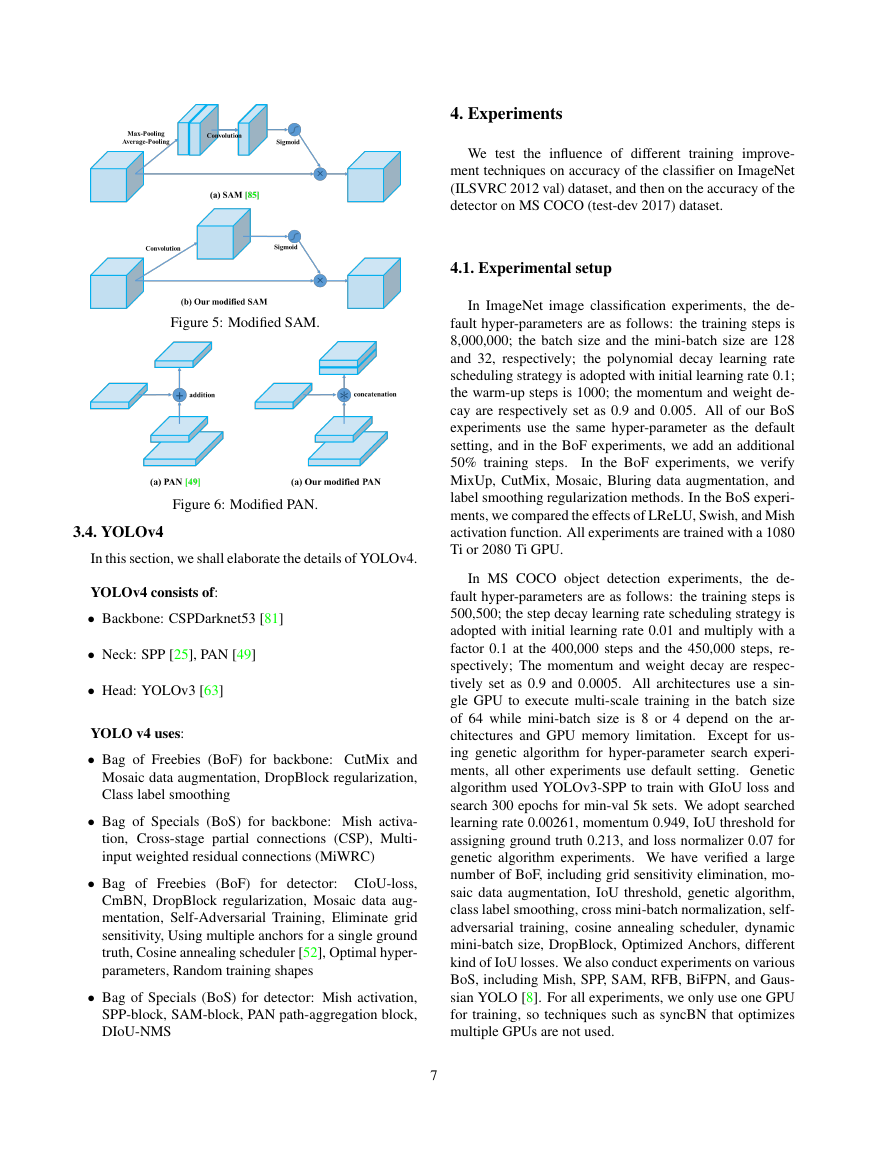

Figure 4: Cross mini-Batch Normalization.

CmBN, shown in Figure 4, is a modified version of CBN, defined as Cross mini-Batch Normalization (CmBN). It collects statistics only between mini-batches within a single batch.

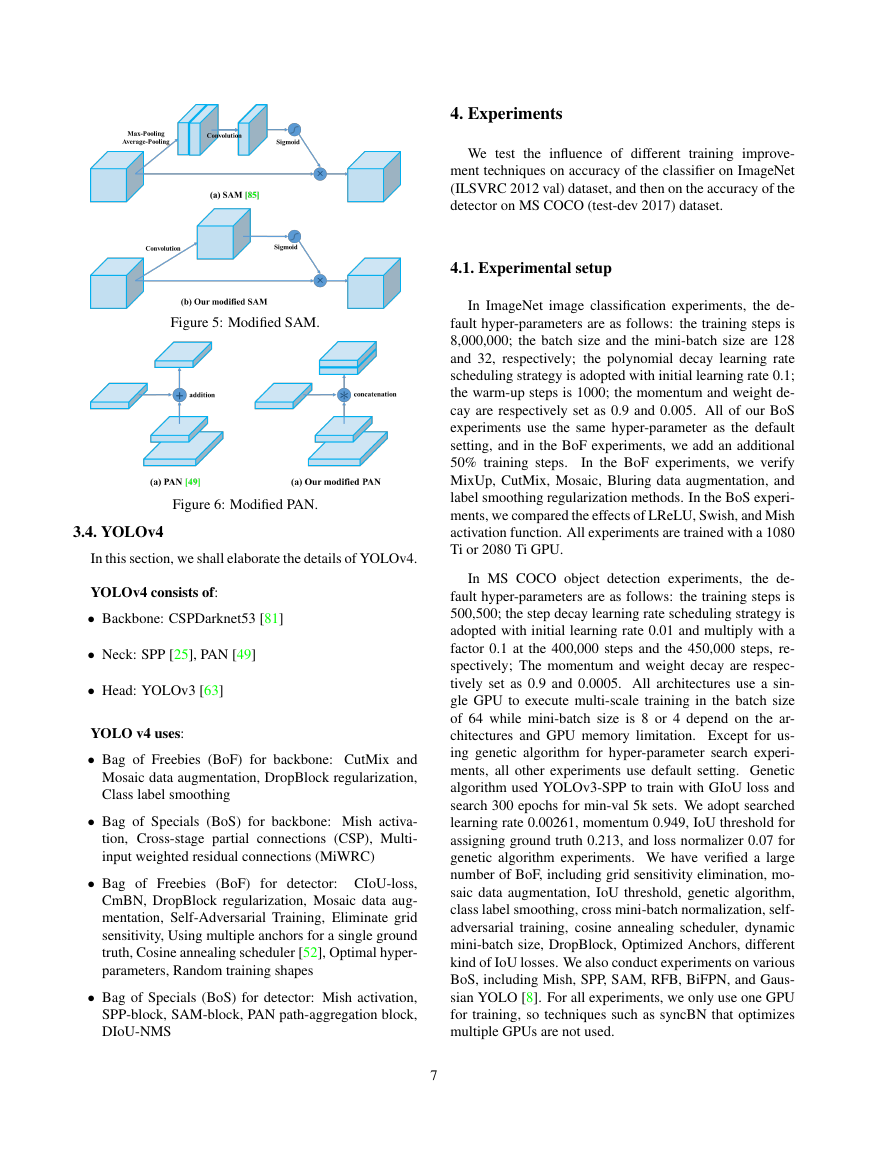

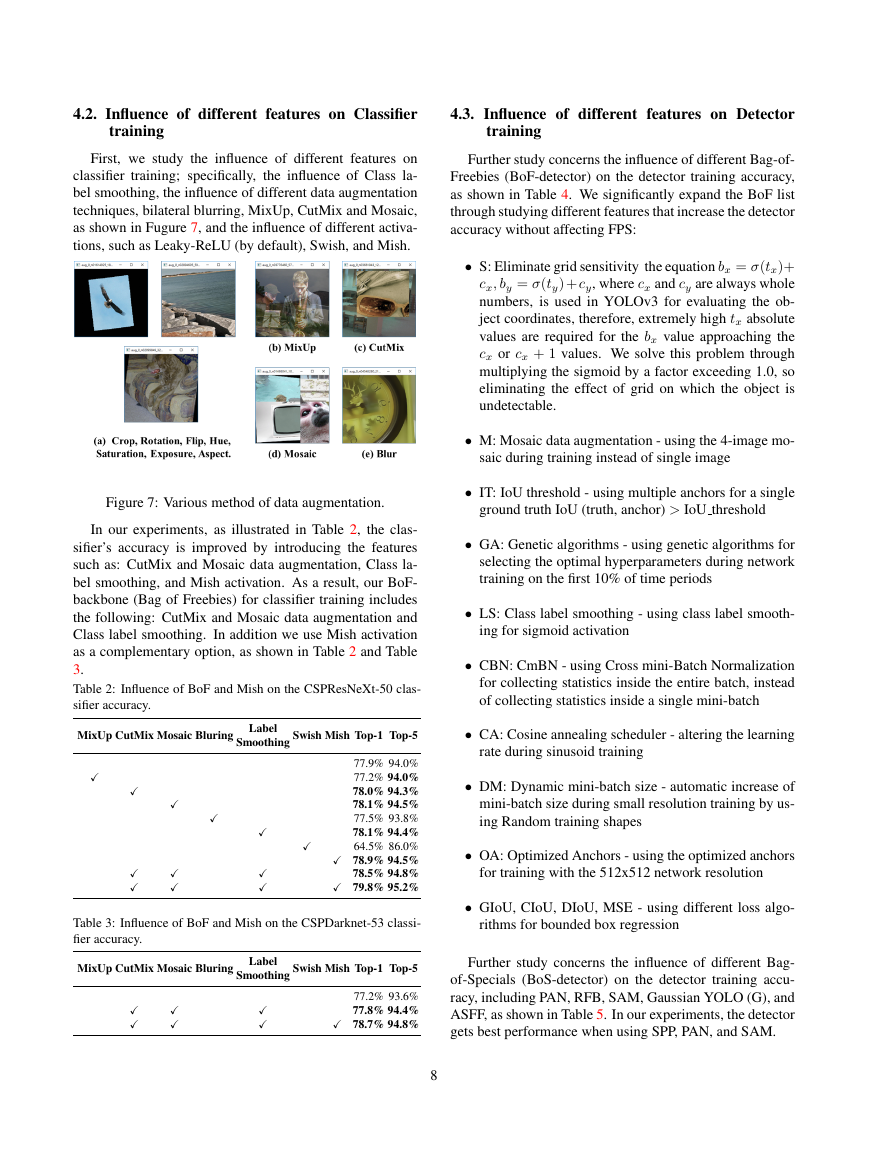

We modify SAM from spatial-wise attention to point-

wise attention, and replace shortcut connection of PAN to

concatenation, as shown in Figure 5 and Figure 6, respec-

tively.

6

�

4. Experiments

We test

the influence of different

training improve-

ment techniques on accuracy of the classifier on ImageNet

(ILSVRC 2012 val) dataset, and then on the accuracy of the

detector on MS COCO (test-dev 2017) dataset.

Figure 5: Modified SAM.

Figure 6: Modified PAN.

3.4. YOLOv4

In this section, we shall elaborate the details of YOLOv4.

YOLOv4 consists of:

• Backbone: CSPDarknet53 [81]

• Neck: SPP [25], PAN [49]

• Head: YOLOv3 [63]

YOLO v4 uses:

• Bag of Freebies (BoF) for backbone: CutMix and

Mosaic data augmentation, DropBlock regularization,

Class label smoothing

• Bag of Specials (BoS) for backbone: Mish activa-

tion, Cross-stage partial connections (CSP), Multi-

input weighted residual connections (MiWRC)

• Bag of Freebies (BoF) for detector: CIoU-loss,

CmBN, DropBlock regularization, Mosaic data aug-

mentation, Self-Adversarial Training, Eliminate grid

sensitivity, Using multiple anchors for a single ground

truth, Cosine annealing scheduler [52], Optimal hyper-

parameters, Random training shapes

• Bag of Specials (BoS) for detector: Mish activation,

SPP-block, SAM-block, PAN path-aggregation block,

DIoU-NMS

7

4.1. Experimental setup

In ImageNet image classification experiments, the default hyper-parameters are as follows: the number of training steps is 8,000,000; the batch size and the mini-batch size are 128 and 32, respectively; the polynomial decay learning rate scheduling strategy is adopted with an initial learning rate of 0.1; the number of warm-up steps is 1000; the momentum and weight decay are set to 0.9 and 0.005, respectively. All of our BoS experiments use the same hyper-parameters as the default setting, and in the BoF experiments, we add an additional 50% of training steps. In the BoF experiments, we verify MixUp, CutMix, Mosaic, blurring data augmentation, and label smoothing regularization methods. In the BoS experiments, we compare the effects of the LReLU, Swish, and Mish activation functions. All experiments are trained with a 1080 Ti or 2080 Ti GPU.

In MS COCO object detection experiments, the default hyper-parameters are as follows: the number of training steps is 500,500; the step decay learning rate scheduling strategy is adopted with an initial learning rate of 0.01, multiplied by a factor of 0.1 at 400,000 steps and again at 450,000 steps; the momentum and weight decay are set to 0.9 and 0.0005, respectively. All architectures use a single GPU to execute multi-scale training with a batch size of 64, while the mini-batch size is 8 or 4 depending on the architecture and GPU memory limitation. Except for the hyper-parameter search experiments that use a genetic algorithm, all other experiments use the default setting. The genetic algorithm used YOLOv3-SPP to train with GIoU loss and searched 300 epochs on the min-val 5k set. We adopt the searched learning rate 0.00261, momentum 0.949, IoU threshold for assigning ground truth 0.213, and loss normalizer 0.07 for the genetic algorithm experiments. We have verified a large number of BoF, including grid sensitivity elimination, mosaic data augmentation, IoU threshold, genetic algorithm, class label smoothing, cross mini-batch normalization, self-adversarial training, cosine annealing scheduler, dynamic mini-batch size, DropBlock, Optimized Anchors, and different kinds of IoU losses. We also conduct experiments on various BoS, including Mish, SPP, SAM, RFB, BiFPN, and Gaussian YOLO [8]. For all experiments, we only use one GPU for training, so techniques such as syncBN that optimize training across multiple GPUs are not used.
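The two learning rate schedules mentioned in this setup can be sketched as plain functions of the training step. The step-decay defaults below come from the detection setup described above (initial rate 0.01, factor 0.1 at 400,000 and 450,000 steps, 500,500 steps in total); whether the decay applies exactly at or just after each milestone is an assumption. The cosine form assumes a single annealing cycle without restarts and a minimum rate of 0, which this paper does not specify; warm-up is not modeled.

```python
import math


def step_decay_lr(step, base_lr=0.01, milestones=(400_000, 450_000), factor=0.1):
    """Multiply the learning rate by `factor` at each milestone reached."""
    lr = base_lr
    for milestone in milestones:
        if step >= milestone:
            lr *= factor
    return lr


def cosine_annealing_lr(step, total_steps=500_500, base_lr=0.01, min_lr=0.0):
    """Anneal from base_lr to min_lr along half a cosine period."""
    progress = min(max(step, 0), total_steps) / total_steps
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


if __name__ == "__main__":
    for s in (0, 250_250, 400_000, 450_000, 500_500):
        print(s, step_decay_lr(s), cosine_annealing_lr(s))
```

The step schedule holds the rate constant for most of training, whereas the cosine schedule lowers it continuously from the first step.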


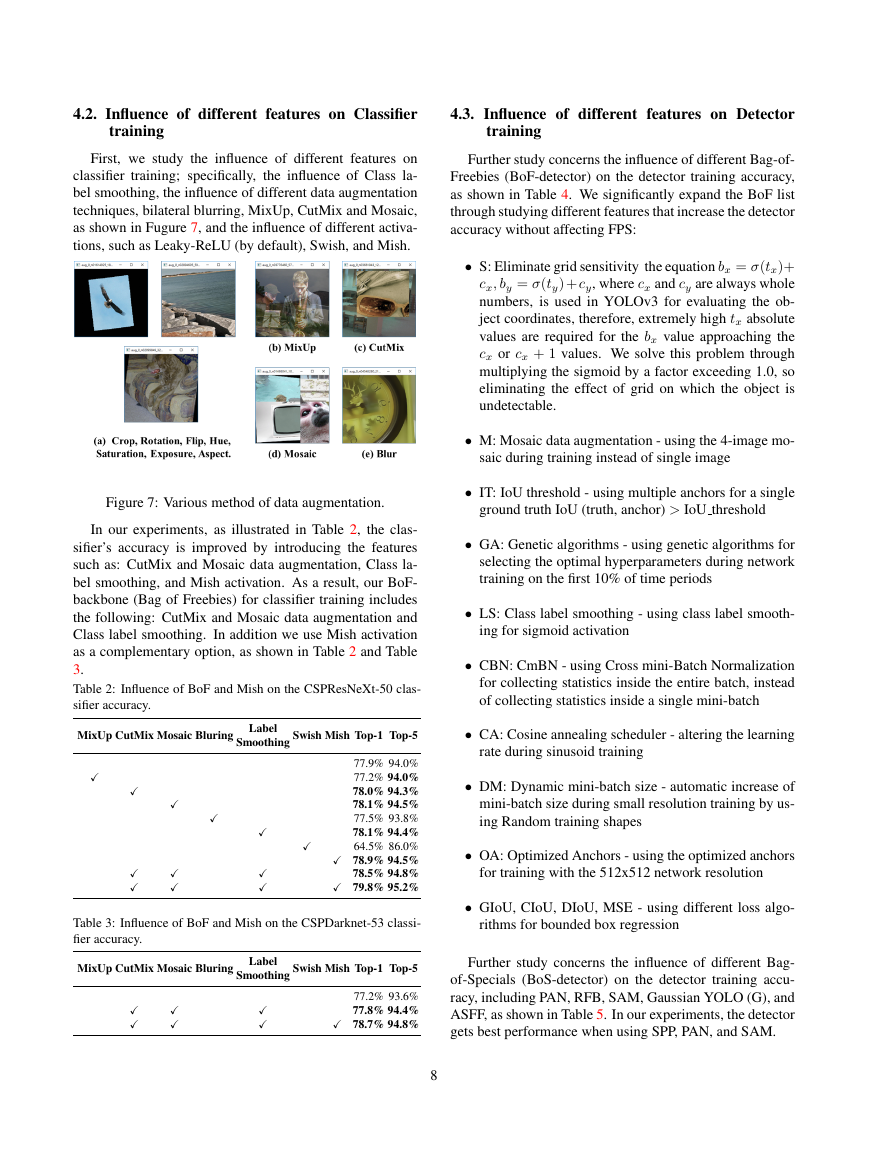

4.2. Influence of different features on Classifier training

First, we study the influence of different features on classifier training; specifically, the influence of class label smoothing, the influence of different data augmentation techniques (bilateral blurring, MixUp, CutMix and Mosaic, as shown in Figure 7), and the influence of different activations, such as Leaky-ReLU (by default), Swish, and Mish.

Figure 7: Various methods of data augmentation.

In our experiments, as illustrated in Table 2, the clas-

sifier’s accuracy is improved by introducing the features

such as: CutMix and Mosaic data augmentation, Class la-

bel smoothing, and Mish activation. As a result, our BoF-

backbone (Bag of Freebies) for classifier training includes

the following: CutMix and Mosaic data augmentation and

Class label smoothing. In addition we use Mish activation

as a complementary option, as shown in Table 2 and Table

3.

Table 2: Influence of BoF and Mish on the CSPResNeXt-50 classifier accuracy.

MixUp CutMix Mosaic Blurring Label Smoothing Swish Mish Top-1 Top-5

77.9% 94.0%

77.2% 94.0%

78.0% 94.3%

78.1% 94.5%

77.5% 93.8%

78.1% 94.4%

64.5% 86.0%

78.9% 94.5%

78.5% 94.8%

79.8% 95.2%

Table 3: Influence of BoF and Mish on the CSPDarknet-53 classifier accuracy.

MixUp CutMix Mosaic Blurring Label Smoothing Swish Mish Top-1 Top-5

77.2% 93.6%

77.8% 94.4%

78.7% 94.8%

4.3. Influence of different features on Detector training

Further study concerns the influence of different Bag-of-

Freebies (BoF-detector) on the detector training accuracy,

as shown in Table 4. We significantly expand the BoF list

through studying different features that increase the detector

accuracy without affecting FPS:

• S: Eliminate grid sensitivity - the equations $b_x = \sigma(t_x) + c_x$, $b_y = \sigma(t_y) + c_y$, where $c_x$ and $c_y$ are always whole numbers, are used in YOLOv3 for evaluating the object coordinates; therefore, extremely high absolute values of $t_x$ are required for $b_x$ to approach the $c_x$ or $c_x + 1$ values. We solve this problem by multiplying the sigmoid by a factor exceeding 1.0, thereby eliminating the effect of the grid on which the object is undetectable.

• M: Mosaic data augmentation - using the 4-image mo-

saic during training instead of single image

• IT: IoU threshold - using multiple anchors for a single

ground truth IoU (truth, anchor) > IoU threshold

• GA: Genetic algorithms - using genetic algorithms for

selecting the optimal hyperparameters during network

training on the first 10% of time periods

• LS: Class label smoothing - using class label smooth-

ing for sigmoid activation

• CBN: CmBN - using Cross mini-Batch Normalization

for collecting statistics inside the entire batch, instead

of collecting statistics inside a single mini-batch

• CA: Cosine annealing scheduler - altering the learning rate along a sinusoid during training

• DM: Dynamic mini-batch size - automatic increase of

mini-batch size during small resolution training by us-

ing Random training shapes

• OA: Optimized Anchors - using the optimized anchors

for training with the 512x512 network resolution

• GIoU, CIoU, DIoU, MSE - using different loss algorithms for bounding box regression
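The grid sensitivity item (S) in the list above can be illustrated numerically. The text only states that the sigmoid is multiplied by a factor exceeding 1.0; the re-centering term `-(scale - 1) / 2` in the sketch below is an assumption added so that the scaled output stays centered on the cell, and the names `decode_center` and `scale` are hypothetical.

```python
import math


def sigmoid(t):
    # Numerically stable logistic function.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    z = math.exp(t)
    return z / (1.0 + z)


def decode_center(t, cell, scale=1.0):
    """Decode one box-center coordinate from raw output t in grid cell `cell`.

    scale = 1.0 gives the YOLOv3 form b = sigmoid(t) + c.
    scale > 1.0 stretches the sigmoid so that the cell borders c and c + 1
    are reached at finite t (re-centering term is an assumption).
    """
    return scale * sigmoid(t) - 0.5 * (scale - 1.0) + cell


if __name__ == "__main__":
    cell = 3
    # With the plain sigmoid, even t = -10 does not reach the cell border.
    print(decode_center(-10.0, cell, scale=1.0))
    # With scale = 2, the border is reached at a moderate t = ln(1/3).
    print(decode_center(math.log(1.0 / 3.0), cell, scale=2.0))
```

With a scale above 1.0 the decoded coordinate can land exactly on the cell border at a moderate value of t, which is the behaviour the S item aims for.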

Further study concerns the influence of different Bag-

of-Specials (BoS-detector) on the detector training accu-

racy, including PAN, RFB, SAM, Gaussian YOLO (G), and

ASFF, as shown in Table 5. In our experiments, the detector gets the best performance when using SPP, PAN, and SAM.
