Comparative Study of WebRTC Open Source SFUs for Video Conferencing

Emmanuel André∗, Nicolas Le Breton∗§, Augustin Lemesle∗§, Ludovic Roux∗ and Alexandre Gouaillard∗

∗CoSMo Software, Singapore, Email: {emmanuel.andre, ludovic.roux, alex.gouaillard}@cosmosoftware.io

§CentraleSupélec, France, Email: {nicolas.lebreton, augustin.lemesle}@supelec.fr

Abstract—WebRTC capable media servers are ubiquitous, and

among them, Selective Forwarding Units (SFU) seem to generate

more and more interest, especially as a mandatory component

of WebRTC 1.0 Simulcast. The two most represented use cases

implemented using a WebRTC SFU are video conferencing

and broadcasting. To date, there has not been any scientific

comparative study of WebRTC SFUs. We propose here a new

approach based on the KITE testing engine. We apply it to the

comparative study of five main open-source WebRTC SFUs, used

for video conferencing, under load. The results show that such an approach is viable, and provide unexpected and refreshingly new insights on the scalability of those SFUs.

Index Terms—WebRTC, Media Server, Load Testing, Real-

Time Communications

I. INTRODUCTION

Nowadays, most WebRTC applications, systems and ser-

vices support much more than the original one-to-one peer-to-

peer WebRTC use case, and use at least one media server to

implement them.

For interoperability with pre-existing technologies like SIP

for Voice over IP (VoIP), Public Switched Telephone Network

(PSTN), or Flash (RTMP), which can only handle one stream

of a given type (audio, video) at a time, one requires media

mixing capacities and will choose a Multipoint Control Unit

(MCU) [1]. However, most of the more recent media servers

are designed as Selective Forwarding Units (SFU) [2]; a design

that is not only less CPU intensive on the server, but that also

allows for advanced bandwidth adaptation with multiple en-

coding (simulcast) and Scalable Video Coding (SVC) codecs.

The latter of these allow for even better resilience against

network quality problems like packet loss.

Even when focusing solely on use cases that can be implemented with an SFU, many remain.

Arguably, the two most popular use cases are video confer-

ence (many-to-many, all equally receiving and sending), and

streaming / broadcasting (one-to-many, with one sending and

many receiving).

While most of the open-source (and closed-source) Web-

RTC media servers have implemented testing tools (see section

II-A), most of those tools are specific to the server they test,

and cannot be reused to test others or to make a comparative

study. Moreover, the published benchmarks differ so much in

terms of methodology that direct comparison is impossible. So

far, there has not been any single comparative study of media

servers, even from frameworks that claim to be media server

and signalling agnostic.

In this paper, we will focus on scalability testing of a video

conference use case using a single WebRTC SFU media server.

The novelty here is the capacity to run exactly the same test

scenario in the same conditions against several different media

servers installed on the same instance type. To compare the

performance of each SFU for this use case, we report the

measurements of their bit rates and of their latency all along

the test.

The rest of the paper is structured as follows: section II

provides a quick overview of the state of the art of WebRTC

testing. Section III describes, in detail, the configurations,

metrics, tools and test logic that were used to generate the

results presented in section IV, and analyzed in section V.

II. BACKGROUND

Verification and Validation (V&V) is the set of techniques

that are used to assess software products and services [3]. For

a given software, testing is defined as observing the execution of the software on a specific subset of all possible inputs and settings, and providing an evaluation of the output or behavior according to certain metrics.

Testing of web applications is a subset of V&V, for which

testing and quality assurance is especially challenging due

to the heterogeneity of the applications [4]. In their 2006

paper, Di Lucca and Fasolino [5] categorize the different types

of testing depending on their non-functional requirements.

According to them, the most important are performance, load,

stress, compatibility, accessibility, usability and security.

A. Specific WebRTC Testing tools

Many WebRTC testing tools are specific, in that they assume something about the media server (e.g. its signalling) or the use case (e.g. broadcasting) they test.

WebRTCBench [6] is an open-source benchmark suite in-

troduced by University of California, Irvine in 2015. This

framework aims at measuring performance of WebRTC peer-

to-peer (P2P) connection establishment and data channel /

video calls. It does not provide for the testing of media servers.

The Jitsi team have developed Jitsi-Hammer [7], an ad-hoc

traffic generator dedicated to testing the performance of their

open source Jitsi Videobridge [8].

The creators of Janus gateway [9] have developed an ad-

hoc tool

to assess their gateway performance in different

configurations. From this initial assessment, they proposed

Jattack [10], an automated stressing tool for the analysis


of performance and scalability of WebRTC-enabled servers.

In their paper, they claim that Jattack is generic and that it can

be used to assess the performance of WebRTC gateways other

than Janus. However, without the code being freely available,

this could not be verified.

Most of the tools coming from the VoIP world assume

SIP as the signalling protocol and will not work with other

signaling protocols (XMPP, MQTT, JSON/WebSockets).

WebRTC-test [11] is an open source framework for func-

tional and load testing of WebRTC on RestComm; a cloud

platform aimed at developing voice, video and text messaging

applications.

Finally, Red5 has re-purposed an open source RTMP load

test tool called “bees with machine guns” to support WebRTC

[12].

B. Generic WebRTC Testing

In the past few years, several research groups have ad-

dressed the specific problem of generic WebRTC testing. For

instance, having a testing framework or engine that would

be agnostic to the operating system, browser, application,

network, signaling, or media server used. Specifically, the

Kurento open source project (Kurento Testing Framework) and

the KITE project have generated quite a few articles on this

subject.

1) Kurento Testing Framework a.k.a. ElasTest:

In [13], the authors introduce the Kurento Testing Frame-

work (KTF), based on Selenium and WebDriver. They mix

load-testing, quality testing, performance testing, and func-

tional testing. They apply it on a streaming/broadcast use case

(one-to-many) on a relatively small scale: only the Kurento

Media Server (KMS) was used, with one server, one room

and 50 viewers.

In [14], the authors add limited network instrumentation

to KTF. They provide results on the same configuration as

above with only minor modifications (NUBOMEDIA is used

to install the KMS). They reach 200 viewers at the cost of

using native applications (fake clients that implement only the WebRTC parts responsible for negotiation and transport, not the media processing pipeline). Using fake clients generates different traffic and behavior, introducing a de facto bias in the results.

In [15], the authors add to KTF (renamed ElasTest) support

for testing mobile devices through Appium. It is not clear

whether they support mobile browsers and, if they do, which

browsers and on which OS, or mobile apps. They now recommend installing KMS through OpenVidu, and propose to extend the WebDriver protocol to add several APIs. While WebDriver protocol implementation modifications are easy on the Selenium side, on the browser side they would require the browser vendors to modify their (sometimes closed-source) WebDriver implementations, which has never happened in the past.

2) Karoshi Interoperability Test Engine: KITE:

The KITE project, created and managed by companies

actively involved in the WebRTC standard, has also been very

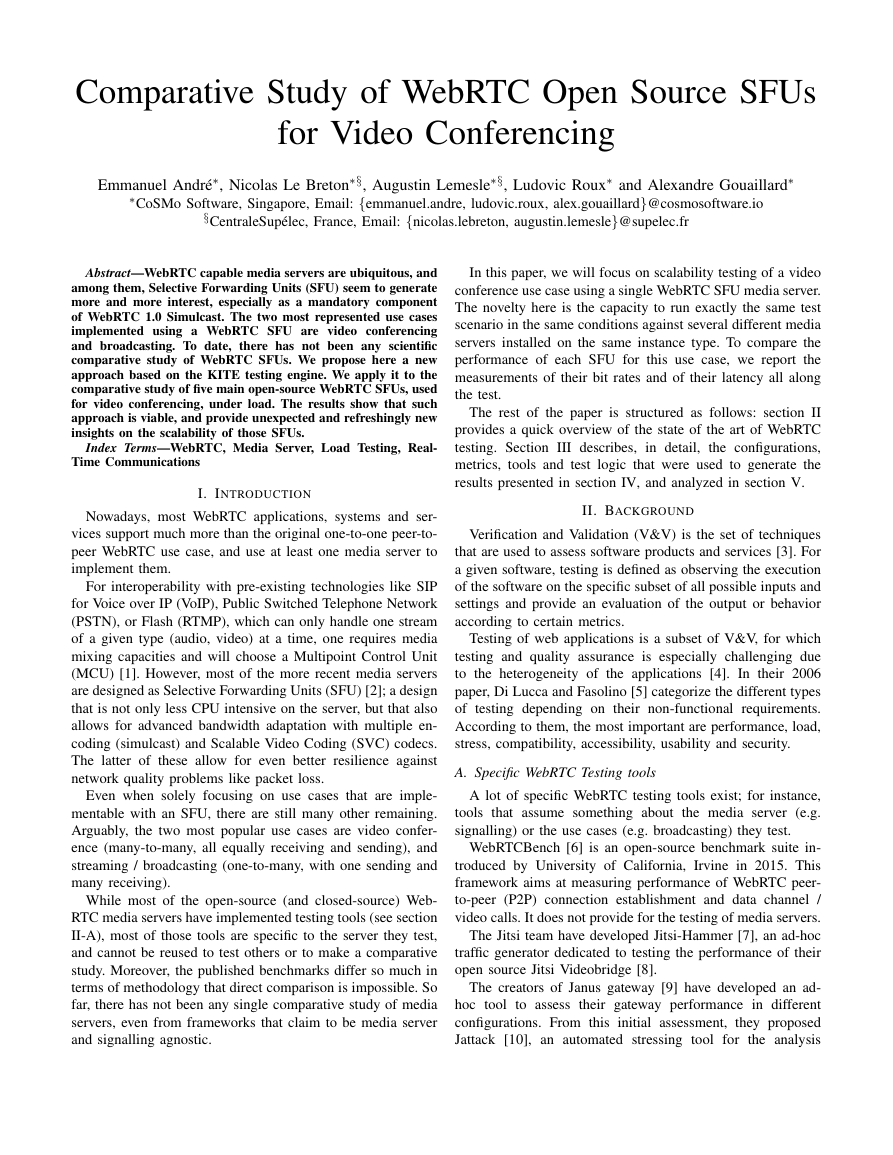

AWS instance type

Rationale

RAM

Dedicated bandwidth

Media Server VM

c4.4xlarge

Cost effective instance

with dedicated comput-

ing capacity

30 GB

2 Gbps

Client VM

c4.xlarge

Cost effective instance

with dedicated comput-

ing capacity (4 vCPU)

7.5 GB

750 Mbps

TABLE I: VMs for the media servers and the clients.

active in the WebRTC testing field with an original focus on

compliance testing for the WebRTC standardization process

at W3C [16]. KITE is running around a thousand tests across

21+ browsers / browser revisions / operating systems / OS

revisions on a daily basis. The results are reported on the

official webrtc.org page.1

In the process of WebRTC 1.0 specification compliance testing, simulcast testing required using an SFU to test against (see the specification,² chapter 5.1 for simulcast and RID, and section 11.4 for the corresponding example). Since there was no reference WebRTC SFU implementation, it was decided to run the simulcast tests in the Chrome browser against the most well-known of the open source SFUs.

3) Comparison and Choice:

KTF has been understandably focused on testing the KMS

from the start, and only ever exhibited results in their publi-

cations about testing KMS in the one-to-many use case.

KITE has been designed with scalability and flexibility in

mind. The work done in the context of WebRTC 1.0 simulcast

compliance testing paved the way for generic SFU testing

support.

We decided to extend that work to comparatively load

test most of the open-source WebRTC SFUs, in the video

conference use case, with a single server configuration.

III. SYSTEM UNDER TEST AND ENVIRONMENT

A. Cloud and Network Settings

All tests were done using Amazon Web Services (AWS)

Elastic Compute Cloud (EC2). Each SFU and each of its

connecting web client apps were run on separate Virtual

Machines (VMs) in the same AWS Virtual Private Cloud

(VPC) to avoid network fluctuations and interference. The

instance types for the VMs used are described in Table I.

B. WebRTC Open Source Media Servers

We set up the following five open-source WebRTC SFUs,

using the latest source code downloaded from their respective

public GitHub repositories (except for Kurento/OpenVidu, for

which the Docker container was used), in a separate AWS EC2

Virtual Machine and with default configuration:

• Jitsi Meet (JVB version 0.1.1077)³

• Janus Gateway (version 0.4.3)⁴ with plugin/client web app⁵

• Medooze (version 0.32.0)⁶ with plugin/client web app⁷

• Kurento/OpenVidu (from Docker container, Kurento Media Server version 6.7.0)⁸ with plugin/client web app⁹

• Mediasoup (version 2.2.3)¹⁰

¹ https://webrtc.org/testing/kite/

² https://www.w3.org/TR/webrtc/

³ https://github.com/jitsi/jitsi-meet

⁴ https://github.com/meetecho/janus-gateway

⁵ https://janus.conf.meetecho.com/videoroomtest.html

Fig. 1: Configuration of the tests.

Note that on Kurento, the min and max bit rates are hard-

coded at 600,000 bps in MediaEndpoint.java at line 276. The

same limit is 1,700,000 bps for Jitsi, Janus, Medooze and

Mediasoup as seen in Table IV column (D).

We did not modify the source code of the SFUs, so these

sending limits remained active for Kurento and Jitsi when

running the tests.

The five open-source WebRTC SFUs tested use the same

signalling transport protocol (WebSockets) and do not differ

enough in their signalling protocol to induce any measurable

impact in the chosen metrics. They all implement WebRTC,

which means they all proceed through discovery, handshake

and media transport establishment exactly the same standard

way, respectively using ICE [17], JSEP [18] and DTLS-SRTP

[19].

C. Web Client Applications

To test the five SFUs with the same parameters and to

collect useful information (getStats, see section III-E, and full-size screen captures), we made the following modifications to

the corresponding web client apps:

• increase the maximum number of participants per meet-

ing room to 40

• support for multiple meeting rooms, including roomId

and userId in the URL

• increase sending bit rate limit to 5,000,000 bps (for Janus

only, as it was configurable on the client web app)

• support for displaying up to 9 videos with the exact same

dimensions as the original test video (540×360 pixels)

6https://github.com/medooze/mp4v2.git

7https://github.com/medooze/sfu/tree/master/www

8https://openvidu.io/docs/deployment/deploying-ubuntu/

9https://github.com/OpenVidu/openvidu-tutorials/tree/master/openvidu-js-

node

10https://www.npmjs.com/package/mediasoup

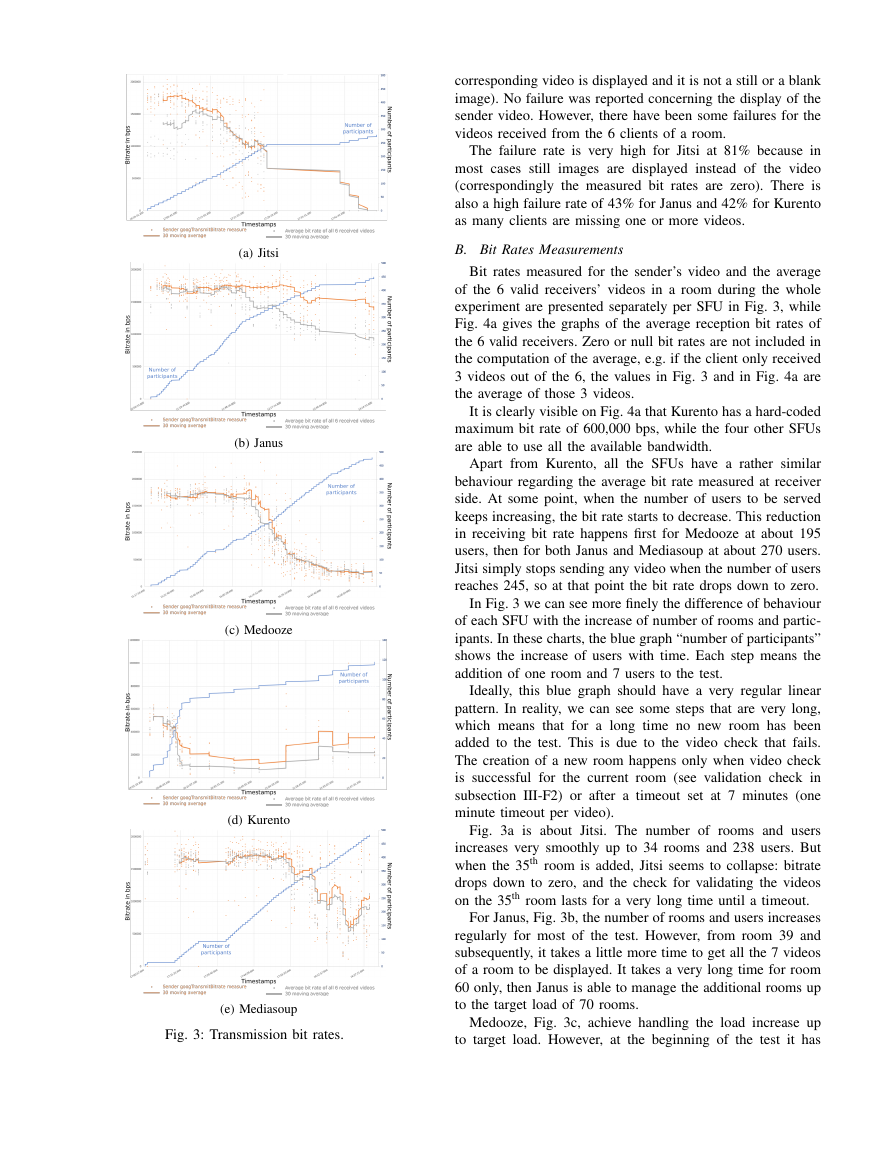

Fig. 2: Screenshot of Janus Video Room Test web app after

modifications.

• removal or re-positioning of all

the text and images

overlays added by the client web app so that they are

displayed above each video area

• expose the JavaScript RTCPeerConnection objects to call

getStats() on.

Each client web app is run on a dedicated VM, which

has been chosen to ensure there will be more than enough

resources to process the 7 videos (1 sent and 6 received).

A Selenium node, instrumenting Chrome 67, is running on

each client VM. KITE communicates with each node, through

a Selenium hub, using the WebDriver protocol (see Fig. 1).

Fig. 2 shows a screenshot of the modified Janus Video Room

web client application with the changes described above.

The sender video is displayed at the top left corner of the window. The videos received from each of the six remote clients are displayed as shown in Fig. 2. The original dimensions of the full image with the 7 videos are 1980×1280 pixels. The user id, dimensions and bit rates are displayed above each video, leaving it free of obstruction for quality analysis.

D. Controlled Media for Quality Assessment

As the clients are joining a video conference, they are

supposed to send video and audio. To control the media that

each client sends and in order to make quality measurements,

we use Chrome's fake media functionality. The sender and each client play the same video.¹¹ Chrome is launched with the following options to activate the fake media functionality:

• allow-file-access-from-files

• use-file-for-fake-video-capture=

e-dv548_lwe08_christa_casebeer_003.y4m

• use-file-for-fake-audio-capture=

e-dv548_lwe08_christa_casebeer_003.wav

• window-size=1980,1280

¹¹ Credits for the video file used: “Internet personality Christa Casebeer, aka Linuxchic on Alternageek.com, Take One 02,” by Christian Einfeldt. DigitalTippingPoint.com https://archive.org/details/e-dv548_lwe08_christa_casebeer_003.ogg

The original file, 540×360 pixels, encoded with H.264 Constrained Baseline Profile 30 fps for the video part, has been converted using ffmpeg to YUV4MPEG2 format keeping the same resolution of 540×360 pixels, colour space 4:2:0, 30 fps, progressive. Frame number has been added as an overlay to the top left of each frame, while time in seconds has been added at the bottom left. The original audio part, MPEG-AAC 44100 Hz 128 kbps, has been converted using ffmpeg to WAV 44100 Hz 1411 kbps.


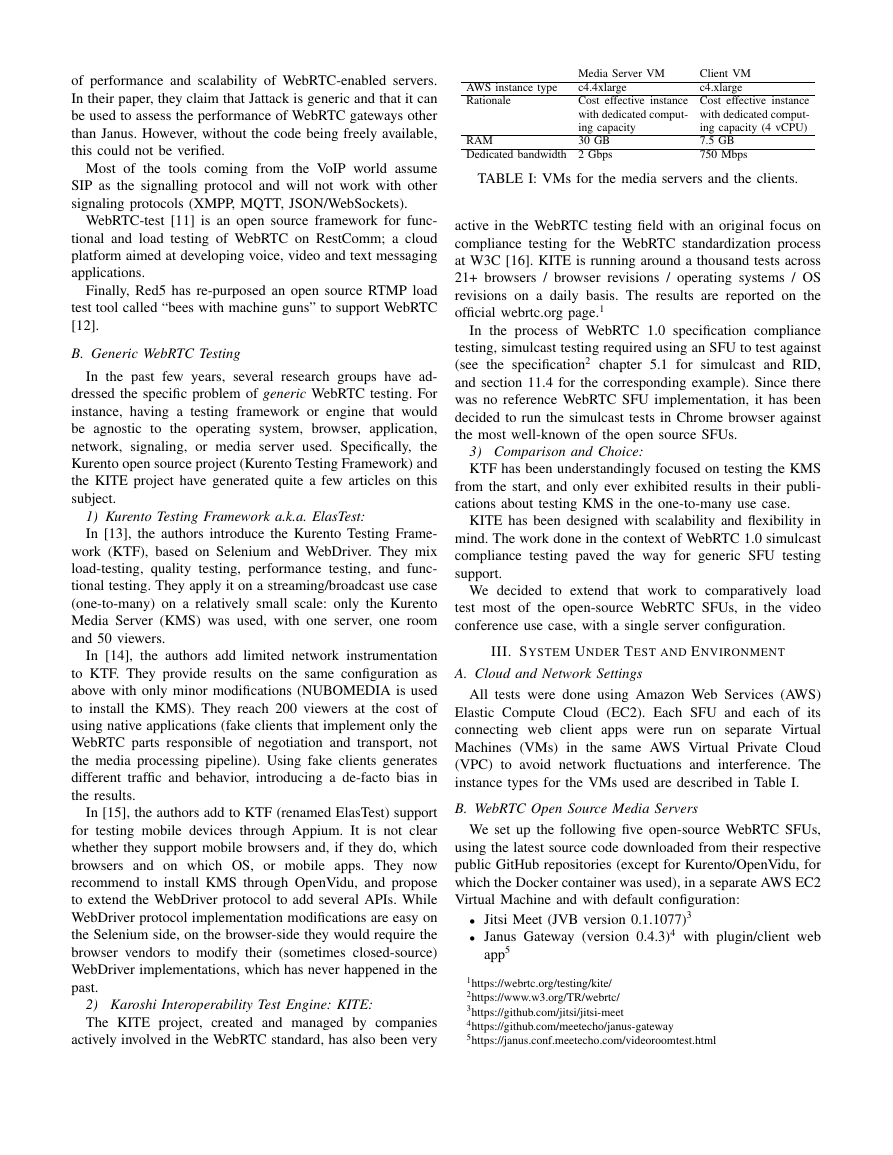

| SFU | Jitsi | Janus | Medooze | Kurento | Mediasoup |
|---|---|---|---|---|---|
| Number of rooms (7 participants in a room) | 40 | 70 | 70 | 20 | 70 |
| Number of client VMs | 280 | 490 | 490 | 140 | 490 |

TABLE II: Load test parameters per SFU.

The last option (window-size) was set to be large enough to

accommodate 7 videos on the screen with original dimensions

of 540×360 pixels.
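As an illustration of the Chrome options listed above, the following minimal Python sketch assembles them into command-line switches. The function name and its parameters are hypothetical and are not part of KITE. The paper lists the option names without the leading `--`; the sketch adds it, since Chrome switches are passed that way. Depending on the Chrome version, the switch `--use-fake-device-for-media-stream` may also be needed for the file-based capture to take effect; it is not listed in the paper, so it is left out here.

```python
def fake_media_chrome_args(video_file, audio_file, window_size=(1980, 1280)):
    """Build the Chrome switches described in the paper (hypothetical helper).

    video_file: path to the .y4m file used as fake camera input.
    audio_file: path to the .wav file used as fake microphone input.
    window_size: (width, height) large enough to show the 7 videos.
    """
    width, height = window_size
    return [
        "--allow-file-access-from-files",
        f"--use-file-for-fake-video-capture={video_file}",
        f"--use-file-for-fake-audio-capture={audio_file}",
        f"--window-size={width},{height}",
    ]


if __name__ == "__main__":
    for arg in fake_media_chrome_args(
        "e-dv548_lwe08_christa_casebeer_003.y4m",
        "e-dv548_lwe08_christa_casebeer_003.wav",
    ):
        print(arg)
```

With Selenium, each of these strings could be passed to `ChromeOptions.add_argument` before the session is created on the node.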

E. Metrics and Probing

1) Client-side: getStats() function:

The test calls getStats() to retrieve all of the statistics values provided by Chrome. The bit rates for the sent video, and for all received videos, are computed by KITE by calling getStats twice (during ramp-up) or 8 times (at load reached) and using the bytesReceived and timestamp values of the first and last getStats objects.
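The computation described above can be sketched as follows. This is a minimal Python illustration, not KITE's code: the snapshots are assumed to be plain dictionaries holding the `bytesReceived` and `timestamp` fields of two getStats results, with timestamps in milliseconds, which is consistent with the factor 8000 in the formula given with Table V.

```python
def average_bitrate_bps(first, last):
    """Average bit rate in bps between two getStats snapshots.

    Each snapshot is assumed to be a dict with:
      - "bytesReceived": cumulative byte counter
      - "timestamp": time of the snapshot in milliseconds
    """
    elapsed_ms = last["timestamp"] - first["timestamp"]
    if elapsed_ms <= 0:
        raise ValueError("snapshots must be ordered in time")
    delta_bytes = last["bytesReceived"] - first["bytesReceived"]
    # 8 bits per byte and 1000 ms per second give the factor 8000.
    return delta_bytes * 8000 / elapsed_ms


if __name__ == "__main__":
    t1 = {"timestamp": 1000.0, "bytesReceived": 0}
    t2 = {"timestamp": 2000.0, "bytesReceived": 125_000}
    print(average_bitrate_bps(t1, t2))
```

Using only the first and last snapshots gives the mean rate over the whole probing window, so short bursts between the two calls are averaged out.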

2) Client-side: Video Verification:

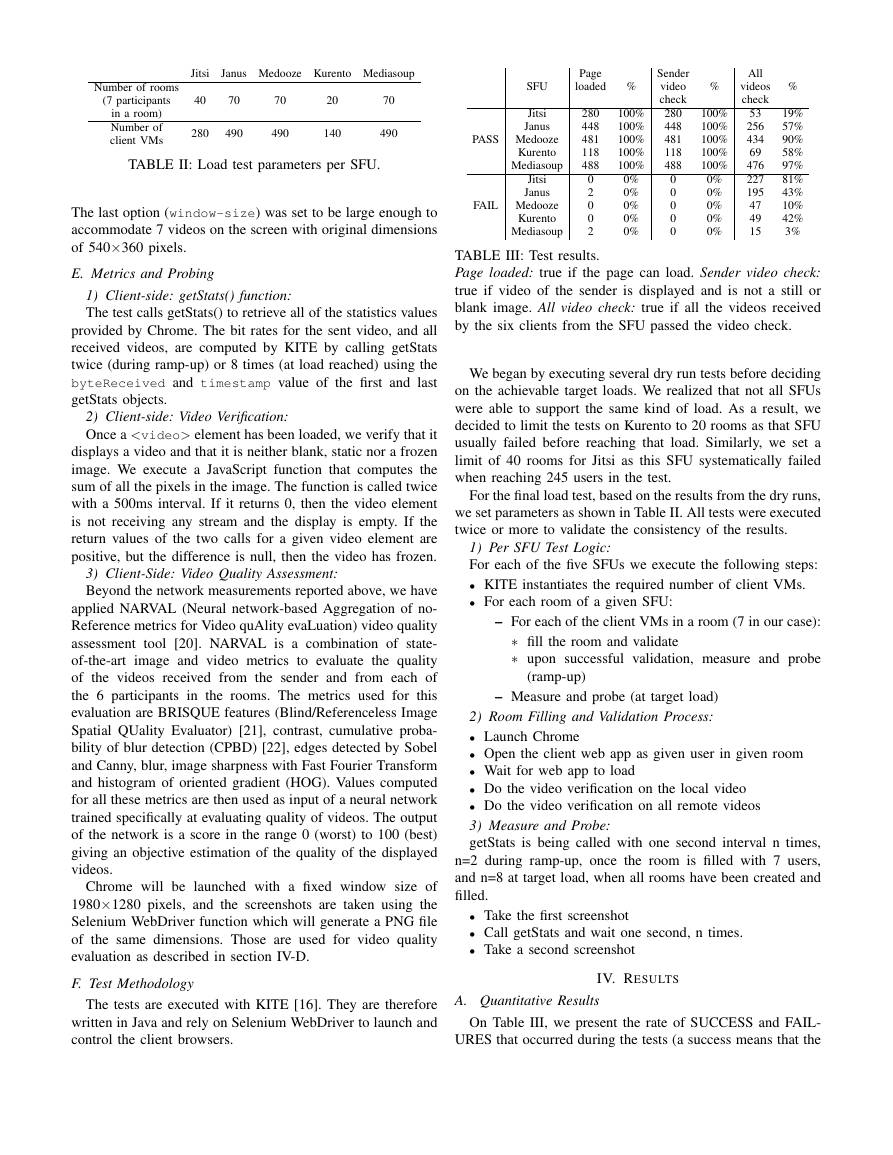

Once a

corresponding video is displayed and it is not a still or a blank

image). No failure was reported concerning the display of the

sender video. However, there have been some failures for the

videos received from the 6 clients of a room.

The failure rate is very high for Jitsi at 81% because in

most cases still images are displayed instead of the video

(correspondingly the measured bit rates are zero). There is

also a high failure rate of 43% for Janus and 42% for Kurento

as many clients are missing one or more videos.

(a) Jitsi

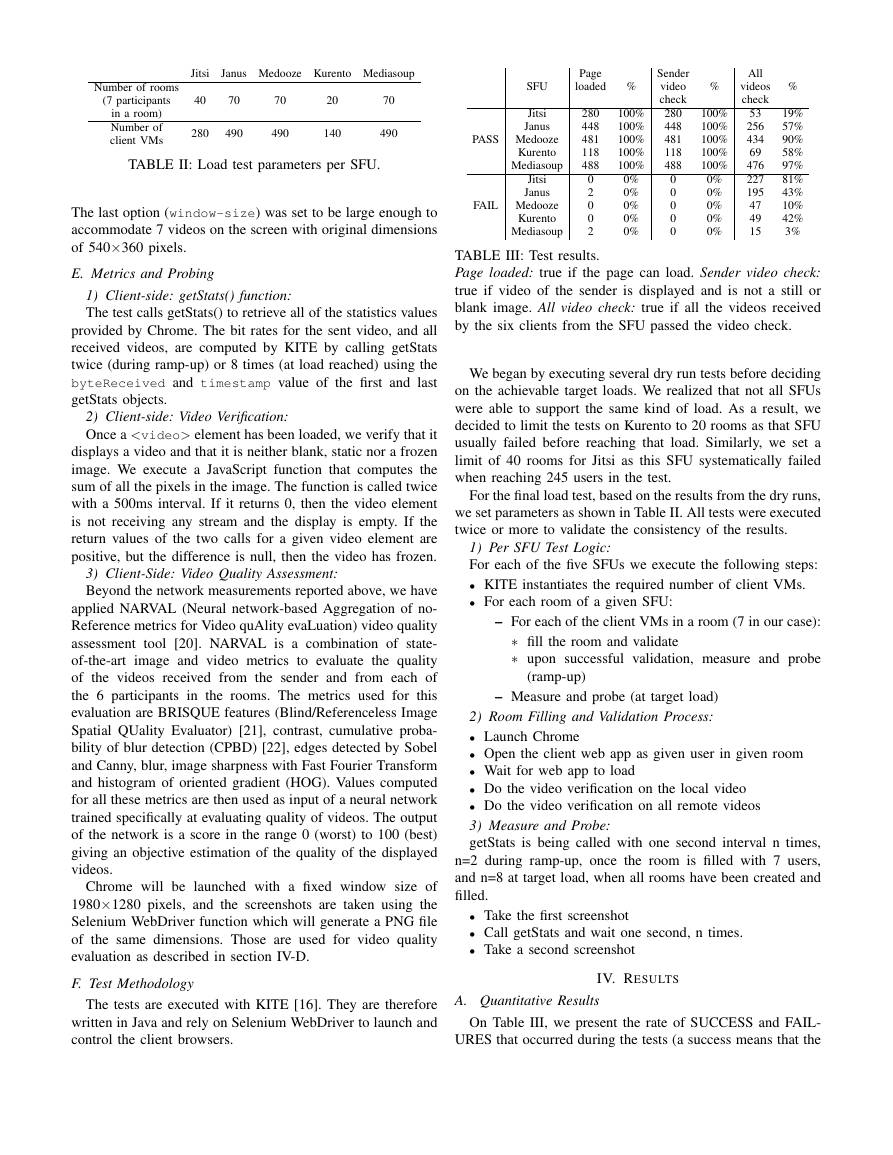

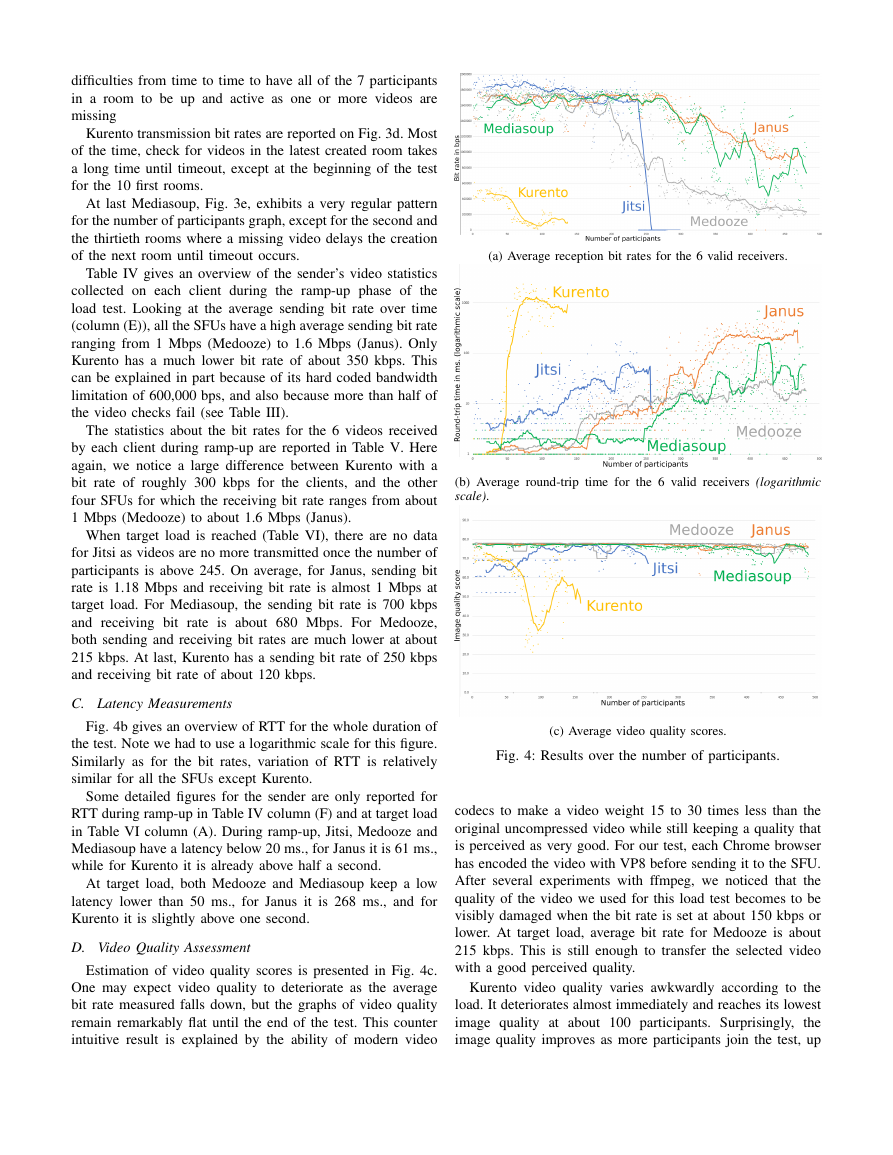

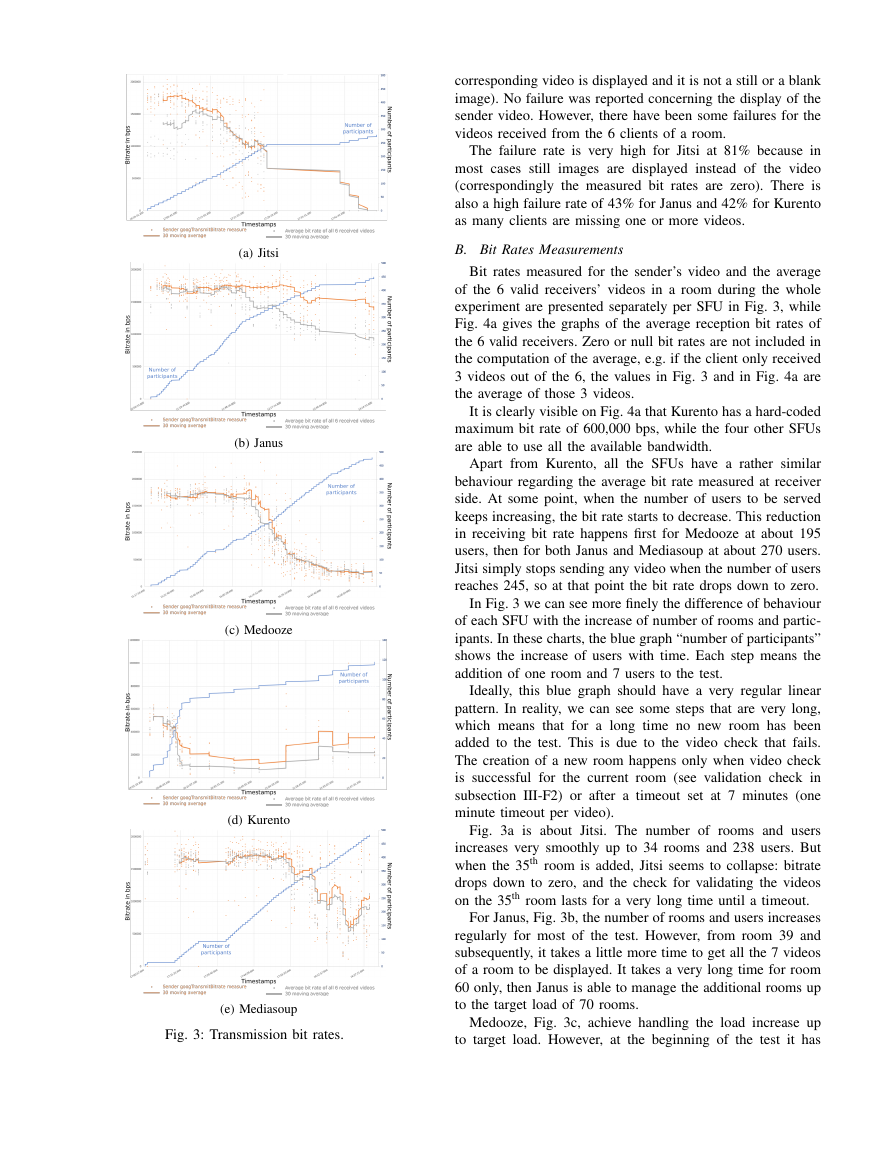

B. Bit Rates Measurements

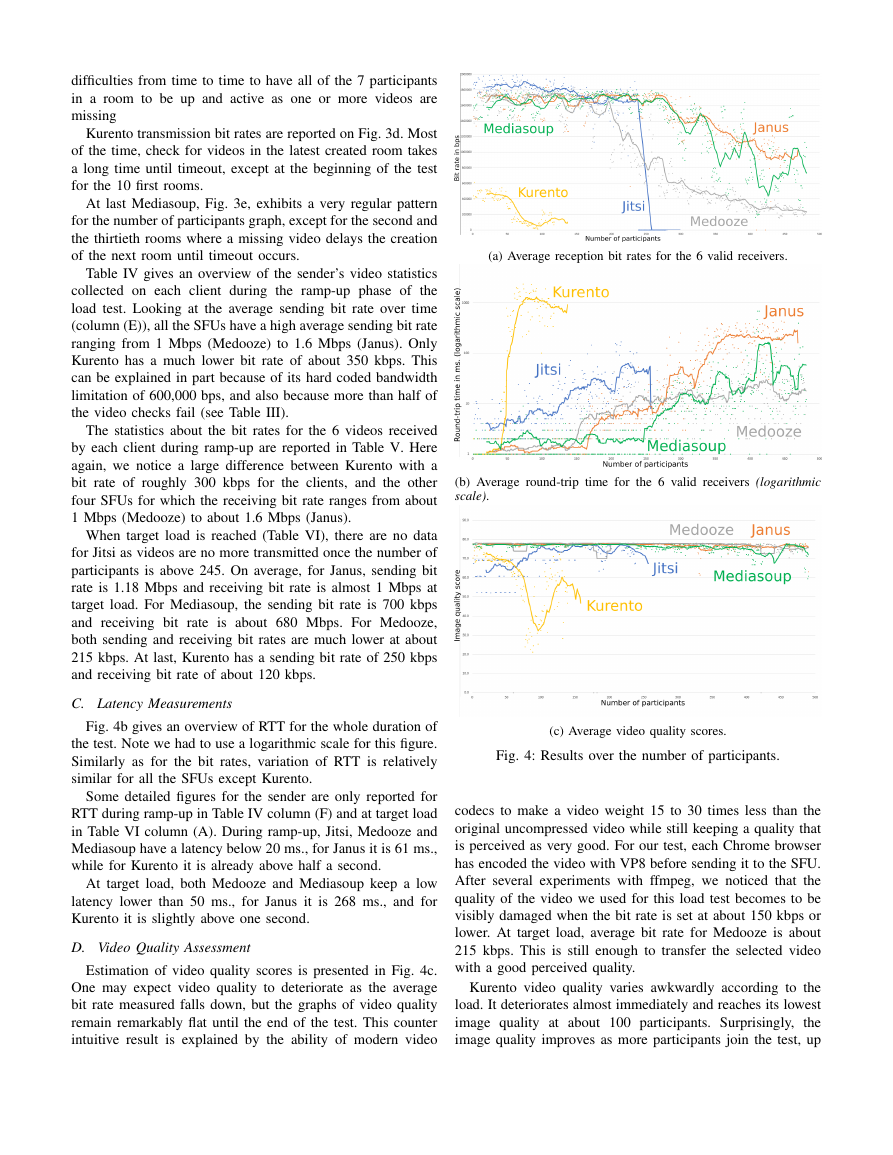

Bit rates measured for the sender’s video and the average

of the 6 valid receivers’ videos in a room during the whole

experiment are presented separately per SFU in Fig. 3, while

Fig. 4a gives the graphs of the average reception bit rates of

the 6 valid receivers. Zero or null bit rates are not included in

the computation of the average, e.g. if the client only received

3 videos out of the 6, the values in Fig. 3 and in Fig. 4a are

the average of those 3 videos.
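The averaging rule described above, where zero or null bit rates are left out, can be illustrated with a short Python sketch. The function name is hypothetical, and returning `None` when no video is valid is an assumption made for this example; the paper does not say how that case is reported.

```python
def average_valid_bitrate(bitrates):
    """Average of the received bit rates, ignoring zero or missing values.

    bitrates: iterable of per-video bit rates in bps; None marks a video
    for which no value was measured.
    Returns None when no video has a valid bit rate (assumption).
    """
    valid = [b for b in bitrates if b]  # drops both 0 and None
    if not valid:
        return None
    return sum(valid) / len(valid)


if __name__ == "__main__":
    # Only 3 of the 6 videos were received in this made-up example.
    print(average_valid_bitrate([1_200_000, 0, None, 900_000, 0, 1_500_000]))
```

One consequence of this rule is that the averages describe the videos that were actually delivered; missing videos show up in the video check failure rates rather than in the bit rate figures.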

It is clearly visible on Fig. 4a that Kurento has a hard-coded

maximum bit rate of 600,000 bps, while the four other SFUs

are able to use all the available bandwidth.

Apart from Kurento, all the SFUs have a rather similar

behaviour regarding the average bit rate measured at receiver

side. At some point, when the number of users to be served

keeps increasing, the bit rate starts to decrease. This reduction

in receiving bit rate happens first for Medooze at about 195

users, then for both Janus and Mediasoup at about 270 users.

Jitsi simply stops sending any video when the number of users

reaches 245, so at that point the bit rate drops down to zero.

In Fig. 3 we can see more finely the difference of behaviour

of each SFU with the increase of number of rooms and partic-

ipants. In these charts, the blue graph “number of participants”

shows the increase of users with time. Each step means the

addition of one room and 7 users to the test.

Ideally, this blue graph should have a very regular linear

pattern. In reality, we can see some steps that are very long,

which means that for a long time no new room has been

added to the test. This is due to the video check that fails.

The creation of a new room happens only when video check

is successful for the current room (see validation check in

subsection III-F2) or after a timeout set at 7 minutes (one

minute timeout per video).
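The ramp-up rule described above (add the next room once the video check succeeds, or after a timeout) can be sketched in Python as below. This is a simplified illustration and not KITE's implementation: `check_room_videos` is a hypothetical callback, and a single 7-minute timeout per room stands in for the one-minute-per-video timeouts mentioned in the text. The clock and sleep functions are injectable so the logic can be exercised without waiting.

```python
import time


def ramp_up(target_rooms, check_room_videos, timeout_s=7 * 60, poll_s=1.0,
            participants_per_room=7, clock=time.monotonic, sleep=time.sleep):
    """Add rooms one by one; move on when the check passes or times out.

    check_room_videos(room) is a hypothetical callback returning True once
    all videos of the given room are displayed.
    Returns a list of (room number, total participants, check passed).
    """
    timeline = []
    for room in range(1, target_rooms + 1):
        started = clock()
        passed = False
        while clock() - started < timeout_s:
            if check_room_videos(room):
                passed = True
                break
            sleep(poll_s)
        timeline.append((room, room * participants_per_room, passed))
    return timeline


if __name__ == "__main__":
    # Simulated clock: room 2 never passes, so it waits for the timeout.
    now = [0.0]
    result = ramp_up(3, lambda room: room != 2, poll_s=60,
                     clock=lambda: now[0],
                     sleep=lambda s: now.__setitem__(0, now[0] + s))
    print(result, now[0])
```

In this model a failing room produces exactly the long flat step visible in the "number of participants" graphs, since no participants are added until the timeout expires.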

Fig. 3a is about Jitsi. The number of rooms and users

increases very smoothly up to 34 rooms and 238 users. But

when the 35th room is added, Jitsi seems to collapse: bitrate

drops down to zero, and the check for validating the videos

on the 35th room lasts for a very long time until a timeout.

For Janus, Fig. 3b, the number of rooms and users increases

regularly for most of the test. However, from room 39 and

subsequently, it takes a little more time to get all the 7 videos

of a room to be displayed. It takes a very long time for room

60 only, then Janus is able to manage the additional rooms up

to the target load of 70 rooms.

Medooze (Fig. 3c) manages to handle the load increase up to the target load. However, at the beginning of the test it has difficulties from time to time getting all 7 participants in a room up and active, as one or more videos are missing.

(b) Janus, (c) Medooze, (d) Kurento, (e) Mediasoup

Fig. 3: Transmission bit rates.

Kurento transmission bit rates are reported in Fig. 3d. Except for the first 10 rooms at the beginning of the test, the video check for the most recently created room usually runs until the timeout.

Finally, Mediasoup (Fig. 3e) exhibits a very regular pattern for the number of participants graph, except for the second and the thirtieth rooms, where a missing video delays the creation of the next room until the timeout occurs.

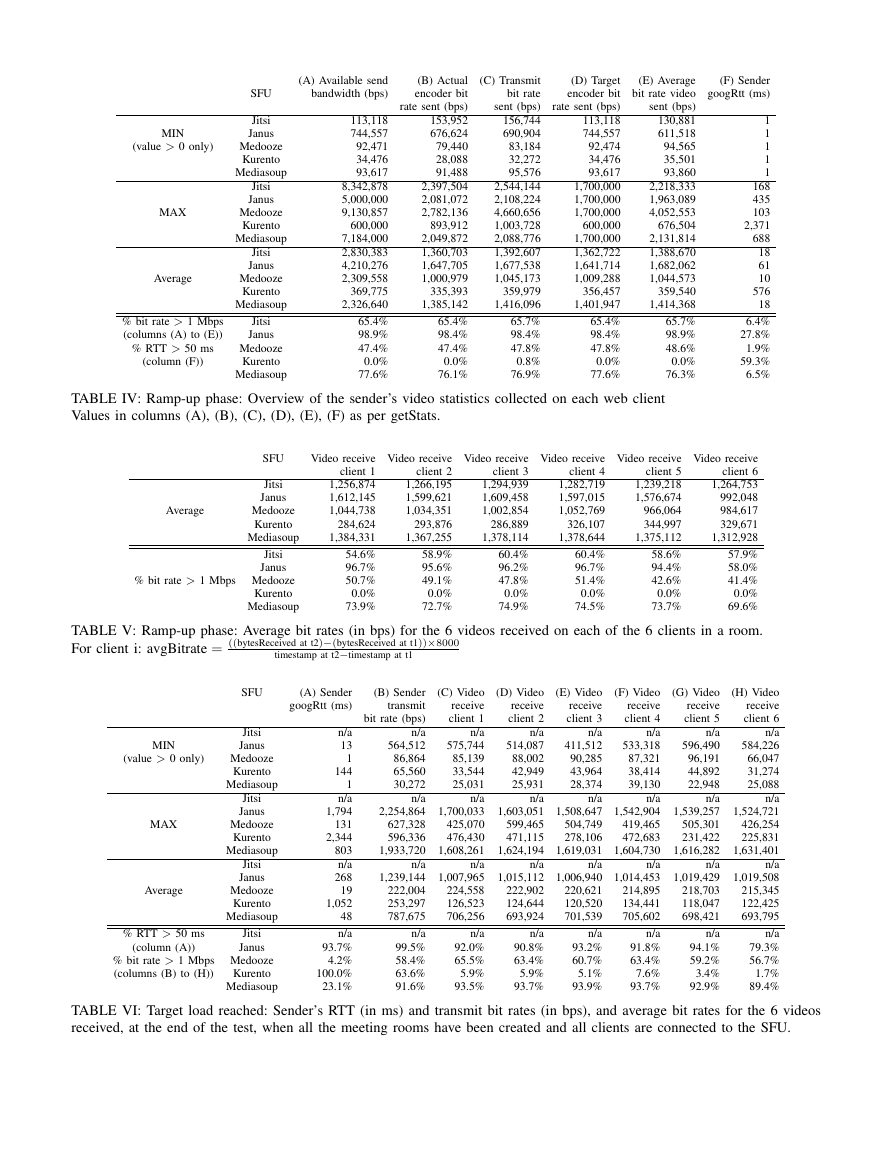

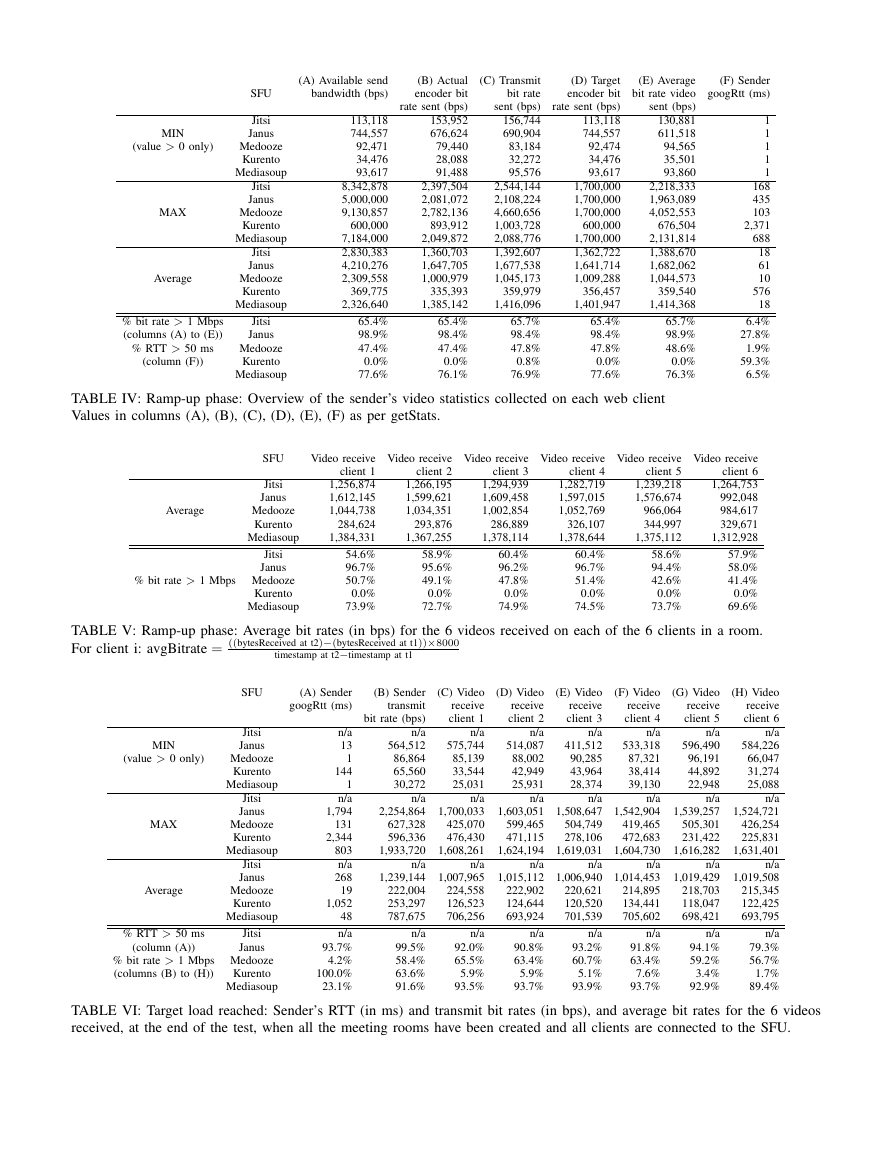

Table IV gives an overview of the sender’s video statistics

collected on each client during the ramp-up phase of the

load test. Looking at the average sending bit rate over time

(column (E)), all the SFUs have a high average sending bit rate

ranging from 1 Mbps (Medooze) to 1.6 Mbps (Janus). Only

Kurento has a much lower bit rate of about 350 kbps. This

can be explained in part because of its hard coded bandwidth

limitation of 600,000 bps, and also because more than half of

the video checks fail (see Table III).

The statistics about the bit rates for the 6 videos received

by each client during ramp-up are reported in Table V. Here

again, we notice a large difference between Kurento with a

bit rate of roughly 300 kbps for the clients, and the other

four SFUs for which the receiving bit rate ranges from about

1 Mbps (Medooze) to about 1.6 Mbps (Janus).

When target load is reached (Table VI), there are no data for Jitsi as videos are no longer transmitted once the number of participants is above 245. On average, for Janus, the sending bit rate is 1.18 Mbps and the receiving bit rate is almost 1 Mbps at target load. For Mediasoup, the sending bit rate is 700 kbps and the receiving bit rate is about 680 kbps. For Medooze, both sending and receiving bit rates are much lower at about 215 kbps. Finally, Kurento has a sending bit rate of 250 kbps and a receiving bit rate of about 120 kbps.

C. Latency Measurements

Fig. 4b gives an overview of RTT for the whole duration of the test. Note that we had to use a logarithmic scale for this figure. As with the bit rates, the variation of RTT is relatively similar for all the SFUs except Kurento.

Detailed RTT figures are reported for the sender only, during ramp-up in Table IV column (F) and at target load in Table VI column (A). During ramp-up, Jitsi, Medooze and Mediasoup have a latency below 20 ms; for Janus it is 61 ms, while for Kurento it is already above half a second.

At target load, both Medooze and Mediasoup keep a low latency, below 50 ms; for Janus it is 268 ms, and for Kurento it is slightly above one second.
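Tables IV to VI also report the share of measurements above a threshold ("% RTT > 50 ms", "% bit rate > 1 Mbps"). A minimal Python sketch of such a metric is given below. The function name is hypothetical, and the choice to skip missing samples is an assumption, since the paper does not detail which samples enter the percentage.

```python
def percent_above(samples, threshold):
    """Percentage of samples strictly above the threshold.

    samples: iterable of numeric measurements (e.g. RTT in ms, or bit
    rate in bps); None entries are skipped (assumption).
    Returns None when there is no usable sample.
    """
    values = [s for s in samples if s is not None]
    if not values:
        return None
    above = sum(1 for v in values if v > threshold)
    return 100.0 * above / len(values)


if __name__ == "__main__":
    rtt_ms = [10, 20, 61, 268]  # made-up RTT samples
    print(percent_above(rtt_ms, 50))
```

A threshold share complements the average: two SFUs with the same mean RTT can differ widely in how often they exceed 50 ms.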

D. Video Quality Assessment

Estimation of video quality scores is presented in Fig. 4c.

One may expect video quality to deteriorate as the average bit rate measured falls, but the graphs of video quality remain remarkably flat until the end of the test. This counterintuitive result is explained by the ability of modern video codecs to make a video weigh 15 to 30 times less than the original uncompressed video while still keeping a quality that is perceived as very good. For our test, each Chrome browser has encoded the video with VP8 before sending it to the SFU. After several experiments with ffmpeg, we noticed that the quality of the video we used for this load test becomes visibly degraded when the bit rate is set at about 150 kbps or lower. At target load, the average bit rate for Medooze is about 215 kbps. This is still enough to transfer the selected video with a good perceived quality.

(a) Average reception bit rates for the 6 valid receivers.

(b) Average round-trip time for the 6 valid receivers (logarithmic scale).

(c) Average video quality scores.

Fig. 4: Results over the number of participants.

Kurento video quality varies erratically with the load. It deteriorates almost immediately and reaches its lowest image quality at about 100 participants. Surprisingly, the image quality improves as more participants join the test, up to about 130 participants, before dropping again.

V. ANALYSIS

This study exhibits interesting behaviours of the five SFUs

that have been evaluated when they have to handle an increas-

ing number of rooms and peers in a video conference use

case.

Kurento is plagued by both a hard coded maximum band-

width and a high RTT problem. When the number of partici-

pants is above 42, RTT rises sharply to reach and stay at about

one second. The bit rate, already very low at the start of the

test because of the hard coded limitation, falls quickly above

49 participants to a very low value before improving a little

above 100 participants. Interestingly, video quality, which had been worsening since the beginning of the test, starts to get better

at about 100 participants just when the bit rates improve. But

this improvement lasts only until 130 participants have joined

the test.

Jitsi has some internal problem that makes it suddenly stop

transmitting videos when there are more than 245 peers in the

test.

The three other SFUs tested behave in a roughly similar way. The bit rate is maintained at the same level while the number of peers increases, then at some point the bit rate starts to decrease. It happens first to Medooze at about 190 peers. Janus and Mediasoup are able to keep the maximum level of bit rate for a higher load, as the decrease of bit rate starts above 280 peers. We also note that the decrease of bit rate is sharper for Medooze.

VI. CONCLUSION AND FUTURE WORK

We have shown that it is now possible to comparatively test

WebRTC SFUs using KITE. Several bugs and oddities have

been found and reported to their respective team in the process.

This work was focused on the testing system, and not on

the tests themselves. In the future we would like to add more

metrics and tests on client side, for example to assess audio

quality as well, and to run the tests on all supported browsers

to check how browser specific WebRTC implementations make

a difference. On the server side, we would like to add CPU,

RAM and bandwidth estimation probes to assess the server

scalability on load.

We would like to extend this work to variations of the video

conferencing use cases by making the number of rooms and

the number of users per room a variable of the test run. That would allow us to reproduce the results of [7] and [10].

We would like to extend this work to different use cases, for example broadcasting / streaming. That would allow us, among other things, to reproduce the results from the Kurento Research Team.

ACKNOWLEDGMENT

We would like to thank Boris Grozev (Jitsi), Lorenzo Miniero (Janus), Iñaki Baz Castillo (Mediasoup), Sergio Murillo (Medooze), Lennart Schulte (callstats.io), Lynsey Haynes (Slack) and other media server experts who provided live feedback on early results during CommCon UK 2018. We would also like to thank Boni García for discussions around the Kurento Team Testing research results.

REFERENCES

[1] M. Westerlund and S. Wenger, RFC 7667: RTP Topologies, IETF, Nov.

2015. [Online]. Available: https://datatracker.ietf.org/doc/rfc7667/

[2] B. Grozev, L. Marinov, L. Marinov, and E. Ivov, “Last N: relevance-

based selectivity for forwarding video in multimedia conferences,” in

Proceedings of the 25th ACM Workshop on Network and Operating

Systems Support for Digital Audio and Video, 2015.

[3] A. Bertolino, “Software testing research: Achievements, challenges,

dreams,” in Future of Software Engineering, 2007.

[4] Y.-F. Li, P. K. Das, and D. L. Dowe, “Two decades of web application

testing — a survey of recent advances,” Information Systems, 2014.

[5] G. A. Di Lucca and A. R. Fasolino, “Testing web-based applications:

The state of the art and future trends,” Information and Software

Technology, 2006.

[6] S. Taheri, L. A. Beni, A. V. Veidenbaum, A. Nicolau, R. Cammarota,

J. Qiu, Q. Lu, and M. R. Haghighat, “WebRTCBench: A benchmark for

performance assessment of WebRTC implementations,” in 13th IEEE

Symposium on Embedded Systems for Real-time Multimedia, 2015.

[7] Jitsi-Hammer, a traffic generator for Jitsi Videobridge. [Online]. Available: https://github.com/jitsi/jitsi-hammer

[8] B. Grozev and E. Ivov. Jitsi videobridge performance evaluation. [Online]. Available: https://jitsi.org/jitsi-videobridge-performance-evaluation/

[9] A. Amirante, T. Castaldi, L. Miniero, and S. P. Romano, “Performance

analysis of the Janus WebRTC gateway,” in EuroSys 2015, Workshop on

All-Web Real-Time Systems, 2015.

[10] ——, “Jattack: a WebRTC load testing tool,” in IPTComm 2016,

Principles, Systems and Applications of IP Telecommunications, 2016.

[11] WebRTC-test, framework for functional and load testing of WebRTC.

[Online]. Available: https://github.com/RestComm/WebRTC-test

[12] Red5Pro load testing: WebRTC and more. [Online]. Available: https://blog.red5pro.com/load-testing-with-webrtc-and-more/

[13] B. García, L. Lopez-Fernandez, F. Gortázar, and M. Gallego, “Analysis of video quality and end-to-end latency in WebRTC,” in IEEE Globecom 2016, Workshop on Quality of Experience for Multimedia Communications, 2016.

[14] B. García, L. Lopez-Fernandez, F. Gortázar, M. Gallego, and M. Paris, “WebRTC testing: Challenges and practical solutions,” IEEE Communications Standards Magazine, 2017.

[15] B. García, F. Gortázar, M. Gallego, and E. Jiménez, “User impersonation as a service in end-to-end testing,” in MODELSWARD 2018, 6th International Conference on Model-Driven Engineering and Software Development, 2018.

[16] A. Gouaillard and L. Roux, “Real-time communication testing evolu-

tion with WebRTC 1.0,” in IPTComm 2017, Principles, Systems and

Applications of IP Telecommunications, 2017.

[17] A. Keranen, C. Holmberg, and J. Rosenberg, RFC 8445: Interactive Connectivity Establishment (ICE): A Protocol for Network Address Translator (NAT) Traversal, IETF, July 2018. [Online]. Available: https://datatracker.ietf.org/doc/rfc8445/

[18] J. Uberti, C. Jennings, and E. Rescorla, JavaScript Session Establishment Protocol [draft IETF], IETF, Oct. 2017. [Online]. Available: https://tools.ietf.org/html/draft-ietf-rtcweb-jsep-24/

[19] D. McGrew and E. Rescorla, RFC 5764: Datagram Transport Layer

Security (DTLS) Extension to Establish Keys for the Secure Real-time

Transport Protocol (SRTP), IETF, May 2010. [Online]. Available:

https://datatracker.ietf.org/doc/rfc5764/

[20] A. Lemesle, A. Marion, L. Roux, and A. Gouaillard, “NARVAL, a no-

reference video quality tool for real-time communications,” in Proc. of

Human Vision and Electronic Imaging, Jan. 2019, [submitted].

[21] A. Mittal, A. K. Moorthy, and A. C. Bovik, “No-reference image

quality assessment in the spatial domain,” IEEE Transactions on Image

Processing, 2012.

[22] N. D. Narvekar and L. J. Karam, “A no-reference image blur metric

based on the cumulative probability of blur detection (CPBD),” IEEE

Transactions on Image Processing, 2011.


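| Statistic | SFU | (A) Available send bandwidth (bps) | (B) Actual encoder bit rate sent (bps) | (C) Transmit bit rate sent (bps) | (D) Target encoder bit rate sent (bps) | (E) Average bit rate video sent (bps) | (F) Sender googRtt (ms) |
|---|---|---|---|---|---|---|---|
| MIN (value > 0 only) | Jitsi | 113,118 | 153,952 | 156,744 | 113,118 | 130,881 | 1 |
| MIN (value > 0 only) | Janus | 744,557 | 676,624 | 690,904 | 744,557 | 611,518 | 1 |
| MIN (value > 0 only) | Medooze | 92,471 | 79,440 | 83,184 | 92,474 | 94,565 | 1 |
| MIN (value > 0 only) | Kurento | 34,476 | 28,088 | 32,272 | 34,476 | 35,501 | 1 |
| MIN (value > 0 only) | Mediasoup | 93,617 | 91,488 | 95,576 | 93,617 | 93,860 | 1 |
| MAX | Jitsi | 8,342,878 | 2,397,504 | 2,544,144 | 1,700,000 | 2,218,333 | 168 |
| MAX | Janus | 5,000,000 | 2,081,072 | 2,108,224 | 1,700,000 | 1,963,089 | 435 |
| MAX | Medooze | 9,130,857 | 2,782,136 | 4,660,656 | 1,700,000 | 4,052,553 | 103 |
| MAX | Kurento | 600,000 | 893,912 | 1,003,728 | 600,000 | 676,504 | 2,371 |
| MAX | Mediasoup | 7,184,000 | 2,049,872 | 2,088,776 | 1,700,000 | 2,131,814 | 688 |
| Average | Jitsi | 2,830,383 | 1,360,703 | 1,392,607 | 1,362,722 | 1,388,670 | 18 |
| Average | Janus | 4,210,276 | 1,647,705 | 1,677,538 | 1,641,714 | 1,682,062 | 61 |
| Average | Medooze | 2,309,558 | 1,000,979 | 1,045,173 | 1,009,288 | 1,044,573 | 10 |
| Average | Kurento | 369,775 | 335,393 | 359,979 | 356,457 | 359,540 | 576 |
| Average | Mediasoup | 2,326,640 | 1,385,142 | 1,416,096 | 1,401,947 | 1,414,368 | 18 |
| % bit rate > 1 Mbps (columns (A) to (E)); % RTT > 50 ms (column (F)) | Jitsi | 65.4% | 65.4% | 65.7% | 65.4% | 65.7% | 6.4% |
| % bit rate > 1 Mbps (columns (A) to (E)); % RTT > 50 ms (column (F)) | Janus | 98.9% | 98.4% | 98.4% | 98.4% | 98.9% | 27.8% |
| % bit rate > 1 Mbps (columns (A) to (E)); % RTT > 50 ms (column (F)) | Medooze | 47.4% | 47.4% | 47.8% | 47.8% | 48.6% | 1.9% |
| % bit rate > 1 Mbps (columns (A) to (E)); % RTT > 50 ms (column (F)) | Kurento | 0.0% | 0.0% | 0.8% | 0.0% | 0.0% | 59.3% |
| % bit rate > 1 Mbps (columns (A) to (E)); % RTT > 50 ms (column (F)) | Mediasoup | 77.6% | 76.1% | 76.9% | 77.6% | 76.3% | 6.5% |

TABLE IV: Ramp-up phase: Overview of the sender’s video statistics collected on each web client. Values in columns (A), (B), (C), (D), (E), (F) as per getStats.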
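The four row groups of Table IV (MIN over values > 0 only, MAX, Average, and the share of samples above a threshold) can be sketched as follows. This is a minimal illustration rather than the authors' tooling: the function name `summarize`, the flat list of samples, and the choice to average over all samples (including zeros) are assumptions made for the example.

```python
from statistics import mean


def summarize(samples, threshold):
    """Summarize one metric for one SFU (hypothetical helper).

    samples:   numeric values of a single getStats field, one per poll
    threshold: 1_000_000 for bit rates (bps) or 50 for RTT (ms)
    """
    # MIN ignores zero readings, mirroring the "(value > 0 only)" row label.
    positive = [s for s in samples if s > 0]
    return {
        "min": min(positive) if positive else None,
        "max": max(samples),
        # Assumption: the average is taken over all samples, zeros included.
        "average": mean(samples),
        # Percentage of samples strictly above the threshold.
        "pct_above": 100.0 * sum(s > threshold for s in samples) / len(samples),
    }


if __name__ == "__main__":
    bitrates = [0, 500_000, 1_500_000, 2_000_000]
    print(summarize(bitrates, threshold=1_000_000))
```

The same helper would apply to column (F) by passing RTT samples in milliseconds with `threshold=50`.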

| Statistic | SFU | Video receive client 1 | Video receive client 2 | Video receive client 3 | Video receive client 4 | Video receive client 5 | Video receive client 6 |
|---|---|---|---|---|---|---|---|
| Average | Jitsi | 1,256,874 | 1,266,195 | 1,294,939 | 1,282,719 | 1,239,218 | 1,264,753 |
| Average | Janus | 1,612,145 | 1,599,621 | 1,609,458 | 1,597,015 | 1,576,674 | 992,048 |
| Average | Medooze | 1,044,738 | 1,034,351 | 1,002,854 | 1,052,769 | 966,064 | 984,617 |
| Average | Kurento | 284,624 | 293,876 | 286,889 | 326,107 | 344,997 | 329,671 |
| Average | Mediasoup | 1,384,331 | 1,367,255 | 1,378,114 | 1,378,644 | 1,375,112 | 1,312,928 |
| % bit rate > 1 Mbps | Jitsi | 54.6% | 58.9% | 60.4% | 60.4% | 58.6% | 57.9% |
| % bit rate > 1 Mbps | Janus | 96.7% | 95.6% | 96.2% | 96.7% | 94.4% | 58.0% |
| % bit rate > 1 Mbps | Medooze | 50.7% | 49.1% | 47.8% | 51.4% | 42.6% | 41.4% |
| % bit rate > 1 Mbps | Kurento | 0.0% | 0.0% | 0.0% | 0.0% | 0.0% | 0.0% |
| % bit rate > 1 Mbps | Mediasoup | 73.9% | 72.7% | 74.9% | 74.5% | 73.7% | 69.6% |

TABLE V: Ramp-up phase: Average bit rates (in bps) for the 6 videos received on each of the 6 clients in a room.

For client i: $\mathrm{avgBitrate} = \dfrac{\big((\text{bytesReceived at } t_2) - (\text{bytesReceived at } t_1)\big) \times 8000}{(\text{timestamp at } t_2) - (\text{timestamp at } t_1)}$
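The avgBitrate formula given with Table V can be sketched in a few lines. The factor 8000 combines 8 bits per byte with 1000 ms per second, which assumes the getStats timestamps are expressed in milliseconds. The function name and argument names below are illustrative, not taken from the paper.

```python
def avg_bitrate_bps(bytes_t1, ts_t1_ms, bytes_t2, ts_t2_ms):
    """Average receive bit rate (bps) between two getStats samples.

    bytes_t1, bytes_t2: cumulative bytesReceived at times t1 and t2
    ts_t1_ms, ts_t2_ms: sample timestamps, assumed to be in milliseconds
    """
    elapsed_ms = ts_t2_ms - ts_t1_ms
    if elapsed_ms <= 0:
        raise ValueError("t2 must be later than t1")
    # bytes * 8 gives bits; dividing by ms and multiplying by 1000 gives per-second.
    return (bytes_t2 - bytes_t1) * 8000 / elapsed_ms


if __name__ == "__main__":
    # 125,000 bytes received over one second is 1 Mbps.
    print(avg_bitrate_bps(0, 0, 125_000, 1_000))
```

Because bytesReceived is cumulative, only the difference between the two samples matters, so the polling interval does not need to be constant.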

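| Statistic | SFU | (A) Sender googRtt (ms) | (B) Sender transmit bit rate (bps) | (C) Video receive client 1 | (D) Video receive client 2 | (E) Video receive client 3 | (F) Video receive client 4 | (G) Video receive client 5 | (H) Video receive client 6 |
|---|---|---|---|---|---|---|---|---|---|
| MIN (value > 0 only) | Jitsi | n/a | n/a | n/a | n/a | n/a | n/a | n/a | n/a |
| MIN (value > 0 only) | Janus | 13 | 564,512 | 575,744 | 514,087 | 411,512 | 533,318 | 596,490 | 584,226 |
| MIN (value > 0 only) | Medooze | 1 | 86,864 | 85,139 | 88,002 | 90,285 | 87,321 | 96,191 | 66,047 |
| MIN (value > 0 only) | Kurento | 144 | 65,560 | 33,544 | 42,949 | 43,964 | 38,414 | 44,892 | 31,274 |
| MIN (value > 0 only) | Mediasoup | 1 | 30,272 | 25,031 | 25,931 | 28,374 | 39,130 | 22,948 | 25,088 |
| MAX | Jitsi | n/a | n/a | n/a | n/a | n/a | n/a | n/a | n/a |
| MAX | Janus | 1,794 | 2,254,864 | 1,700,033 | 1,603,051 | 1,508,647 | 1,542,904 | 1,539,257 | 1,524,721 |
| MAX | Medooze | 131 | 627,328 | 425,070 | 599,465 | 504,749 | 419,465 | 505,301 | 426,254 |
| MAX | Kurento | 2,344 | 596,336 | 476,430 | 471,115 | 278,106 | 472,683 | 231,422 | 225,831 |
| MAX | Mediasoup | 803 | 1,933,720 | 1,608,261 | 1,624,194 | 1,619,031 | 1,604,730 | 1,616,282 | 1,631,401 |
| Average | Jitsi | n/a | n/a | n/a | n/a | n/a | n/a | n/a | n/a |
| Average | Janus | 268 | 1,239,144 | 1,007,965 | 1,015,112 | 1,006,940 | 1,014,453 | 1,019,429 | 1,019,508 |
| Average | Medooze | 19 | 222,004 | 224,558 | 222,902 | 220,621 | 214,895 | 218,703 | 215,345 |
| Average | Kurento | 1,052 | 253,297 | 126,523 | 124,644 | 120,520 | 134,441 | 118,047 | 122,425 |
| Average | Mediasoup | 48 | 787,675 | 706,256 | 693,924 | 701,539 | 705,602 | 698,421 | 693,795 |
| % RTT > 50 ms (column (A)); % bit rate > 1 Mbps (columns (B) to (H)) | Jitsi | n/a | n/a | n/a | n/a | n/a | n/a | n/a | n/a |
| % RTT > 50 ms (column (A)); % bit rate > 1 Mbps (columns (B) to (H)) | Janus | 93.7% | 99.5% | 92.0% | 90.8% | 93.2% | 91.8% | 94.1% | 79.3% |
| % RTT > 50 ms (column (A)); % bit rate > 1 Mbps (columns (B) to (H)) | Medooze | 4.2% | 58.4% | 65.5% | 63.4% | 60.7% | 63.4% | 59.2% | 56.7% |
| % RTT > 50 ms (column (A)); % bit rate > 1 Mbps (columns (B) to (H)) | Kurento | 100.0% | 63.6% | 5.9% | 5.9% | 5.1% | 7.6% | 3.4% | 1.7% |
| % RTT > 50 ms (column (A)); % bit rate > 1 Mbps (columns (B) to (H)) | Mediasoup | 23.1% | 91.6% | 93.5% | 93.7% | 93.9% | 93.7% | 92.9% | 89.4% |

TABLE VI: Target load reached: Sender’s RTT (in ms) and transmit bit rates (in bps), and average bit rates for the 6 videos received, at the end of the test, when all the meeting rooms have been created and all clients are connected to the SFU.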
