Introduction to Probability

2nd Edition

Problem Solutions

(last updated: 7/31/08)

c Dimitri P. Bertsekas and John N. Tsitsiklis

Massachusetts Institute of Technology

WWW site for book information and orders

http://www.athenasc.com

Athena Scientific, Belmont, Massachusetts

1

�

C H A P T E R 1

Solution to Problem 1.1. We have

A = {2, 4, 6},

B = {4, 5, 6},

so A ∪ B = {2, 4, 5, 6}, and

On the other hand,

(A ∪ B)c = {1, 3}.

Ac ∩ Bc = {1, 3, 5} ∩ {1, 2, 3} = {1, 3}.

Similarly, we have A ∩ B = {4, 6}, and

(A ∩ B)c = {1, 2, 3, 5}.

On the other hand,

Ac ∪ Bc = {1, 3, 5} ∪ {1, 2, 3} = {1, 2, 3, 5}.

Solution to Problem 1.2.

sets S and T , we have

(a) By using a Venn diagram it can be seen that for any

S = (S ∩ T ) ∪ (S ∩ T c).

(Alternatively, argue that any x must belong to either T or to T c, so x belongs to S

if and only if it belongs to S ∩ T or to S ∩ T c.) Apply this equality with S = Ac and

T = B, to obtain the first relation

Ac = (Ac ∩ B) ∪ (Ac ∩ Bc).

Interchange the roles of A and B to obtain the second relation.

(b) By De Morgan’s law, we have

(A ∩ B)c = Ac ∪ Bc,

and by using the equalities of part (a), we obtain

(A∩B)c =(Ac∩B)∪(Ac∩Bc)∪(A∩Bc)∪(Ac∩Bc) = (Ac∩B)∪(Ac∩Bc)∪(A∩Bc).

(c) We have A = {1, 3, 5} and B = {1, 2, 3}, so A ∩ B = {1, 3}. Therefore,

(A ∩ B)c = {2, 4, 5, 6},

2

�

and

Ac ∩ B = {2},

Ac ∩ Bc = {4, 6},

A ∩ Bc = {5}.

Thus, the equality of part (b) is verified.

Solution to Problem 1.5. Let G and C be the events that the chosen student is

a genius and a chocolate lover, respectively. We have P(G) = 0.6, P(C) = 0.7, and

P(G∩ C) = 0.4. We are interested in P(Gc ∩ C c), which is obtained with the following

calculation:

P(Gc∩C c) = 1−P(G∪C) = 1−P(G)+P(C)−P(G∩C) = 1−(0.6+0.7−0.4) = 0.1.

Solution to Problem 1.6. We first determine the probabilities of the six possible

outcomes. Let a = P({1}) = P({3}) = P({5}) and b = P({2}) = P({4}) = P({6}).

We are given that b = 2a. By the additivity and normalization axioms, 1 = 3a + 3b =

3a + 6a = 9a. Thus, a = 1/9, b = 2/9, and P({1, 2, 3}) = 4/9.

Solution to Problem 1.7. The outcome of this experiment can be any finite sequence

of the form (a1, a2, . . . , an), where n is an arbitrary positive integer, a1, a2, . . . , an−1

belong to {1, 3}, and an belongs to {2, 4}. In addition, there are possible outcomes

in which an even number is never obtained. Such outcomes are infinite sequences

(a1, a2, . . .), with each element in the sequence belonging to {1, 3}. The sample space

consists of all possible outcomes of the above two types.

Solution to Problem 1.8. Let pi be the probability of winning against the opponent

played in the ith turn. Then, you will win the tournament if you win against the 2nd

player (probability p2) and also you win against at least one of the two other players

[probability p1 + (1 − p1)p3 = p1 + p3 − p1p3]. Thus, the probability of winning the

tournament is

p2(p1 + p3 − p1p3).

The order (1, 2, 3) is optimal if and only if the above probability is no less than the

probabilities corresponding to the two alternative orders, i.e.,

p2(p1 + p3 − p1p3) ≥ p1(p2 + p3 − p2p3),

p2(p1 + p3 − p1p3) ≥ p3(p2 + p1 − p2p1).

It can be seen that the first inequality above is equivalent to p2 ≥ p1, while the second

inequality above is equivalent to p2 ≥ p3.

Solution to Problem 1.9.

(a) Since Ω = ∪n

i=1Si, we have

n[

A =

(A ∩ Si),

i=1

while the sets A ∩ Si are disjoint. The result follows by using the additivity axiom.

(b) The events B ∩ C c, Bc ∩ C, B ∩ C, and Bc ∩ C c form a partition of Ω, so by part

(a), we have

P(A) = P(A ∩ B ∩ C c) + P(A ∩ Bc ∩ C) + P(A ∩ B ∩ C) + P(A ∩ Bc ∩ C c).

(1)

3

�

The event A ∩ B can be written as the union of two disjoint events as follows:

A ∩ B = (A ∩ B ∩ C) ∪ (A ∩ B ∩ C c),

so that

Similarly,

P(A ∩ B) = P(A ∩ B ∩ C) + P(A ∩ B ∩ C c).

P(A ∩ C) = P(A ∩ B ∩ C) + P(A ∩ Bc ∩ C).

(2)

(3)

Combining Eqs. (1)-(3), we obtain the desired result.

Solution to Problem 1.10. Since the events A ∩ Bc and Ac ∩ B are disjoint, we

have using the additivity axiom repeatedly,

P(A∩Bc)∪(Ac∩B) = P(A∩Bc)+P(Ac∩B) = P(A)−P(A∩B)+P(B)−P(A∩B).

Solution to Problem 1.14.

(a) Each possible outcome has probability 1/36. There

are 6 possible outcomes that are doubles, so the probability of doubles is 6/36 = 1/6.

(b) The conditioning event (sum is 4 or less) consists of the 6 outcomes

(1, 1), (1, 2), (1, 3), (2, 1), (2, 2), (3, 1),

2 of which are doubles, so the conditional probability of doubles is 2/6 = 1/3.

(c) There are 11 possible outcomes with at least one 6, namely, (6, 6), (6, i), and (i, 6),

for i = 1, 2, . . . , 5. Thus, the probability that at least one die is a 6 is 11/36.

(d) There are 30 possible outcomes where the dice land on different numbers. Out of

these, there are 10 outcomes in which at least one of the rolls is a 6. Thus, the desired

conditional probability is 10/30 = 1/3.

Solution to Problem 1.15. Let A be the event that the first toss is a head and

let B be the event that the second toss is a head. We must compare the conditional

probabilities P(A ∩ B | A) and P(A ∩ B | A ∪ B). We have

P(A ∩ B | A) =

and

P(A ∩ B | A ∪ B) =

P(A ∩ B) ∩ A

P(A ∩ B) ∩ (A ∪ B)

P(A)

=

P(A ∪ B)

P(A ∩ B)

P(A)

,

P(A ∩ B)

P(A ∪ B)

.

=

Since P(A ∪ B) ≥ P(A), the first conditional probability above is at least as large, so

Alice is right, regardless of whether the coin is fair or not. In the case where the coin

is fair, that is, if all four outcomes HH, HT , T H, T T are equally likely, we have

P(A ∩ B)

P(A)

=

1/4

1/2

=

1

2

,

P(A ∩ B)

P(A ∪ B)

=

1/4

3/4

=

1

3

.

A generalization of Alice’s reasoning is that if A, B, and C are events such that

B ⊂ C and A ∩ B = A ∩ C (for example, if A ⊂ B ⊂ C), then the event A is at least

4

�

as likely if we know that B has occurred than if we know that C has occurred. Alice’s

reasoning corresponds to the special case where C = A ∪ B.

Solution to Problem 1.16.

In this problem, there is a tendency to reason that since

the opposite face is either heads or tails, the desired probability is 1/2. This is, however,

wrong, because given that heads came up, it is more likely that the two-headed coin

was chosen. The correct reasoning is to calculate the conditional probability

p = P(two-headed coin was chosen| heads came up)

=

P(two-headed coin was chosen and heads came up)

P(heads came up)

.

We have

P(two-headed coin was chosen and heads came up) =

1

3

,

P(heads came up) =

1

2

,

so by taking the ratio of the above two probabilities, we obtain p = 2/3. Thus, the

probability that the opposite face is tails is 1 − p = 1/3.

Solution to Problem 1.17. Let A be the event that the batch will be accepted.

Then A = A1 ∩ A2 ∩ A3 ∩ A4, where Ai, i = 1, . . . , 4, is the event that the ith item is

not defective. Using the multiplication rule, we have

P(A) = P(A1)P(A2 | A1)P(A3 | A1∩A2)P(A4 | A1∩A2∩A3) =

95

100

· 94

99

· 93

98

· 92

97

= 0.812.

Solution to Problem 1.18. Using the definition of conditional probabilities, we

have

P(A ∩ B ∩ B)

P(A ∩ B)

P(B)

=

P(B)

P(A ∩ B | B) =

= P(A| B).

Solution to Problem 1.19. Let A be the event that Alice does not find her paper

in drawer i. Since the paper is in drawer i with probability pi, and her search is

successful with probability di, the multiplication rule yields P(Ac) = pidi, so that

P(A) = 1 − pidi. Let B be the event that the paper is in drawer j. If j 6= i, then

A ∩ B = B, P(A ∩ B) = P(B), and we have

P(A ∩ B)

pj

P(B | A) =

=

P(B)

P(A)

=

1 − pidi

.

P(A)

Similarly, if i = j, we have

P(B | A) =

P(A ∩ B)

P(A)

=

P(B)P(A| B)

P(A)

pi(1 − di)

1 − pidi

.

=

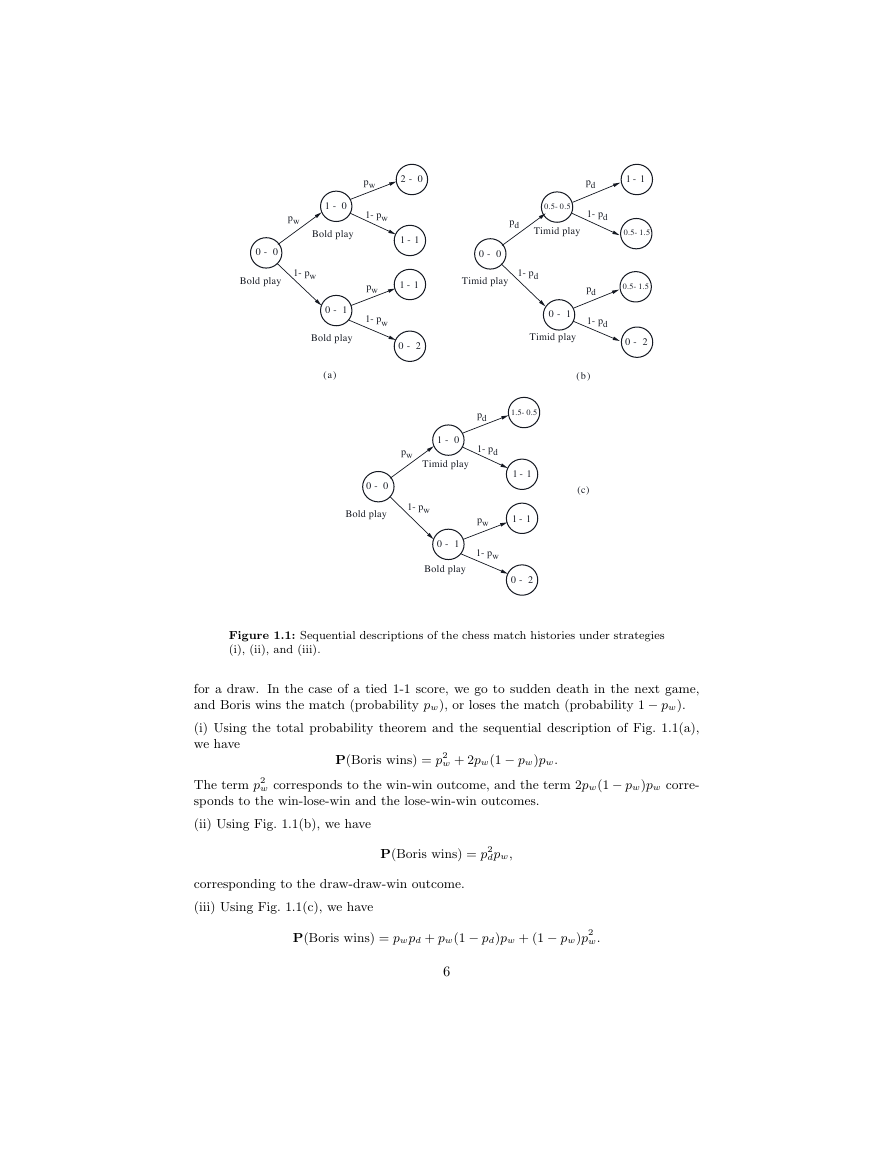

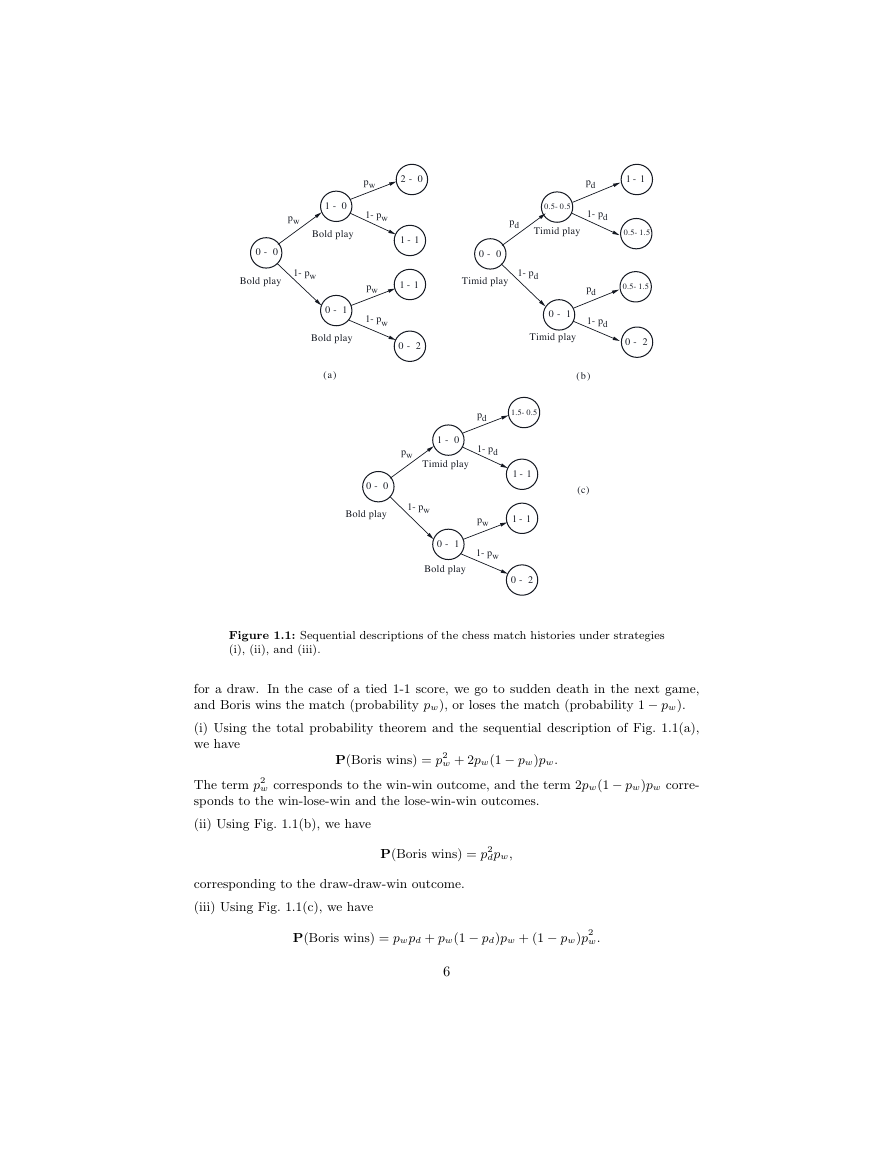

(a) Figure 1.1 provides a sequential description for the

Solution to Problem 1.20.

three different strategies. Here we assume 1 point for a win, 0 for a loss, and 1/2 point

5

�

Figure 1.1: Sequential descriptions of the chess match histories under strategies

(i), (ii), and (iii).

for a draw. In the case of a tied 1-1 score, we go to sudden death in the next game,

and Boris wins the match (probability pw), or loses the match (probability 1 − pw).

(i) Using the total probability theorem and the sequential description of Fig. 1.1(a),

we have

P(Boris wins) = p2

w + 2pw(1 − pw)pw.

w corresponds to the win-win outcome, and the term 2pw(1 − pw)pw corre-

The term p2

sponds to the win-lose-win and the lose-win-win outcomes.

(ii) Using Fig. 1.1(b), we have

P(Boris wins) = p2

dpw,

corresponding to the draw-draw-win outcome.

(iii) Using Fig. 1.1(c), we have

P(Boris wins) = pwpd + pw(1 − pd)pw + (1 − pw)p2

w.

6

0 - 0Timid playpwBold play(a)(b)(c)Bold playBold playBold playBold playTimid playTimid playTimid play0 - 01 - 02 - 01 - 11 - 10 - 10 - 20 - 20 - 11 - 10.5-0.50.5-1.50.5-1.50 - 01 - 01 - 11 - 10 - 10 - 21.5-0.5pwpwpwpwpdpdpd1-pd1-pd1-pdpd1-pd1-pw1-pw1-pw1-pw1-pw�

The term pwpd corresponds to the win-draw outcome, the term pw(1 − pd)pw corre-

sponds to the win-lose-win outcome, and the term (1 − pw)p2

w corresponds to lose-win-

win outcome.

(b) If pw < 1/2, Boris has a greater probability of losing rather than winning any one

game, regardless of the type of play he uses. Despite this, the probability of winning

the match with strategy (iii) can be greater than 1/2, provided that pw is close enough

to 1/2 and pd is close enough to 1. As an example, if pw = 0.45 and pd = 0.9, with

strategy (iii) we have

P(Boris wins) = 0.45 · 0.9 + 0.452 · (1 − 0.9) + (1 − 0.45) · 0.452 ≈ 0.54.

With strategies (i) and (ii), the corresponding probabilities of a win can be calculated

to be approximately 0.43 and 0.36, respectively. What is happening here is that with

strategy (iii), Boris is allowed to select a playing style after seeing the result of the first

game, while his opponent is not. Thus, by being able to dictate the playing style in

each game after receiving partial information about the match’s outcome, Boris gains

an advantage.

Solution to Problem 1.21. Let p(m, k) be the probability that the starting player

wins when the jar initially contains m white and k black balls. We have, using the

total probability theorem,

p(m, k) =

m

m + k

+

k

m + k

1 − p(m, k − 1) = 1 − k

p(m, k − 1).

m + k

The probabilities p(m, 1), p(m, 2), . . . , p(m, n) can be calculated sequentially using this

formula, starting with the initial condition p(m, 0) = 1.

Solution to Problem 1.22. We derive a recursion for the probability pi that a white

ball is chosen from the ith jar. We have, using the total probability theorem,

pi+1 =

m + 1

m + n + 1

pi +

m

m + n + 1

(1 − pi) =

1

m + n + 1

pi +

m

m + n + 1

,

starting with the initial condition p1 = m/(m + n). Thus, we have

p2 =

1

m + n + 1

· m

m + n

+

m

m + n + 1

=

m

m + n

.

More generally, this calculation shows that if pi−1 = m/(m + n), then pi = m/(m + n).

Thus, we obtain pi = m/(m + n) for all i.

Solution to Problem 1.23. Let pi,n−i(k) denote the probability that after k ex-

changes, a jar will contain i balls that started in that jar and n− i balls that started in

the other jar. We want to find pn,0(4). We argue recursively, using the total probability

7

�

theorem. We have

1

n

· 1

n

pn,0(4) =

· pn−1,1(3),

pn−1,1(3) = pn,0(2) + 2 · n − 1

n

· pn−1,1(1),

pn,0(2) =

1

n

· 1

n

pn−1,1(2) = 2 · n − 1

n

n − 1

· 1

n

· n − 1

· pn−1,1(1),

· pn−1,1(1),

n

n

· pn−1,1(2) +

· 1

n

2

n

· 2

n

· pn−2,2(2),

pn−2,2(2) =

pn−1,1(1) = 1.

1

Combining these equations, we obtain

4(n − 1)2

pn,0(4) =

1

n2

n2 +

n4

1

n2 +

=

1

n2

.

8(n − 1)2

n4

4(n − 1)2

n4

+

Solution to Problem 1.24. Intuitively, there is something wrong with this rationale.

The reason is that it is not based on a correctly specified probabilistic model.

In

particular, the event where both of the other prisoners are to be released is not properly

accounted in the calculation of the posterior probability of release.

To be precise, let A, B, and C be the prisoners, and let A be the one who considers

asking the guard. Suppose that all prisoners are a priori equally likely to be released.

Suppose also that if B and C are to be released, then the guard chooses B or C with

equal probability to reveal to A. Then, there are four possible outcomes:

(1) A and B are to be released, and the guard says B (probability 1/3).

(2) A and C are to be released, and the guard says C (probability 1/3).

(3) B and C are to be released, and the guard says B (probability 1/6).

(4) B and C are to be released, and the guard says C (probability 1/6).

Thus,

P(A is to be released| guard says B) =

P(A is to be released and guard says B)

P(guard says B)

=

1/3

1/3 + 1/6

=

2

3

.

Similarly,

P(A is to be released| guard says C) =

2

3

.

Thus, regardless of the identity revealed by the guard, the probability that A is released

is equal to 2/3, the a priori probability of being released.

Solution to Problem 1.25. Let m and m be the larger and the smaller of the two

amounts, respectively. Consider the three events

A = {X < m),

B = {m < X < m),

C = {m < X).

8

�

2023年江西萍乡中考道德与法治真题及答案.doc

2023年江西萍乡中考道德与法治真题及答案.doc 2012年重庆南川中考生物真题及答案.doc

2012年重庆南川中考生物真题及答案.doc 2013年江西师范大学地理学综合及文艺理论基础考研真题.doc

2013年江西师范大学地理学综合及文艺理论基础考研真题.doc 2020年四川甘孜小升初语文真题及答案I卷.doc

2020年四川甘孜小升初语文真题及答案I卷.doc 2020年注册岩土工程师专业基础考试真题及答案.doc

2020年注册岩土工程师专业基础考试真题及答案.doc 2023-2024学年福建省厦门市九年级上学期数学月考试题及答案.doc

2023-2024学年福建省厦门市九年级上学期数学月考试题及答案.doc 2021-2022学年辽宁省沈阳市大东区九年级上学期语文期末试题及答案.doc

2021-2022学年辽宁省沈阳市大东区九年级上学期语文期末试题及答案.doc 2022-2023学年北京东城区初三第一学期物理期末试卷及答案.doc

2022-2023学年北京东城区初三第一学期物理期末试卷及答案.doc 2018上半年江西教师资格初中地理学科知识与教学能力真题及答案.doc

2018上半年江西教师资格初中地理学科知识与教学能力真题及答案.doc 2012年河北国家公务员申论考试真题及答案-省级.doc

2012年河北国家公务员申论考试真题及答案-省级.doc 2020-2021学年江苏省扬州市江都区邵樊片九年级上学期数学第一次质量检测试题及答案.doc

2020-2021学年江苏省扬州市江都区邵樊片九年级上学期数学第一次质量检测试题及答案.doc 2022下半年黑龙江教师资格证中学综合素质真题及答案.doc

2022下半年黑龙江教师资格证中学综合素质真题及答案.doc