MARKOV DECISION PROCESSES WITH THEIR APPLICATIONS

Advances in Mechanics and Mathematics

VOLUME 14

Series Editors:

David Y. Gao

Virginia Polytechnic Institute and State University, U.S.A.

Ray W. Ogden

University of Glasgow, U.K.

Advisory Editors:

I. Ekeland

University of British Columbia, Canada

S. Liao

Shanghai Jiao Tong University, P.R. China

K.R. Rajagopal

Texas A&M University, U.S.A.

T. Ratiu

Ecole Polytechnique, Switzerland

W. Yang

Tsinghua University, P.R. China

MARKOV DECISION PROCESSES WITH THEIR APPLICATIONS

By

Prof. Ph.D. Qiying Hu

Fudan University, China

Prof. Ph.D. Wuyi Yue

Konan University, Japan


Library of Congress Control Number: 2006930245

ISBN-13: 978-0-387-36950-1

e-ISBN-13: 978-0-387-36951-8

Printed on acid-free paper.

AMS Subject Classifications: 90C40, 90C39, 93C65, 91B26, 90B25

© 2008 Springer Science+Business Media, LLC

All rights reserved. This work may not be translated or copied in whole or in part without the written permission of the publisher (Springer Science+Business Media, LLC, 233 Spring Street, New York, NY 10013, USA), except for brief excerpts in connection with reviews or scholarly analysis. Use in connection with any form of information storage and retrieval, electronic adaptation, computer software, or by similar or dissimilar methodology now known or hereafter developed is forbidden.

The use in this publication of trade names, trademarks, service marks, and similar terms, even if they are not identified as such, is not to be taken as an expression of opinion as to whether or not they are subject to proprietary rights.


springer.com


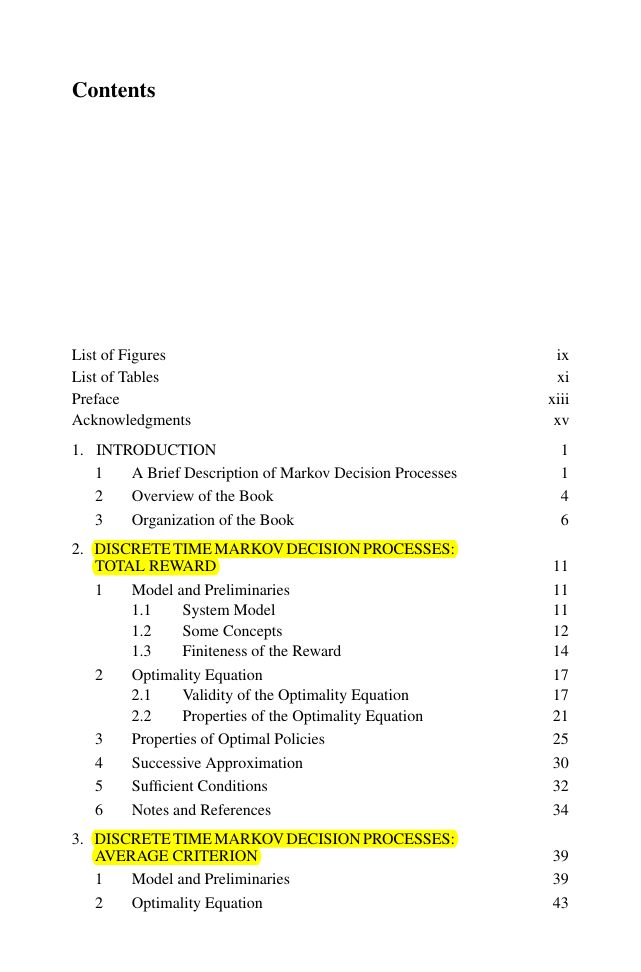

Contents

List of Figures
List of Tables
Preface
Acknowledgments

1. INTRODUCTION
1 A Brief Description of Markov Decision Processes
2 Overview of the Book
3 Organization of the Book

2. DISCRETE TIME MARKOV DECISION PROCESSES: TOTAL REWARD
1 Model and Preliminaries
1.1 System Model
1.2 Some Concepts
1.3 Finiteness of the Reward
2 Optimality Equation
2.1 Validity of the Optimality Equation
2.2 Properties of the Optimality Equation
3 Properties of Optimal Policies
4 Successive Approximation
5 Sufficient Conditions
6 Notes and References

3. DISCRETE TIME MARKOV DECISION PROCESSES: AVERAGE CRITERION
1 Model and Preliminaries
2 Optimality Equation

2.1 Properties of ACOE and Optimal Policies
2.2 Sufficient Conditions
2.3 Recurrent Conditions
3 Optimality Inequalities
3.1 Conditions
3.2 Properties of ACOI and Optimal Policies
4 Notes and References

4. CONTINUOUS TIME MARKOV DECISION PROCESSES
1 A Stationary Model: Total Reward
1.1 Model and Conditions
1.2 Model Decomposition
1.3 Some Properties
1.4 Optimality Equation and Optimal Policies
2 A Nonstationary Model: Total Reward
2.1 Model and Conditions
2.2 Optimality Equation
3 A Stationary Model: Average Criterion
4 Notes and References

5. SEMI-MARKOV DECISION PROCESSES
1 Model and Conditions
1.1 Model
1.2 Regular Conditions
1.3 Criteria
2 Transformation
2.1 Total Reward
2.2 Average Criterion
3 Notes and References

6. MARKOV DECISION PROCESSES IN SEMI-MARKOV ENVIRONMENTS
1 Continuous Time MDP in Semi-Markov Environments
1.1 Model
1.2 Optimality Equation
1.3 Approximation by Weak Convergence
1.4 Markov Environment
1.5 Phase Type Environment
2 SMDP in Semi-Markov Environments

�

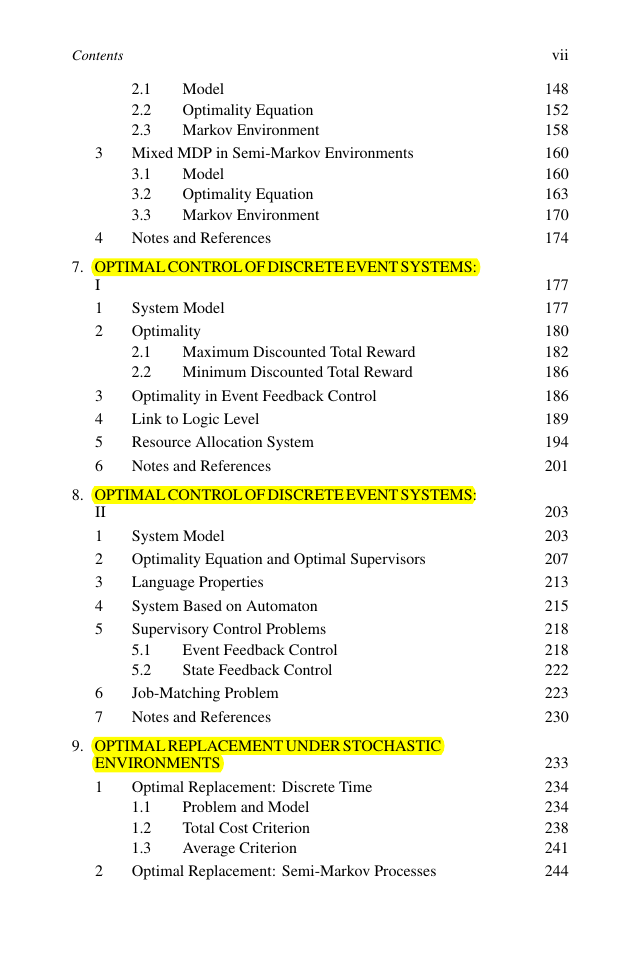

Contents

3

4

Model

Optimality Equation

Markov Environment

2.1

2.2

2.3

Mixed MDP in Semi-Markov Environments

3.1

3.2

3.3

Notes and References

Model

Optimality Equation

Markov Environment

7. OPTIMAL CONTROL OF DISCRETE EVENT SYSTEMS:

8. OPTIMAL CONTROL OF DISCRETE EVENT SYSTEMS:

I

1

2

3

4

5

6

2

II

1

2

3

4

5

6

7

Maximum Discounted Total Reward

Minimum Discounted Total Reward

System Model

Optimality

2.1

2.2

Optimality in Event Feedback Control

Link to Logic Level

Resource Allocation System

Notes and References

System Model

Optimality Equation and Optimal Supervisors

Language Properties

System Based on Automaton

Supervisory Control Problems

5.1

5.2

Job-Matching Problem

Notes and References

Event Feedback Control

State Feedback Control

9. OPTIMAL REPLACEMENT UNDER STOCHASTIC

ENVIRONMENTS

1

Optimal Replacement: Discrete Time

1.1

1.2

1.3

Optimal Replacement: Semi-Markov Processes

Problem and Model

Total Cost Criterion

Average Criterion

vii

148

152

158

160

160

163

170

174

177

177

180

182

186

186

189

194

201

203

203

207

213

215

218

218

222

223

230

233

234

234

238

241

244
