Statistics

copy to come from copywriter for review

Monographs on Statistics and Applied Probability 143

Statistical Learning

with Sparsity

The Lasso and

Generalizations

143

S

t

a

t

i

s

t

i

c

a

l

L

e

a

r

n

i

n

g

w

i

t

h

S

p

a

r

s

i

t

y

H

a

s

t

i

e

•

T

i

b

s

h

i

r

a

n

i

•

W

a

n

w

i

K25103

r

i

g

h

t

w w w . c r c p r e s s . c o m

Trevor Hastie

Robert Tibshirani

Martin Wainwright

K25103_cover.indd 1

2/24/15 1:35 PM

�

To our parents:

Valerie and Patrick Hastie

Vera and Sami Tibshirani

Patricia and John Wainwright

and to our families:

Samantha, Timothy, and Lynda

Charlie, Ryan, Jess, Julie, and Cheryl

Haruko and Hana

�

�

Contents

Preface

1 Introduction

2 The Lasso for Linear Models

Introduction

2.1

2.2 The Lasso Estimator

2.3 Cross-Validation and Inference

2.4 Computation of the Lasso Solution

Single Predictor: Soft Thresholding

2.4.1

2.4.2 Multiple Predictors: Cyclic Coordinate Descent

2.4.3

Soft-Thresholding and Orthogonal Bases

2.5 Degrees of Freedom

2.6 Uniqueness of the Lasso Solutions

2.7 A Glimpse at the Theory

2.8 The Nonnegative Garrote

2.9

2.10 Some Perspective

Exercises

‘q Penalties and Bayes Estimates

3 Generalized Linear Models

Introduction

3.1

3.2 Logistic Regression

3.2.1 Example: Document Classification

3.2.2 Algorithms

3.3 Multiclass Logistic Regression

3.3.1 Example: Handwritten Digits

3.3.2 Algorithms

3.3.3 Grouped-Lasso Multinomial

3.4 Log-Linear Models and the Poisson GLM

3.4.1 Example: Distribution Smoothing

3.5 Cox Proportional Hazards Models

3.5.1 Cross-Validation

3.5.2 Pre-Validation

3.6 Support Vector Machines

3.6.1 Logistic Regression with Separable Data

ix

xv

1

7

7

8

13

14

15

15

17

17

19

20

20

22

23

24

29

29

31

32

35

36

37

39

39

40

40

42

43

45

46

49

�

x

3.7 Computational Details and glmnet

Bibliographic Notes

Exercises

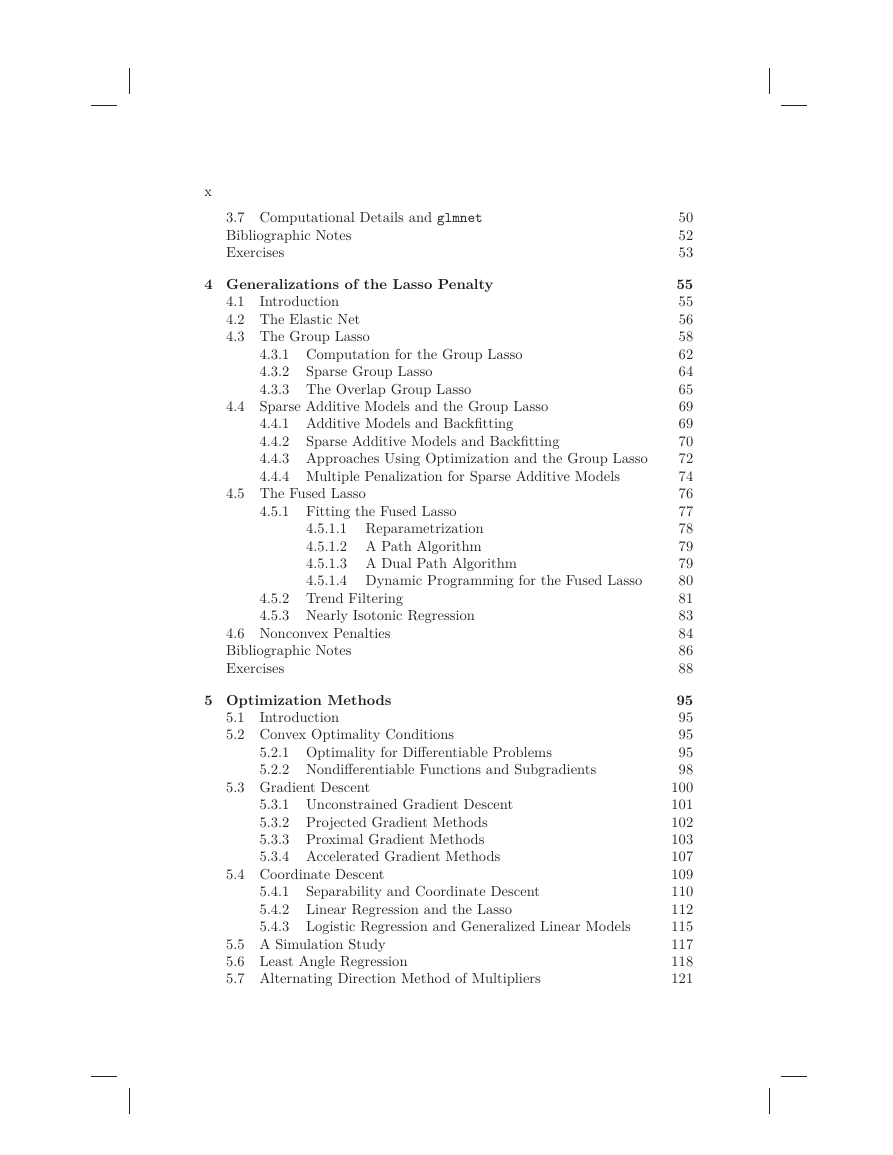

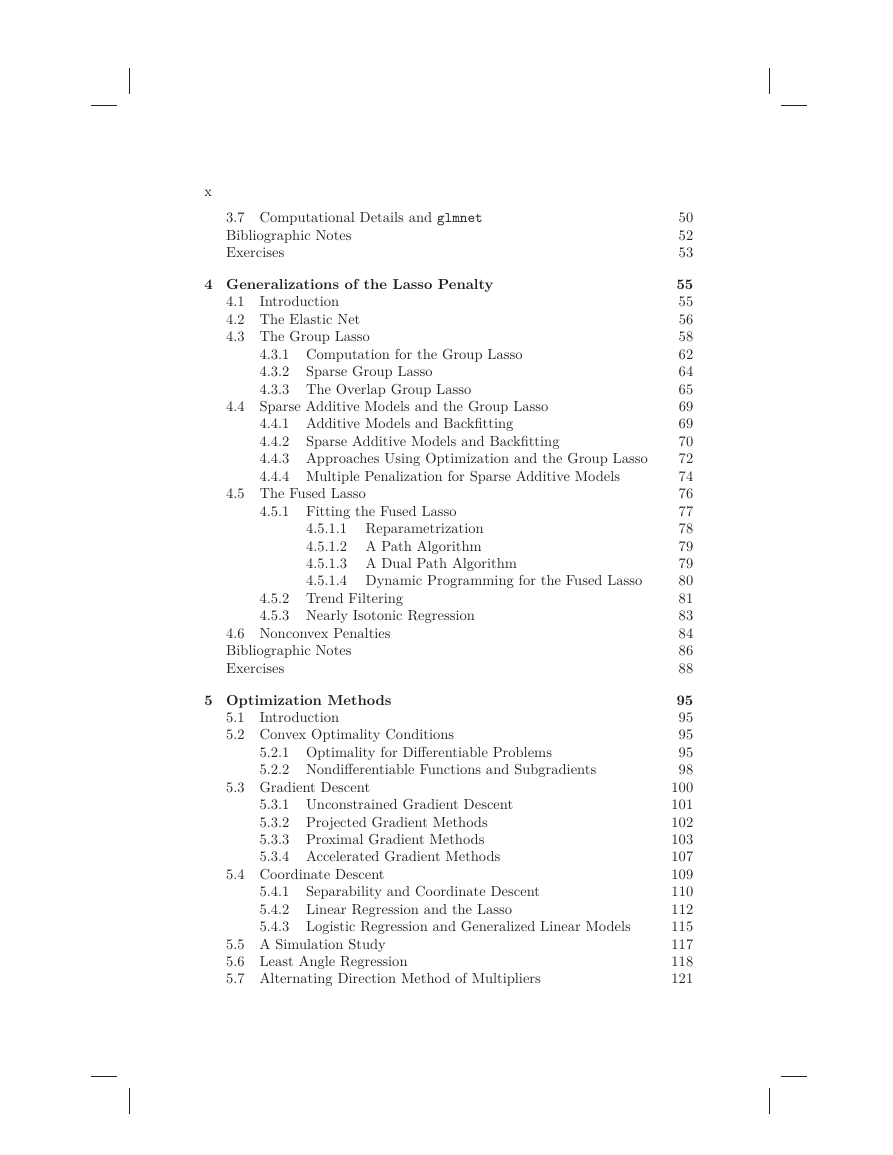

4 Generalizations of the Lasso Penalty

Introduction

4.1

4.2 The Elastic Net

4.3 The Group Lasso

4.3.1 Computation for the Group Lasso

4.3.2

4.3.3 The Overlap Group Lasso

Sparse Group Lasso

4.4 Sparse Additive Models and the Group Lasso

Sparse Additive Models and Backfitting

4.4.1 Additive Models and Backfitting

4.4.2

4.4.3 Approaches Using Optimization and the Group Lasso

4.4.4 Multiple Penalization for Sparse Additive Models

5.2.1 Optimality for Differentiable Problems

5.2.2 Nondifferentiable Functions and Subgradients

5.3 Gradient Descent

5.3.1 Unconstrained Gradient Descent

5.3.2 Projected Gradient Methods

5.3.3 Proximal Gradient Methods

5.3.4 Accelerated Gradient Methods

5.4 Coordinate Descent

Separability and Coordinate Descent

5.4.1

5.4.2 Linear Regression and the Lasso

5.4.3 Logistic Regression and Generalized Linear Models

5.5 A Simulation Study

5.6 Least Angle Regression

5.7 Alternating Direction Method of Multipliers

4.5 The Fused Lasso

4.5.1 Fitting the Fused Lasso

4.5.1.1 Reparametrization

4.5.1.2 A Path Algorithm

4.5.1.3 A Dual Path Algorithm

4.5.1.4 Dynamic Programming for the Fused Lasso

4.5.2 Trend Filtering

4.5.3 Nearly Isotonic Regression

4.6 Nonconvex Penalties

Bibliographic Notes

Exercises

5 Optimization Methods

Introduction

5.1

5.2 Convex Optimality Conditions

50

52

53

55

55

56

58

62

64

65

69

69

70

72

74

76

77

78

79

79

80

81

83

84

86

88

95

95

95

95

98

100

101

102

103

107

109

110

112

115

117

118

121

�

5.8 Minorization-Maximization Algorithms

5.9 Biconvexity and Alternating Minimization

5.10 Screening Rules

Bibliographic Notes

Appendix

Exercises

6 Statistical Inference

6.1 The Bayesian Lasso

6.2 The Bootstrap

6.3 Post-Selection Inference for the Lasso

6.3.1 The Covariance Test

6.3.2 A General Scheme for Post-Selection Inference

6.3.2.1 Fixed-λ Inference for the Lasso

6.3.2.2 The Spacing Test for LAR

6.3.3 What Hypothesis Is Being Tested?

6.3.4 Back to Forward Stepwise Regression

Inference via a Debiased Lasso

6.4

6.5 Other Proposals for Post-Selection Inference

Bibliographic Notes

Exercises

xi

123

124

127

131

132

134

139

139

142

147

147

150

154

156

157

158

158

160

161

162

Introduction

7.1

7.2 The Singular Value Decomposition

7.3 Missing Data and Matrix Completion

7.3.1 The Netflix Movie Challenge

7.3.2 Matrix Completion Using Nuclear Norm

7.3.3 Theoretical Results for Matrix Completion

7.3.4 Maximum Margin Factorization and Related Methods

7 Matrix Decompositions, Approximations, and Completion 167

167

169

169

170

174

177

181

184

185

187

190

195

196

7.4 Reduced-Rank Regression

7.5 A General Matrix Regression Framework

7.6 Penalized Matrix Decomposition

7.7 Additive Matrix Decomposition

Bibliographic Notes

Exercises

8 Sparse Multivariate Methods

Introduction

8.1

8.2 Sparse Principal Components Analysis

8.2.1

8.2.2

Some Background

Sparse Principal Components

8.2.2.1

Sparsity from Maximum Variance

8.2.2.2 Methods Based on Reconstruction

8.2.3 Higher-Rank Solutions

201

201

202

202

204

204

206

207

�

xii

Illustrative Application of Sparse PCA

8.2.3.1

Sparse PCA via Fantope Projection

Sparse Autoencoders and Deep Learning

Some Theory for Sparse PCA

8.3 Sparse Canonical Correlation Analysis

8.2.4

8.2.5

8.2.6

8.3.1 Example: Netflix Movie Rating Data

8.4 Sparse Linear Discriminant Analysis

8.4.1 Normal Theory and Bayes’ Rule

8.4.2 Nearest Shrunken Centroids

8.4.3 Fisher’s Linear Discriminant Analysis

8.4.3.1 Example: Simulated Data with Five Classes

8.4.4 Optimal Scoring

8.4.4.1 Example: Face Silhouettes

8.5 Sparse Clustering

8.5.1

Some Background on Clustering

8.5.1.1 Example: Simulated Data with Six Classes

Sparse Hierarchical Clustering

Sparse K-Means Clustering

8.5.2

8.5.3

8.5.4 Convex Clustering

Bibliographic Notes

Exercises

9 Graphs and Model Selection

Introduction

9.1

9.2 Basics of Graphical Models

9.2.1 Factorization and Markov Properties

9.2.1.1 Factorization Property

9.2.1.2 Markov Property

9.2.1.3 Equivalence of Factorization and Markov

Properties

9.2.2

Some Examples

9.2.2.1 Discrete Graphical Models

9.2.2.2 Gaussian Graphical Models

9.3 Graph Selection via Penalized Likelihood

9.3.1 Global Likelihoods for Gaussian Models

9.3.2 Graphical Lasso Algorithm

9.3.3 Exploiting Block-Diagonal Structure

9.3.4 Theoretical Guarantees for the Graphical Lasso

9.3.5 Global Likelihood for Discrete Models

9.4 Graph Selection via Conditional Inference

9.4.1 Neighborhood-Based Likelihood for Gaussians

9.4.2 Neighborhood-Based Likelihood for Discrete Models

9.4.3 Pseudo-Likelihood for Mixed Models

9.5 Graphical Models with Hidden Variables

Bibliographic Notes

209

210

210

212

213

215

217

217

218

221

222

225

226

227

227

228

228

230

231

232

234

241

241

241

241

242

243

243

244

244

245

246

247

248

251

252

253

254

255

256

259

261

261

�

Exercises

10 Signal Approximation and Compressed Sensing

10.1 Introduction

10.2 Signals and Sparse Representations

10.2.1 Orthogonal Bases

10.2.2 Approximation in Orthogonal Bases

10.2.3 Reconstruction in Overcomplete Bases

10.3 Random Projection and Approximation

10.3.1 Johnson–Lindenstrauss Approximation

10.3.2 Compressed Sensing

10.4 Equivalence between ‘0 and ‘1 Recovery

10.4.1 Restricted Nullspace Property

10.4.2 Sufficient Conditions for Restricted Nullspace

10.4.3 Proofs

10.4.3.1 Proof of Theorem 10.1

10.4.3.2 Proof of Proposition 10.1

Bibliographic Notes

Exercises

11 Theoretical Results for the Lasso

11.1 Introduction

11.1.1 Types of Loss Functions

11.1.2 Types of Sparsity Models

11.2 Bounds on Lasso ‘2-Error

11.2.1 Strong Convexity in the Classical Setting

11.2.2 Restricted Eigenvalues for Regression

11.2.3 A Basic Consistency Result

11.3 Bounds on Prediction Error

11.4 Support Recovery in Linear Regression

11.4.1 Variable-Selection Consistency for the Lasso

11.4.1.1 Some Numerical Studies

11.4.2 Proof of Theorem 11.3

11.5 Beyond the Basic Lasso

Bibliographic Notes

Exercises

Bibliography

Author Index

Index

xiii

263

269

269

269

269

271

274

276

277

278

280

281

282

284

284

284

285

286

289

289

289

290

291

291

293

294

299

301

301

303

305

310

311

312

315

337

343

�

2023年江西萍乡中考道德与法治真题及答案.doc

2023年江西萍乡中考道德与法治真题及答案.doc 2012年重庆南川中考生物真题及答案.doc

2012年重庆南川中考生物真题及答案.doc 2013年江西师范大学地理学综合及文艺理论基础考研真题.doc

2013年江西师范大学地理学综合及文艺理论基础考研真题.doc 2020年四川甘孜小升初语文真题及答案I卷.doc

2020年四川甘孜小升初语文真题及答案I卷.doc 2020年注册岩土工程师专业基础考试真题及答案.doc

2020年注册岩土工程师专业基础考试真题及答案.doc 2023-2024学年福建省厦门市九年级上学期数学月考试题及答案.doc

2023-2024学年福建省厦门市九年级上学期数学月考试题及答案.doc 2021-2022学年辽宁省沈阳市大东区九年级上学期语文期末试题及答案.doc

2021-2022学年辽宁省沈阳市大东区九年级上学期语文期末试题及答案.doc 2022-2023学年北京东城区初三第一学期物理期末试卷及答案.doc

2022-2023学年北京东城区初三第一学期物理期末试卷及答案.doc 2018上半年江西教师资格初中地理学科知识与教学能力真题及答案.doc

2018上半年江西教师资格初中地理学科知识与教学能力真题及答案.doc 2012年河北国家公务员申论考试真题及答案-省级.doc

2012年河北国家公务员申论考试真题及答案-省级.doc 2020-2021学年江苏省扬州市江都区邵樊片九年级上学期数学第一次质量检测试题及答案.doc

2020-2021学年江苏省扬州市江都区邵樊片九年级上学期数学第一次质量检测试题及答案.doc 2022下半年黑龙江教师资格证中学综合素质真题及答案.doc

2022下半年黑龙江教师资格证中学综合素质真题及答案.doc