Copyright

Table of Contents

Preface

What to Expect from This Book

Who This Book Is For

How to Read This Book

Overview of Chapters

Programming and Code Examples

GitHub Repository

Executing Distributed Jobs

Permissions and Citation

Feedback and How to Contact Us

Safari® Books Online

How to Contact Us

Acknowledgments

Part I. Introduction to Distributed Computing

Chapter 1. The Age of the Data Product

What Is a Data Product?

Building Data Products at Scale with Hadoop

Leveraging Large Datasets

Hadoop for Data Products

The Data Science Pipeline and the Hadoop Ecosystem

Big Data Workflows

Conclusion

Chapter 2. An Operating System for Big Data

Basic Concepts

Hadoop Architecture

A Hadoop Cluster

HDFS

YARN

Working with a Distributed File System

Basic File System Operations

File Permissions in HDFS

Other HDFS Interfaces

Working with Distributed Computation

MapReduce: A Functional Programming Model

MapReduce: Implemented on a Cluster

Beyond a Map and Reduce: Job Chaining

Submitting a MapReduce Job to YARN

Conclusion

Chapter 3. A Framework for Python and Hadoop Streaming

Hadoop Streaming

Computing on CSV Data with Streaming

Executing Streaming Jobs

A Framework for MapReduce with Python

Counting Bigrams

Other Frameworks

Advanced MapReduce

Combiners

Partitioners

Job Chaining

Conclusion

Chapter 4. In-Memory Computing with Spark

Spark Basics

The Spark Stack

Resilient Distributed Datasets

Programming with RDDs

Interactive Spark Using PySpark

Writing Spark Applications

Visualizing Airline Delays with Spark

Conclusion

Chapter 5. Distributed Analysis and Patterns

Computing with Keys

Compound Keys

Keyspace Patterns

Pairs versus Stripes

Design Patterns

Summarization

Indexing

Filtering

Toward Last-Mile Analytics

Fitting a Model

Validating Models

Conclusion

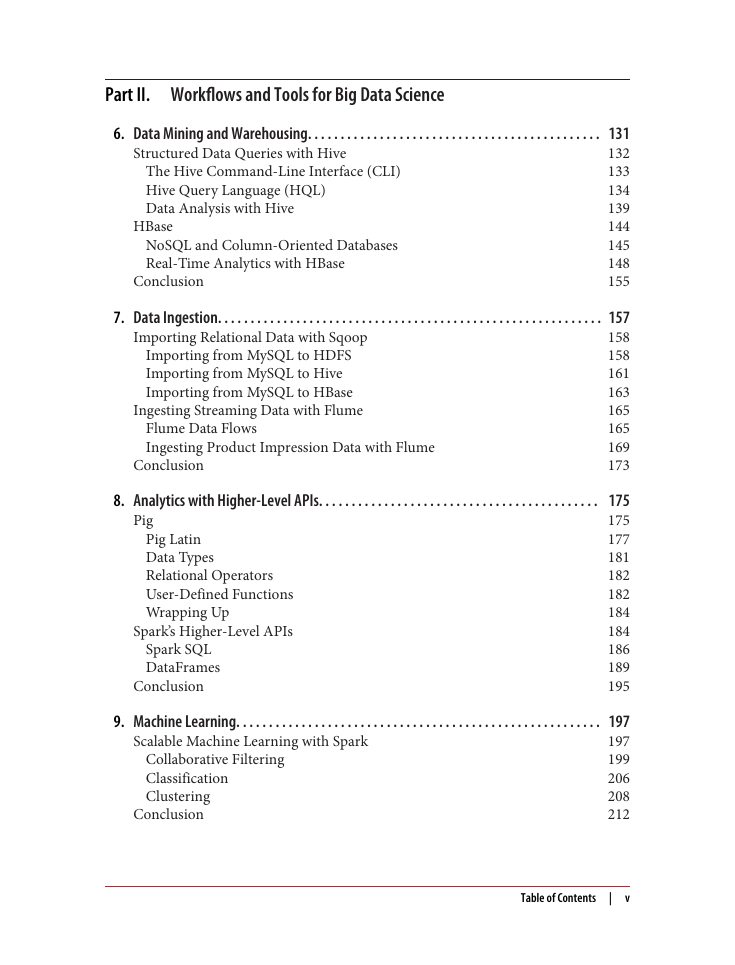

Part II. Workflows and Tools for Big Data Science

Chapter 6. Data Mining and Warehousing

Structured Data Queries with Hive

The Hive Command-Line Interface (CLI)

Hive Query Language (HQL)

Data Analysis with Hive

HBase

NoSQL and Column-Oriented Databases

Real-Time Analytics with HBase

Conclusion

Chapter 7. Data Ingestion

Importing Relational Data with Sqoop

Importing from MySQL to HDFS

Importing from MySQL to Hive

Importing from MySQL to HBase

Ingesting Streaming Data with Flume

Flume Data Flows

Ingesting Product Impression Data with Flume

Conclusion

Chapter 8. Analytics with Higher-Level APIs

Pig

Pig Latin

Data Types

Relational Operators

User-Defined Functions

Wrapping Up

Spark’s Higher-Level APIs

Spark SQL

DataFrames

Conclusion

Chapter 9. Machine Learning

Scalable Machine Learning with Spark

Collaborative Filtering

Classification

Clustering

Conclusion

Chapter 10. Summary: Doing Distributed Data Science

Data Product Lifecycle

Data Lakes

Data Ingestion

Computational Data Stores

Machine Learning Lifecycle

Conclusion

Appendix A. Creating a Hadoop Pseudo-Distributed Development Environment

Quick Start

Setting Up Linux

Creating a Hadoop User

Configuring SSH

Installing Java

Disabling IPv6

Installing Hadoop

Unpacking

Environment

Hadoop Configuration

Formatting the Namenode

Starting Hadoop

Restarting Hadoop

Appendix B. Installing Hadoop Ecosystem Products

Packaged Hadoop Distributions

Self-Installation of Apache Hadoop Ecosystem Products

Basic Installation and Configuration Steps

Sqoop-Specific Configurations

Hive-Specific Configuration

HBase-Specific Configurations

Installing Spark

Glossary

Index

About the Authors

Colophon