Part I

Virtualization


3 A Dialogue on Virtualization

Professor: And thus we reach the first of our three pieces on operating systems: virtualization.

Student: But what is virtualization, oh noble professor?

Professor: Imagine we have a peach.

Student: A peach? (incredulous)

Professor: Yes, a peach. Let us call that the physical peach. But we have many eaters who would like to eat this peach. What we would like to present to each eater is their own peach, so that they can be happy. We call the peach we give eaters virtual peaches; we somehow create many of these virtual peaches out of the one physical peach. And the important thing: in this illusion, it looks to each eater like they have a physical peach, but in reality they don’t.

Student: So you are sharing the peach, but you don’t even know it?

Professor: Right! Exactly.

Student: But there’s only one peach.

Professor: Yes. And...?

Student: Well, if I were sharing a peach with somebody else, I think I would notice.

Professor: Ah yes! Good point. But that is the thing with many eaters; most of the time they are napping or doing something else, and thus, you can snatch that peach away and give it to someone else for a while. And thus we create the illusion of many virtual peaches, one peach for each person!

Student: Sounds like a bad campaign slogan. You are talking about computers, right, Professor?

Professor: Ah, young grasshopper, you wish to have a more concrete example. Good idea! Let us take the most basic of resources, the CPU. Assume there is one physical CPU in a system (though now there are often two or four or more). What virtualization does is take that single CPU and make it look like many virtual CPUs to the applications running on the system. Thus, while each application thinks it has its own CPU to use, there is really only one. And thus the OS has created a beautiful illusion: it has virtualized the CPU.

Student: Wow! That sounds like magic. Tell me more! How does that work?

Professor: In time, young student, in good time. Sounds like you are ready to begin.

Student: I am! Well, sort of. I must admit, I’m a little worried you are going to start talking about peaches again.

Professor: Don’t worry too much; I don’t even like peaches. And thus we begin...

OPERATING SYSTEMS [VERSION 1.00]   WWW.OSTEP.ORG

4 The Abstraction: The Process

In this chapter, we discuss one of the most fundamental abstractions that the OS provides to users: the process. The definition of a process, informally, is quite simple: it is a running program [V+65,BH70]. The program itself is a lifeless thing: it just sits there on the disk, a bunch of instructions (and maybe some static data), waiting to spring into action. It is the operating system that takes these bytes and gets them running, transforming the program into something useful.

It turns out that one often wants to run more than one program at once; for example, consider your desktop or laptop where you might like to run a web browser, a mail program, a game, a music player, and so forth. In fact, a typical system may seem to be running tens or even hundreds of processes at the same time. Doing so makes the system easy to use, as one never need be concerned with whether a CPU is available; one simply runs programs. Hence our challenge:

THE CRUX OF THE PROBLEM:

HOW TO PROVIDE THE ILLUSION OF MANY CPUS?

Although there are only a few physical CPUs available, how can the OS provide the illusion of a nearly-endless supply of said CPUs?

The OS creates this illusion by virtualizing the CPU. By running one process, then stopping it and running another, and so forth, the OS can promote the illusion that many virtual CPUs exist when in fact there is only one physical CPU (or a few). This basic technique, known as time sharing of the CPU, allows users to run as many concurrent processes as they would like; the potential cost is performance, as each will run more slowly if the CPU(s) must be shared.
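To make time sharing concrete, here is a minimal Python simulation, not how any real kernel is written. It assumes a single simulated CPU, a hypothetical `time_share` function, and made-up workloads measured in abstract "work units"; each process runs for at most `slice_len` units before the OS stops it and switches to the next one waiting.

```python
from collections import deque


def time_share(programs, slice_len=2):
    """Run several programs on one simulated CPU by time sharing.

    programs: dict mapping a (hypothetical) program name to the number of
              work units it needs in total.
    slice_len: how many units a process may run before the OS switches away.
    Returns a trace naming the process that held the CPU at each unit.
    """
    ready = deque(programs.items())        # processes waiting for the CPU
    trace = []
    while ready:
        name, remaining = ready.popleft()  # pick the next waiting process
        ran = min(slice_len, remaining)
        trace.extend([name] * ran)         # it alone owns the CPU for this slice
        remaining -= ran
        if remaining > 0:
            ready.append((name, remaining))  # stop it; it will run again later
    return trace


trace = time_share({"browser": 3, "mail": 2, "game": 4})
print(" ".join(trace))
# browser browser mail mail game game browser game game
```

The trace shows three programs interleaved on one CPU, and it also shows the cost mentioned above: the browser needs only 3 units of CPU time but does not finish until the 7th unit, because it had to share.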

To implement virtualization of the CPU, and to implement it well, the OS will need both some low-level machinery and some high-level intelligence. We call the low-level machinery mechanisms; mechanisms are low-level methods or protocols that implement a needed piece of functionality. For example, we’ll learn later how to implement a context switch, which gives the OS the ability to stop running one program and start running another on a given CPU; this time-sharing mechanism is employed by all modern OSes.

TIP: USE TIME SHARING (AND SPACE SHARING)

Time sharing is a basic technique used by an OS to share a resource. By allowing the resource to be used for a little while by one entity, and then a little while by another, and so forth, the resource in question (e.g., the CPU, or a network link) can be shared by many. The counterpart of time sharing is space sharing, where a resource is divided (in space) among those who wish to use it. For example, disk space is naturally a space-shared resource; once a block is assigned to a file, it is normally not assigned to another file until the user deletes the original file.
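Space sharing can be sketched in Python as well. The toy `Disk` class below is a hypothetical model, not a real file system: each block records which file owns it, and a block returns to the free pool only when its file is deleted, so unlike a time-shared CPU, an assigned block is never handed to someone else "for a while".

```python
class Disk:
    """A toy disk whose blocks are space-shared among files (hypothetical model)."""

    def __init__(self, num_blocks):
        self.owner = [None] * num_blocks   # owner[i] is the file holding block i

    def allocate(self, filename, count):
        """Give `count` free blocks to `filename`; return their block numbers."""
        free = [i for i, f in enumerate(self.owner) if f is None]
        if len(free) < count:
            raise OSError("disk full")
        chosen = free[:count]
        for i in chosen:
            self.owner[i] = filename       # the block now belongs to this file
        return chosen

    def delete(self, filename):
        """Only deleting the file returns its blocks to the free pool."""
        self.owner = [None if f == filename else f for f in self.owner]


disk = Disk(4)
a = disk.allocate("a.txt", 2)   # blocks [0, 1]
b = disk.allocate("b.txt", 2)   # blocks [2, 3]
disk.delete("a.txt")            # blocks 0 and 1 become free again
c = disk.allocate("c.txt", 1)   # reuses block 0
print(disk.owner)
```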

On top of these mechanisms resides some of the intelligence in the OS, in the form of policies. Policies are algorithms for making some kind of decision within the OS. For example, given a number of possible programs to run on a CPU, which program should the OS run? A scheduling policy in the OS will make this decision, likely using historical information (e.g., which program has run more over the last minute?), workload knowledge (e.g., what types of programs are run), and performance metrics (e.g., is the system optimizing for interactive performance, or throughput?) to make its decision.
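One way to see the split between mechanism and policy is to write the policy as a plug-in function. In this hypothetical Python sketch, `run` plays the role of the mechanism (it hands the CPU to a job for one tick), while `round_robin` and `shortest_first` are two interchangeable policies: the first uses historical information (which job ran most recently), the second uses workload knowledge (how much work each job has left). The function names and the workload are illustrative assumptions, not real scheduler code.

```python
def run(jobs, policy):
    """Mechanism: repeatedly hand the CPU to the job the policy picks, one tick at a time.

    jobs: dict mapping a job name to the ticks of CPU work it still needs.
    policy: function(remaining, history) -> name of the job to run next.
    Returns the order in which jobs held the CPU.
    """
    remaining = dict(jobs)          # copy so the caller's dict is untouched
    history = []
    while remaining:
        chosen = policy(remaining, history)   # policy answers "which?"
        remaining[chosen] -= 1                # mechanism answers "how": run one tick
        history.append(chosen)
        if remaining[chosen] == 0:
            del remaining[chosen]             # job finished; it leaves the ready set
    return history


def round_robin(remaining, history):
    """Historical policy: pick the job that has waited longest since it last ran."""
    def last_ran(name):
        # Index of the job's most recent tick, or -1 if it has never run.
        return max((i for i, h in enumerate(history) if h == name), default=-1)
    return min(remaining, key=last_ran)


def shortest_first(remaining, history):
    """Workload-aware policy: pick the job with the least work left."""
    return min(remaining, key=remaining.get)


jobs = {"A": 2, "B": 1, "C": 2}
print(run(jobs, round_robin))     # same mechanism...
print(run(jobs, shortest_first))  # ...different policy, different schedule
```

With these jobs, `round_robin` yields the order A, B, C, A, C while `shortest_first` yields B, A, A, C, C; swapping the policy required no change to `run`.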

4.1 The Abstraction: A Process

The abstraction provided by the OS of a running program is something we will call a process. As we said above, a process is simply a running program; at any instant in time, we can summarize a process by taking an inventory of the different pieces of the system it accesses or affects during the course of its execution.

To understand what constitutes a process, we thus have to understand its machine state: what a program can read or update when it is running. At any given time, what parts of the machine are important to the execution of this program?

One obvious component of machine state that comprises a process is its memory. Instructions lie in memory; the data that the running program reads and writes sits in memory as well. Thus the memory that the process can address (called its address space) is part of the process.

Also part of the process’s machine state are registers; many instructions explicitly read or update registers and thus clearly they are important to the execution of the process.

Note that there are some particularly special registers that form part of this machine state. For example, the program counter (PC) (sometimes called the instruction pointer or IP) tells us which instruction of the program is currently being executed; similarly a stack pointer and associated frame pointer are used to manage the stack for function parameters, local variables, and return addresses.

TIP: SEPARATE POLICY AND MECHANISM

In many operating systems, a common design paradigm is to separate high-level policies from their low-level mechanisms [L+75]. You can think of the mechanism as providing the answer to a how question about a system; for example, how does an operating system perform a context switch? The policy provides the answer to a which question; for example, which process should the operating system run right now? Separating the two allows one easily to change policies without having to rethink the mechanism and is thus a form of modularity, a general software design principle.

Finally, programs often access persistent storage devices too. Such I/O information might include a list of the files the process currently has open.
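The inventory described in this section can be written down as a data structure. The Python `Process` class below is a hypothetical sketch, not any real OS's process structure: it simply groups the address space, registers (including the PC, stack pointer, and frame pointer), and open files, and the register name `eax` and all addresses are arbitrary illustrative values.

```python
from dataclasses import dataclass, field


@dataclass
class Process:
    """Hypothetical summary of a process's machine state at one instant."""
    memory: bytearray                               # its address space: code and data
    registers: dict = field(default_factory=dict)   # general-purpose registers
    pc: int = 0                                     # program counter (instruction pointer)
    sp: int = 0                                     # stack pointer
    fp: int = 0                                     # frame pointer
    open_files: list = field(default_factory=list)  # I/O state: files currently open

    def inventory(self):
        """Take an inventory of what the process can read or update right now."""
        return {
            "address_space_bytes": len(self.memory),
            "registers": dict(self.registers, pc=self.pc, sp=self.sp, fp=self.fp),
            "open_files": list(self.open_files),
        }


p = Process(memory=bytearray(4096), registers={"eax": 7},
            pc=0x400, sp=0xFF0, fp=0xFF8, open_files=["/etc/hosts"])
print(p.inventory())
```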

4.2 Process API

Though we defer discussion of a real process API until a subsequent chapter, here we first give some idea of what must be included in any interface of an operating system. These APIs, in some form, are available on any modern operating system.

• Create: An operating system must include some method to create new processes. When you type a command into the shell, or double-click on an application icon, the OS is invoked to create a new process to run the program you have indicated.

• Destroy: As there is an interface for process creation, systems also provide an interface to destroy processes forcefully. Of course, many processes will run and just exit by themselves when complete; when they don’t, however, the user may wish to kill them, and thus an interface to halt a runaway process is quite useful.

• Wait: Sometimes it is useful to wait for a process to stop running; thus some kind of waiting interface is often provided.

• Miscellaneous Control: Other than killing or waiting for a process, there are sometimes other controls that are possible. For example, most operating systems provide some kind of method to suspend a process (stop it from running for a while) and then resume it (continue it running).

• Status: There are usually interfaces to get some status information about a process as well, such as how long it has run for, or what state it is in.
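Python's standard `subprocess` and `signal` modules expose each of these operations, so they can be tried on a POSIX system (Linux or macOS) without writing any OS code. This is a sketch built on a high-level library wrapper, not the underlying system-call API; the child program (a Python process that sleeps for 30 seconds) is an arbitrary stand-in, and `SIGSTOP`/`SIGCONT` are not available on Windows.

```python
import signal
import subprocess
import sys

# Create: start a new process running a stand-in program that sleeps for 30 seconds.
child = subprocess.Popen([sys.executable, "-c", "import time; time.sleep(30)"])

# Status: poll() returns None while the process is still running.
state_before = "running" if child.poll() is None else "exited"
print("pid", child.pid, "is", state_before)

# Miscellaneous control: suspend the process, then resume it (POSIX-only signals).
child.send_signal(signal.SIGSTOP)
child.send_signal(signal.SIGCONT)

# Destroy: forcefully kill the (pretend) runaway process with SIGKILL.
child.kill()

# Wait: block until the process has actually exited and collect its status.
status = child.wait()
print("exit status:", status)
```

On POSIX systems `wait()` reports a process terminated by a signal as the negative signal number, so the status here is -9 (`SIGKILL`).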

© 2008–18, ARPACI-DUSSEAU   THREE EASY PIECES

CPU

Memory

code

static data

heap

stack

Process

code

static data

Program

Disk

Loading:

Takes on-disk program

and reads it into the

address space of process

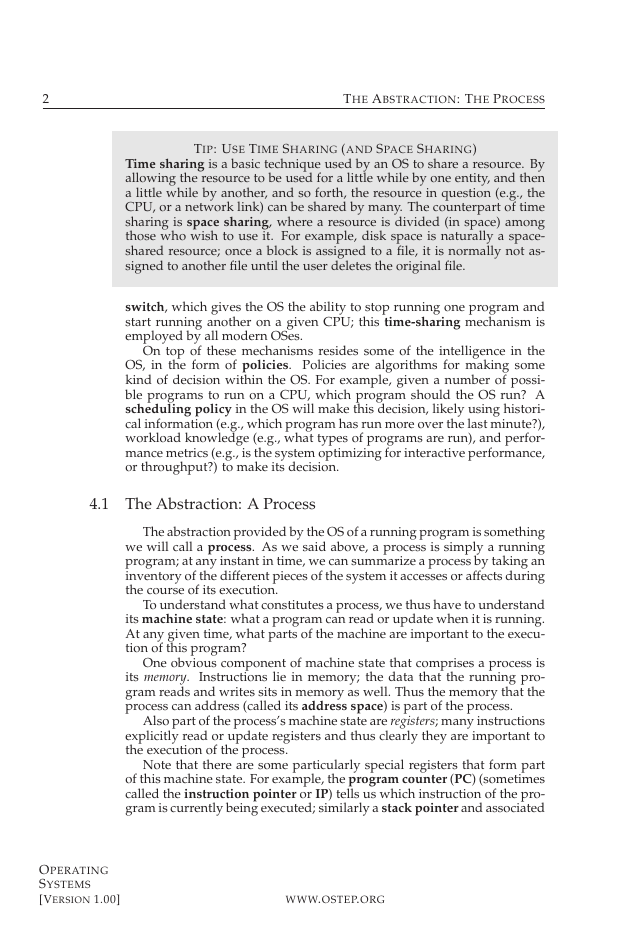

Figure 4.1: Loading: From Program To Process

4.3 Process Creation: A Little More Detail

One mystery that we should unmask a bit is how programs are transformed into processes. Specifically, how does the OS get a program up and running? How does process creation actually work?

The first thing that the OS must do to run a program is to load its code and any static data (e.g., initialized variables) into memory, into the address space of the process. Programs initially reside on disk (or, in some modern systems, flash-based SSDs) in some kind of executable format; thus, the process of loading a program and static data into memory requires the OS to read those bytes from disk and place them in memory somewhere (as shown in Figure 4.1).
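The following Python sketch mimics this loading step under an invented executable format: a header holding the code and data lengths, followed by the raw bytes (real formats such as ELF are far richer). The hypothetical `load` function reads both pieces from disk into a fresh address space, putting code at the bottom and static data right after it, and leaving room for the heap above the data and the stack at the top, roughly as in Figure 4.1.

```python
import os
import struct
import tempfile

# Hypothetical executable header: code length and static-data length (little-endian u32s).
HEADER = struct.Struct("<II")


def write_executable(path, code, data):
    """Create a toy on-disk program: header, then code bytes, then static data."""
    with open(path, "wb") as f:
        f.write(HEADER.pack(len(code), len(data)) + code + data)


def load(path, space_size=64):
    """Copy a program's code and static data from disk into a new address space."""
    with open(path, "rb") as f:
        code_len, data_len = HEADER.unpack(f.read(HEADER.size))
        code = f.read(code_len)
        data = f.read(data_len)
    if code_len + data_len > space_size:
        raise ValueError("program does not fit in the address space")
    memory = bytearray(space_size)                   # the process's address space
    memory[0:code_len] = code                        # code at the bottom
    memory[code_len:code_len + data_len] = data      # static data right after it
    heap_start = code_len + data_len                 # heap grows upward from here
    stack_top = space_size                           # stack grows downward from the top
    return memory, heap_start, stack_top


with tempfile.TemporaryDirectory() as tmp:
    exe = os.path.join(tmp, "prog")
    # The code bytes are arbitrary stand-ins, not meant to be executed.
    write_executable(exe, code=b"\x90\x90\xc3", data=b"hello")
    memory, heap_start, stack_top = load(exe)
    print(bytes(memory[:8]), heap_start, stack_top)
```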

In early (or simple) operating systems, the loading process is done eagerly, i.e., all at once before running the program; modern OSes perform the process lazily, i.e., by loading pieces of code or data only as they are needed during program execution. To truly understand how lazy loading of pieces of code and data works, you’ll have to understand more about the machinery of paging and swapping, topics we’ll cover in the future when we discuss the virtualization of memory. For now, just remember that before running anything, the OS clearly must do some work to get the important program bits from disk into memory.
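The difference between eager and lazy loading comes down to how much must be read from disk, and when. This Python simulation is a sketch under simplifying assumptions: the "disk" is a bytes object, pages are a tiny 4 bytes (real pages are commonly 4 KiB), and a dictionary stands in for the paging hardware and page faults discussed in later chapters.

```python
PAGE_SIZE = 4  # tiny pages keep the example readable; real pages are commonly 4 KiB


def eager_load(disk_bytes):
    """Eager: read every page of the program into memory before it runs."""
    num_pages = -(-len(disk_bytes) // PAGE_SIZE)       # ceiling division
    return {p: disk_bytes[p * PAGE_SIZE:(p + 1) * PAGE_SIZE] for p in range(num_pages)}


class LazyImage:
    """Lazy: fetch a page from "disk" only the first time the program touches it."""

    def __init__(self, disk_bytes):
        self.disk = disk_bytes
        self.pages = {}        # page number -> bytes already in memory
        self.disk_reads = 0    # pages fetched from disk so far

    def read(self, addr):
        page = addr // PAGE_SIZE
        if page not in self.pages:                     # first touch: load it now
            start = page * PAGE_SIZE
            self.pages[page] = self.disk[start:start + PAGE_SIZE]
            self.disk_reads += 1
        return self.pages[page][addr % PAGE_SIZE]


program = bytes(range(32))                        # an 8-page "program" on disk
lazy = LazyImage(program)
touched = [lazy.read(a) for a in (0, 1, 2, 20)]   # touches only pages 0 and 5
print("eager pages read:", len(eager_load(program)))
print("lazy pages read:", lazy.disk_reads)
```

Eager loading reads all 8 pages up front, while the lazy version reads only the 2 pages the program actually touches.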
