FPGA-Accelerated Machine Learning and ADAS/Autonomous Driving Applications

罗霖

Andy.luo@Xilinx.com

April 2017


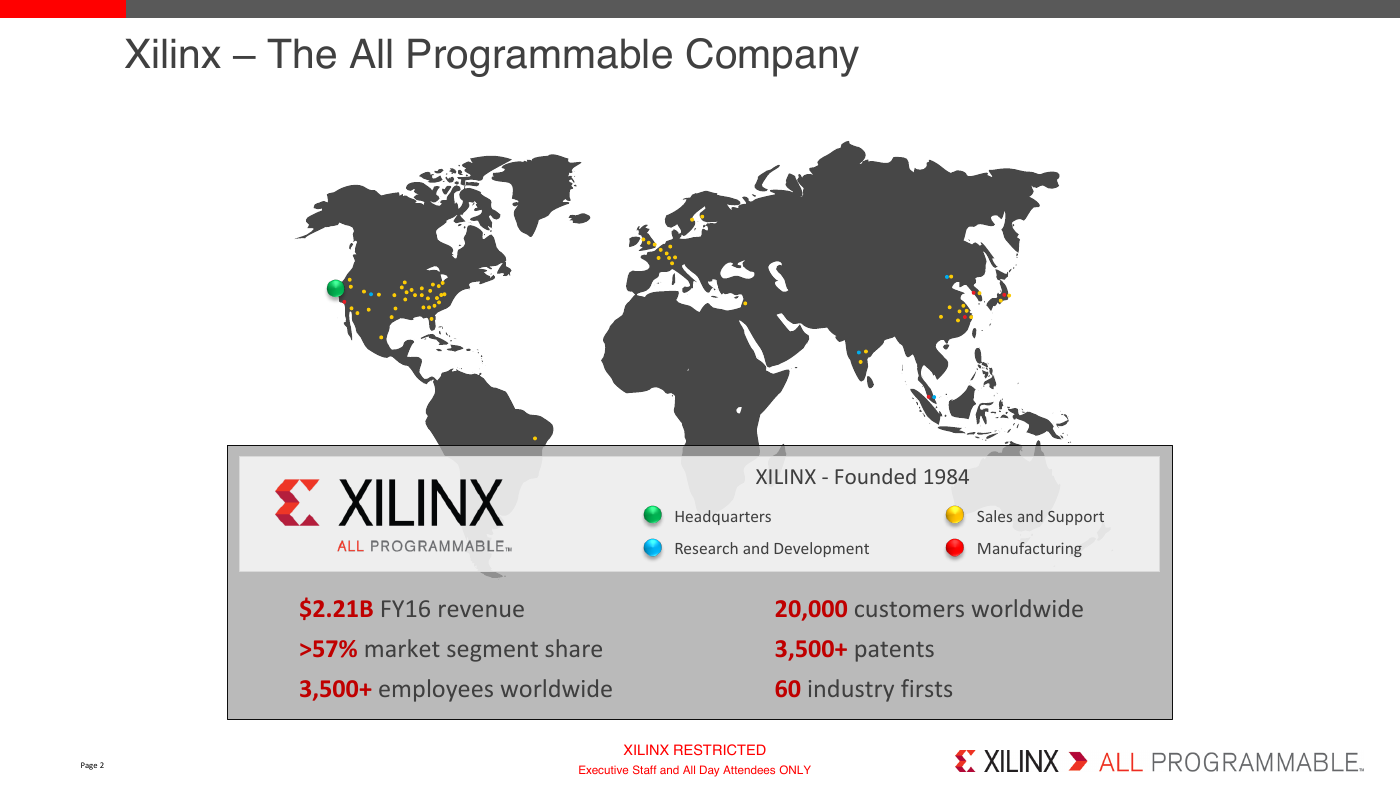

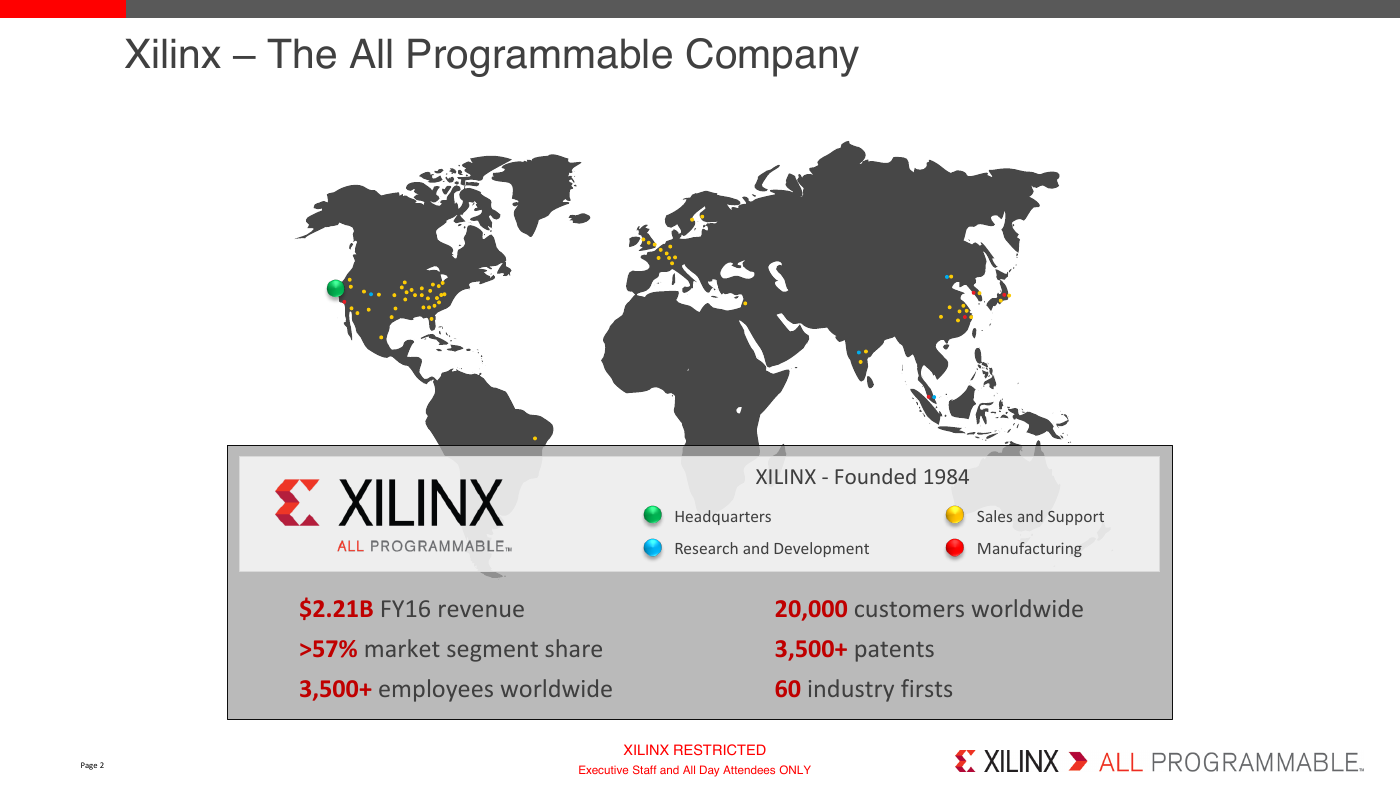

Xilinx – The All Programmable Company

XILINX - Founded 1984

Headquarters

Sales and Support

Research and Development

Manufacturing

$2.21B FY16 revenue

20,000 customers worldwide

>57% market segment share

3,500+ employees worldwide

3,500+ patents

60 industry firsts


XILINX RESTRICTED

Executive Staff and All Day Attendees ONLY


What is FPGA

A field-programmable gate array (FPGA) is an integrated circuit that can be programmed in the field after manufacture.

FPGAs contain an array of programmable logic blocks and a hierarchy of reconfigurable interconnects that allow the blocks to be "wired together".

FPGAs are usually programmed in an HDL (VHDL/Verilog); design flows now also support C/C++/OpenCL and model-based tools (MATLAB, LabVIEW…).

Applications span a very wide range, including wired and wireless communication, data center, aerospace and defense, industrial, medical, automotive, test and measurement, audio and video, and even consumer…
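The idea of programmable logic blocks being "wired together" can be sketched in software. The example below assumes each logic block is a small lookup table (LUT) whose truth table is its "program"; the slide does not name LUTs, and the `LUT` class, `half_adder`, and `full_adder` are hypothetical names used only for illustration. It models behavior only and says nothing about timing or real device resources.

```python
class LUT:
    """A k-input lookup table: the truth table is the block's 'program'."""

    def __init__(self, num_inputs, truth_table):
        if len(truth_table) != 2 ** num_inputs:
            raise ValueError("truth table must have 2**num_inputs entries")
        self.num_inputs = num_inputs
        self.truth_table = list(truth_table)

    def __call__(self, *bits):
        if len(bits) != self.num_inputs:
            raise ValueError("wrong number of inputs")
        # Pack the input bits into an index, first input as the high bit.
        index = 0
        for bit in bits:
            index = (index << 1) | (bit & 1)
        return self.truth_table[index]


# "Programming" three blocks by loading different truth tables.
XOR2 = LUT(2, [0, 1, 1, 0])
AND2 = LUT(2, [0, 0, 0, 1])
OR2 = LUT(2, [0, 1, 1, 1])


def half_adder(a, b):
    """Two blocks sharing the same inputs: returns (sum, carry)."""
    return XOR2(a, b), AND2(a, b)


def full_adder(a, b, carry_in):
    """Blocks wired together: outputs of one stage feed the next."""
    s1, c1 = half_adder(a, b)
    s, c2 = half_adder(s1, carry_in)
    return s, OR2(c1, c2)


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            for cin in (0, 1):
                print(a, b, cin, "->", full_adder(a, b, cin))
```

Reloading a truth table changes what a block computes without changing the surrounding wiring, which is the sense in which the fabric is reprogrammable in this toy model.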


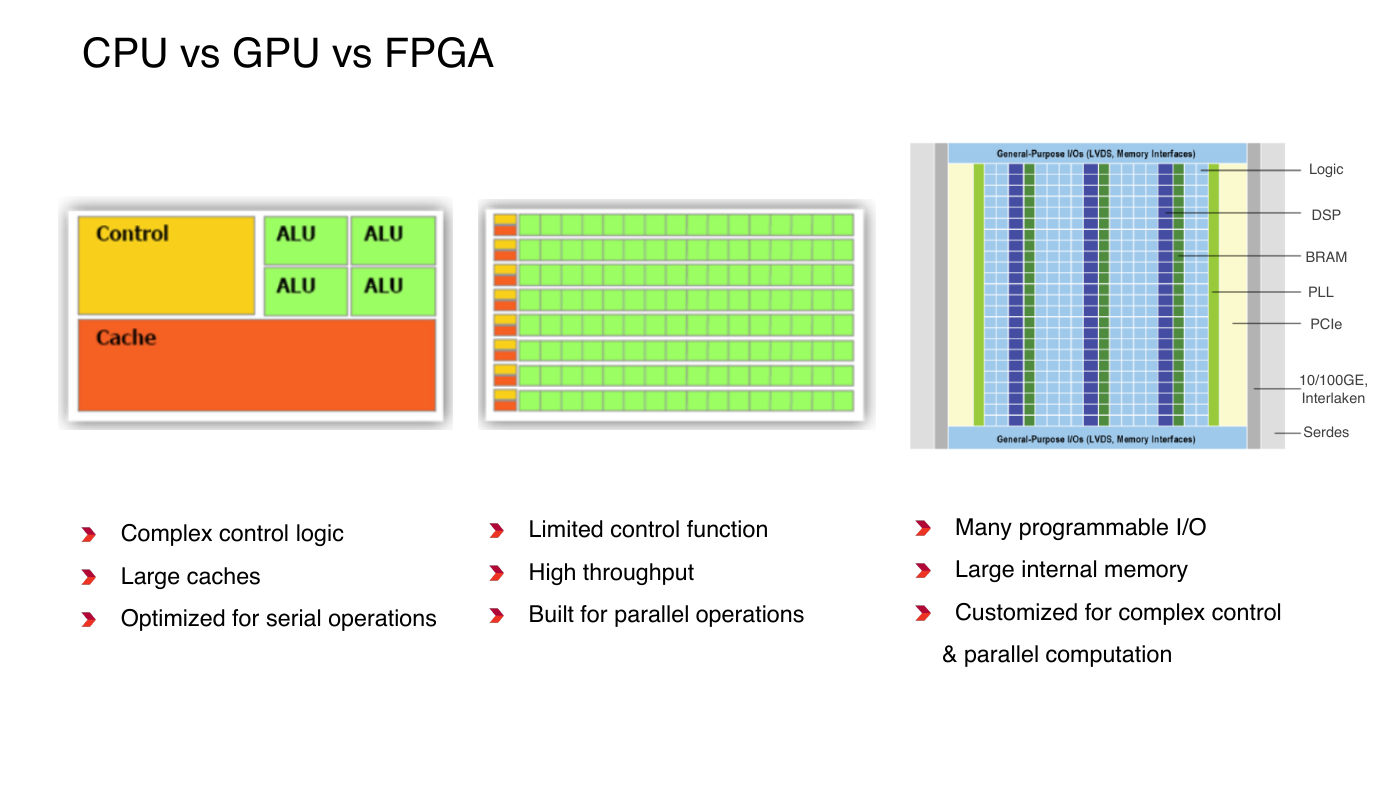

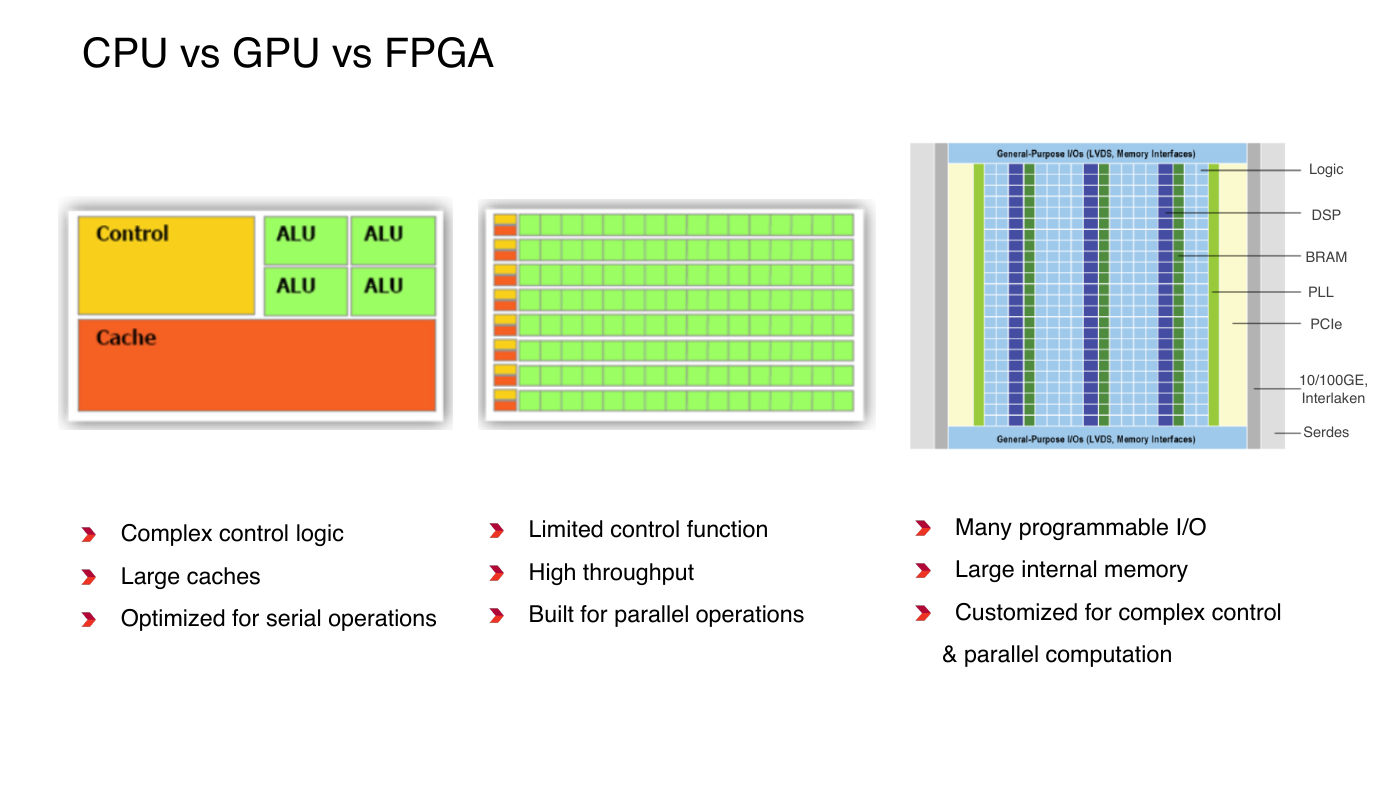

CPU vs GPU vs FPGA

FPGA block diagram labels: Logic, DSP, BRAM, PLL, PCIe, 10/100GE, Interlaken, SerDes

CPU: complex control logic; large caches; optimized for serial operations

GPU: limited control function; high throughput; built for parallel operations

FPGA: many programmable I/O; large internal memory; customized for complex control & parallel computation


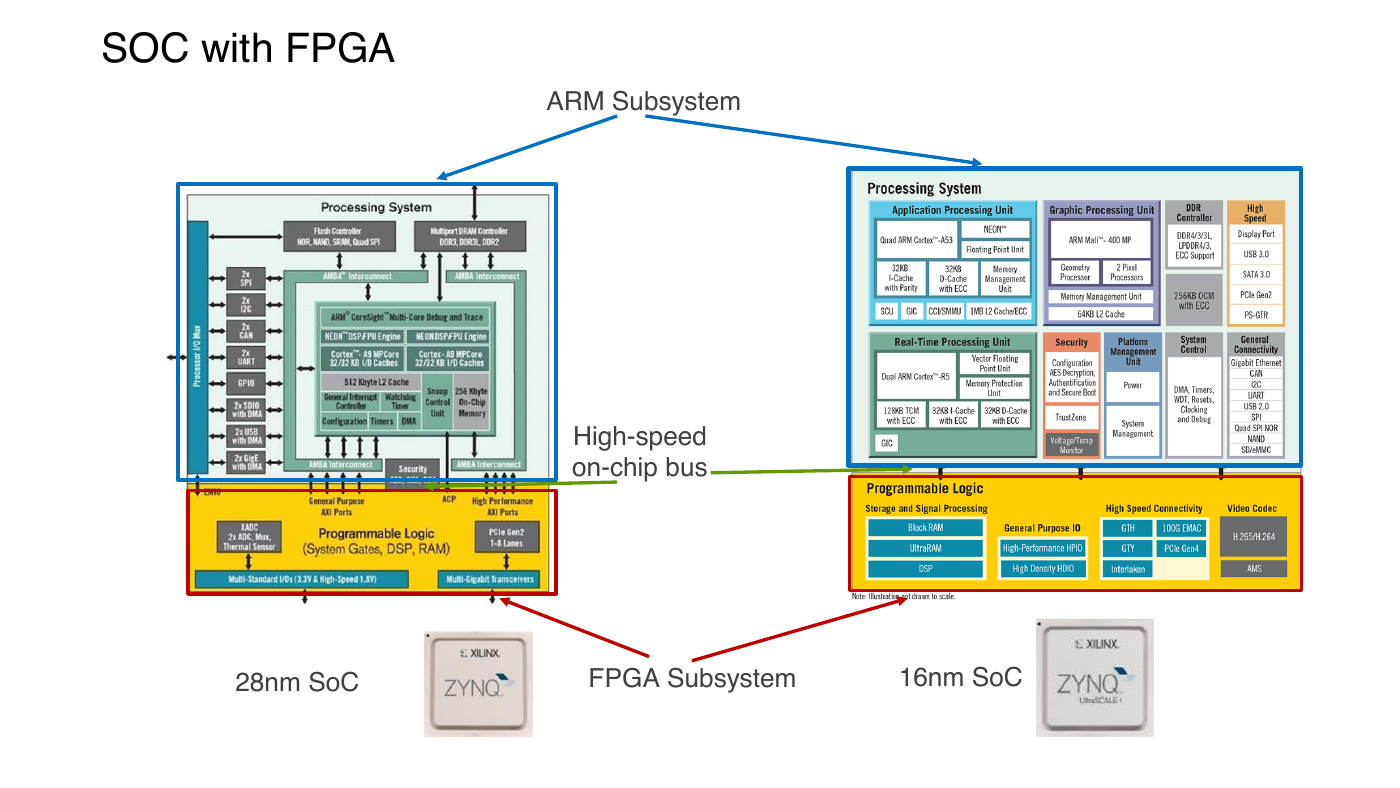

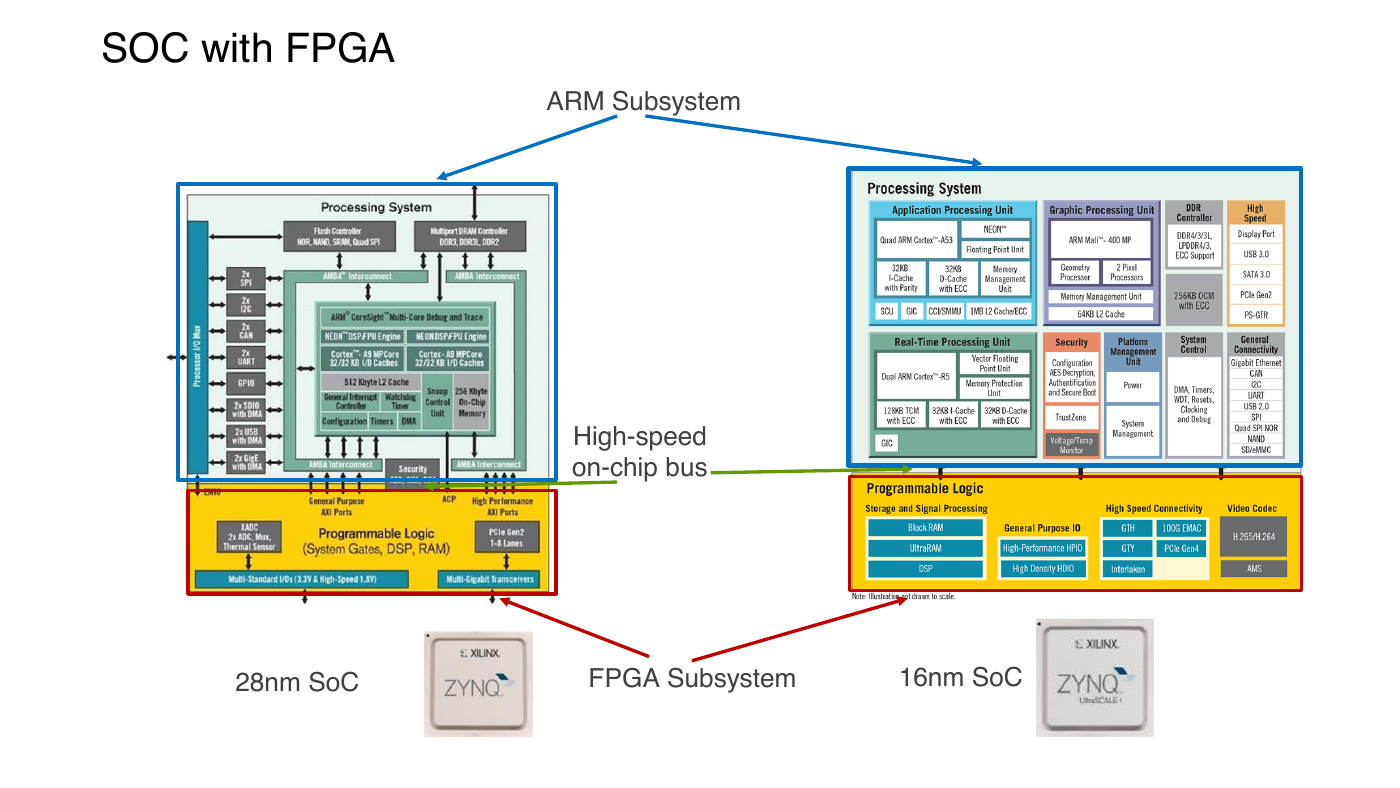

SoC with FPGA

ARM Subsystem

High-speed on-chip bus

28nm SoC

FPGA Subsystem

16nm SoC


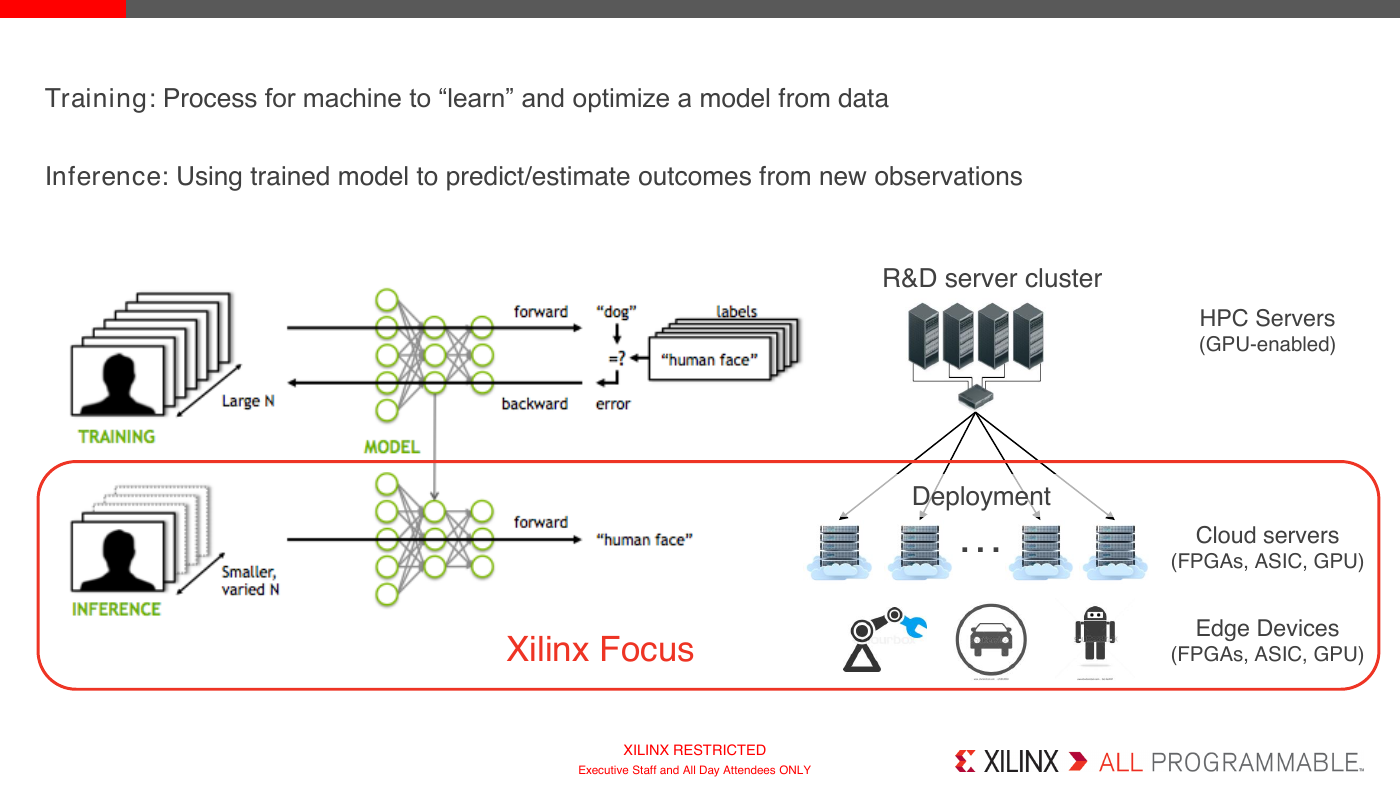

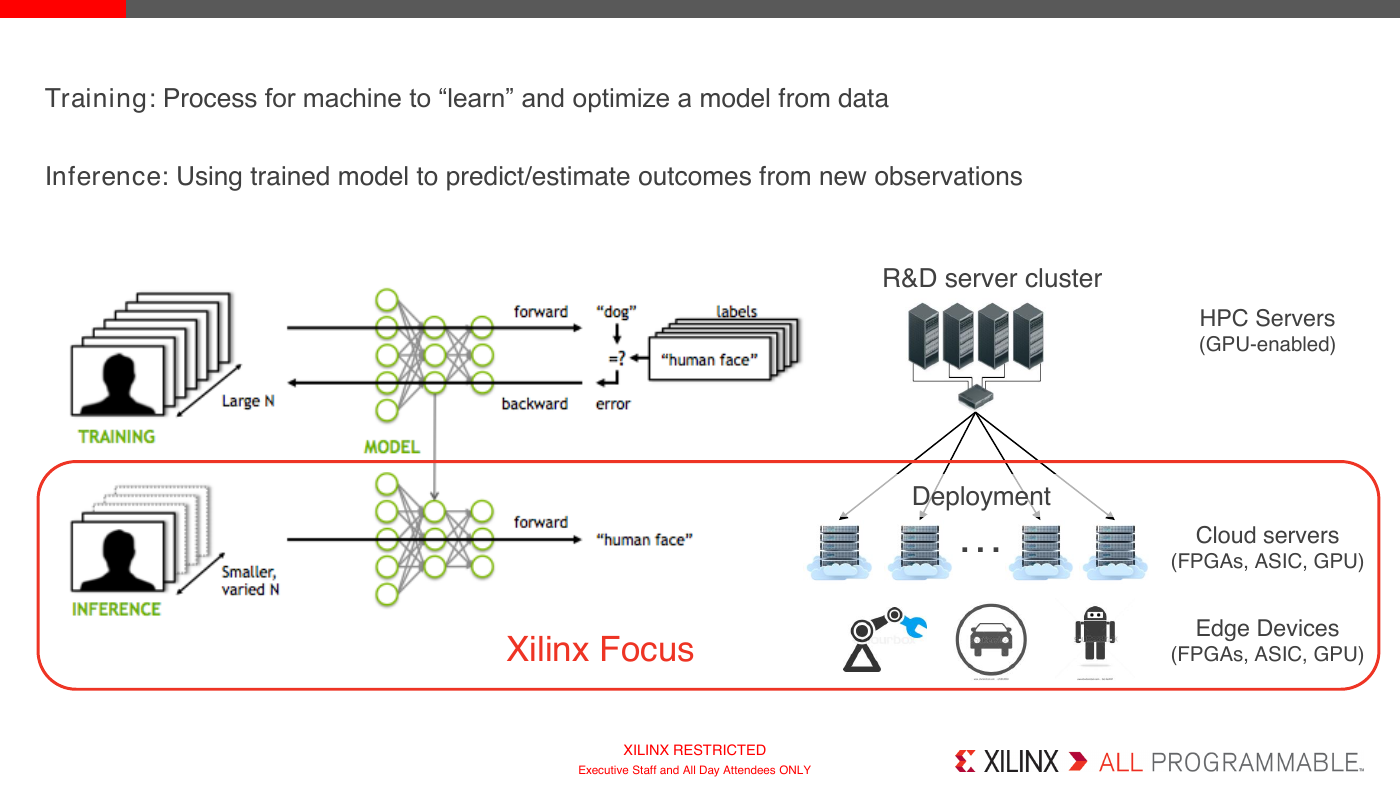

Training: the process by which a machine "learns" and optimizes a model from data

Inference: using the trained model to predict or estimate outcomes from new observations

R&D server cluster

HPC servers (GPU-enabled)

Deployment

…

Cloud servers (FPGAs, ASICs, GPUs)

Edge devices (FPGAs, ASICs, GPUs)

Xilinx Focus
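The split between training and inference can be made concrete with a deliberately tiny model. The sketch below is an assumption-laden illustration, not anything from the slides: it fits a one-variable linear model by closed-form least squares (`train`) and then applies the frozen parameters to a new observation (`infer`). Both function names and the toy data are hypothetical.

```python
def train(xs, ys):
    """Training: fit y = w*x + b to labeled data by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    w = cov_xy / var_x
    b = mean_y - w * mean_x
    return w, b


def infer(model, x):
    """Inference: apply the already-trained parameters to a new input."""
    w, b = model
    return w * x + b


if __name__ == "__main__":
    # Toy data generated from y = 2x + 1 (illustrative only).
    model = train([0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0])
    print(infer(model, 10.0))
```

Training touches the whole dataset and runs once, typically on the R&D cluster; inference is a cheap, repeated evaluation of fixed parameters, which is the part deployed to cloud servers and edge devices.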


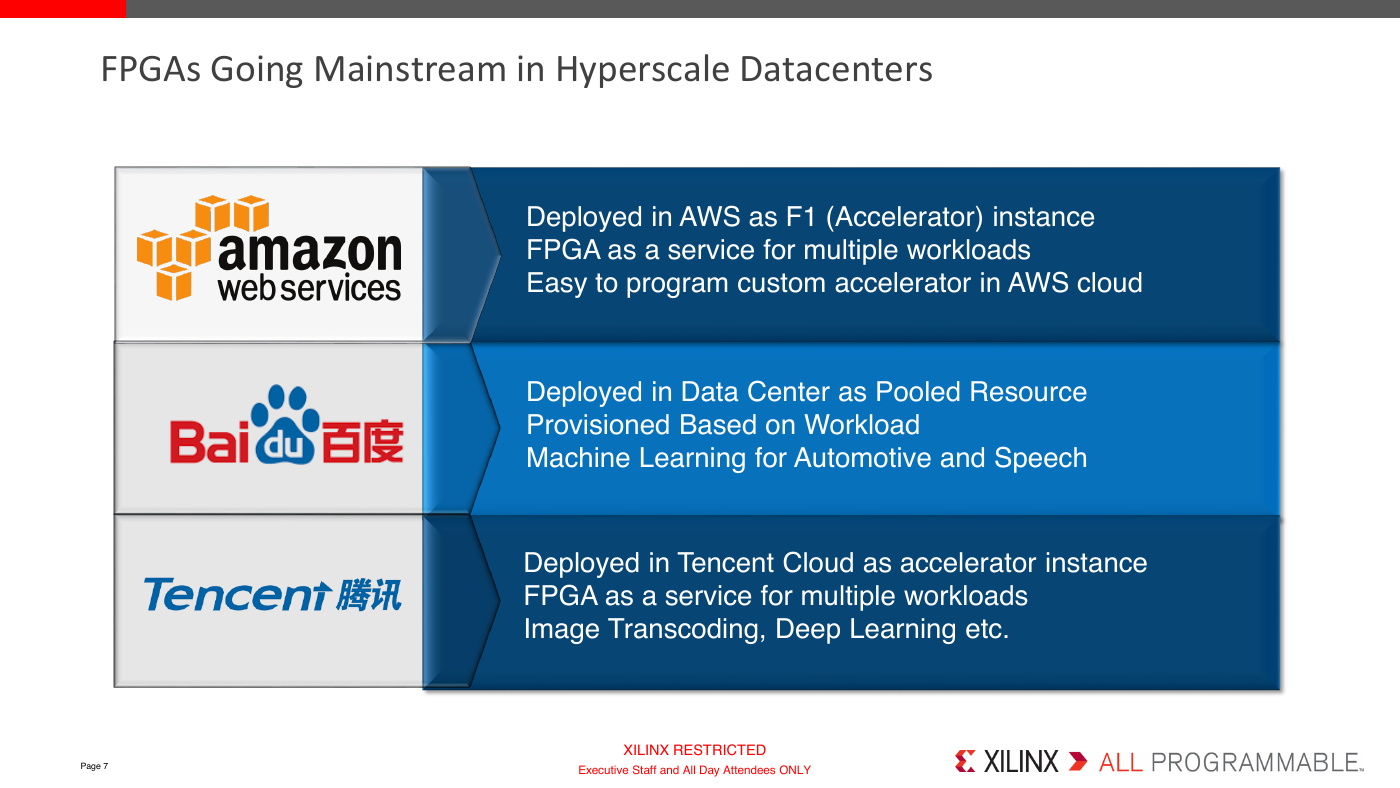

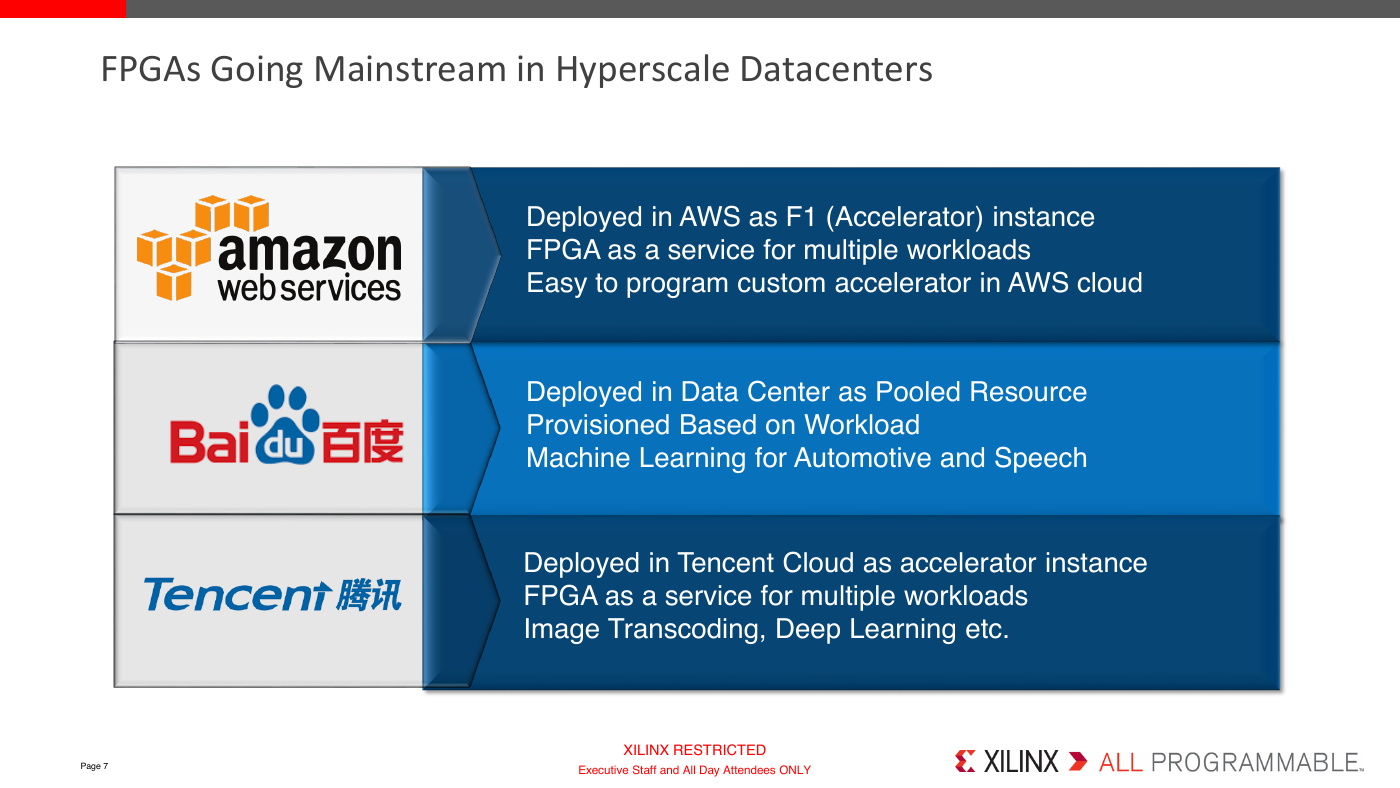

FPGAs Going Mainstream in Hyperscale Datacenters

Deployed in AWS as F1 (Accelerator) instance

FPGA as a service for multiple workloads

Easy to program custom accelerator in AWS cloud

Deployed in Data Center as Pooled Resource

Provisioned Based on Workload

Machine Learning for Automotive and Speech

Deployed in Tencent Cloud as accelerator instance

FPGA as a service for multiple workloads

Image Transcoding, Deep Learning etc.


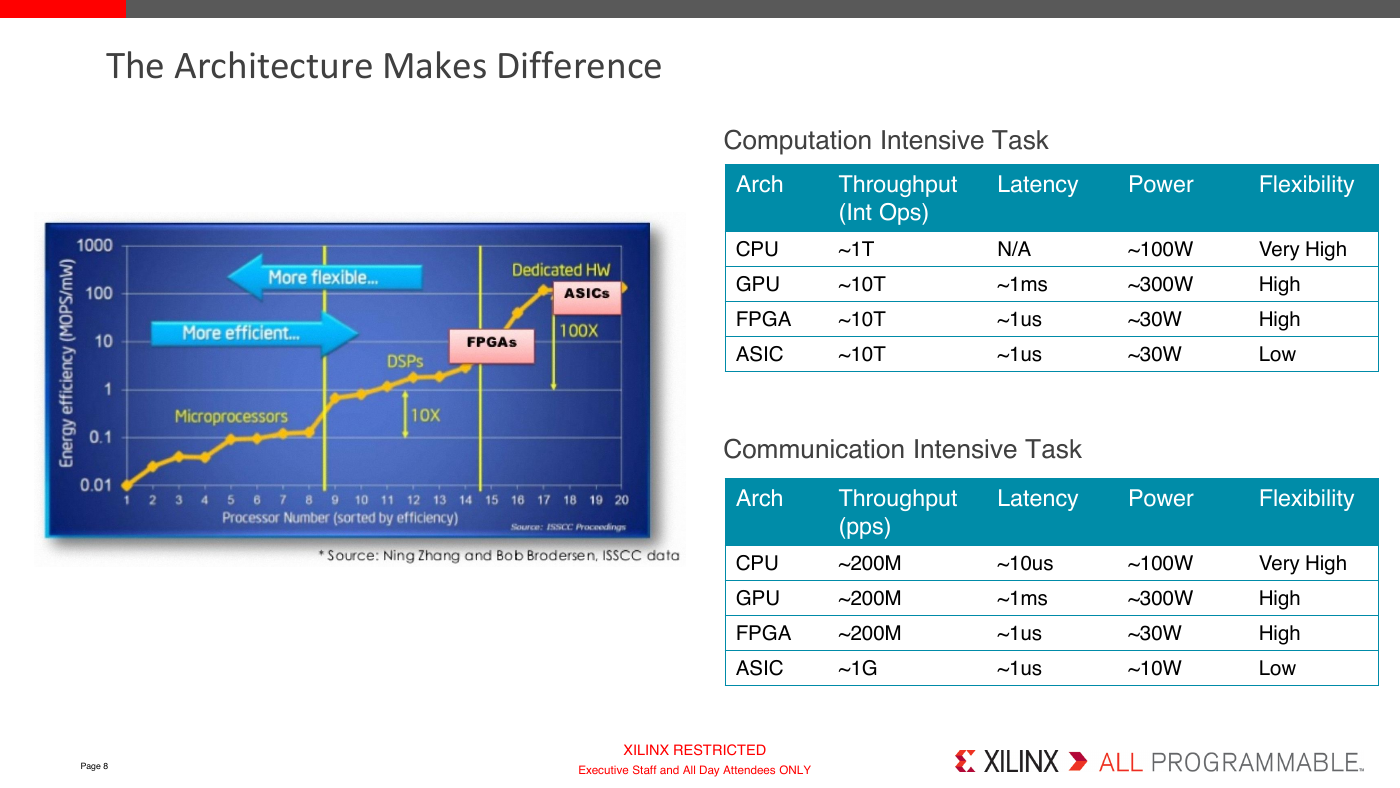

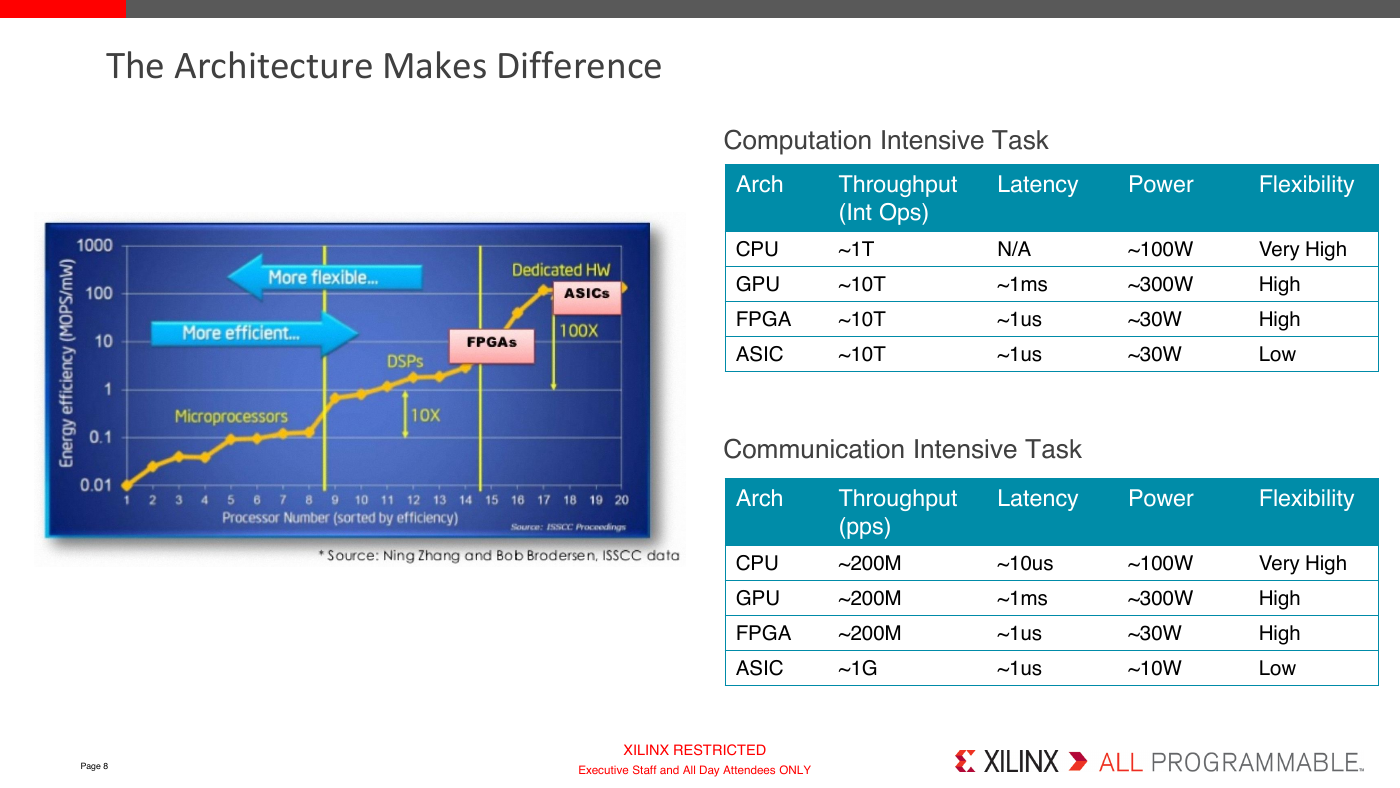

The Architecture Makes the Difference

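Computation Intensive Task

| Arch | Throughput (Int Ops) | Latency | Power | Flexibility |
|------|----------------------|---------|-------|-------------|
| CPU  | ~1T                  | N/A     | ~100 W | Very High  |
| GPU  | ~10T                 | ~1 ms   | ~300 W | High       |
| FPGA | ~10T                 | ~1 µs   | ~30 W  | High       |
| ASIC | ~10T                 | ~1 µs   | ~30 W  | Low        |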

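Communication Intensive Task

| Arch | Throughput (pps) | Latency | Power | Flexibility |
|------|------------------|---------|-------|-------------|
| CPU  | ~200M            | ~10 µs  | ~100 W | Very High  |
| GPU  | ~200M            | ~1 ms   | ~300 W | High       |
| FPGA | ~200M            | ~1 µs   | ~30 W  | High       |
| ASIC | ~1G              | ~1 µs   | ~10 W  | Low        |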
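One way to read the computation-intensive figures together is to divide throughput by power. The sketch below does that arithmetic using the order-of-magnitude values from the slide; throughput per watt is a derived metric that the slide itself does not state, "Int Ops" is assumed to mean integer operations per second, and the names `ARCH`, `ops_per_watt`, and `efficiency_table` are hypothetical.

```python
# Order-of-magnitude figures from the computation-intensive comparison:
# (throughput in integer ops/s, power in watts). Assumed units, see lead-in.
ARCH = {
    "CPU": (1e12, 100.0),
    "GPU": (10e12, 300.0),
    "FPGA": (10e12, 30.0),
    "ASIC": (10e12, 30.0),
}


def ops_per_watt(throughput_ops, power_w):
    """Derived efficiency metric: operations per second per watt."""
    return throughput_ops / power_w


def efficiency_table(arch=ARCH):
    """Return {architecture: ops per watt} for every entry in `arch`."""
    return {name: ops_per_watt(t, p) for name, (t, p) in arch.items()}


if __name__ == "__main__":
    for name, eff in efficiency_table().items():
        print(f"{name}: ~{eff / 1e9:.0f} Gops/W")
```

Because the inputs are rough estimates, only the ordering and the approximate ratios are meaningful: on these numbers the FPGA and ASIC entries come out about ten times the GPU and about thirty times the CPU per watt.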
