Markov Processes for Stochastic Modeling

Copyright Page

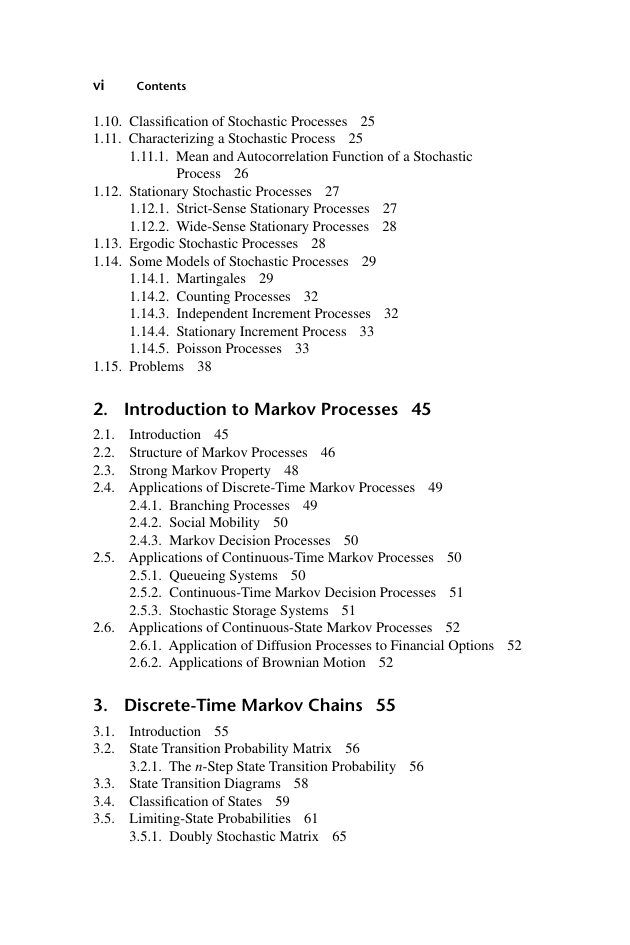

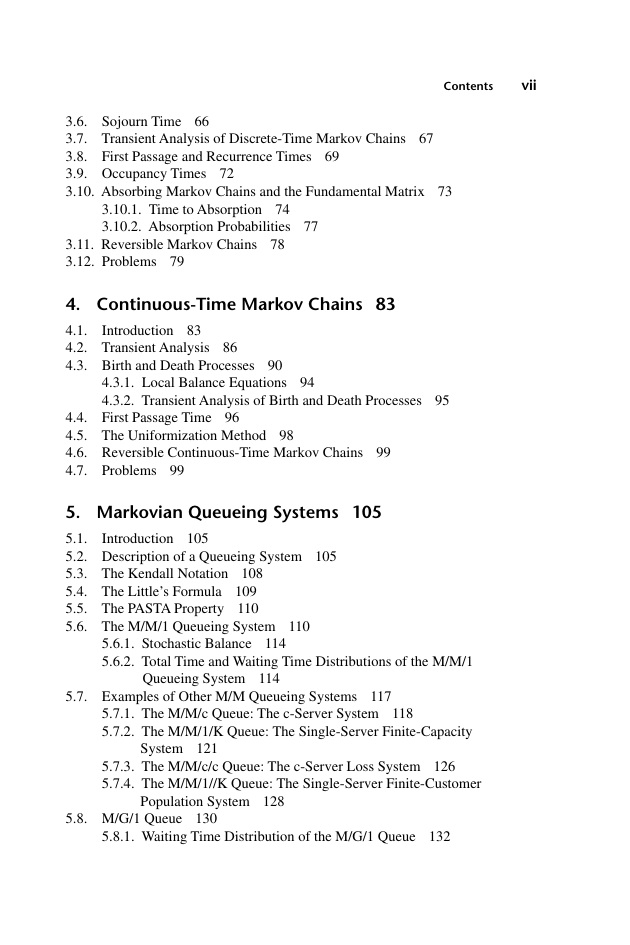

Contents

Preface

Chapter 1. Basic Concepts

1.1 Review of Probability

1.1.1 Conditional Probability

1.1.2 Independence

1.1.3 Total Probability and the Bayes’ Theorem

1.2 Random Variables

1.2.1 Distribution Functions

1.2.2 Discrete Random Variables

1.2.3 Continuous Random Variables

1.2.4 Expectations

1.2.5 Expectation of Nonnegative Random Variables

1.2.6 Moments of Random Variables and the Variance

1.3 Transform Methods

1.3.1 The s-Transform

1.3.2 The z-Transform

1.4 Bivariate Random Variables

1.4.1 Discrete Bivariate Random Variables

1.4.2 Continuous Bivariate Random Variables

1.4.3 Covariance and Correlation Coefficient

1.5 Many Random Variables

1.6 Fubini’s Theorem

1.7 Sums of Independent Random Variables

1.8 Some Probability Distributions

1.8.1 The Bernoulli Distribution

1.8.2 The Binomial Distribution

1.8.3 The Geometric Distribution

1.8.4 The Pascal Distribution

1.8.5 The Poisson Distribution

1.8.6 The Exponential Distribution

1.8.7 The Erlang Distribution

1.8.8 Normal Distribution

1.9 Introduction to Stochastic Processes

1.10 Classification of Stochastic Processes

1.11 Characterizing a Stochastic Process

1.11.1 Mean and Autocorrelation Function of a Stochastic Process

1.12 Stationary Stochastic Processes

1.12.1 Strict-Sense Stationary Processes

1.12.2 Wide-Sense Stationary Processes

1.13 Ergodic Stochastic Processes

1.14 Some Models of Stochastic Processes

1.14.1 Martingales

1.14.2 Counting Processes

1.14.3 Independent Increment Processes

1.14.4 Stationary Increment Process

1.14.5 Poisson Processes

1.15 Problems

Chapter 2. Introduction to Markov Processes

2.1 Introduction

2.2 Structure of Markov Processes

2.3 Strong Markov Property

2.4 Applications of Discrete-Time Markov Processes

2.4.1 Branching Processes

2.4.2 Social Mobility

2.4.3 Markov Decision Processes

2.5 Applications of Continuous-Time Markov Processes

2.5.1 Queueing Systems

2.5.2 Continuous-Time Markov Decision Processes

2.5.3 Stochastic Storage Systems

2.6 Applications of Continuous-State Markov Processes

2.6.1 Application of Diffusion Processes to Financial Options

2.6.2 Applications of Brownian Motion

Chapter 3. Discrete-Time Markov Chains

3.1 Introduction

3.2 State Transition Probability Matrix

3.2.1 The n-Step State Transition Probability

3.3 State Transition Diagrams

3.4 Classification of States

3.5 Limiting-State Probabilities

3.5.1 Doubly Stochastic Matrix

3.6 Sojourn Time

3.7 Transient Analysis of Discrete-Time Markov Chains

3.8 First Passage and Recurrence Times

3.9 Occupancy Times

3.10 Absorbing Markov Chains and the Fundamental Matrix

3.10.1 Time to Absorption

3.10.2 Absorption Probabilities

3.11 Reversible Markov Chains

3.12 Problems

Chapter 4. Continuous-Time Markov Chains

4.1 Introduction

4.2 Transient Analysis

4.3 Birth and Death Processes

4.3.1 Local Balance Equations

4.3.2 Transient Analysis of Birth and Death Processes

4.4 First Passage Time

4.5 The Uniformization Method

4.6 Reversible Continuous-Time Markov Chains

4.7 Problems

Chapter 5. Markovian Queueing Systems

5.1 Introduction

5.2 Description of a Queueing System

5.3 The Kendall Notation

5.4 The Little’s Formula

5.5 The PASTA Property

5.6 The M/M/1 Queueing System

5.6.1 Stochastic Balance

5.6.2 Total Time and Waiting Time Distributions of the M/M/1 Queueing System

5.7 Examples of Other M/M Queueing Systems

5.7.1 The M/M/c Queue: The c-Server System

5.7.2 The M/M/1/K Queue: The Single-Server Finite-Capacity System

5.7.3 The M/M/c/c Queue: The c-Server Loss System

5.7.4 The M/M/1//K Queue: The Single-Server Finite-Customer Population System

5.8 M/G/1 Queue

5.8.1 Waiting Time Distribution of the M/G/1 Queue

5.8.2 The M/Ek/1 Queue

5.8.3 The M/D/1 Queue

5.8.4 The M/M/1 Queue Revisited

5.8.5 The M/HK/1 Queue

5.9 G/M/1 Queue

5.9.1 The Ek/M/1 Queue

5.9.2 The D/M/1 Queue

5.9.3 The H2/ M/1 Queue

5.10 Applications of Markovian Queues

5.11 Problems

Chapter 6. Markov Renewal Processes

6.1 Introduction

6.2 The Renewal Equation

6.2.1 Alternative Approach

6.3 The Elementary Renewal Theorem

6.4 Random Incidence and Residual Time

6.5 Markov Renewal Process

6.5.1 The Markov Renewal Function

6.6 Semi-Markov Processes

6.6.1 Discrete-Time Semi-Markov Processes

6.6.2 Continuous-Time Semi-Markov Processes

6.7 Markov Jump Processes

6.7.1 The Homogeneous Markov Jump Process

6.8 Problems

Chapter 7. Markovian Arrival Processes

7.1 Introduction

7.2 Overview of Matrix-Analytic Methods

7.3 Markovian Arrival Process

7.3.1 Properties of MAP

7.4 Batch Markovian Arrival Process

7.4.1 Properties of BMAP

7.5 Markov-Modulated Poisson Process

7.5.1 The Interrupted Poisson Process

7.5.2 The Switched Poisson Process

7.5.3 Properties of MMPP

7.5.4 The MMPP(2)/M/1 Queue

7.6 Markov-Modulated Bernoulli Process

7.6.1 The MMBP(2)

7.7 Sample Applications of MAP and Its Derivatives

7.8 Problems

Chapter 8. Random Walk

8.1 Introduction

8.2 The Two-Dimensional Random Walk

8.3 Random Walk as a Markov Chain

8.4 Symmetric Random Walk as a Martingale

8.5 Random Walk with Barriers

8.6 Gambler’s Ruin

8.6.1 Ruin Probability

8.6.2 Duration of a Game

8.7 First Return Times

8.8 First Passage Times

8.9 Maximum of a Random Walk

8.10 Random Walk on a Graph

8.10.1 Random Walk on a Weighted Graph

8.11 Markov Random Walk

8.11.1 Markov Random Walk in Semisupervised Machine Learning

8.12 Random Walk with Correlation

8.13 Continuous-Time Random Walk

8.13.1 The Master Equation

8.14 Sample Applications of Random Walk

8.14.1 The Ballot Problem

8.14.2 Web Search

8.14.3 Mobility Models in Mobile Networks

8.14.4 Insurance Risk

8.14.5 Content of a Dam

8.14.6 Cash Management

8.15 Problems

Chapter 9. Brownian Motion and Diffusion Processes

9.1 Introduction

9.2 Brownian Motion

9.2.1 Brownian Motion with Drift

9.2.2 Brownian Motion as a Markov Process

9.2.3 Brownian Motion as a Martingale

9.2.4 First Passage Time of a Brownian Motion

9.2.5 Maximum of a Brownian Motion

9.2.6 First Passage Time in an Interval

9.2.7 The Brownian Bridge

9.3 Introduction to Stochastic Calculus

9.3.1 The Ito Integral

9.3.2 The Stochastic Differential

9.3.3 The Ito’s Formula

9.3.4 Stochastic Differential Equations

9.4 Geometric Brownian Motion

9.5 Fractional Brownian Motion

9.6 Application of Brownian Motion to Option Pricing

9.7 Random Walk Approximation of Brownian Motion

9.8 The Ornstein-Uhlenbeck Process

9.8.1 Mean Reverting Ornstein-Uhlenbeck Process

9.8.2 Applications of the Ornstein-Uhlenbeck Process

9.9 Diffusion Processes

9.10 Examples of Diffusion Processes

9.10.1 Brownian Motion

9.10.2 Brownian Motion with Drift

9.10.3 Levy Processes

9.11 Relationship Between the Diffusion Process and Random Walk

9.12 Problems

Chapter 10. Controlled Markov Processes

10.1 Introduction

10.2 Markov Decision Processes

10.2.1 Overview of Dynamic Programming

10.2.2 Markov Reward Processes

10.2.3 MDP Basics

10.2.4 MDPs with Discounting

10.2.5 Solution Methods

10.3 Semi-Markov Decision Processes

10.3.1 Semi-Markov Reward Model

10.3.2 Discounted Reward

10.3.3 Analysis of the Continuous-Decision-Interval SMDPs

10.3.4 Solution by Policy-Iteration

10.3.5 SMDP with Discounting

10.3.6 Solution by Policy Iteration when Discounting Is Used

10.3.7 Analysis of the Discrete-Decision-Interval SMDPs with Discounting

10.3.8 Continuous-Time Markov Decision Processes

10.3.9 Applications of Semi-Markov Decision Processes

10.4 Partially Observable Markov Decision Processes

10.4.1 Partially Observable Markov Processes

10.4.2 POMDP Basics

10.4.3 Solving POMDPs

10.4.4 Computing the Optimal Policy

10.4.5 Approximate Solutions of POMDP

10.5 Problems

Chapter 11. Hidden Markov Models

11.1 Introduction

11.2 HMM Basics

11.3 HMM Assumptions

11.4 Three Fundamental Problems

11.5 Solution Methods

11.5.1 The Evaluation Problem

11.5.2 The Decoding Problem and the Viterbi Algorithm

11.5.3 The Learning Problem and the Baum-Welch Algorithm

11.6 Types of Hidden Markov Models

11.7 Hidden Markov Models with Silent States

11.8 Extensions of Hidden Markov Models

11.8.1 Hierarchical Hidden Markov Model

11.8.2 Factorial Hidden Markov Model

11.8.3 Coupled Hidden Markov Model

11.8.4 Hidden Semi-Markov Models

11.8.5 Profile HMMs for Biological Sequence Analysis

11.9 Other Extensions of HMM

11.10 Problems

Chapter 12. Markov Random Fields

12.1 Introduction

12.2 Markov Random Fields

12.2.1 Graphical Representation

12.2.2 Gibbs Random Fields and the Hammersley-Clifford Theorem

12.3 Examples of Markov Random Fields

12.3.1 The Ising Model

12.3.2 The Potts Model

12.3.3 Gauss-Markov Random Fields

12.4 Hidden Markov Random Fields

12.5 Applications of Markov Random Fields

12.6 Problems

Chapter 13. Markov Point Processes

13.1 Introduction

13.2 Temporal Point Processes

13.2.1 Specific Temporal Point Processes

13.3 Spatial Point Processes

13.3.1 Specific Spatial Point Processes

13.4 Spatial-Temporal Point Processes

13.5 Operations on Point Processes

13.5.1 Thinning

13.5.2 Superposition

13.5.3 Clustering

13.6 Marked Point Processes

13.7 Markov Point Processes

13.8 Markov Marked Point Processes

13.9 Applications of Markov Point Processes

13.10 Problems

Chapter 14. Markov Chain Monte Carlo

14.1 Introduction

14.2 Monte Carlo Simulation Basics

14.2.1 Generating Random Variables from the Random Numbers

14.2.2 Monte Carlo Integration

14.3 Markov Chains Revisited

14.4 MCMC Simulation

14.4.1 The Metropolis-Hastings Algorithm

14.4.2 Gibbs Sampling

14.5 Applications of MCMC

14.5.1 Simulated Annealing

14.5.2 Inference in Belief Networks

14.6 Choice of Method

14.7 Problems

References

Index

2023年江西萍乡中考道德与法治真题及答案.doc

2023年江西萍乡中考道德与法治真题及答案.doc 2012年重庆南川中考生物真题及答案.doc

2012年重庆南川中考生物真题及答案.doc 2013年江西师范大学地理学综合及文艺理论基础考研真题.doc

2013年江西师范大学地理学综合及文艺理论基础考研真题.doc 2020年四川甘孜小升初语文真题及答案I卷.doc

2020年四川甘孜小升初语文真题及答案I卷.doc 2020年注册岩土工程师专业基础考试真题及答案.doc

2020年注册岩土工程师专业基础考试真题及答案.doc 2023-2024学年福建省厦门市九年级上学期数学月考试题及答案.doc

2023-2024学年福建省厦门市九年级上学期数学月考试题及答案.doc 2021-2022学年辽宁省沈阳市大东区九年级上学期语文期末试题及答案.doc

2021-2022学年辽宁省沈阳市大东区九年级上学期语文期末试题及答案.doc 2022-2023学年北京东城区初三第一学期物理期末试卷及答案.doc

2022-2023学年北京东城区初三第一学期物理期末试卷及答案.doc 2018上半年江西教师资格初中地理学科知识与教学能力真题及答案.doc

2018上半年江西教师资格初中地理学科知识与教学能力真题及答案.doc 2012年河北国家公务员申论考试真题及答案-省级.doc

2012年河北国家公务员申论考试真题及答案-省级.doc 2020-2021学年江苏省扬州市江都区邵樊片九年级上学期数学第一次质量检测试题及答案.doc

2020-2021学年江苏省扬州市江都区邵樊片九年级上学期数学第一次质量检测试题及答案.doc 2022下半年黑龙江教师资格证中学综合素质真题及答案.doc

2022下半年黑龙江教师资格证中学综合素质真题及答案.doc