CHAPTER 1

1.1

Let

$$r_u(k) = E[u(n)u^*(n-k)] \tag{1}$$

$$r_y(k) = E[y(n)y^*(n-k)] \tag{2}$$

We are given that

$$y(n) = u(n+a) - u(n-a) \tag{3}$$

Hence, substituting Eq. (3) into (2), and then using Eq. (1), we get

$$\begin{aligned}
r_y(k) &= E\big[(u(n+a) - u(n-a))(u^*(n+a-k) - u^*(n-a-k))\big] \\
&= 2r_u(k) - r_u(2a+k) - r_u(-2a+k)
\end{aligned}$$
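As a sanity check on this result, the sketch below verifies the identity exactly for one illustrative case: a real moving-average process $u(n) = \sum_j h_j v(n-j)$ driven by unit-variance white noise, for which $r_u(k) = \sum_j h_j h_{j-k}$. The coefficients `h`, the integer shift `a`, and the helper names are assumptions chosen for illustration; they are not part of the problem statement.

```python
def autocorr(h, k):
    """r(k) = sum_j h[j] * h[j - k] for a real sequence stored as {index: value}."""
    return sum(v * h.get(j - k, 0) for j, v in h.items())


def difference_filter(h, a):
    """Impulse response g[m] = h[m + a] - h[m - a] of y(n) = u(n + a) - u(n - a)."""
    g = {}
    for j, v in h.items():
        g[j - a] = g.get(j - a, 0) + v   # contribution of u(n + a)
        g[j + a] = g.get(j + a, 0) - v   # contribution of -u(n - a)
    return g


h = {0: 1, 1: 2, 2: -1, 3: 3}   # hypothetical MA coefficients
a = 2                            # hypothetical integer shift
g = difference_filter(h, a)


def identity_holds(k):
    """Compare r_y(k) with 2 r_u(k) - r_u(2a + k) - r_u(-2a + k)."""
    lhs = autocorr(g, k)
    rhs = 2 * autocorr(h, k) - autocorr(h, 2 * a + k) - autocorr(h, -2 * a + k)
    return lhs == rhs


all_lags_ok = all(identity_holds(k) for k in range(-10, 11))
print(all_lags_ok)
```

Integer coefficients keep the comparison exact, so no tolerance is needed.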

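1.2 We know that the correlation matrix $\mathbf{R}$ is Hermitian; that is

$$\mathbf{R}^H = \mathbf{R}$$

Given that the inverse matrix $\mathbf{R}^{-1}$ exists, we may write

$$\mathbf{R}^{-1}\mathbf{R}^H = \mathbf{I}$$

where $\mathbf{I}$ is the identity matrix. Taking the Hermitian transpose of both sides:

$$\mathbf{R}\mathbf{R}^{-H} = \mathbf{I}$$

Hence,

$$\mathbf{R}^{-H} = \mathbf{R}^{-1}$$

That is, the inverse matrix $\mathbf{R}^{-1}$ is Hermitian.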
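The property can be spot-checked numerically. The sketch below inverts one small Hermitian matrix in closed form and compares the inverse with its own Hermitian transpose. The matrix entries and helper names are hypothetical, chosen only for illustration.

```python
def inverse_2x2(m):
    """Closed-form inverse of a 2-by-2 complex matrix given as nested lists."""
    (p, q), (s, t) = m
    det = p * t - q * s
    return [[t / det, -q / det], [-s / det, p / det]]


def hermitian_transpose(m):
    """Conjugate transpose of a square matrix given as nested lists."""
    n = len(m)
    return [[m[j][i].conjugate() for j in range(n)] for i in range(n)]


# Hypothetical Hermitian matrix: real diagonal, conjugate off-diagonal pair.
R = [[2 + 0j, 1 + 1j],
     [1 - 1j, 3 + 0j]]

R_inv = inverse_2x2(R)
R_inv_H = hermitian_transpose(R_inv)
is_hermitian = all(
    abs(R_inv[i][j] - R_inv_H[i][j]) < 1e-12
    for i in range(2) for j in range(2)
)
print(R_inv, is_hermitian)
```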

1.3

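For the case of a two-by-two matrix, we may write

$$\mathbf{R}_u = \mathbf{R}_s + \mathbf{R}_\nu
= \begin{bmatrix} r_{11} & r_{12} \\ r_{21} & r_{22} \end{bmatrix}
+ \begin{bmatrix} \sigma^2 & 0 \\ 0 & \sigma^2 \end{bmatrix}
= \begin{bmatrix} r_{11} + \sigma^2 & r_{12} \\ r_{21} & r_{22} + \sigma^2 \end{bmatrix}$$

For $\mathbf{R}_u$ to be nonsingular, we require

$$\det(\mathbf{R}_u) = (r_{11} + \sigma^2)(r_{22} + \sigma^2) - r_{12}r_{21} > 0$$

With $r_{12} = r_{21}$ for real data, this condition reduces to

$$(r_{11} + \sigma^2)(r_{22} + \sigma^2) - r_{12}^2 > 0$$

Since this is quadratic in $\sigma^2$, we may impose the following condition on $\sigma^2$ for nonsingularity of $\mathbf{R}_u$:

$$\sigma^2 > \frac{1}{2}(r_{11} + r_{22})\left[\left(1 - \frac{4\Delta_r}{(r_{11} + r_{22})^2}\right)^{1/2} - 1\right]$$

where

$$\Delta_r = r_{11}r_{22} - r_{12}^2$$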
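The bound can be evaluated numerically. The sketch below takes the threshold to be the larger root of the quadratic $(r_{11} + \sigma^2)(r_{22} + \sigma^2) - r_{12}^2 = 0$ in $\sigma^2$, assuming real data and $r_{11} + r_{22} > 0$, and checks that the determinant vanishes at the threshold and is positive just above it. The numerical entries and function names are hypothetical.

```python
import math


def sigma2_threshold(r11, r22, r12):
    """Larger root of (r11 + s)(r22 + s) - r12**2 = 0, a quadratic in s = sigma^2."""
    trace = r11 + r22
    delta_r = r11 * r22 - r12 ** 2
    return 0.5 * trace * (math.sqrt(1.0 - 4.0 * delta_r / trace ** 2) - 1.0)


def det_ru(r11, r22, r12, sigma2):
    """Determinant of R_u = R_s + sigma^2 I for real data (r12 = r21)."""
    return (r11 + sigma2) * (r22 + sigma2) - r12 ** 2


# Hypothetical entries; in the first case R_s alone is already nonsingular.
for r11, r22, r12 in [(2.0, 2.0, 1.0), (1.0, 1.0, 1.0)]:
    s_min = sigma2_threshold(r11, r22, r12)
    print(s_min, det_ru(r11, r22, r12, s_min), det_ru(r11, r22, r12, s_min + 0.1))
```

When $\Delta_r > 0$ the threshold is negative, so under these assumptions any nonnegative noise variance keeps the determinant positive.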

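1.4 We are given

$$\mathbf{R} = \begin{bmatrix} 1 & 1 \\ 1 & 1 \end{bmatrix}$$

This matrix is nonnegative definite because

$$\mathbf{a}^T\mathbf{R}\mathbf{a}
= [a_1, a_2] \begin{bmatrix} 1 & 1 \\ 1 & 1 \end{bmatrix} \begin{bmatrix} a_1 \\ a_2 \end{bmatrix}
= a_1^2 + 2a_1a_2 + a_2^2
= (a_1 + a_2)^2 \geq 0$$

for all values of $a_1$ and $a_2$.

(Positive definiteness is stronger than nonnegative definiteness; it does not hold here, because the quadratic form vanishes for any nonzero vector with $a_2 = -a_1$.)

The matrix $\mathbf{R}$ is singular because

$$\det(\mathbf{R}) = (1)^2 - (1)^2 = 0$$

Hence, it is possible for a matrix to be nonnegative definite and yet singular.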
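A few numerical evaluations make the behaviour of the quadratic form concrete. The test vectors below are arbitrary choices for illustration; the vector (1, -1) is included because the form equals $(a_1 + a_2)^2$ and therefore vanishes there, and the determinant is evaluated alongside.

```python
def quadratic_form(a1, a2):
    """a^T R a for R = [[1, 1], [1, 1]], expanded term by term."""
    return a1 * a1 + 2 * a1 * a2 + a2 * a2


det_R = 1 * 1 - 1 * 1

# Hypothetical test vectors, including one with a2 = -a1.
for a1, a2 in [(1, 0), (1, 2), (-3, 1), (1, -1)]:
    print((a1, a2), quadratic_form(a1, a2), (a1 + a2) ** 2)
print("det R =", det_R)
```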

1.5

(a)

RM+1

=

r 0( )

r

rH

RM

Let

1–

RM+1

=

a

b

bH

C

where a, b and C are to be determined. Multiplying (1) by (2):

IM+1

=

r 0( )

r

rH

RM

a

b

bH

C

where IM+1 is the identity matrix. Therefore,

r 0( )a

+

rHb

1=

ra RMb

+

0=

rbH RMC

+

IM=

r 0( )bH rHC+

0T=

From Eq. (4):

3

(1)

(2)

(3)

(4)

(5)

(6)

�

b

–=

1– ra

RM

Hence, from (3) and (7):

a

=

1

------------------------------------

1– r

rHRM

r 0( )

–

Correspondingly,

b

–=

1– r

RM

------------------------------------

1– r

rHRM

r 0( )

–

From (5):

C

=

1–

RM

–

1– rbH

RM

=

1–

RM

+

1– rrHRM

1–

RM

------------------------------------

1– r

rHRM

r 0( )

–

As a check, the results of Eqs. (9) and (10) should satisfy Eq. (6).

(7)

(8)

(9)

(10)

r 0( )bH rHC+

=

–

1–

r 0( )rHRM

------------------------------------

1– r

rHRM

r 0( )

–

+

1–

rHRM

+

1–

1– rrHRM

rHRM

-------------------------------------

1– r

rHRM

r 0( )

–

0T=

We have thus shown that

1–

RM+1

=

=

0

0

0

0

0T

1–

RM

0T

1–

RM

+

a

1

1– r

RM

1–

rHRM

–

1– rrHRM

RM

1–

+

a

1

1– r

RM

–

[

1

–

1–

rHRM

]

4

�

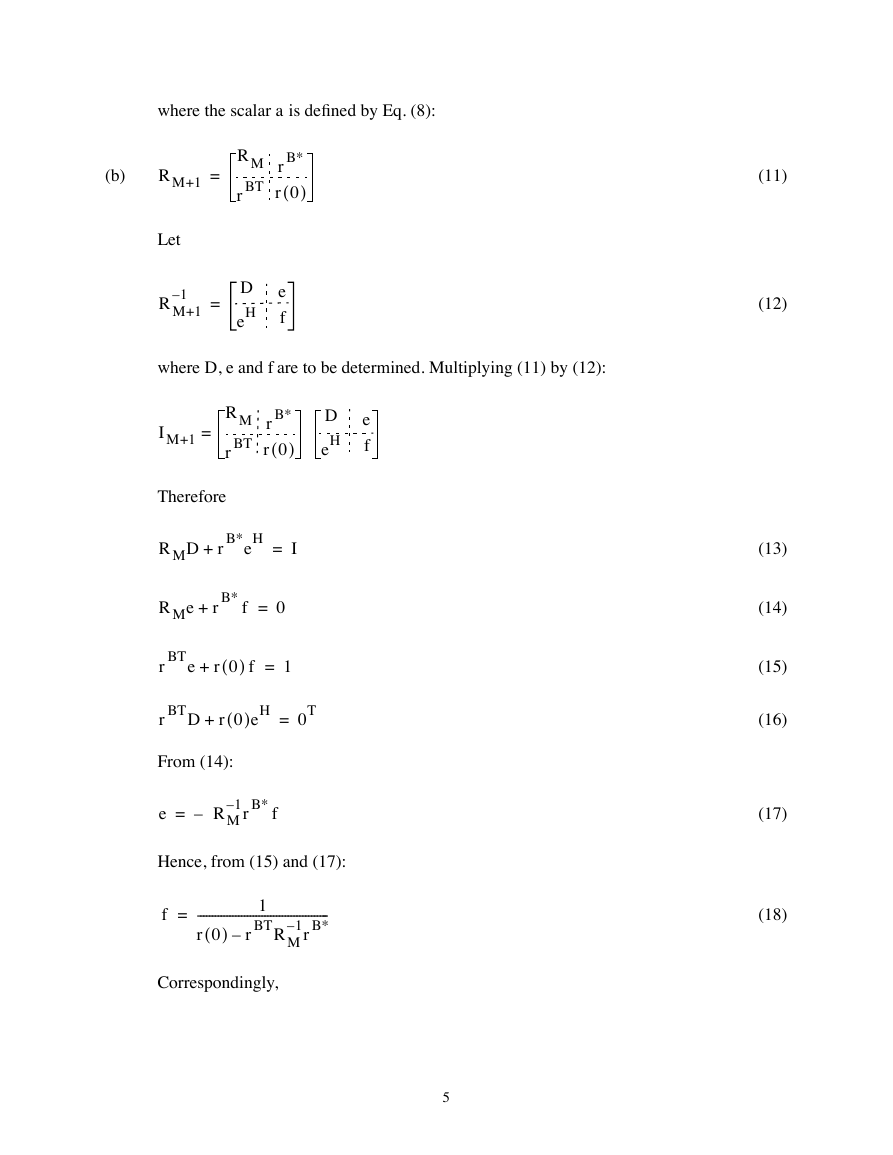

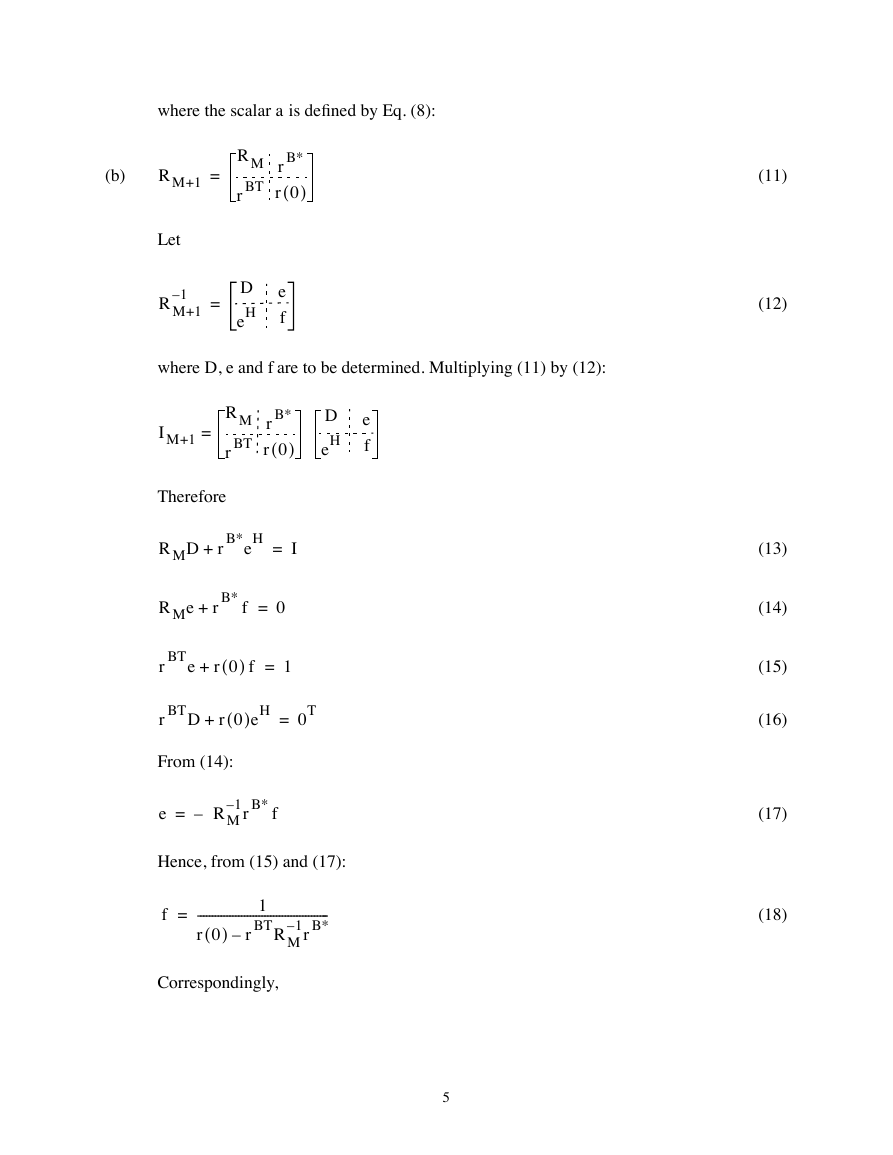

where the scalar a is defined by Eq. (8):
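The partitioned inverse of part (a) can be verified exactly on a small example. The sketch below assumes real data (so the Hermitian transpose reduces to the ordinary transpose), takes $M = 2$, and uses hypothetical autocorrelation values $r(0) = 2$, $r(1) = 1$, $r(2) = 1/2$; the helper functions are likewise illustrative. Exact rational arithmetic avoids any rounding tolerance.

```python
from fractions import Fraction as F


def matmul(A, B):
    """Product of two matrices given as nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*B)] for row in A]


def inverse_2x2(m):
    """Closed-form inverse of a 2-by-2 matrix."""
    (p, q), (s, t) = m
    det = p * t - q * s
    return [[t / det, -q / det], [-s / det, p / det]]


# Hypothetical real autocorrelation values r(0), r(1), r(2).
r0, r1, r2 = F(2), F(1), F(1, 2)
R_M = [[r0, r1], [r1, r0]]
r = [[r1], [r2]]                       # column vector r
R_M1 = [[r0, r1, r2], [r1, r0, r1], [r2, r1, r0]]

R_M_inv = inverse_2x2(R_M)
g = matmul(R_M_inv, r)                 # R_M^{-1} r, a 2-by-1 column
rT_g = r1 * g[0][0] + r2 * g[1][0]     # scalar r^T R_M^{-1} r

a = 1 / (r0 - rT_g)                                        # Eq. (8)
b = [-a * g[0][0], -a * g[1][0]]                           # Eq. (9)
C = [[R_M_inv[i][j] + a * g[i][0] * g[j][0] for j in range(2)]
     for i in range(2)]                                    # Eq. (10)

# Assemble [[a, b^T], [b, C]] and multiply by R_{M+1}.
R_M1_inv = [[a, b[0], b[1]],
            [b[0], C[0][0], C[0][1]],
            [b[1], C[1][0], C[1][1]]]
product = matmul(R_M1, R_M1_inv)
identity = [[F(int(i == j)) for j in range(3)] for i in range(3)]
print(product == identity)
```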

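(b)

$$\mathbf{R}_{M+1} = \begin{bmatrix} \mathbf{R}_M & \mathbf{r}^{B*} \\ \mathbf{r}^{BT} & r(0) \end{bmatrix} \tag{11}$$

Let

$$\mathbf{R}_{M+1}^{-1} = \begin{bmatrix} \mathbf{D} & \mathbf{e} \\ \mathbf{e}^H & f \end{bmatrix} \tag{12}$$

where $\mathbf{D}$, $\mathbf{e}$ and $f$ are to be determined. Multiplying (11) by (12):

$$\mathbf{I}_{M+1} = \begin{bmatrix} \mathbf{R}_M & \mathbf{r}^{B*} \\ \mathbf{r}^{BT} & r(0) \end{bmatrix} \begin{bmatrix} \mathbf{D} & \mathbf{e} \\ \mathbf{e}^H & f \end{bmatrix}$$

Therefore

$$\mathbf{R}_M\mathbf{D} + \mathbf{r}^{B*}\mathbf{e}^H = \mathbf{I} \tag{13}$$

$$\mathbf{R}_M\mathbf{e} + \mathbf{r}^{B*}f = \mathbf{0} \tag{14}$$

$$\mathbf{r}^{BT}\mathbf{e} + r(0)f = 1 \tag{15}$$

$$\mathbf{r}^{BT}\mathbf{D} + r(0)\mathbf{e}^H = \mathbf{0}^T \tag{16}$$

From (14):

$$\mathbf{e} = -\mathbf{R}_M^{-1}\mathbf{r}^{B*}f \tag{17}$$

Hence, from (15) and (17):

$$f = \frac{1}{r(0) - \mathbf{r}^{BT}\mathbf{R}_M^{-1}\mathbf{r}^{B*}} \tag{18}$$

Correspondingly,

$$\mathbf{e} = -\frac{\mathbf{R}_M^{-1}\mathbf{r}^{B*}}{r(0) - \mathbf{r}^{BT}\mathbf{R}_M^{-1}\mathbf{r}^{B*}} \tag{19}$$

From (13):

$$\mathbf{D} = \mathbf{R}_M^{-1} - \mathbf{R}_M^{-1}\mathbf{r}^{B*}\mathbf{e}^H
= \mathbf{R}_M^{-1} + \frac{\mathbf{R}_M^{-1}\mathbf{r}^{B*}\mathbf{r}^{BT}\mathbf{R}_M^{-1}}{r(0) - \mathbf{r}^{BT}\mathbf{R}_M^{-1}\mathbf{r}^{B*}} \tag{20}$$

As a check, the results of Eqs. (19) and (20) must satisfy Eq. (16). Thus

$$\mathbf{r}^{BT}\mathbf{D} + r(0)\mathbf{e}^H
= \mathbf{r}^{BT}\mathbf{R}_M^{-1}
+ \frac{\mathbf{r}^{BT}\mathbf{R}_M^{-1}\mathbf{r}^{B*}\mathbf{r}^{BT}\mathbf{R}_M^{-1}}{r(0) - \mathbf{r}^{BT}\mathbf{R}_M^{-1}\mathbf{r}^{B*}}
- \frac{r(0)\mathbf{r}^{BT}\mathbf{R}_M^{-1}}{r(0) - \mathbf{r}^{BT}\mathbf{R}_M^{-1}\mathbf{r}^{B*}}
= \mathbf{0}^T$$

We have thus shown that

$$\begin{aligned}
\mathbf{R}_{M+1}^{-1}
&= \begin{bmatrix} \mathbf{R}_M^{-1} & \mathbf{0} \\ \mathbf{0}^T & 0 \end{bmatrix}
+ f \begin{bmatrix} \mathbf{R}_M^{-1}\mathbf{r}^{B*}\mathbf{r}^{BT}\mathbf{R}_M^{-1} & -\mathbf{R}_M^{-1}\mathbf{r}^{B*} \\ -\mathbf{r}^{BT}\mathbf{R}_M^{-1} & 1 \end{bmatrix} \\
&= \begin{bmatrix} \mathbf{R}_M^{-1} & \mathbf{0} \\ \mathbf{0}^T & 0 \end{bmatrix}
+ f \begin{bmatrix} -\mathbf{R}_M^{-1}\mathbf{r}^{B*} \\ 1 \end{bmatrix}
\begin{bmatrix} -\mathbf{r}^{BT}\mathbf{R}_M^{-1} & 1 \end{bmatrix}
\end{aligned}$$

where the scalar $f$ is defined by Eq. (18).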
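The result of part (b) can be checked in the same spirit for complex data. The sketch below builds a 3-by-3 Hermitian Toeplitz matrix from hypothetical values $r(0)$, $r(1)$, $r(2)$ with $r(-k) = r^*(k)$, forms $f$, $\mathbf{e}$ and $\mathbf{D}$ from Eqs. (18) to (20), and measures how far the product with $\mathbf{R}_{M+1}$ is from the identity. All numerical values and helper names are assumptions for illustration, and floating-point arithmetic means the check holds only to within rounding error.

```python
def matmul(A, B):
    """Product of two matrices given as nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*B)] for row in A]


def inverse_2x2(m):
    """Closed-form inverse of a 2-by-2 complex matrix."""
    (p, q), (s, t) = m
    det = p * t - q * s
    return [[t / det, -q / det], [-s / det, p / det]]


# Hypothetical complex autocorrelation values; r(-k) = conj(r(k)).
r0, r1, r2 = 2.0 + 0j, 0.5 + 0.5j, 0.2 - 0.1j
c1, c2 = r1.conjugate(), r2.conjugate()
R_M = [[r0, r1], [c1, r0]]
rB_conj = [[r2], [r1]]          # column r^{B*}: last column of R_{M+1} above r(0)
rB_T = [[c2, c1]]               # row r^{BT}: last row of R_{M+1} left of r(0)
R_M1 = [[r0, r1, r2], [c1, r0, r1], [c2, c1, r0]]

R_M_inv = inverse_2x2(R_M)
col = matmul(R_M_inv, rB_conj)  # R_M^{-1} r^{B*}, 2-by-1
row = matmul(rB_T, R_M_inv)     # r^{BT} R_M^{-1}, 1-by-2

f = 1 / (r0 - matmul(rB_T, col)[0][0])                     # Eq. (18)
e = [-f * col[0][0], -f * col[1][0]]                       # Eq. (19)
D = [[R_M_inv[i][j] + f * col[i][0] * row[0][j] for j in range(2)]
     for i in range(2)]                                    # Eq. (20)

# Assemble [[D, e], [e^H, f]] and compare R_{M+1} times it with the identity.
R_M1_inv = [[D[0][0], D[0][1], e[0]],
            [D[1][0], D[1][1], e[1]],
            [e[0].conjugate(), e[1].conjugate(), f]]
product = matmul(R_M1, R_M1_inv)
max_error = max(abs(product[i][j] - (1 if i == j else 0))
                for i in range(3) for j in range(3))
print(max_error)
```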

1.6

(a) We express the difference equation describing the first-order AR process $u(n)$ as

$$u(n) = v(n) + w_1 u(n-1)$$

where $w_1 = -a_1$. Solving this equation by repeated substitution, we get

$$\begin{aligned}
u(n) &= v(n) + w_1 v(n-1) + w_1^2 u(n-2) \\
&= \cdots \\
&= v(n) + w_1 v(n-1) + w_1^2 v(n-2) + \cdots + w_1^{n-1} v(1)
\end{aligned} \tag{1}$$

Here we have used the initial condition

$$u(0) = 0$$

or equivalently

$$u(1) = v(1)$$

Taking the expected value of both sides of Eq. (1) and using

$$E[v(n)] = \mu \quad \text{for all } n,$$

we get the geometric series

$$E[u(n)] = \mu + w_1\mu + w_1^2\mu + \cdots + w_1^{n-1}\mu
= \begin{cases}
\mu\,\dfrac{1 - w_1^n}{1 - w_1}, & w_1 \neq 1 \\[2ex]
\mu n, & w_1 = 1
\end{cases}$$

This result shows that if $\mu \neq 0$, then $E[u(n)]$ is a function of time $n$. Accordingly, the AR process $u(n)$ is not stationary. If, however, the AR parameter satisfies the condition:

$$|a_1| < 1 \quad \text{or} \quad |w_1| < 1$$

then

$$E[u(n)] \to \frac{\mu}{1 - w_1} \quad \text{as } n \to \infty$$

Under this condition, we say that the AR process is asymptotically stationary to order one.
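The time dependence of the mean and its limit can be illustrated numerically. The sketch below obtains $E[u(n)]$ from the recursion $m(n) = \mu + w_1 m(n-1)$ with $m(0) = 0$, which follows from taking expectations of the difference equation, and compares it with the closed-form geometric sum. The values of `mu` and `w1` and the function names are hypothetical.

```python
from fractions import Fraction as F


def mean_by_recursion(mu, w1, n):
    """E[u(n)] from m(i) = mu + w1 * m(i - 1), starting at m(0) = 0."""
    m = F(0)
    for _ in range(n):
        m = mu + w1 * m
    return m


def mean_closed_form(mu, w1, n):
    """Closed form of the geometric series mu * (1 + w1 + ... + w1**(n - 1))."""
    return mu * n if w1 == 1 else mu * (1 - w1 ** n) / (1 - w1)


mu, w1 = F(1), F(1, 2)          # hypothetical noise mean and AR weight, |w1| < 1
for n in (1, 2, 5, 20):
    print(n, float(mean_by_recursion(mu, w1, n)), float(mean_closed_form(mu, w1, n)))
print("limit:", float(mu / (1 - w1)))
```

With these values the mean climbs toward $\mu/(1 - w_1) = 2$ as $n$ grows, which is the asymptotic stationarity described above.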

(b) When the white noise process $v(n)$ has zero mean, the AR process $u(n)$ will likewise have zero mean. Then

$$\operatorname{var}[v(n)] = \sigma_v^2$$

$$\operatorname{var}[u(n)] = E[u^2(n)] \tag{2}$$

Substituting Eq. (1) into (2), and recognizing that for the white noise process

$$E[v(n)v(k)] = \begin{cases}
\sigma_v^2, & n = k \\
0, & n \neq k
\end{cases} \tag{3}$$

we get the geometric series

$$\operatorname{var}[u(n)] = \sigma_v^2\left(1 + w_1^2 + w_1^4 + \cdots + w_1^{2n-2}\right)
= \begin{cases}
\sigma_v^2\,\dfrac{1 - w_1^{2n}}{1 - w_1^2}, & |w_1| \neq 1 \\[2ex]
\sigma_v^2 n, & |w_1| = 1
\end{cases}$$

When $|a_1| < 1$ or $|w_1| < 1$, then

$$\operatorname{var}[u(n)] \approx \frac{\sigma_v^2}{1 - w_1^2} = \frac{\sigma_v^2}{1 - a_1^2} \quad \text{for large } n$$
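The variance series can be checked by summing the squared weights of Eq. (1) directly. In the sketch below, the noise variance, the AR weight, and the function names are hypothetical; the closed-form helper treats $w_1 = \pm 1$ together because only $w_1^2$ enters the series.

```python
from fractions import Fraction as F


def variance_from_weights(sigma2_v, w1, n):
    """var[u(n)] as sigma_v^2 times the sum of squared weights w1**i, i = 0..n-1."""
    return sigma2_v * sum((w1 ** i) ** 2 for i in range(n))


def variance_closed_form(sigma2_v, w1, n):
    """Closed form of the geometric series in w1**2."""
    if w1 * w1 == 1:
        return sigma2_v * n
    return sigma2_v * (1 - w1 ** (2 * n)) / (1 - w1 ** 2)


sigma2_v, w1 = F(1), F(-3, 4)   # hypothetical noise variance and AR weight
for n in (1, 3, 10, 40):
    print(n, float(variance_from_weights(sigma2_v, w1, n)),
          float(variance_closed_form(sigma2_v, w1, n)))
print("large-n value:", float(sigma2_v / (1 - w1 ** 2)))
```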

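(c) The autocorrelation function of the AR process $u(n)$ equals $E[u(n)u(n-k)]$. Substituting Eq. (1) into this formula, and using Eq. (3), we get, for lag $0 \leq k < n$,

$$E[u(n)u(n-k)] = \sigma_v^2\left(w_1^k + w_1^{k+2} + \cdots + w_1^{k+2(n-k)-2}\right)
= \begin{cases}
\sigma_v^2 w_1^k\,\dfrac{1 - w_1^{2(n-k)}}{1 - w_1^2}, & |w_1| \neq 1 \\[2ex]
\sigma_v^2 w_1^k (n-k), & |w_1| = 1
\end{cases}$$

The sum has $n-k$ terms because, under the initial condition $u(0) = 0$, $u(n-k)$ contains only the noise samples $v(1), \ldots, v(n-k)$.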
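The autocorrelation can also be evaluated directly from the weights in Eq. (1). The sketch below assumes $0 \leq k < n$ and the initial condition $u(0) = 0$, so only the noise samples $v(1), \ldots, v(n-k)$ are shared by $u(n)$ and $u(n-k)$. It compares the exact sum with the value $\sigma_v^2 w_1^k / (1 - w_1^2)$ that the geometric series approaches for $|w_1| < 1$ and large $n$. The numerical values and function names are hypothetical.

```python
from fractions import Fraction as F


def autocorr_from_weights(sigma2_v, w1, n, k):
    """E[u(n)u(n-k)] summed over the noise samples v(1)..v(n-k) shared by both terms."""
    # v(n-k-j) carries weight w1**(j+k) in u(n) and w1**j in u(n-k).
    return sigma2_v * sum(w1 ** (j + k) * w1 ** j for j in range(n - k))


def autocorr_large_n(sigma2_v, w1, k):
    """Limiting value sigma_v^2 * w1**k / (1 - w1**2), valid for |w1| < 1."""
    return sigma2_v * w1 ** k / (1 - w1 ** 2)


sigma2_v, w1, n = F(1), F(1, 2), 40   # hypothetical values with |w1| < 1
for k in range(4):
    exact = autocorr_from_weights(sigma2_v, w1, n, k)
    print(k, float(exact), float(autocorr_large_n(sigma2_v, w1, k)))
```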
