International Journal of Geosciences, 2019, 10, 1-11

http://www.scirp.org/journal/ijg

ISSN Online: 2156-8367

ISSN Print: 2156-8359

A Review of Researches on Deep Learning in Remote Sensing Application

Ming Zhu1,2*, Yongning He2, Qingyu He2

1Institute of Geoscience and Resources, China University of Geosciences, Beijing, China

2Geographic Information Center of Guangxi, Nanning, China

How to cite this paper: Zhu, M., He, Y.N. and He, Q.Y. (2019) A Review of Researches on Deep Learning in Remote Sensing Application. International Journal of Geosciences, 10, 1-11.

https://doi.org/10.4236/ijg.2019.101001

Received: December 18, 2018

Accepted: January 7, 2019

Published: January 10, 2019

Copyright © 2019 by author(s) and Scientific Research Publishing Inc. This work is licensed under the Creative Commons Attribution International License (CC BY 4.0).

http://creativecommons.org/licenses/by/4.0/

Open Access

Abstract

In recent years, deep learning has been widely used in image understanding and has achieved breakthrough progress there. Because remote sensing applications are inseparable from image understanding, researchers have studied the application of deep learning to remote sensing extensively and have extended deep learning methods to many of its application fields. This paper summarizes the basic principles of deep learning and its research progress and typical applications in remote sensing. It introduces the main current deep learning models and their development history, analyzes the research status of deep learning in remote sensing image classification, object detection and change detection, and on this basis summarizes typical applications and their results. Finally, based on the current state of application of deep learning in remote sensing, the main problems and future development directions are summarized.

Keywords

Deep Learning, Remote Sensing Application, CNN, Land Cover Classification,

Object Detection, Change Detection

1. Introduction

Remote sensing is a technique that uses sensors on satellites, aircraft or other platforms to collect the radiation information of targets, from which specific information can be derived. In recent years, with the rapid development of remote sensing technology, the capacity to acquire remote sensing data has grown steadily. Meanwhile, the spectral, spatial and temporal resolution of remote sensing imagery has kept improving [1], providing a solid data basis for remote sensing applications. Although increasingly good imagery can be acquired, in practice the application of remote sensing imagery still relies heavily on manual processing, and machine interpretation is only an aid to manual work. Traditionally, machine interpretation of remote sensing imagery has been achieved through statistical methods such as maximum likelihood classification and K-means clustering, which are based on image features such as spectrum and texture. In the past few years, methods including artificial neural networks, support vector machines, genetic algorithms and object-oriented methods have developed rapidly and achieved some results [2]. However, all these methods generally require manual extraction of image features or manual design of interpretation rules, which leads to long design cycles and limits the potential for algorithm improvement. In addition, the accuracy and efficiency of automatic interpretation of remote sensing imagery cannot meet the needs of most applications. Because remote sensing applications depend heavily on manual work, their effectiveness is severely restricted by the experience and expertise of the operator [3].

Deep learning is an important area of machine learning research. Compared with traditional machine learning, deep learning is a representation-learning method with multiple layers. Abstraction and extraction of data from lower layers to higher layers are accomplished through simple nonlinear modules. Current deep learning often uses a deep neural network (DNN), a stack of simple nonlinear modules, to construct the layers. Input data is passed between the layers, whose mappings reduce the dimension and extract the key characteristics of the data [4]. Relying on the deep convolutional neural network (DCNN), deep learning provides an end-to-end machine learning model that can extract image features automatically, without hand-designed extraction algorithms. Compared with traditional methods, deep learning is completely data-driven and can find the best way to extract image features through learning [5] [6].

This paper briefly introduces the development of deep learning and analyzes in detail three current application fields in remote sensing: land cover classification, object detection and change detection. It expounds the main deep learning methods and research progress in these three fields, introduces the current state of application of deep learning in remote sensing, and summarizes the current research work and main models. Finally, the application of deep learning is summarized, the existing problems are pointed out, and future development directions of deep learning for remote sensing are discussed.

2. Common Deep Learning Methods in Remote Sensing Application

The deep learning method in remote sensing application is mainly used in three

aspects, namely surface classification, object detection and change detection. A

review of the current research results indicates that the major technical approach

2

International Journal of Geosciences

�

M. Zhu et al.

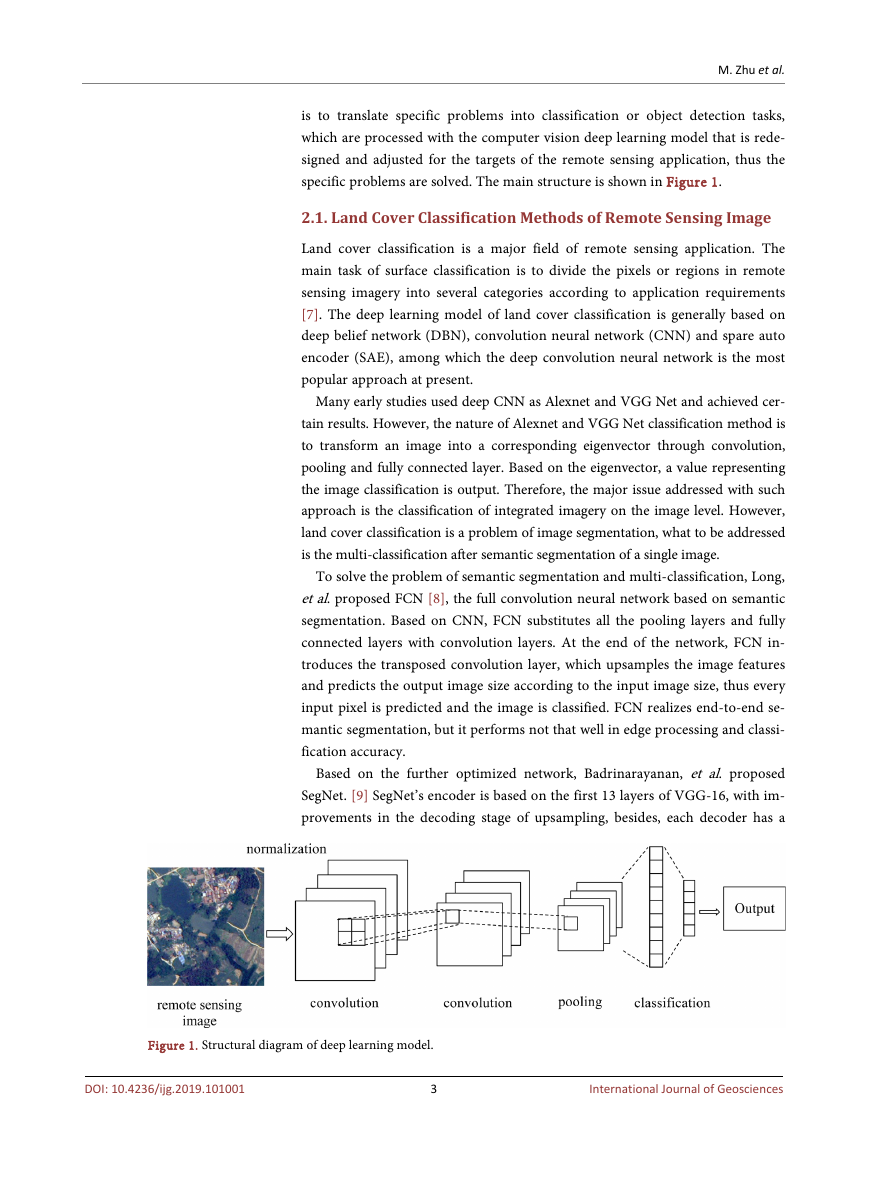

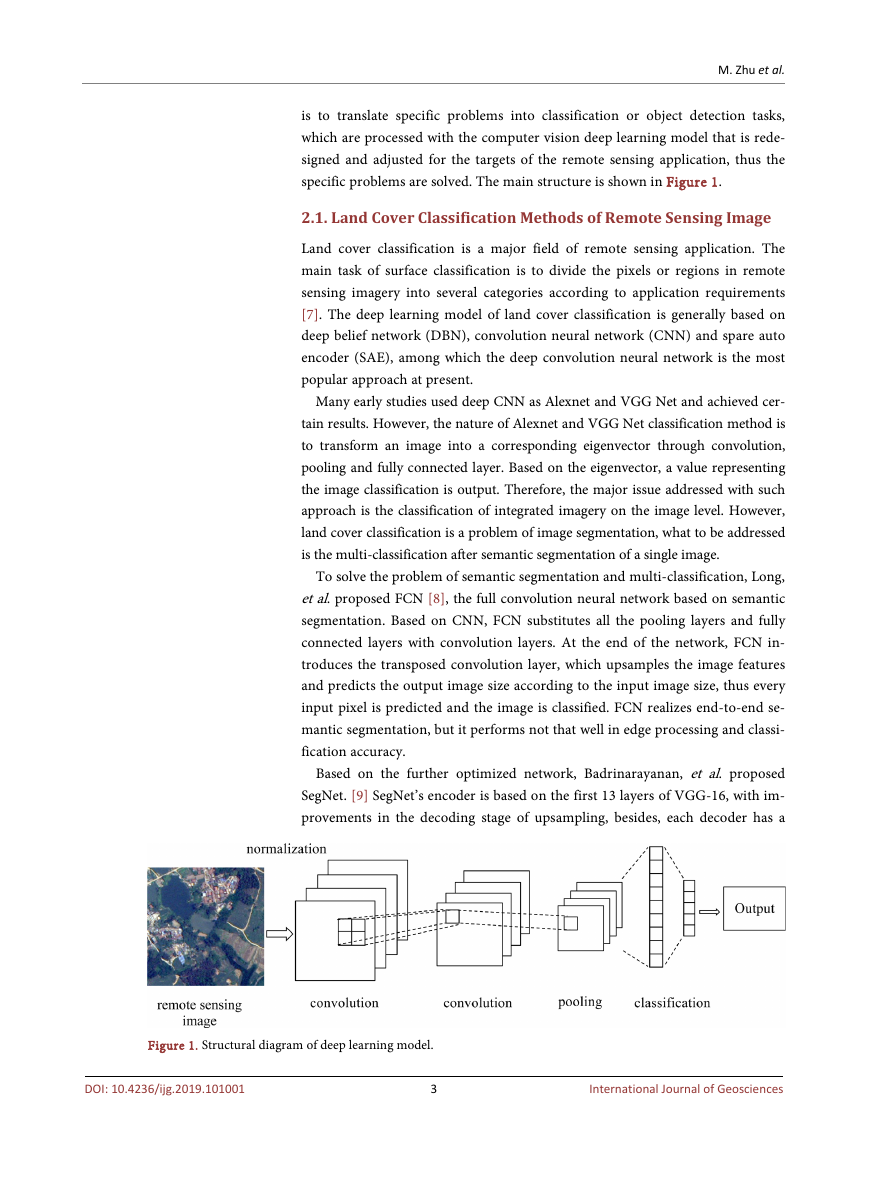

is to translate specific problems into classification or object detection tasks,

which are processed with the computer vision deep learning model that is rede-

signed and adjusted for the targets of the remote sensing application, thus the

specific problems are solved. The main structure is shown in Figure 1.

2.1. Land Cover Classification Methods of Remote Sensing Image

Land cover classification is a major field of remote sensing application. Its main task is to divide the pixels or regions of remote sensing imagery into several categories according to application requirements [7]. Deep learning models for land cover classification are generally based on the deep belief network (DBN), the convolutional neural network (CNN) and the sparse autoencoder (SAE), among which the deep convolutional neural network is currently the most popular.

Many early studies used deep CNNs such as AlexNet and VGGNet and achieved some results. However, AlexNet and VGGNet classify by transforming an image into a corresponding feature vector through convolution, pooling and fully connected layers, and then outputting a value that represents the class of the image based on that vector. Such an approach therefore addresses classification of a whole image at the image level. Land cover classification, by contrast, is an image segmentation problem: what must be solved is multi-class labeling of the regions within a single image, that is, semantic segmentation.

To solve the problem of semantic segmentation and multi-class classification, Long et al. proposed the fully convolutional network (FCN) for semantic segmentation [8]. Starting from a CNN, FCN replaces the fully connected layers with convolution layers. At the end of the network, FCN introduces transposed convolution layers, which upsample the feature maps back to the size of the input image, so that a prediction is produced for every input pixel and the image is classified pixel by pixel. FCN realizes end-to-end semantic segmentation, but its handling of object edges and its classification accuracy are limited.
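The upsampling step performed by a transposed convolution layer can be illustrated with a minimal sketch. The code below is not the FCN implementation from [8]; the function name `transposed_conv2d`, the 2 × 2 kernel of ones and the stride of 2 are assumptions chosen for illustration (in a real network the kernel is learned), and plain Python lists are used so that the example runs without third-party libraries.

```python
def transposed_conv2d(x, kernel, stride=2):
    """Single-channel 2-D transposed convolution without padding."""
    h, w = len(x), len(x[0])
    kh, kw = len(kernel), len(kernel[0])
    out_h = (h - 1) * stride + kh
    out_w = (w - 1) * stride + kw
    out = [[0.0] * out_w for _ in range(out_h)]
    # Each input value adds a scaled copy of the kernel to the output,
    # shifted by `stride` pixels per input step.
    for i in range(h):
        for j in range(w):
            for a in range(kh):
                for b in range(kw):
                    out[i * stride + a][j * stride + b] += x[i][j] * kernel[a][b]
    return out


features = [[1.0, 2.0], [3.0, 4.0]]   # toy 2 x 2 feature map
kernel = [[1.0, 1.0], [1.0, 1.0]]     # hypothetical kernel, learned in practice
upsampled = transposed_conv2d(features, kernel, stride=2)
for row in upsampled:
    print(row)
# [1.0, 1.0, 2.0, 2.0]
# [1.0, 1.0, 2.0, 2.0]
# [3.0, 3.0, 4.0, 4.0]
# [3.0, 3.0, 4.0, 4.0]
```

With a stride of 2 the 2 × 2 feature map becomes a 4 × 4 map, which is how a coarse feature map can be brought back toward the input resolution so that every pixel receives a prediction.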

Figure 1. Structural diagram of deep learning model.

Building on further optimization of the network, Badrinarayanan et al. proposed SegNet [9]. SegNet's encoder is based on the 13 convolutional layers of VGG-16, and the upsampling in the decoding stage is improved. In addition, each decoder has a corresponding encoder, so the same segmentation accuracy can be achieved with fewer trainable parameters and lower memory overhead. To address the loss of resolution caused by subsampling or pooling, and building on the advantages of the above networks, DeepLab [10] adopts atrous convolution to enlarge the receptive field and capture more contextual information. The latest version, DeepLab v3+ [11] [12], comes with an improved atrous convolution scheme. ResNet pre-trained on ImageNet is used as the main network for feature extraction. In the ResNet residual blocks, atrous convolution with different dilation rates is used to capture multi-scale contextual information. To integrate multi-scale information, DeepLab v3+ introduces the encoder-decoder architecture and adopts the Xception model. With these improvements, segmentation accuracy is maintained while the back-end dense CRF is discarded.
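How atrous convolution enlarges the receptive field without adding parameters can be shown with a small one-dimensional sketch. This is an illustration only, not DeepLab code; the function names, the toy signal and the three-tap kernel are assumptions. A kernel with k taps and dilation rate r covers (k - 1) · r + 1 input samples.

```python
def atrous_conv1d(signal, kernel, rate=1):
    """1-D atrous (dilated) convolution, 'valid' mode, kernel not flipped."""
    k = len(kernel)
    span = (k - 1) * rate + 1  # input samples covered by the dilated kernel
    out = []
    for start in range(len(signal) - span + 1):
        # Kernel taps are applied `rate` samples apart.
        out.append(sum(kernel[t] * signal[start + t * rate] for t in range(k)))
    return out


def effective_kernel_size(k, rate):
    """Extent of a k-tap kernel after dilation by `rate`."""
    return (k - 1) * rate + 1


signal = [1, 2, 3, 4, 5, 6, 7]
kernel = [1, 0, -1]  # hypothetical 3-tap kernel

print(atrous_conv1d(signal, kernel, rate=1))  # [-2, -2, -2, -2, -2]
print(atrous_conv1d(signal, kernel, rate=2))  # [-4, -4, -4]
print(effective_kernel_size(3, 2))            # 5
```

The same three weights see three samples at rate 1 and five samples at rate 2, so using several rates in parallel is one way to gather context at multiple scales.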

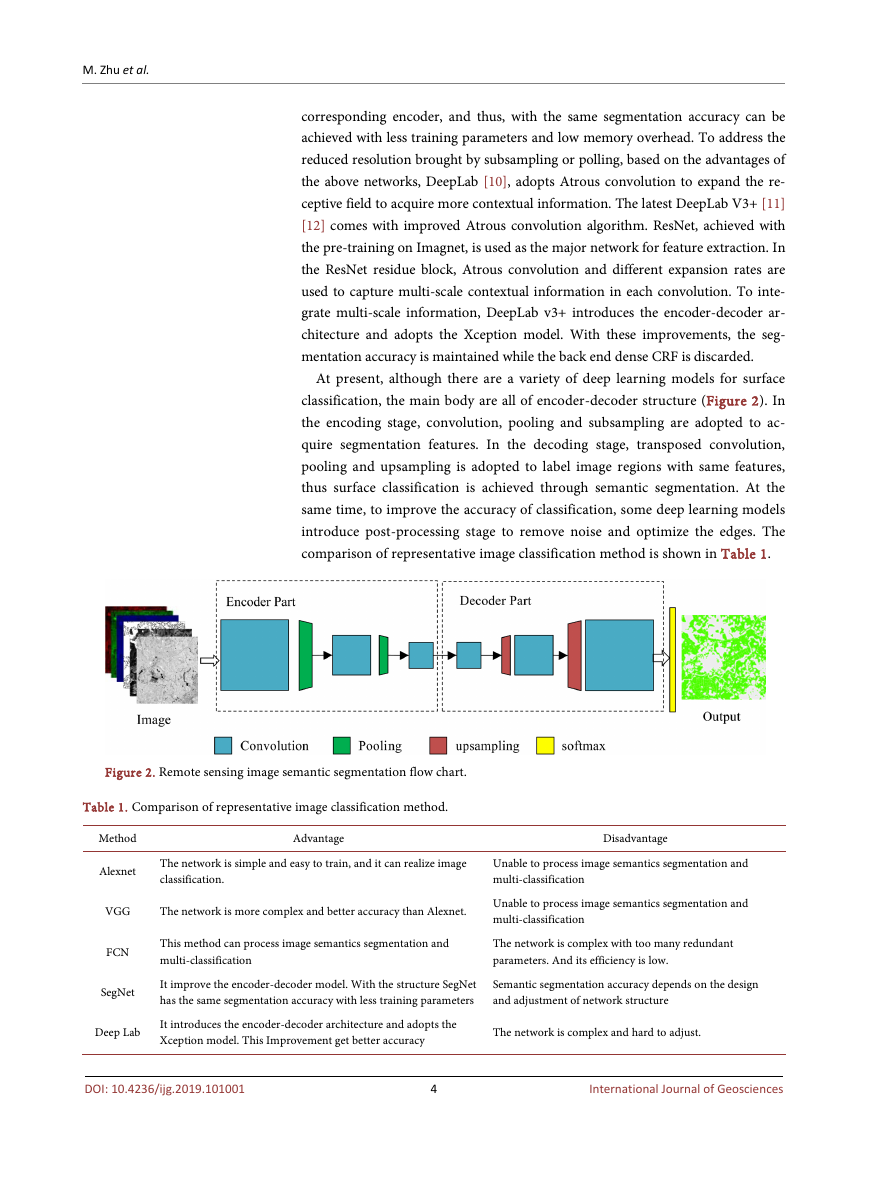

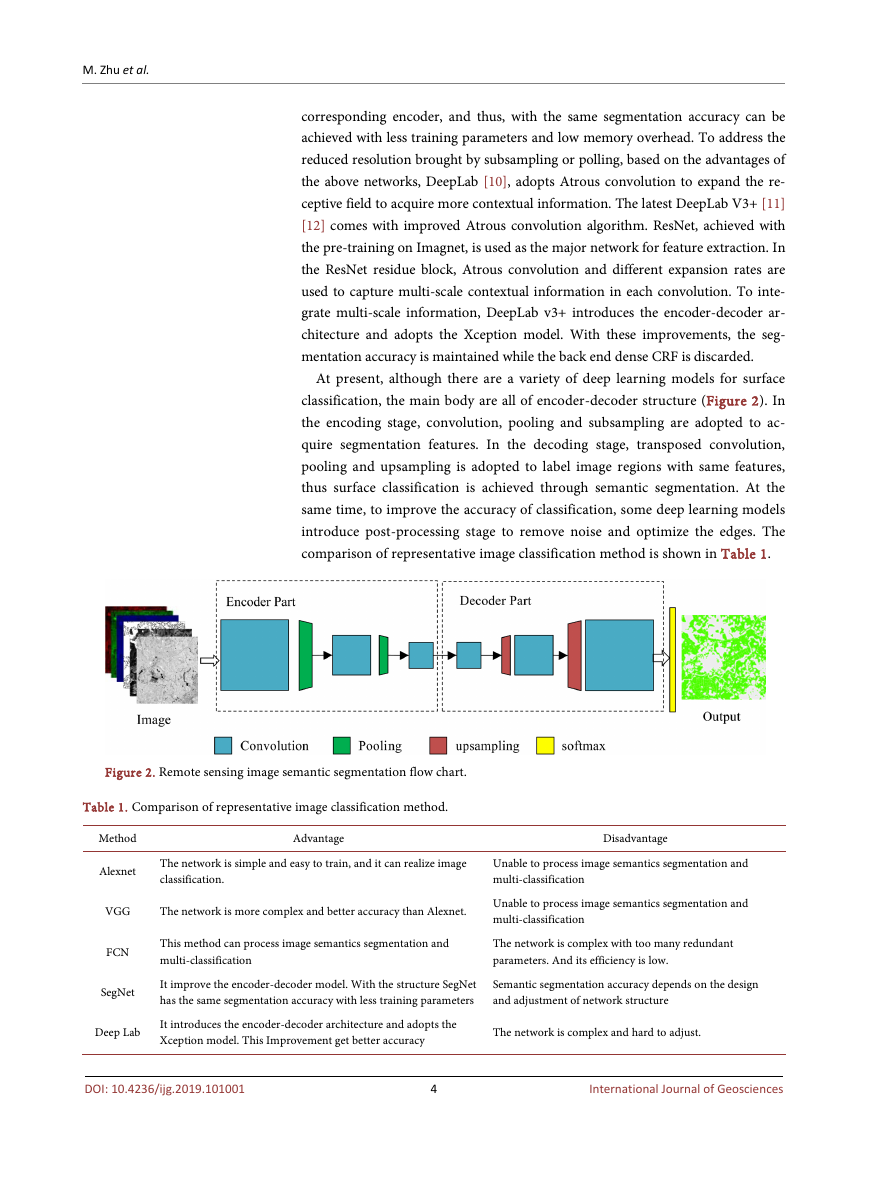

At present, although there are a variety of deep learning models for surface

classification, the main body are all of encoder-decoder structure (Figure 2). In

the encoding stage, convolution, pooling and subsampling are adopted to ac-

quire segmentation features. In the decoding stage, transposed convolution,

pooling and upsampling is adopted to label image regions with same features,

thus surface classification is achieved through semantic segmentation. At the

same time, to improve the accuracy of classification, some deep learning models

introduce post-processing stage to remove noise and optimize the edges. The

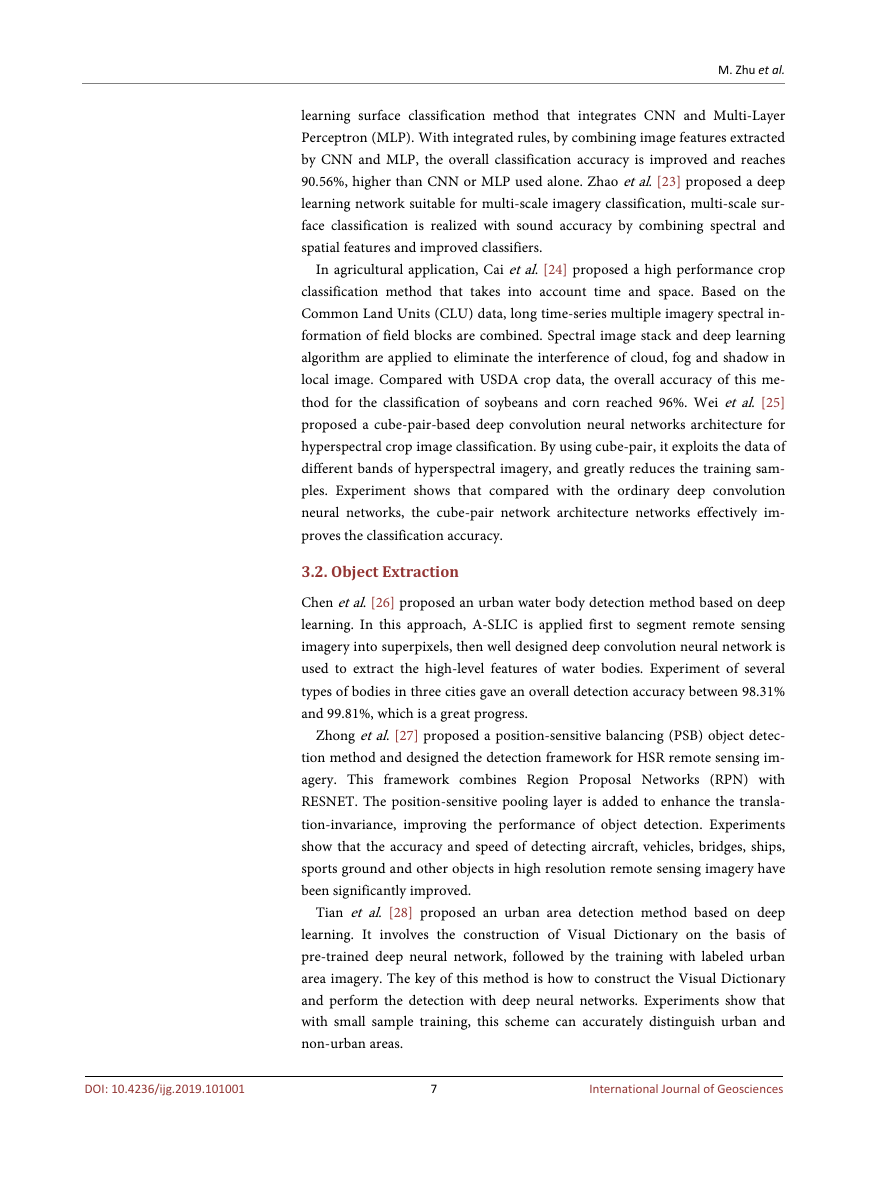

comparison of representative image classification method is shown in Table 1.

Figure 2. Remote sensing image semantic segmentation flow chart.

Table 1. Comparison of representative image classification methods.

| Method | Advantage | Disadvantage |
|---|---|---|
| AlexNet | The network is simple and easy to train, and it can perform image classification. | Unable to perform semantic segmentation and multi-class classification. |
| VGG | The network is more complex and more accurate than AlexNet. | Unable to perform semantic segmentation and multi-class classification. |
| FCN | The method can perform semantic segmentation and multi-class classification. | The network is complex, with too many redundant parameters, and its efficiency is low. |
| SegNet | It improves the encoder-decoder model. With this structure SegNet reaches the same segmentation accuracy with fewer training parameters. | Semantic segmentation accuracy depends on the design and adjustment of the network structure. |
| DeepLab | It introduces the encoder-decoder architecture and adopts the Xception model. This improvement gives better accuracy. | The network is complex and hard to adjust. |


2.2. Object Detection

Object detection is another common application of remote sensing. Deep learning models for object detection are mainly based on region-based convolutional neural networks (R-CNN), one of the earliest deep learning methods proposed for object detection. The main idea is to transform the object detection problem into a classification problem. The image is divided into a large number of candidate regions by the selective search algorithm, a CNN is then applied to obtain the feature vector of each candidate region, and finally a classifier determines the type of each candidate region to complete the detection [13]. R-CNN greatly improved the accuracy of image object detection, but it generates partially overlapping candidate regions for each detection target. These regions are fed into the CNN repeatedly for feature computation, which reduces detection efficiency. To avoid this repeated computation, He et al. proposed Spatial Pyramid Pooling Networks (SPP-Net) [14], which introduce a spatial pyramid pooling layer after the last convolution layer. Repetitive processing is thus eliminated, and images of any size can be processed by the CNN. With these improvements, SPP-Net greatly increased the speed of object detection. Building on SPP-Net, Girshick proposed Fast R-CNN [15], which simplifies the spatial pyramid pooling layer of SPP-Net into the RoI pooling layer for feature extraction. Replacing the SVM with Softmax greatly improves the speed of training and detection. It is more accurate than R-CNN and 213 times faster at test time. To further improve the efficiency of Fast R-CNN in generating candidate regions, Ren et al. proposed Faster R-CNN [16], which introduces the Region Proposal Network (RPN) to generate candidate regions and combines the RPN and Fast R-CNN into one integrated network. With further improved network structures, YOLO [17] and the Single Shot MultiBox Detector (SSD) [18] maintain almost the same detection accuracy with significantly improved detection speed. A comparison of representative image object detection methods is shown in Table 2.

Table 2. Comparison of representative image object detection methods.

| Method | Advantage | Disadvantage |
|---|---|---|
| R-CNN | The network transforms the object detection problem into a classification problem and greatly improves accuracy. | It generates partially overlapping candidate regions for each detection target. |
| SPP-Net | It introduces the spatial pyramid pooling layer after the last convolution layer, so repetitive processing is eliminated. | Training is a multi-stage process with a long training time. |
| Fast R-CNN | Its training and testing are significantly faster than those of SPP-Net. The input image can be of any size. | The network still depends on a candidate region selection algorithm. |
| Faster R-CNN | The network is faster than Fast R-CNN and no longer depends on a region selection algorithm. | The training process is complex, and there is still much room for optimization in the calculation process. |
| SSD | Multi-scale feature maps are adopted and the processing speed is fast. | The robustness of the network for small object detection is not high. |
| YOLO | The network can meet real-time requirements while using the full image as context information. | It is relatively sensitive to the scale of the object, and small target detection is poor. |
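The reason a spatial pyramid pooling layer lets a CNN accept feature maps of any size can be seen in a short sketch. This is a simplified single-channel illustration, not the SPP-Net implementation from [14]; the function name, the pyramid levels (1, 2) and the toy feature maps are assumptions. Each level n splits the map into an n × n grid and takes the maximum of each cell, so the output length depends only on the levels.

```python
import math


def spatial_pyramid_pool(fmap, levels=(1, 2)):
    """Max-pool a 2-D feature map into a fixed-length vector.

    For each pyramid level n, the map is split into an n x n grid of bins
    and the maximum of every bin is kept. The result has sum(n * n) values,
    whatever the height and width of `fmap`.
    """
    h, w = len(fmap), len(fmap[0])
    pooled = []
    for n in levels:
        for i in range(n):
            r0, r1 = math.floor(i * h / n), math.ceil((i + 1) * h / n)
            for j in range(n):
                c0, c1 = math.floor(j * w / n), math.ceil((j + 1) * w / n)
                pooled.append(max(fmap[r][c]
                                  for r in range(r0, r1)
                                  for c in range(c0, c1)))
    return pooled


small = [[1, 2],
         [3, 4]]
large = [[1, 2, 3, 4],
         [5, 6, 7, 8],
         [9, 10, 11, 12]]

print(spatial_pyramid_pool(small))  # [4, 1, 2, 3, 4]
print(spatial_pyramid_pool(large))  # [12, 6, 8, 10, 12]
```

Both inputs yield five values, so a fully connected layer placed after this step always sees an input of the same length. RoI pooling in Fast R-CNN can be viewed as the single-level special case applied to each candidate region.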


2.3. Change Detection

Change detection is the process of detecting changes using remote sensing imagery acquired at different times. Some of these changes are due to natural phenomena such as droughts, floods and landslides; others are due to human activities such as building new roads, excavating the surface or constructing new houses. Compared with land cover classification and object detection, there are fewer deep learning models for image change detection [7]. Current deep-learning-based change detection mainly follows two technical approaches. One is to detect corresponding points in the two images through deep learning and determine whether those points have changed. The other is to translate the change detection problem into a land cover classification problem and obtain the changed regions through semantic segmentation followed by comparison and classification of map patches. Experimental results suggest that the semantic segmentation approach is easier to implement, faster and more accurate.
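The second approach, comparing two classified maps, can be sketched as follows. The sketch assumes that per-pixel class labels for both dates have already been produced by some segmentation model; the function names and the class names are hypothetical and serve only as an illustration.

```python
from collections import Counter


def change_mask(labels_t1, labels_t2):
    """Return 1 where the class label differs between the two dates, else 0."""
    return [[int(a != b) for a, b in zip(row1, row2)]
            for row1, row2 in zip(labels_t1, labels_t2)]


def change_transitions(labels_t1, labels_t2):
    """Count (from_class, to_class) pairs over the changed pixels."""
    counts = Counter()
    for row1, row2 in zip(labels_t1, labels_t2):
        for a, b in zip(row1, row2):
            if a != b:
                counts[(a, b)] += 1
    return counts


# Hypothetical classification results for the same area at two dates.
t1 = [["forest", "forest", "water"],
      ["forest", "farmland", "water"]]
t2 = [["forest", "built-up", "water"],
      ["built-up", "farmland", "water"]]

print(change_mask(t1, t2))          # [[0, 1, 0], [1, 0, 0]]
print(change_transitions(t1, t2))   # Counter({('forest', 'built-up'): 2})
```

Besides locating the changed pixels, the comparison also gives the type of change, which is one reason this route is convenient once a reliable segmentation model is available.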

3. Progress in Researches on Deep Learning in Remote Sensing Application

With constant optimization of deep learning models for remote sensing, deep learning is gradually being applied to land cover classification, object detection and change detection of remote sensing imagery. The results of various applications show that, compared with traditional methods, new breakthroughs have been made in accuracy and efficiency.

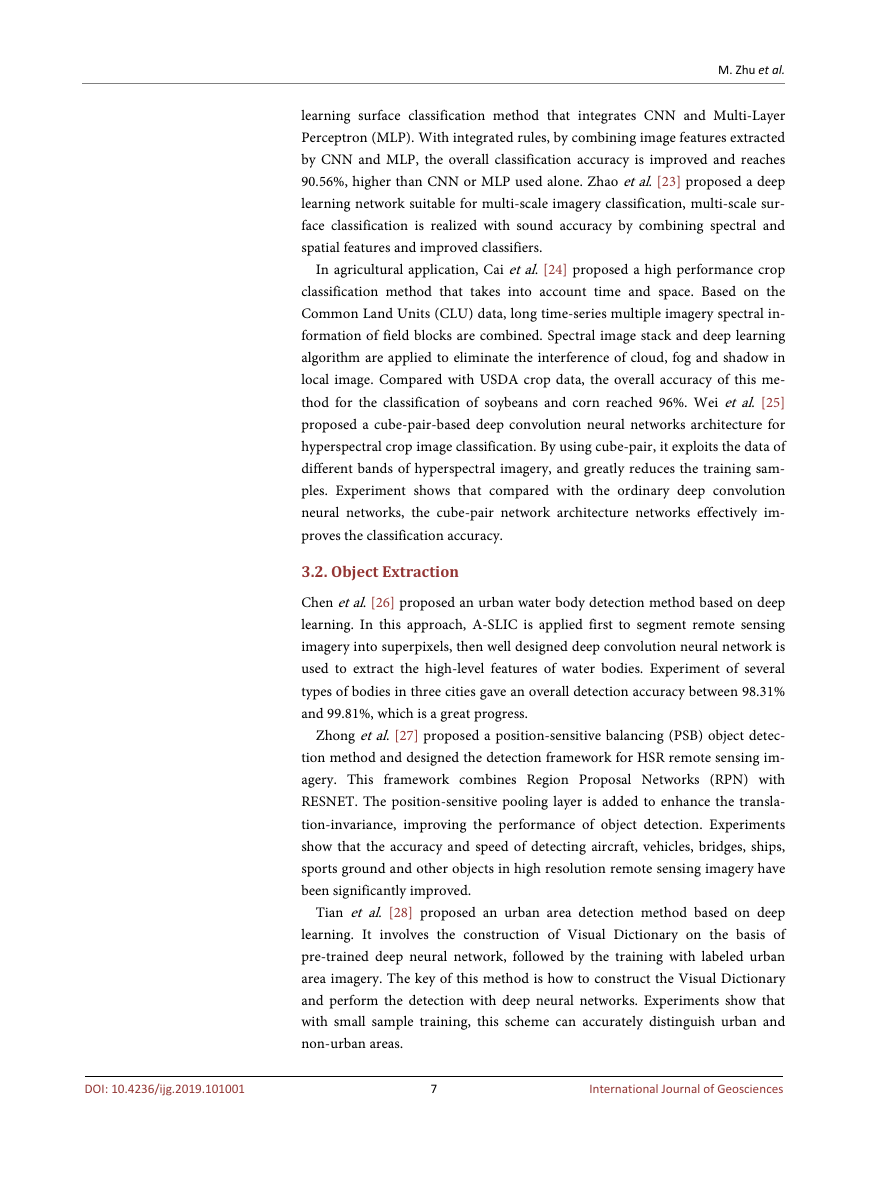

3.1. Imagery Based Land Cover Classification

Fu et al. [19] extended the FCN for land cover classification of remote sensing images. A skip-layer structure is added to enable the FCN to perform multi-resolution image classification, atrous convolution is introduced to increase the density of the output features, and a CRF is applied at the detection stage to refine the output classes, which improves the accuracy of high-resolution image classification. To address two problems in vegetation classification, namely the small differences between object features and the loss of features in the encoding stage of FCN, Zhang et al. [20] added a feature extraction layer whose convolution kernels contain the features of the vegetation to be extracted, together with an encoding layer that adopts a non-linear activation function; as a result, the accuracy of vegetation classification is improved. Sharma et al. [21] proposed a deep learning land cover classification method for medium-resolution imagery. Taking Landsat 8 images as the research object, the method changes the CNN input from a single pixel to a 5 × 5 pixel image patch. The patch contains not only the band information of the image but also the spatial relationship of adjacent pixels. The experimental data show that, compared with the pixel-based CNN, the patch-based deep learning method increased the overall classification accuracy for farmland, wetland, forest, water bodies and other features by 24.23%. Zhang et al. [22] proposed a deep learning land cover classification method for high-resolution imagery that integrates a CNN and a Multi-Layer Perceptron (MLP). By using integration rules to combine the image features extracted by the CNN and the MLP, the overall classification accuracy reaches 90.56%, higher than that of the CNN or the MLP used alone. Zhao et al. [23] proposed a deep learning network suitable for multi-scale imagery classification, which achieves multi-scale land cover classification with sound accuracy by combining spectral and spatial features with improved classifiers.
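The patch-based input described for Sharma et al. [21] can be illustrated with a minimal sketch of how 5 × 5 windows might be cut from a multi-band image. This is not the authors' code; the function names, the two synthetic bands and the choice to skip border pixels (rather than pad them) are assumptions made for the example.

```python
def extract_patch(band, row, col, size=5):
    """Return the size x size window of `band` centred on (row, col)."""
    half = size // 2
    rows, cols = len(band), len(band[0])
    if not (half <= row < rows - half and half <= col < cols - half):
        raise ValueError("patch would extend beyond the image border")
    return [r[col - half:col + half + 1]
            for r in band[row - half:row + half + 1]]


def iter_patches(bands, size=5):
    """Yield (row, col, patch_stack) for every pixel whose window fits.

    `patch_stack` holds one size x size patch per band, so it carries both
    the band values and the spatial neighbourhood of the centre pixel.
    """
    half = size // 2
    rows, cols = len(bands[0]), len(bands[0][0])
    for row in range(half, rows - half):
        for col in range(half, cols - half):
            yield row, col, [extract_patch(b, row, col, size) for b in bands]


# Two synthetic 6 x 7 bands standing in for real image bands (hypothetical).
band_a = [[r * 10 + c for c in range(7)] for r in range(6)]
band_b = [[v + 100 for v in row] for row in band_a]

patches = list(iter_patches([band_a, band_b]))
print(len(patches))            # 6
row, col, stack = patches[0]
print(row, col)                # 2 2
print(stack[0][2][2])          # 22
```

Each yielded stack would be one training or inference sample for a patch-based CNN, labelled with the class of its centre pixel.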

In agricultural applications, Cai et al. [24] proposed a high-performance crop classification method that takes both time and space into account. Based on Common Land Unit (CLU) data, the spectral information of field blocks from long time series of imagery is combined. A spectral image stack and a deep learning algorithm are applied to eliminate the interference of cloud, fog and shadow in local images. Compared against USDA crop data, the overall accuracy of this method for classifying soybeans and corn reached 96%. Wei et al. [25] proposed a cube-pair-based deep convolutional neural network architecture for hyperspectral crop image classification. By using cube pairs, it exploits the data of different bands of hyperspectral imagery and greatly reduces the number of training samples required. Experiments show that, compared with an ordinary deep convolutional neural network, the cube-pair network architecture effectively improves classification accuracy.

3.2. Object Extraction

Chen et al. [26] proposed an urban water body detection method based on deep learning. In this approach, A-SLIC is first applied to segment the remote sensing imagery into superpixels, and a purpose-designed deep convolutional neural network is then used to extract the high-level features of water bodies. Experiments on several types of water bodies in three cities gave overall detection accuracies between 98.31% and 99.81%, a considerable improvement.

Zhong et al. [27] proposed a position-sensitive balancing (PSB) object detection method and designed a detection framework for high spatial resolution (HSR) remote sensing imagery. This framework combines a Region Proposal Network (RPN) with ResNet. A position-sensitive pooling layer is added to enhance translation invariance, improving the performance of object detection. Experiments show that both the accuracy and the speed of detecting aircraft, vehicles, bridges, ships, sports grounds and other objects in high-resolution remote sensing imagery are significantly improved.

Tian et al. [28] proposed an urban area detection method based on deep learning. It constructs a visual dictionary on the basis of a pre-trained deep neural network and then trains with labeled urban area imagery. The key to this method is how to construct the visual dictionary and perform detection with deep neural networks. Experiments show that, with only a small training sample, this scheme can accurately distinguish urban from non-urban areas.


3.3. Change Detection

To obtain the spectral and texture changes of corresponding points between images, Zhang Xinlong et al. applied a modified change vector analysis algorithm and the grey level co-occurrence matrix, both of which take spatial-contextual information into account. By setting adaptive sampling intervals, samples of the most likely changed and unchanged areas are extracted. A Gaussian-Bernoulli Deep Boltzmann Machine model containing a label layer is then constructed and trained to extract the deep features of changed and unchanged areas, so that changed areas are identified effectively [29].
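The idea behind change vector analysis and the selection of confident samples can be sketched briefly. Note that this is the basic form of change vector analysis, not the modified, spatial-contextual version used in [29], and the fixed thresholds `low` and `high` are hypothetical stand-ins for the adaptive sampling intervals described there. For a pixel with band values x(t1) and x(t2), the change magnitude is the Euclidean norm of x(t2) - x(t1).

```python
import math


def change_magnitude(pixel_t1, pixel_t2):
    """Euclidean length of the spectral change vector of one pixel."""
    return math.sqrt(sum((b - a) ** 2 for a, b in zip(pixel_t1, pixel_t2)))


def select_samples(magnitudes, low, high):
    """Label only confident pixels.

    0 = most likely unchanged (magnitude <= low)
    1 = most likely changed   (magnitude >= high)
    None = uncertain, left for the trained model to decide
    """
    return [0 if m <= low else 1 if m >= high else None for m in magnitudes]


# Three pixels with three bands each, at two acquisition dates (toy values).
pixels_t1 = [(10, 20, 30), (10, 20, 30), (10, 20, 30)]
pixels_t2 = [(10, 20, 30), (13, 24, 30), (40, 60, 30)]

magnitudes = [change_magnitude(a, b) for a, b in zip(pixels_t1, pixels_t2)]
print(magnitudes)                                   # [0.0, 5.0, 50.0]
print(select_samples(magnitudes, low=1, high=20))   # [0, None, 1]
```

Only the pixels at the two extremes are used as training samples, which keeps label noise low; the uncertain middle range is what the trained deep model is then expected to resolve.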

Khan et al. proposed a forest change detection method that transforms the change detection task into a region classification problem. Change features are extracted through a deep neural network. Based on these features, a multiresolution profile (MRP) of the target area is built and a candidate set of bounding boxes is generated to detect potentially changed areas. The detection accuracy of the improved model reached 91.6%, which is 16% higher than that of traditional methods. The model generalizes well and can be widely used for change detection tasks in various regions [30].

3.4. Discussion

Although great progress has been made in the application of deep learning methods in remote sensing, the following shortcomings remain:

1) Lack of strict mathematical interpretation. Deep learning is essentially a process of fitting input data to output results, and there is no strict mathematical basis for the design and improvement of the networks.

2) High requirements for training samples. To achieve good results in application, the requirements for the quantity and quality of training samples are very high. Although some scholars have made progress in small-sample training, practical application in specific areas still requires a large number of training samples to reach higher accuracy.

3) Poor comprehensibility of network features. Features extracted by the network lose practical meaning once they are passed to the deep layers. Although visualization tools are available, the specific meaning of the automatically extracted features cannot be designed in advance. The construction, adjustment and improvement of deep networks still rely on the experience of developers.

4) Few engineering applications. Most research focuses on network architecture and algorithm verification, and there is little research on cloud computing architecture or on data storage and retrieval mechanisms for engineering applications. Few engineering projects have been completed and put into practical use.

5) Image recognition based on deep learning relies only on sample training, and an image is mapped to specific results through complex computations. In this process, however, deep learning does not really understand the specific meaning of the mapping, so it cannot use prior knowledge for image recognition and judgment.
